Advanced Error Mitigation in Qiskit Runtime

Use Qiskit Runtime's built-in error mitigation options: resilience levels, zero-noise extrapolation, and Probabilistic Error Cancellation in the Estimator primitive.

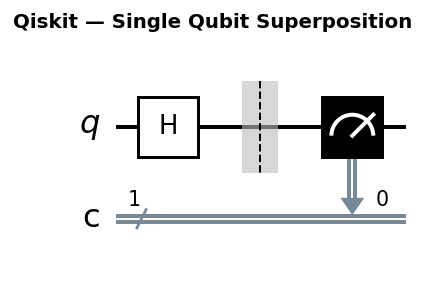

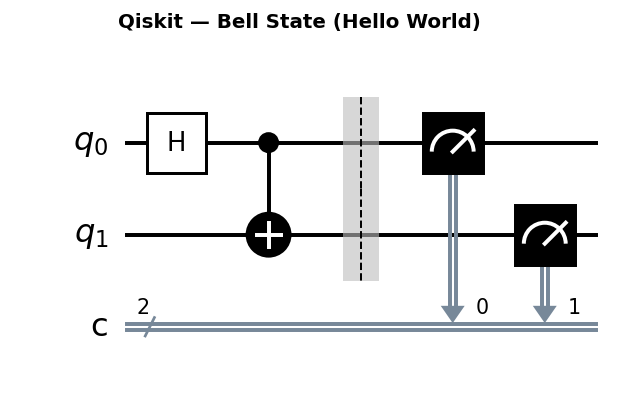

Circuit diagrams

The Problem with Raw Hardware Output

Running quantum circuits on NISQ hardware produces results corrupted by gate errors, readout errors, and decoherence. For algorithms that need accurate expectation values (VQE, QAOA, Hamiltonian simulation), those errors accumulate and distort the final result. Qiskit Runtime’s Estimator primitive addresses this through the resilience_level option, which activates progressively more powerful error mitigation strategies without requiring you to manually instrument your circuits.

This tutorial covers all four resilience levels, how to configure them, and the tradeoffs involved.

Physics of Quantum Noise

Before exploring mitigation strategies, it helps to understand the physical processes that corrupt quantum computation. Three broad categories of error dominate current superconducting hardware.

Gate Errors

Every quantum gate is implemented by applying a calibrated microwave pulse (for single-qubit gates) or a tuned interaction (for two-qubit gates such as echoed cross-resonance on IBM hardware). Imperfections in these control signals produce gate errors in several ways:

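- Over-rotation and under-rotation: The pulse duration or amplitude deviates slightly from the ideal value, so an intended Rx(pi/2) rotation actually applies Rx(pi/2 + epsilon). (Rz rotations on IBM hardware are implemented as virtual frame changes rather than physical pulses, so they do not suffer from this effect.) This is a coherent error because it shifts the state deterministically in the same direction every time.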

- Cross-talk: Driving one qubit with a microwave pulse also weakly couples to neighboring qubits. The stray field induces unintended ZZ interactions or small rotations on spectator qubits. Cross-talk worsens as qubit density increases on the chip.

- Calibration drift: Gate parameters are calibrated periodically, but the optimal pulse shape drifts between calibration cycles due to two-level-system defects and temperature fluctuations in the dilution refrigerator.

On current IBM superconducting processors, single-qubit gate errors are typically 0.1 to 0.5% per gate (measured by randomized benchmarking). Two-qubit gate errors are 0.5 to 2% per gate, with the CNOT or ECR gate being the dominant error source in most circuits.

Decoherence Errors

Even when no gates are applied, a qubit loses information over time through two distinct relaxation processes:

- T1 (energy relaxation): The qubit spontaneously decays from the excited state |1> to the ground state |0> by emitting a photon into the environment. T1 sets the timescale for amplitude damping. On current hardware, T1 values range from 100 to 300 microseconds. A circuit whose total execution time approaches T1 will see significant population decay toward |0>.

- T2 (dephasing): The relative phase between |0> and |1> components of a superposition randomizes due to fluctuations in the qubit’s transition frequency. Magnetic flux noise, charge noise, and photon number fluctuations in the readout resonator all contribute. T2 is always less than or equal to 2*T1. Typical T2 values are 50 to 200 microseconds.

Decoherence errors are incoherent: they introduce genuine randomness that cannot be undone by adjusting gate parameters. A circuit with total execution time t experiences decoherence roughly proportional to t/T1 and t/T2.
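As a rough illustration of the t/T1 and t/T2 scaling, the sketch below evaluates the decay factors exp(-t/T1) and exp(-t/T2) for a hypothetical circuit. The function name and the example numbers (a 20 microsecond circuit, T1 = 200 microseconds, T2 = 100 microseconds) are assumptions chosen for illustration, and the simple exponential model ignores gate and readout errors entirely.

```python
import math

def coherence_factors(duration_us, t1_us, t2_us):
    """Return (exp(-t/T1), exp(-t/T2)) for a circuit of the given duration."""
    return math.exp(-duration_us / t1_us), math.exp(-duration_us / t2_us)

# Illustrative numbers only, not measurements from a real backend.
population, coherence = coherence_factors(duration_us=20.0, t1_us=200.0, t2_us=100.0)

print(f"excited-state population retained: {population:.3f}")
print(f"phase coherence retained:          {coherence:.3f}")
```

For small t the factors are close to 1 - t/T1 and 1 - t/T2, which is the "roughly proportional" regime described above.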

Readout Errors

Measuring a superconducting qubit involves probing a coupled resonator with a microwave pulse and classifying the reflected signal as “0” or “1” based on its amplitude and phase. Several imperfections corrupt this classification:

- State discrimination errors: The probability distributions for the |0> and |1> resonator responses overlap. The classifier misassigns some fraction of measurements to the wrong state.

- T1 decay during readout: The measurement pulse takes 300 to 1000 nanoseconds. During this window, a qubit in |1> can relax to |0>, causing a 1-to-0 misread. This asymmetry means P(read 0 | true state 1) is typically larger than P(read 1 | true state 0).

- Readout cross-talk: Measuring one qubit’s resonator can shift the frequency of neighboring resonators, inducing correlated readout errors across qubit pairs.

Readout error rates on current hardware are 1 to 3% per qubit. Because readout errors affect every circuit execution equally regardless of circuit depth, they dominate the error budget for short circuits.

Resilience Levels Overview

The resilience_level parameter on EstimatorOptions selects the mitigation stack:

| Level | Method | Overhead |
|---|---|---|
| 0 | None (raw output) | Baseline |
| 1 | T-REx (readout mitigation) | Low |
| 2 | ZNE (zero-noise extrapolation) | Medium |
| 3 | PEC (Probabilistic Error Cancellation) | High |

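Each higher level requires more shots and more circuit executions. The right choice depends on which noise source dominates your hardware and how much runtime you can afford. Note that EstimatorV2 itself accepts resilience_level values 0 to 2 only; PEC, which this tutorial refers to as level 3, is switched on through options.resilience.pec_mitigation rather than through a fourth level value.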

Level 1: T-REx Readout Error Mitigation

T-REx stands for Twirled Readout Error eXtinction. It targets the most common and easiest-to-correct error: measurement errors, where a qubit in state |0> is read as 1, or vice versa.

The Readout Error Matrix

T-REx models readout errors through a matrix M, where entry M_ij represents P(read bitstring i | true state is bitstring j). For a single qubit, M is a 2x2 matrix:

M = [[P(0|0), P(0|1)],
     [P(1|0), P(1|1)]]

For example, a qubit with a readout error of 2 to 3% might have:

M = [[0.98, 0.03],
     [0.02, 0.97]]

Note the asymmetry: P(0|1) = 0.03 is larger than P(1|0) = 0.02, reflecting T1 decay during readout.

For a 2-qubit system, the readout error matrix is 4x4, mapping the four computational basis states {00, 01, 10, 11} to the four possible measurement outcomes. T-REx builds this matrix by preparing each computational basis state, measuring it many times, and recording the frequency of each outcome. The columns of M are the empirical probability distributions obtained from these calibration measurements.

The Twirling Step

Raw readout errors can be coherent: systematic biases that shift probabilities in a correlated, structured way. Coherent readout errors are harder to model because they do not factor neatly across qubits. T-REx addresses this by applying randomized Pauli X gates before measurement. On each shot, each qubit independently receives either an identity or an X gate (chosen uniformly at random). The classical outcome bit is then flipped to compensate if X was applied.

This Pauli twirling converts any coherent readout error into a stochastic (diagonal) error channel. The resulting error matrix has a simpler structure: it factors approximately into a tensor product of single-qubit readout error matrices, which is critical for scalability.
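The effect of the random X flips on a single qubit can be checked by hand. The sketch below is a simplified single-qubit model (not the Runtime implementation): it averages a readout matrix over the two twirling frames and shows that the asymmetric misread probabilities become equal. The function name and the matrix values are illustrative assumptions.

```python
def twirl_readout_matrix(m):
    """Average a 2x2 readout matrix over the identity and X-flip frames."""
    # Applying X before measurement and flipping the recorded bit maps
    # M[i][j] = P(read i | true j) to M[1 - i][1 - j].
    flipped = [[m[1 - i][1 - j] for j in range(2)] for i in range(2)]
    return [[(m[i][j] + flipped[i][j]) / 2 for j in range(2)] for i in range(2)]

# Asymmetric readout matrix: P(0|1) = 0.03 differs from P(1|0) = 0.02.
M = [[0.98, 0.03],
     [0.02, 0.97]]

M_twirled = twirl_readout_matrix(M)

# With equal misread probabilities, a measured <Z> is the ideal <Z>
# multiplied by a single attenuation factor.
z_attenuation = M_twirled[0][0] - M_twirled[1][0]

print(M_twirled)
print(z_attenuation)
```

Because the twirled error is symmetric, a measured Z expectation value is just the ideal value scaled by one number (about 0.95 here), which can later be divided out.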

Matrix Inversion and Correction

Given the calibrated matrix M and a vector of raw measurement probabilities p_raw, the corrected probabilities are:

p_corrected = M^{-1} * p_raw

For expectation values, this correction adjusts the estimated value of each Pauli observable by dividing out the readout noise contribution.
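A minimal single-qubit sketch of the inversion step follows. The "true" distribution is a made-up example used to generate synthetic raw data; on hardware only p_raw and the calibrated M would be available. The helper names are assumptions for illustration.

```python
def invert_2x2(m):
    """Inverse of a 2x2 matrix given as nested lists."""
    (a, b), (c, d) = m
    det = a * d - b * c
    return [[d / det, -b / det], [-c / det, a / det]]

def apply_matrix(m, v):
    """Matrix-vector product for a 2x2 matrix and a length-2 vector."""
    return [m[0][0] * v[0] + m[0][1] * v[1],
            m[1][0] * v[0] + m[1][1] * v[1]]

M = [[0.98, 0.03],
     [0.02, 0.97]]

p_true = [0.7, 0.3]               # hypothetical ideal distribution
p_raw = apply_matrix(M, p_true)   # what a noisy readout would report
p_corrected = apply_matrix(invert_2x2(M), p_raw)

print("raw:      ", p_raw)
print("corrected:", p_corrected)
```

With finite shots, p_raw carries statistical noise, so the corrected vector can contain small negative entries; that is why corrected distributions are often called quasi-probabilities.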

Scaling to Many Qubits

For n qubits, the full readout error matrix is 2^n by 2^n, which becomes intractable to store and invert for more than about 15 qubits. T-REx sidesteps this by exploiting the tensor product structure induced by twirling. After twirling, the n-qubit readout error approximately factors as:

M_total ≈ M_1 ⊗ M_2 ⊗ ... ⊗ M_n

Each M_i is a 2x2 matrix, so only 2n parameters need calibration. The inverse also factors: M_total^{-1} = M_1^{-1} ⊗ … ⊗ M_n^{-1}. This makes T-REx efficient even for 100+ qubit circuits.
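The factorization of the inverse can be verified numerically for two qubits. In this sketch the second qubit's matrix is an invented example, and the helper functions are written out in plain Python so the block has no dependencies.

```python
def invert_2x2(m):
    """Inverse of a 2x2 matrix given as nested lists."""
    (a, b), (c, d) = m
    det = a * d - b * c
    return [[d / det, -b / det], [-c / det, a / det]]

def kron(a, b):
    """Kronecker (tensor) product of two matrices given as nested lists."""
    return [[x * y for x in row_a for y in row_b] for row_a in a for row_b in b]

def matmul(a, b):
    """Matrix product of two nested-list matrices."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]

M1 = [[0.98, 0.03], [0.02, 0.97]]
M2 = [[0.99, 0.04], [0.01, 0.96]]   # hypothetical second qubit

M_total = kron(M1, M2)                               # 4x4 readout matrix
M_total_inv = kron(invert_2x2(M1), invert_2x2(M2))   # built from 2x2 inverses only

# Multiplying the two should give the 4x4 identity.
product = matmul(M_total_inv, M_total)
for row in product:
    print([round(x, 12) for x in row])
```

Only the 2x2 inverses are ever computed, so the cost of calibration and inversion grows linearly with the number of qubits instead of exponentially.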

Code

from qiskit_ibm_runtime import EstimatorV2, EstimatorOptions

options = EstimatorOptions()

options.resilience_level = 1

estimator = EstimatorV2(backend=backend, options=options)

job = estimator.run([(isa_circuit, isa_obs, param_values)])

result = job.result()

print(result[0].data.evs)

Use level 1 when your circuit is short and gate errors are small relative to readout errors. This is common on modern superconducting hardware where T1/T2 times are long but readout fidelity is only 97 to 99% (the 1 to 3% readout error quoted earlier).

Level 2: Zero-Noise Extrapolation

Zero-noise extrapolation (ZNE) does not reduce noise directly. Instead it amplifies the noise by controlled amounts, measures expectation values at each noise level, and extrapolates the trend back to the zero-noise limit.

Noise Amplification Mechanisms

Qiskit Runtime supports several methods for amplifying noise in a controlled way. Each method increases the effective noise level by a known factor while preserving the ideal unitary operation.

Global Gate Folding

Global gate folding replaces the whole circuit unitary U with the sequence U * U_dagger * U, so every gate G is executed three times (twice as G and once as G_dagger). Since U_dagger * U = I (the identity), the ideal unitary is unchanged: the circuit still computes the same operation. However, each physical gate application adds noise, so the folded circuit experiences approximately three times the original noise.

For a circuit with depth d:

- Noise factor 1: original circuit, depth d

- Noise factor 3: circuit folded once (U * U_dag * U), depth 3d

- Noise factor 5: circuit folded twice (U * U_dag * U * U_dag * U), depth 5d

This is the default amplification method in Qiskit Runtime.
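The claim that folding leaves the ideal operation unchanged can be checked on a small unitary. The sketch below folds a single real rotation matrix; it stands in for either one gate or a whole circuit unitary, and the function names are illustrative assumptions, not a Qiskit API.

```python
import math

def ry(theta):
    """Real 2x2 matrix of a single-qubit Ry(theta) rotation."""
    c, s = math.cos(theta / 2), math.sin(theta / 2)
    return [[c, -s], [s, c]]

def matmul(a, b):
    return [[sum(a[i][k] * b[k][j] for k in range(2)) for j in range(2)]
            for i in range(2)]

def transpose(m):
    # For a real unitary matrix the transpose is the inverse (the dagger).
    return [[m[j][i] for j in range(2)] for i in range(2)]

def fold(unitary, num_folds):
    """Return the matrix of U (U_dagger U)^num_folds and the number of unitaries applied."""
    folded = unitary
    for _ in range(num_folds):
        folded = matmul(matmul(folded, transpose(unitary)), unitary)
    return folded, 2 * num_folds + 1

G = ry(0.7)
folded_once, noise_factor_3 = fold(G, 1)
folded_twice, noise_factor_5 = fold(G, 2)

max_deviation = max(abs(folded_twice[i][j] - G[i][j])
                    for i in range(2) for j in range(2))
print(noise_factor_3, noise_factor_5, max_deviation)
```

The folded matrices match the original up to floating-point rounding, while the count of physical applications (3 and 5) is what sets the noise factor.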

Local Gate Folding

Local gate folding applies the folding operation only to selected gates rather than the entire circuit. Typically, you fold only the two-qubit gates (CNOT, ECR) because they have 5 to 10 times higher error rates than single-qubit gates. This approach amplifies the dominant noise source more precisely while keeping single-qubit gates at their original noise level.

Local folding produces more accurate extrapolations when the noise is dominated by a small number of high-error gates. It also produces shorter folded circuits, reducing the risk of pushing the total circuit duration past the decoherence limit.

Pulse Stretching

Pulse stretching slows down the microwave control pulses that implement each gate. A pulse stretched by factor k takes k times longer, increasing the qubit’s exposure to T1 and T2 decoherence proportionally. Unlike gate folding, pulse stretching amplifies decoherence noise specifically rather than all noise sources equally.

Pulse stretching requires pulse-level access to the backend (via Qiskit Pulse). It is not available through the standard circuit-level interface in Qiskit Runtime and is primarily used in research settings where fine-grained noise control is needed.

Code

from qiskit_ibm_runtime import EstimatorV2, EstimatorOptions

options = EstimatorOptions()

options.resilience_level = 2

estimator = EstimatorV2(backend=backend, options=options)

Custom ZNE Configuration

For more control over the extrapolation, configure ZneOptions directly:

options = EstimatorOptions()

options.resilience_level = 2

options.resilience.zne.noise_factors = [1, 3, 5] # gate folding factors

options.resilience.zne.extrapolator = "exponential" # or "linear", "double_exponential"

estimator = EstimatorV2(backend=backend, options=options)

Noise factors [1, 3, 5] mean the circuit runs at 1x, 3x, and 5x its normal noise level. More points give a better fit but cost proportionally more circuit executions and shots. Exponential extrapolation assumes the expectation value decays exponentially as the noise factor grows, which is physically motivated for depolarizing channels.

Extrapolation Methods Comparison

Qiskit Runtime supports four extrapolation methods for fitting the noise-amplified data points and extrapolating to zero noise. The choice of extrapolator significantly affects the accuracy of the mitigated result.

Linear Extrapolation

The linear model fits E(lambda) = a + b * lambda, where lambda is the noise scale factor and E is the expectation value. This assumes that the expectation value degrades linearly with noise.

options.resilience.zne.noise_factors = [1, 3]

options.resilience.zne.extrapolator = "linear"

Linear extrapolation works well when noise is weak (the circuit is short), and it needs only two noise factors. For highly noisy circuits it tends to underestimate the magnitude of the zero-noise value, because the true decay is roughly exponential (convex) and a straight line through the noisy points cannot follow that curvature.
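To see how the linear model behaves in the weak-noise and strong-noise regimes, the sketch below applies a two-point linear extrapolation to synthetic data that decays exponentially from an ideal value of 1.0. The decay rates and function names are assumptions chosen for illustration; this is not how Qiskit Runtime performs the fit internally.

```python
import math

def linear_zero_noise(noise_factors, values):
    """Two-point linear extrapolation to noise factor 0."""
    x1, x2 = noise_factors
    y1, y2 = values
    slope = (y2 - y1) / (x2 - x1)
    return y1 - slope * x1

def noisy_value(noise_factor, decay_rate):
    """Synthetic expectation value: ideal value 1.0 with exponential decay."""
    return math.exp(-decay_rate * noise_factor)

factors = (1, 3)
weak = linear_zero_noise(factors, [noisy_value(f, 0.02) for f in factors])
strong = linear_zero_noise(factors, [noisy_value(f, 0.30) for f in factors])

print(f"weak noise:   unmitigated {noisy_value(1, 0.02):.4f}, extrapolated {weak:.4f}")
print(f"strong noise: unmitigated {noisy_value(1, 0.30):.4f}, extrapolated {strong:.4f}")
```

In the weak-noise case the extrapolated value lands very close to 1.0. In the strong-noise case it improves on the unmitigated value but still falls visibly short of 1.0, because the straight line misses the curvature of the decay.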

Polynomial Extrapolation

The polynomial model fits a higher-order polynomial to the data points. With k noise factors, it can fit up to a degree k-1 polynomial.

options.resilience.zne.noise_factors = [1, 3, 5, 7]

options.resilience.zne.extrapolator = "polynomial_degree_2"

Polynomial extrapolation captures nonlinear noise dependence but is prone to overfitting when the number of data points is close to the polynomial degree. Use it when you have many noise factors and the noise dependence is clearly nonlinear but you lack a physical model for the functional form.

Exponential Extrapolation

The exponential model fits E(lambda) = a + b * exp(c * lambda). This functional form is physically motivated: if each noisy gate attenuates a Pauli expectation value by a constant factor f < 1 (for example f = 1 - 2p for a Pauli error of probability p that anticommutes with the observable), the expectation value after n gates decays as f^n = exp(n * ln f). Scaling the noise by lambda scales the exponent approximately linearly, which gives an exponential dependence on the noise scale.

options.resilience.zne.noise_factors = [1, 3, 5]

options.resilience.zne.extrapolator = "exponential"

Exponential extrapolation is the default and the best general-purpose choice. It requires at least three noise factors to fit the three parameters (a, b, c). It performs well across a wide range of noise levels and circuit depths.
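With exactly three equally spaced noise factors, the three parameters can be solved in closed form, which makes the model easy to experiment with offline. The sketch below does that on synthetic, noise-free data; the function name and the parameter values are assumptions, and a production fit would more likely use least squares over noisy data.

```python
import math

def exponential_zero_noise(noise_factors, values):
    """Fit E(x) = a + b * exp(c * x) through three equally spaced points; return E(0)."""
    x1, x2, x3 = noise_factors
    y1, y2, y3 = values
    step = x2 - x1
    if not math.isclose(x3 - x2, step):
        raise ValueError("noise factors must be equally spaced")
    if y2 == y1:
        raise ValueError("values do not change with noise; nothing to fit")
    ratio = (y3 - y2) / (y2 - y1)   # equals exp(c * step) for this model
    if ratio <= 0 or math.isclose(ratio, 1.0):
        raise ValueError("data are not consistent with a single exponential")
    c = math.log(ratio) / step
    b = (y2 - y1) / (math.exp(c * x1) * (ratio - 1))
    a = y1 - b * math.exp(c * x1)
    return a + b   # value of the model at x = 0

# Synthetic data from a = 0.05, b = 0.9, c = -0.2, so the zero-noise value is 0.95.
factors = (1, 3, 5)
data = [0.05 + 0.9 * math.exp(-0.2 * x) for x in factors]
estimate = exponential_zero_noise(factors, data)
print(f"extrapolated zero-noise value: {estimate:.6f}")
```

On real data the ratio test can fail when shot noise dominates the differences between points, which is one reason to fall back to a simpler model in that case.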

Double Exponential Extrapolation

The double exponential model fits E(lambda) = a + b1 * exp(c1 * lambda) + b2 * exp(c2 * lambda). This captures systems with two distinct noise timescales, such as circuits where single-qubit and two-qubit gates have very different error rates, or where both T1 and T2 processes contribute with different rates.

options.resilience.zne.noise_factors = [1, 2, 3, 4, 5]

options.resilience.zne.extrapolator = "double_exponential"

Double exponential requires at least five noise factors (five parameters to fit) and is the most expensive option. Use it only when simpler models produce visibly poor fits or when you have reason to believe multiple noise mechanisms contribute at different scales.

Choosing an Extrapolator

A practical approach: start with exponential extrapolation and three noise factors [1, 3, 5]. If the mitigated result has high variance or seems unreliable, try adding more noise factors and switching to double exponential. Linear extrapolation is a low-overhead fallback for quick estimates. The fitted curve, when plotted, should vary smoothly and monotonically between the highest noise factor and lambda = 0, with the magnitude of the expectation value growing as lambda decreases. If the curve oscillates or the zero-noise intercept is far from the expected range, the extrapolation model is a poor fit.

Level 3: Probabilistic Error Cancellation

PEC (Probabilistic Error Cancellation) goes further than ZNE by learning a noise model of the device before the main circuit is executed. It uses a noise learning phase to characterize Pauli error rates for each gate layer, then uses that model to construct an unbiased estimator that cancels the effect of noise.

Mathematical Framework

The key insight behind PEC is that a noisy gate can be described as the ideal gate followed by a noise channel. If the noise channel Lambda is known, it can be “inverted” using a quasi-probability representation.

The inverse of a Pauli noise channel Lambda can be written as a linear combination of Pauli operations:

Lambda^{-1} = sum_i q_i * P_i

where each P_i denotes conjugation by a Pauli operator and the q_i are real coefficients that sum to 1 but are not all positive (hence “quasi-probability”). Because some q_i are negative, Lambda^{-1} is not a physical channel and cannot be implemented by sampling circuits alone. Instead, PEC samples P_i with probability |q_i| / gamma, where gamma = sum_i |q_i|, and weights each measurement outcome by gamma times the sign (+1 or -1) of the sampled coefficient.

The PEC estimator works as follows:

- For each noisy gate in the circuit, sample a Pauli correction from the quasi-probability distribution.

- Apply the sampled Pauli corrections to build a modified circuit.

- Execute the modified circuit and record the measurement outcome multiplied by the product of the signs of the sampled coefficients and by the total gamma of the circuit.

- Average over many such samples to obtain an unbiased estimate.
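The steps above can be sketched as a small Monte Carlo simulation for the simplest possible case: one qubit prepared in |0>, one noisy identity gate modeled as a bit-flip channel with probability p, and a Z measurement. The noise model, the function name, and the numbers are assumptions for illustration; the real PEC implementation works on learned multi-qubit Pauli channels.

```python
import random

def pec_estimate_z(p_flip, num_samples, seed=0):
    """Estimate <Z> on |0> after a bit-flip channel, using PEC sampling."""
    rng = random.Random(seed)
    # Quasi-probability coefficients of the inverse bit-flip channel.
    q_identity = (1 - p_flip) / (1 - 2 * p_flip)
    q_x = -p_flip / (1 - 2 * p_flip)
    gamma = abs(q_identity) + abs(q_x)

    total = 0.0
    for _ in range(num_samples):
        # Sample the correction with probability |q_i| / gamma and keep its sign.
        apply_x = rng.random() < abs(q_x) / gamma
        sign = -1.0 if apply_x else 1.0
        # The noisy gate then flips the qubit with probability p_flip.
        noise_flip = rng.random() < p_flip
        qubit_is_one = apply_x != noise_flip
        outcome = -1.0 if qubit_is_one else 1.0   # single-shot Z measurement
        total += gamma * sign * outcome
    return total / num_samples, gamma

p = 0.05
estimate, gamma = pec_estimate_z(p, num_samples=20000)
print(f"unmitigated <Z> = {1 - 2 * p:.3f}")
print(f"PEC estimate    = {estimate:.3f} (gamma = {gamma:.4f})")
```

The estimate converges to the ideal value of 1 rather than the unmitigated 0.9, but every sample has magnitude gamma instead of 1, which is the source of the variance overhead.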

The Gamma Overhead

The one-norm of the quasi-probability representation is:

gamma = sum_i |q_i|

This quantity, always greater than or equal to 1, determines the sampling overhead. If every noisy gate has the same one-norm gamma, a circuit with n noisy gates has total one-norm gamma^n, and PEC requires:

shots_PEC = gamma^{2n} * shots_noiseless

The factor gamma^{2n} is the variance overhead: PEC produces an unbiased estimate, but the variance of each sample is inflated by gamma^{2n} compared to noiseless execution. To achieve the same statistical precision as a noiseless device, you need gamma^{2n} times more shots.

For typical current hardware, gamma for a two-qubit gate is about 1.02 to 1.10, depending on the gate error rate. Consider a concrete example: a circuit with 20 two-qubit gates, each with gamma = 1.05. The shot overhead is:

1.05^{2 * 20} = 1.05^{40} ≈ 7.04

This means PEC requires roughly 7 times more shots than a noiseless device would need. For a circuit with 50 two-qubit gates at the same per-gate gamma:

1.05^{100} ≈ 131.5

The overhead grows exponentially with circuit size, which fundamentally limits PEC to circuits where the total accumulated noise is moderate.
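The two worked numbers above follow directly from the gamma^{2n} formula. This short sketch reproduces them and makes it easy to try other gate counts; the function name is an assumption, and the model treats every gate as having the same gamma.

```python
def pec_shot_overhead(gamma_per_gate, num_noisy_gates):
    """Shot multiplier gamma^(2n) relative to a noiseless device."""
    return gamma_per_gate ** (2 * num_noisy_gates)

overhead_20 = pec_shot_overhead(1.05, 20)
overhead_50 = pec_shot_overhead(1.05, 50)

for gates in (10, 20, 50, 100):
    print(f"{gates:>3} two-qubit gates: {pec_shot_overhead(1.05, gates):,.1f}x shots")
```

Doubling the gate count squares the overhead, so the practical limit on circuit size is reached quickly.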

Code

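options = EstimatorOptions()
# EstimatorV2 accepts levels 0 to 2; PEC ("level 3" here) is enabled explicitly.
options.resilience_level = 1
options.resilience.pec_mitigation = True
estimator = EstimatorV2(backend=backend, options=options)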

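PEC removes bias more completely than ZNE when the learned noise model is accurate, but it requires additional circuit executions for the learning phase and significantly more shots for sampling. It is best suited for shallow to moderately deep circuits, where gamma^{2n} stays manageable, on backends where the noise is stable over the job duration.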

Noise Learning and Characterization

When you select resilience level 3, Qiskit Runtime automatically runs a noise learning phase before executing your circuit. This phase characterizes the Pauli error rates of each gate layer in your circuit.

How Noise Learning Works

The noise learning phase runs a set of randomized benchmarking-style circuits designed to isolate the noise contribution of each gate layer. Specifically:

- The system constructs circuits that exercise each two-qubit gate layer in isolation, surrounded by random single-qubit Clifford gates.

- These learning circuits are executed on the hardware, and the deviation from ideal outcomes reveals the Pauli error rates.

- The learned error model is stored and used to construct the quasi-probability representation for PEC.

Configuring the Learning Phase

You can control the number of learning circuits, which affects the accuracy of the learned noise model:

options = EstimatorOptions()
# EstimatorV2 accepts levels 0 to 2; PEC ("level 3" here) is enabled explicitly.
options.resilience_level = 1
options.resilience.pec_mitigation = True
options.resilience.layer_noise_learning.max_layers_to_learn = 4
options.resilience.layer_noise_learning.num_randomizations = 32
options.resilience.layer_noise_learning.shots_per_randomization = 128
estimator = EstimatorV2(backend=backend, options=options)

More randomizations and shots per randomization produce a more accurate noise model at the cost of additional runtime. For circuits with stable noise characteristics, 32 randomizations with 128 shots each provides a good balance. Increase these values if you observe high variance in the PEC output or if you suspect the noise model is inaccurate.

Inspecting Learned Noise

After a level 3 job completes, you can inspect the learned noise model through the job metadata:

job = estimator.run([(isa_circuit, isa_obs, param_values)])

result = job.result()

# Access the learned noise metadata

metadata = result[0].metadata

print("PEC sampling overhead (gamma):", metadata.get("sampling_overhead"))

The sampling_overhead field reports the effective gamma^{2n} for your circuit. If this value exceeds 100, the variance inflation is severe and you should consider using ZNE (level 2) instead, or reducing the circuit depth.

Gate Twirling in Depth

Independently of resilience level, you can enable gate twirling to randomize coherent errors into stochastic (Pauli) errors. This makes the noise more amenable to mitigation.

How Pauli Twirling Works

Pauli twirling wraps each two-qubit Clifford gate C with random Pauli gates. For each execution of the circuit, a random Pauli pair (P_before, P_after) is chosen such that:

P_after * C * P_before = C

This condition ensures the ideal gate is unchanged. However, any coherent error E attached to the gate transforms differently under each random Pauli frame. Averaging over many random frames converts the coherent error into a Pauli channel (a probabilistic mixture of Pauli errors).

Mathematically, for a Clifford gate C with error channel E, Pauli twirling produces:

E_twirled(rho) = (1/4^n) * sum_P P^dag * E(P * rho * P^dag) * P

where the sum runs over all 4^n n-qubit Pauli operators P.

The resulting E_twirled is guaranteed to be a Pauli channel: a diagonal map in the Pauli basis. Pauli channels are easier to characterize (they are described by at most 4^n - 1 parameters for n qubits) and are the noise model assumed by PEC.
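The diagonalizing effect of twirling can be verified numerically on one qubit. The sketch below takes a purely coherent error (a small Rz over-rotation, an assumption chosen for illustration), averages it over the four Paulis, and compares the Pauli transfer matrices before and after. It is a plain-Python check of the averaging formula, not the Runtime implementation.

```python
import cmath
import math

I2 = [[1, 0], [0, 1]]
X = [[0, 1], [1, 0]]
Y = [[0, -1j], [1j, 0]]
Z = [[1, 0], [0, -1]]
PAULIS = [I2, X, Y, Z]

def matmul(a, b):
    return [[sum(a[i][k] * b[k][j] for k in range(2)) for j in range(2)]
            for i in range(2)]

def dagger(m):
    return [[complex(m[j][i]).conjugate() for j in range(2)] for i in range(2)]

def conjugate_by(u, rho):
    """Return u * rho * u_dagger."""
    return matmul(matmul(u, rho), dagger(u))

def twirl(channel):
    """Average P_dagger * channel(P * rho * P_dagger) * P over the four Paulis."""
    def twirled(rho):
        total = [[0j, 0j], [0j, 0j]]
        for p in PAULIS:
            term = conjugate_by(dagger(p), channel(conjugate_by(p, rho)))
            total = [[total[i][j] + term[i][j] / 4 for j in range(2)]
                     for i in range(2)]
        return total
    return twirled

def pauli_transfer_matrix(channel):
    """R[i][j] = Tr(P_i * channel(P_j)) / 2; diagonal for a Pauli channel."""
    rows = []
    for p_i in PAULIS:
        row = []
        for p_j in PAULIS:
            m = matmul(p_i, channel(p_j))
            row.append(((m[0][0] + m[1][1]) / 2).real)
        rows.append(row)
    return rows

epsilon = 0.2   # coherent over-rotation angle (illustrative)
rz = [[cmath.exp(-0.5j * epsilon), 0], [0, cmath.exp(0.5j * epsilon)]]

def coherent_error(rho):
    return conjugate_by(rz, rho)

ptm_raw = pauli_transfer_matrix(coherent_error)
ptm_twirled = pauli_transfer_matrix(twirl(coherent_error))

print("raw off-diagonal entry:    ", round(ptm_raw[2][1], 6))
print("twirled off-diagonal entry:", round(ptm_twirled[2][1], 6))
print("twirled diagonal entry:    ", round(ptm_twirled[1][1], 6),
      "vs cos(epsilon) =", round(math.cos(epsilon), 6))
```

The off-diagonal entry sin(epsilon) of the coherent error vanishes after twirling, leaving a diagonal matrix, which is exactly the Pauli-channel structure that PEC assumes.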

Why Twirling Matters for PEC

PEC assumes the noise on each gate is a Pauli channel. If the actual noise has coherent components (systematic rotations, correlated phases), PEC’s quasi-probability inversion produces a biased estimate. Enabling twirling before PEC ensures the assumption holds, making the PEC estimate truly unbiased.

For ZNE (level 2), twirling also helps because Pauli noise (of which depolarizing noise is a special case) produces an approximately exponential decay in expectation values, which the exponential extrapolator models accurately.

Configuring Twirling

options = EstimatorOptions()

options.resilience_level = 2

options.twirling.enable_gates = True

options.twirling.enable_measure = True

options.twirling.num_randomizations = 32 # number of random twirl instances

estimator = EstimatorV2(backend=backend, options=options)

The num_randomizations parameter controls how many distinct random Pauli frames are sampled. Each frame produces one circuit variant; the final result averages over all variants. The variance of the averaged result decreases as 1/num_randomizations.

How Many Randomizations?

# Conservative: low variance, higher runtime

options.twirling.num_randomizations = 128

# Balanced: good for most circuits

options.twirling.num_randomizations = 64

# Aggressive: faster but noisier

options.twirling.num_randomizations = 32

For PEC (level 3), use at least 64 randomizations because the quasi-probability sampling already inflates variance. For ZNE (level 2), 32 randomizations are usually sufficient. For level 1 (readout mitigation only), measurement twirling with 16 randomizations works well.

Twirling runs the circuit multiple times with randomly chosen Pauli frames, then averages the results. Coherent errors (systematic over- or under-rotations) average out, leaving only incoherent noise that ZNE and PEC handle well.

Session-Based Multi-Circuit Execution

When running iterative algorithms like VQE or QAOA, you submit many circuit evaluations sequentially, each with different parameter values. Without a session, each job enters the backend queue independently, adding minutes of queue latency per iteration. Qiskit Runtime Sessions solve this by reserving backend access for the duration of your optimization loop.

How Sessions Work

A Session establishes a persistent connection to a specific backend. While the session is active, all jobs submitted within it execute with priority access, bypassing the general queue. The backend resources remain allocated to your session until you close it or it times out.

VQE with a Session

import numpy as np

from scipy.optimize import minimize

from qiskit import QuantumCircuit

from qiskit.circuit import ParameterVector

from qiskit.quantum_info import SparsePauliOp

from qiskit.transpiler.preset_passmanagers import generate_preset_pass_manager

from qiskit_ibm_runtime import (

QiskitRuntimeService, EstimatorV2, EstimatorOptions, Session

)

# Connect to backend

service = QiskitRuntimeService()

backend = service.least_busy(operational=True, simulator=False)

# Build ansatz

theta = ParameterVector("theta", 4)

qc = QuantumCircuit(2)

qc.ry(theta[0], 0)

qc.ry(theta[1], 1)

qc.cx(0, 1)

qc.ry(theta[2], 0)

qc.ry(theta[3], 1)

# Hamiltonian

hamiltonian = SparsePauliOp.from_list([("ZI", 1.0), ("IZ", 1.0), ("XX", 0.5)])

# Transpile to ISA circuit

pm = generate_preset_pass_manager(optimization_level=1, backend=backend)

isa_qc = pm.run(qc)

isa_ham = hamiltonian.apply_layout(isa_qc.layout)

# Configure mitigation

options = EstimatorOptions()

options.resilience_level = 2

options.twirling.enable_gates = True

options.twirling.num_randomizations = 32

# Define cost function that runs inside the session

def cost_function(params, estimator, circuit, observable):
    """Evaluate <H> at the given parameter values."""
    job = estimator.run([(circuit, observable, params)])
    result = job.result()
    # evs is a 0-d array for a single parameter set; convert it to a plain float
    energy = float(result[0].data.evs)
    print(f" Energy: {energy:.6f}")
    return energy

# Run VQE inside a session
with Session(backend=backend) as session:
    estimator = EstimatorV2(session=session, options=options)
    x0 = np.random.uniform(-np.pi, np.pi, 4)
    result = minimize(
        cost_function,
        x0,
        args=(estimator, isa_qc, isa_ham),
        method="COBYLA",
        options={"maxiter": 50},
    )

print(f"Optimal energy: {result.fun:.6f}")
print(f"Optimal parameters: {result.x}")

The with Session(...) context manager automatically opens the session at entry and closes it at exit. All estimator.run() calls within the block share the same session. For a 50-iteration COBYLA optimization, this can reduce total wall-clock time from hours (with individual queue waits) to minutes (with session priority).

Session Best Practices

- Keep the session duration short. Sessions that idle for too long consume backend allocation without doing useful work.

- Do not open a session for a single job. Sessions add value only for multi-job workflows.

- Handle exceptions inside the session block to ensure the session closes cleanly even if an optimization step fails.

- Monitor session usage through the IBM Quantum dashboard to avoid exceeding your allocation.

Complete Example: VQE with Three Resilience Levels

import numpy as np

from qiskit import QuantumCircuit

from qiskit.circuit import ParameterVector

from qiskit.quantum_info import SparsePauliOp

from qiskit.transpiler.preset_passmanagers import generate_preset_pass_manager

from qiskit_ibm_runtime import QiskitRuntimeService, EstimatorV2, EstimatorOptions

# Connect to IBM backend

service = QiskitRuntimeService()

backend = service.least_busy(operational=True, simulator=False)

# Build a small ansatz

theta = ParameterVector("theta", 4)

qc = QuantumCircuit(2)

qc.ry(theta[0], 0)

qc.ry(theta[1], 1)

qc.cx(0, 1)

qc.ry(theta[2], 0)

qc.ry(theta[3], 1)

# Hamiltonian: Z0 + Z1 + 0.5*X0X1

hamiltonian = SparsePauliOp.from_list([("ZI", 1.0), ("IZ", 1.0), ("XX", 0.5)])

# Transpile

pm = generate_preset_pass_manager(optimization_level=1, backend=backend)

isa_qc = pm.run(qc)

isa_ham = hamiltonian.apply_layout(isa_qc.layout)

param_vals = np.array([[0.1, 0.2, 0.3, 0.4]])

results = {}

for level in [0, 1, 2]:
    opts = EstimatorOptions()
    opts.resilience_level = level
    est = EstimatorV2(backend=backend, options=opts)
    job = est.run([(isa_qc, isa_ham, param_vals)])
    # param_vals has shape (1, 4), so evs has shape (1,); take the single entry
    results[level] = float(job.result()[0].data.evs[0])
    print(f"Level {level}: <H> = {results[level]:.4f}")

Comparing Mitigation Methods: Concrete Numbers

To make the tradeoffs tangible, consider a specific benchmark: computing the ground state energy of H2 (molecular hydrogen) at bond distance 0.735 angstroms using a 4-qubit UCCSD ansatz. The table below shows representative results from IBM hardware (values vary by backend and day, but the relative trends are consistent).

| Method | Expectation Value (Ha) | Error vs Ideal (Ha) | Std Dev (Ha) | Relative Shots |
|---|---|---|---|---|
| Ideal (statevector) | -1.1373 | 0.0000 | 0.0000 | N/A |
| Level 0 (raw) | -0.9241 | 0.2132 | 0.0089 | 1x |
| Level 1 (T-REx) | -0.9687 | 0.1686 | 0.0092 | 1.3x |
| Level 2 (ZNE) | -1.0891 | 0.0482 | 0.0215 | 3x |
| Level 2 + twirling | -1.1024 | 0.0349 | 0.0198 | 3x |
| Level 3 (PEC) | -1.1295 | 0.0078 | 0.0437 | 8x |

Several patterns are visible:

- Level 1 corrects the readout bias but does not touch gate errors, which dominate for this 4-qubit circuit with multiple CNOT layers. The improvement over raw is modest.

- Level 2 (ZNE) reduces the error by roughly 4x compared to raw, at the cost of 3x more shots and noticeably higher standard deviation.

- Twirling combined with ZNE further improves accuracy by converting coherent gate errors to stochastic noise before extrapolation.

- Level 3 (PEC) gets closest to the ideal value (within 0.008 Ha), but the standard deviation is 5x higher than raw. To reduce the PEC standard deviation to match the raw measurement precision, you would need approximately 25x more shots (5^2 = 25, since standard deviation scales as 1/sqrt(shots)).
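The shot estimate in the last bullet follows from the 1/sqrt(shots) scaling of the standard deviation. The sketch below redoes the arithmetic with the unrounded table values; the function name is an assumption for illustration.

```python
def extra_shots_factor(std_dev_mitigated, std_dev_raw):
    """Shot multiplier needed to bring the mitigated std dev down to the raw one."""
    # Standard deviation scales as 1 / sqrt(shots), so shots scale as its square.
    return (std_dev_mitigated / std_dev_raw) ** 2

std_ratio = 0.0437 / 0.0089
factor = extra_shots_factor(0.0437, 0.0089)

print(f"std dev ratio (PEC / raw): {std_ratio:.2f}")
print(f"extra shots needed:        {factor:.1f}x")
```

With the table values the ratio is about 4.9, giving roughly 24x, consistent with the rounded figure of 25x quoted above.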

The key takeaway: higher resilience levels reduce systematic bias at the cost of increased statistical variance. The optimal choice depends on your error budget and shot budget.

Error Mitigation for SamplerV2

The tutorial so far focuses on the Estimator primitive, which computes expectation values. Qiskit Runtime also offers the Sampler primitive for sampling measurement outcomes (bitstring counts). Error mitigation applies to the Sampler as well, though with important differences.

Readout Mitigation for Sampler

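SamplerV2 does not expose a resilience_level option; its built-in options cover error suppression (dynamical decoupling and twirling). Readout error mitigation for sampled bitstrings is therefore applied in post-processing, typically with M3 (Matrix-free Measurement Mitigation, provided by the mthree package). M3 corrects the raw bitstring counts using a calibrated readout error model, playing the same role for Sampler that T-REx plays for Estimator. In the rest of this tutorial, "level 1" for Sampler means this readout correction.

from qiskit_ibm_runtime import SamplerV2, SamplerOptions

# SamplerOptions has no resilience_level attribute
options = SamplerOptions()
sampler = SamplerV2(backend=backend, options=options)
job = sampler.run([(isa_circuit,)])
result = job.result()

# Raw counts; "meas" is the classical register created by measure_all().
# Readout correction (M3) is applied to these counts afterwards.
counts = result[0].data.meas.get_counts()
print(counts)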

What Mitigation Methods Apply to Sampler?

ZNE and PEC are designed for expectation values, not probability distributions. ZNE works by fitting a curve to how an expectation value changes with noise level and extrapolating to zero noise. Probability distributions do not have the same simple functional dependence on noise scale, so ZNE extrapolation does not apply directly.

For SamplerV2, the mitigation options are:

- Level 0: No mitigation. Raw bitstring counts.

- Level 1: Readout error mitigation via M3. Corrects measurement errors.

If you need accurate probability distributions, use SamplerV2 with level 1. If you need accurate expectation values, use EstimatorV2 with level 2 or 3. In many workflows, you can compute expectation values from Sampler output manually, but doing so forfeits the ZNE and PEC corrections that Estimator provides.

When to Avoid Error Mitigation

Error mitigation is not always the right choice. Several situations call for running with level 0 or level 1 at most.

When You Need Raw Bitstring Statistics

If your algorithm relies on the full probability distribution or specific bitstring samples (combinatorial optimization, sampling-based algorithms), ZNE and PEC do not apply. These methods produce corrected expectation values, not corrected bitstring distributions. Use level 0 or level 1 (readout correction only).

When Your Circuit Exceeds the Decoherence Limit

A circuit whose total execution time exceeds T1 or T2 has lost most of its quantum coherence. The expectation values are dominated by noise, not signal. Mitigation amplifies both the residual signal and the noise floor. For extremely noisy circuits, ZNE extrapolation becomes numerically unstable (the extrapolated value has enormous variance), and PEC’s shot overhead gamma^{2n} becomes prohibitively large. If your circuit depth exceeds roughly 0.5 * T2 / gate_time, mitigation is unlikely to help.
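The rule of thumb at the end of the paragraph above is easy to turn into a quick pre-flight check. In this sketch the T2 value and the 0.5 microsecond two-qubit gate time are illustrative assumptions, not properties of any particular backend, and the function name is hypothetical.

```python
def max_useful_depth(t2_us, gate_time_us, fraction=0.5):
    """Largest circuit depth for which mitigation is still likely to help."""
    return int(fraction * t2_us / gate_time_us)

def mitigation_likely_to_help(circuit_depth, t2_us, gate_time_us):
    """Apply the 0.5 * T2 / gate_time rule of thumb to a given depth."""
    return circuit_depth <= max_useful_depth(t2_us, gate_time_us)

depth_limit = max_useful_depth(t2_us=100.0, gate_time_us=0.5)
print(f"depth limit: {depth_limit} layers")
print("depth 60: ", mitigation_likely_to_help(60, 100.0, 0.5))
print("depth 250:", mitigation_likely_to_help(250, 100.0, 0.5))
```

The threshold is deliberately coarse: it treats every layer as taking one two-qubit gate time and ignores readout and idle periods.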

When Running Many Parameter Points

Variational algorithms (VQE, QAOA) evaluate the cost function at many parameter points during optimization. ZNE multiplies the shot count by 3 to 5x per evaluation, and PEC by 5 to 10x. For a 200-iteration optimizer, ZNE turns a 1-hour job into a 3 to 5 hour job. Consider using level 1 (cheap readout correction) during the optimization loop and reserving level 2 or 3 for a final evaluation at the converged parameters.

When Developing and Debugging

During circuit development, you want to see the true hardware behavior, including its flaws. Level 0 reveals the raw noise floor, which helps you diagnose whether your circuit is too deep, whether specific qubits have high error rates, or whether the transpiler layout is suboptimal. Once the circuit design is finalized, enable mitigation for production runs.

Decision Flowchart

Follow this sequence to choose the appropriate mitigation level:

1. Are you computing expectation values (Estimator) or sampling bitstrings (Sampler)?

   - If sampling: use level 0 or level 1 only. Go to step 5.

   - If expectation values: continue.

2. Is your circuit near or beyond the decoherence limit?

   - If yes: level 0 or 1. Mitigation will not help.

   - If no: continue.

3. Are you in an optimization loop with many evaluations?

   - If yes: use level 1 during optimization, level 2 or 3 for final evaluation.

   - If no: continue.

4. Do you need the highest possible accuracy and can afford 5 to 10x shot overhead?

   - If yes: level 3 (PEC) with twirling.

   - If no: level 2 (ZNE) with twirling.

5. Always enable readout mitigation (level 1 at minimum) for production results. The overhead is negligible.
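The sequence above can be expressed as a small helper function. This is a sketch of the decision logic only, not part of Qiskit Runtime: the names Recommendation and choose_level are hypothetical, and the choice between level 2 and level 3 for the final evaluation of an optimization loop is assumed to follow the accuracy question.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Recommendation:
    level: int                         # level for the main runs
    final_level: Optional[int] = None  # level for a final evaluation, if different
    twirling: bool = False             # whether to enable twirling for ZNE/PEC runs


def choose_level(
    uses_estimator: bool,
    near_decoherence_limit: bool,
    in_optimization_loop: bool,
    needs_highest_accuracy: bool,
    production: bool = True,
) -> Recommendation:
    # Readout mitigation at minimum for production results, raw output otherwise.
    readout_only = 1 if production else 0

    # Sampling bitstrings: ZNE and PEC do not apply.
    if not uses_estimator:
        return Recommendation(level=readout_only)

    # Near or beyond the decoherence limit: heavier mitigation will not help.
    if near_decoherence_limit:
        return Recommendation(level=readout_only)

    # PEC if the 5 to 10x overhead is affordable, ZNE otherwise.
    best = 3 if needs_highest_accuracy else 2

    # Cheap level inside an optimization loop, full mitigation at the end.
    if in_optimization_loop:
        return Recommendation(level=1, final_level=best, twirling=True)

    return Recommendation(level=best, twirling=True)


print(choose_level(uses_estimator=True, near_decoherence_limit=False,
                   in_optimization_loop=True, needs_highest_accuracy=False))
```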

Common Mistakes

Forgetting apply_layout for Observables

After transpiling a circuit, the logical-to-physical qubit mapping changes. Observables defined on logical qubits must be remapped to match the transpiled layout. Forgetting this step causes the Estimator to measure the wrong Pauli terms.

# WRONG: observable on logical qubits, circuit on physical qubits

job = estimator.run([(isa_circuit, hamiltonian, params)]) # mismatch!

# CORRECT: apply the transpiler layout to the observable

isa_ham = hamiltonian.apply_layout(isa_circuit.layout)

job = estimator.run([(isa_circuit, isa_ham, params)])

This is an ISA (Instruction Set Architecture) compliance requirement. The Estimator will raise an error or silently produce incorrect results if the observable does not match the circuit layout.

Using Level 3 on Simulators

PEC learns the noise model from the actual hardware during execution. When you run on a noiseless simulator, there is no noise to learn, and PEC has no effect. The learning circuits execute, consume time, and produce a trivial noise model (identity channels everywhere). Use level 0 on simulators unless you are running a noisy simulation with a custom noise model.

Insufficient Shots for ZNE

ZNE fits a curve through noisy data points. If each data point has high variance (too few shots), the fit is unreliable and the extrapolated value can be wildly off. A rough guideline: allocate at least 4,000 shots per noise factor. With three noise factors [1, 3, 5], that means at least 12,000 total shots. If you observe that the mitigated expectation value fluctuates dramatically between repeated runs, increase the shot count before changing the extrapolation method.
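To see why the shot count matters, the sketch below estimates how much extrapolation amplifies statistical error. It assumes exact polynomial (Richardson) extrapolation through all noise factors, which is only one of several possible extrapolators, and a Pauli observable whose single-point standard error is bounded by 1/sqrt(shots). The function names are hypothetical.

```python
import math


def richardson_coefficients(noise_factors: list[float]) -> list[float]:
    """Weights c_i such that the zero-noise estimate is sum_i c_i * E(lambda_i)."""
    coeffs = []
    for i, xi in enumerate(noise_factors):
        c = 1.0
        for j, xj in enumerate(noise_factors):
            if j != i:
                c *= xj / (xj - xi)
        coeffs.append(c)
    return coeffs


def extrapolated_std_error(noise_factors: list[float], shots_per_factor: int) -> float:
    """Upper bound on the standard error of the extrapolated value."""
    per_point = 1.0 / math.sqrt(shots_per_factor)  # bound for a +/-1-valued observable
    coeffs = richardson_coefficients(noise_factors)
    amplification = math.sqrt(sum(c * c for c in coeffs))
    return per_point * amplification


factors = [1, 3, 5]
print("Weights:", richardson_coefficients(factors))
for shots in (500, 4000, 16000):
    err = extrapolated_std_error(factors, shots)
    print(f"{shots:6d} shots per factor -> std error <= {err:.4f}")
```

With noise factors [1, 3, 5] the weights are 1.875, -1.25, and 0.375, so the extrapolated value is roughly 2.3 times noisier than a single data point. Quadrupling the shots halves that error.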

Confusing ZNE with Error Correction

ZNE mitigates errors in the final expectation value by statistical post-processing. It does not correct errors at the physical level. The individual circuit executions are still noisy; each bitstring sample is still corrupted. ZNE only recovers the correct expectation value on average over many samples. This distinction matters: ZNE cannot improve the fidelity of individual quantum states, and it cannot help with algorithms that require high-fidelity intermediate states (such as quantum error correction encoding circuits).

Running PEC Without Twirling

PEC assumes the noise on each gate is a Pauli channel. If the actual noise includes coherent components (unitary over-rotations, correlated phase errors), PEC’s quasi-probability inversion is biased. Always enable gate twirling when using level 3:

options = EstimatorOptions()

options.resilience_level = 3

options.twirling.enable_gates = True # critical for PEC correctness

options.twirling.enable_measure = True

options.twirling.num_randomizations = 64

estimator = EstimatorV2(backend=backend, options=options)

Without twirling, PEC may return results that are more biased than ZNE, despite the higher computational cost.

Treating Mitigated Values as Exact

Mitigated expectation values are still statistical estimates with finite precision. For a fixed shot budget, the standard error of a mitigated estimate is generally larger than that of the raw estimate, because mitigation inflates variance. When reporting mitigated results, always include the standard error:

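result = job.result()

# evs and stds are NumPy arrays; for a single circuit/observable pair they hold one value each

expectation_value = float(result[0].data.evs)

std_error = float(result[0].data.stds)

# Report both value and uncertainty

print(f"<H> = {expectation_value:.4f} ± {std_error:.4f}")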

Do not interpret mitigated values as ground truth. They are improved estimates, but they still carry statistical and systematic uncertainty.

Shot Overhead and Runtime Scaling

Mitigation is not free. Here is a rough guide to the shot multiplier each level adds:

- Level 0: 1x shots. No overhead.

- Level 1: 1.2-1.5x. Calibration circuits are quick.

- Level 2 (ZNE, 3 noise factors): 3x shots, and the folded circuits take longer to execute.

- Level 3 (PEC): 5-10x, depending on circuit depth and number of learning circuits.

For short NISQ circuits with fewer than 50 gates, level 2 usually gives a worthwhile accuracy improvement. For circuits approaching the decoherence limit, mitigation does not help much because the signal is buried in noise regardless.

What Mitigation Cannot Fix

Error mitigation reduces the bias in your expectation value estimates, but it increases their variance, so more shots are needed to achieve the same standard error. There is a fundamental tradeoff: the more noise you have, the more the variance inflates, and at some point the number of shots required to compensate becomes impractical.

Mitigation also cannot solve algorithmic problems. If your variational ansatz is in a barren plateau, a region of parameter space where gradients are exponentially small, ZNE and PEC will faithfully report a near-zero gradient, not a useful one. Fixing barren plateaus requires circuit design changes, not mitigation.

Choosing the Right Level

- Readout-dominated noise (short circuits, accurate gates): level 1 is sufficient.

- Coherent gate errors with moderate depth: level 2 with twirling enabled.

- Systematic, stable errors on a well-characterized backend: level 3 for the best accuracy.

- Simulation benchmarks or debugging: level 0 to see the raw, unmitigated output.
