Fault-Tolerant Quantum Gates: Why T Gates Need Magic States

Physical vs logical gates, transversal Cliffords on the surface code, the Eastin-Knill obstruction, and the 15-to-1 magic state protocol that makes T gates fault-tolerant.

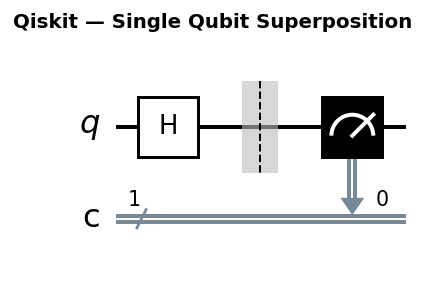

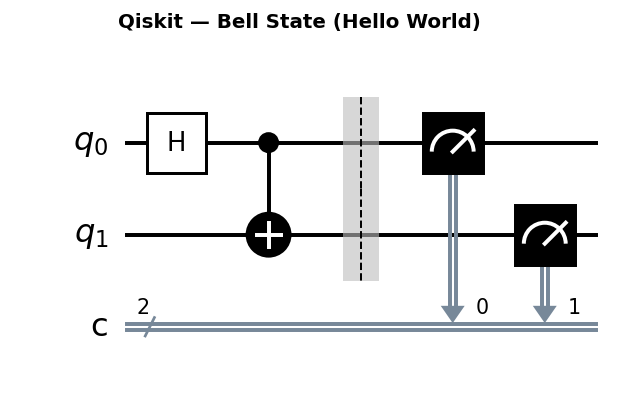

Circuit diagrams

Fault-tolerant quantum computing is the regime where quantum error correction (QEC) is active, logical error rates fall exponentially with code distance, and computation can run indefinitely without accumulating catastrophic errors. Getting there requires more than encoding qubits into error-correcting codes. Every gate applied to a logical qubit must itself be fault-tolerant, meaning a single physical error during the gate cannot propagate into an uncorrectable logical error. That constraint reshapes almost everything about how we think about quantum computation.

Physical Gates vs. Logical Gates

A physical gate is a pulse or interaction applied directly to one or more hardware qubits. On a superconducting processor, a physical CNOT might be a microwave pulse lasting 100-400 ns with a two-qubit error rate of 0.1-1%. Physical gates are the native vocabulary of the hardware.

A logical gate acts on a logical qubit, which is encoded across many physical qubits by a QEC code. For the distance-3 surface code, one logical qubit spans 9 data qubits and 8 ancilla qubits. A logical gate on this qubit is a carefully coordinated pattern of physical gates designed so that:

- It implements the correct logical operation when no errors occur.

- A single physical error anywhere in the pattern produces at most a correctable error pattern on the output.

These two requirements are in tension. A naive circuit, for example one that lets a single physical qubit interact with several qubits of the same code block, can implement the correct logical operation when nothing goes wrong, but an error on one physical qubit can then spread to many qubits through the entangling gates, potentially exceeding the code’s correction capacity.

Transversal Gates and Why They Work

The elegant solution for some gates is transversality: apply the physical gate bitwise, qubit-by-qubit, between two code blocks. No qubit in block A interacts with more than one qubit in block B.

For the surface code and many stabilizer codes, the Clifford group (H, S, CNOT, and their compositions) can be implemented transversally or through similarly structured low-overhead schemes. A transversal CNOT on the surface code pairs each data qubit in the control block with the corresponding data qubit in the target block. Because each physical CNOT touches only one qubit per block, an error on qubit i in block A can only affect qubit i in block B. The resulting error pattern is still a correctable single-qubit error in block B. Fault tolerance is maintained by construction.
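The propagation argument can be sketched in plain Python, without a quantum simulator. Bit-flip (X) errors are tracked as a set of qubit labels, using the standard rule that a CNOT copies an X error from its control onto its target. The helper names (`propagate_x`, `errors_in_block`) and the fan-out counterexample are illustrative assumptions, not part of any library.

```python
def propagate_x(cnots, errors):
    """Push X errors through a list of (control, target) CNOTs.

    A CNOT copies an X error on its control onto its target;
    two X errors on the same qubit cancel.
    """
    errors = set(errors)
    for control, target in cnots:
        if control in errors:
            errors ^= {target}
    return errors


def errors_in_block(errors, block):
    """Sorted indices of the qubits in `block` that carry an X error."""
    return sorted(i for (b, i) in errors if b == block)


# Transversal CNOT: qubit i of block A only ever touches qubit i of block B.
transversal = [(("A", i), ("B", i)) for i in range(3)]

# Non-transversal counterexample: one qubit of A fans out to all of B.
fan_out = [(("A", 0), ("B", i)) for i in range(3)]

fault = {("A", 0)}  # a single X error on qubit 0 of block A before the gate

after_transversal = propagate_x(transversal, fault)
after_fan_out = propagate_x(fan_out, fault)

print("transversal, errors in B:", errors_in_block(after_transversal, "B"))
print("fan-out, errors in B:", errors_in_block(after_fan_out, "B"))
```

In the transversal case the single fault reaches exactly one qubit of block B. In the fan-out case it reaches all three, which a distance-3 code cannot correct.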

The Clifford group gives a rich set of fault-tolerant logical gates on the surface code: logical CNOT is bitwise, logical Hadamard is a bitwise Hadamard that also flips the lattice orientation, and logical S (phase gate) can be implemented via ancilla-assisted techniques. Together these cover a large fraction of practically useful quantum operations, including the stabilizer-measurement circuits of quantum error correction itself.

The Eastin-Knill Theorem and the T Gate Problem

Here the good news runs out. The Eastin-Knill theorem (2009) states that no quantum error-correcting code can implement a universal gate set transversally. There will always be at least one gate that cannot be done transversally. For the surface code and most other leading codes, that gate is the T gate (also called the pi/8 gate), defined as:

T = [[1, 0], [0, exp(i*pi/4)]]

The T gate matters because {H, CNOT, T} is a universal gate set. Without a fault-tolerant T gate, fault-tolerant universal computation is impossible regardless of how good your Clifford implementation is. And T gates appear constantly in real algorithms: Toffoli gates (used throughout arithmetic and oracle circuits) require 7 T gates in the standard decomposition. The quantum Fourier transform, Hamiltonian simulation, and Shor’s algorithm all need many T gates.
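A few properties of the matrix above can be checked numerically. The sketch below uses only the Python standard library. The 2x2 helpers `matmul`, `dagger`, and `close` are written here for illustration. It checks that T squared is S, that T to the fourth power is Z, and that conjugating the Pauli X by T gives (X + Y)/sqrt(2) rather than another Pauli. Clifford gates map Paulis to Paulis under conjugation, so this is one way to see that T lies outside the Clifford group.

```python
import cmath
import math


def matmul(a, b):
    """Product of two 2x2 matrices given as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(2)) for j in range(2)]
            for i in range(2)]


def dagger(a):
    """Conjugate transpose of a 2x2 matrix."""
    return [[complex(a[j][i]).conjugate() for j in range(2)] for i in range(2)]


def close(a, b, tol=1e-12):
    """Entrywise comparison of two 2x2 matrices."""
    return all(abs(a[i][j] - b[i][j]) < tol for i in range(2) for j in range(2))


T = [[1, 0], [0, cmath.exp(1j * cmath.pi / 4)]]
S = [[1, 0], [0, 1j]]
Z = [[1, 0], [0, -1]]
X = [[0, 1], [1, 0]]
Y = [[0, -1j], [1j, 0]]

T2 = matmul(T, T)
T4 = matmul(T2, T2)
TXT = matmul(matmul(T, X), dagger(T))  # T X T^dagger
x_plus_y = [[(X[i][j] + Y[i][j]) / math.sqrt(2) for j in range(2)]
            for i in range(2)]

print("T^2 == S:", close(T2, S))
print("T^4 == Z:", close(T4, Z))
print("T X T^dagger == (X + Y)/sqrt(2):", close(TXT, x_plus_y))
```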

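You cannot simply apply physical T gates to a logical qubit. On the surface code, applying T to every data qubit does not act as a logical T, and a non-transversal circuit that does implement the logical T lets a single physical fault spread into an error pattern the code cannot correct.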

Magic State Distillation: The 15-to-1 Protocol

The standard solution is magic state distillation, introduced by Bravyi and Kitaev in 2005. The key insight is that T gates are hard but Clifford operations are easy. You can prepare a special resource state, called a magic state, using noisy physical operations and then consume that state (via Clifford gates only) to apply a perfect logical T gate. The remaining problem is that the magic state is noisy. Distillation purifies many noisy magic states into fewer, higher-fidelity ones.
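The "consume a magic state with Clifford gates" step can be sketched with a two-qubit state-vector calculation in plain Python. The circuit assumed here is one common form of gate teleportation: a CNOT with the data qubit as control and the magic state as target, a Z-basis measurement of the magic qubit, and an S correction on the data qubit when the outcome is 1. The function name `t_via_magic_state` and the test state are illustrative choices. The `outcome` argument selects which measurement branch to follow instead of sampling it.

```python
import cmath
import math

OMEGA = cmath.exp(1j * cmath.pi / 4)  # the T-gate phase exp(i*pi/4)


def t_via_magic_state(psi, outcome):
    """Apply T to psi = (a, b) by consuming one |T> = (|0> + OMEGA|1>)/sqrt(2)."""
    a, b = psi
    r = math.sqrt(2)
    # Joint amplitudes indexed [data][magic] for psi tensor |T>.
    joint = [[a / r, a * OMEGA / r],
             [b / r, b * OMEGA / r]]
    # CNOT (data controls magic): flip the magic qubit when data = 1.
    joint[1][0], joint[1][1] = joint[1][1], joint[1][0]
    # Project the magic qubit onto |outcome> and renormalise the data qubit.
    branch = [joint[0][outcome], joint[1][outcome]]
    norm = math.sqrt(sum(abs(x) ** 2 for x in branch))
    branch = [x / norm for x in branch]
    if outcome == 1:
        branch[1] *= 1j  # Clifford correction S = diag(1, i)
    return branch


def overlap(u, v):
    """|<u|v>|; equals 1 when the states agree up to a global phase."""
    return abs(sum(complex(x).conjugate() * y for x, y in zip(u, v)))


psi = (0.6, 0.8j)                  # arbitrary normalised test state
target = (psi[0], OMEGA * psi[1])  # T|psi> computed directly

for outcome in (0, 1):
    result = t_via_magic_state(psi, outcome)
    print("outcome", outcome, "overlap with T|psi>:",
          round(overlap(target, result), 9))
```

Both branches reproduce T|psi> up to a global phase, and the only operations applied to the data qubit are a CNOT, a measurement, and a conditional S, all of which are Clifford-level.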

The canonical protocol is the 15-to-1 distillation circuit. It takes 15 noisy |T> states (each with physical error rate p) and, using only Clifford operations and measurements, outputs 1 magic state with error rate roughly 35p^3. If p = 0.1%, the output error rate is 35 * (10^-3)^3 = 3.5 * 10^-8. One round of distillation is often sufficient; two rounds can achieve error rates below 10^-15.
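The arithmetic in this paragraph is easy to reproduce. The sketch below applies the leading-order formula 35p^3 repeatedly; the function names are illustrative. It ignores higher-order terms, Clifford-gate noise, and rejected batches, so it should be read as an estimate rather than a simulation of the protocol.

```python
def distilled_error(p, rounds=1):
    """Leading-order output error after `rounds` of 15-to-1 distillation."""
    for _ in range(rounds):
        p = 35 * p ** 3
    return p


def raw_states_needed(rounds):
    """Noisy inputs consumed per output state, ignoring rejected batches."""
    return 15 ** rounds


for rounds in (1, 2):
    print(rounds, raw_states_needed(rounds),
          f"{distilled_error(1e-3, rounds):.2e}")
# 1 15 3.50e-08
# 2 225 1.50e-21
```

Under these assumptions two rounds cost 225 raw states per output, which is the price of pushing the error far below the 10^-15 level.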

The 15-to-1 protocol is essentially a verification of the [[15,1,3]] Reed-Muller code. The 15 input magic states are checked against the stabilizers of this code. If any stabilizer measurement fails, the batch is rejected. Accepted outputs have dramatically suppressed error rates.
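One way to see where the coefficient 35 comes from is to count the error patterns that slip past the checks. The sketch below assumes the four parity checks have the Hamming-style layout usually used to present this code: input k (for k = 1..15) participates in check j exactly when bit j of k is set, so a pattern is undetected when the labels of the faulty inputs XOR to zero. That layout and the helper name `passes_all_checks` are assumptions made for illustration.

```python
from itertools import combinations

# Inputs are labelled 1..15; the 4-bit binary expansion of each label is
# that input's column in the (assumed) parity-check matrix.
labels = range(1, 16)


def passes_all_checks(error_positions):
    """An error pattern is undetected when its columns XOR to zero."""
    syndrome = 0
    for position in error_positions:
        syndrome ^= position
    return syndrome == 0


undetected = {
    weight: sum(passes_all_checks(c) for c in combinations(labels, weight))
    for weight in (1, 2, 3)
}
print(undetected)  # {1: 0, 2: 0, 3: 35}
```

Every pattern of one or two faulty inputs is caught, and exactly 35 patterns of three faulty inputs pass, which matches the leading-order 35p^3 behaviour.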

The resource cost is staggering. Each of the 15 input magic states must itself be prepared on a small dedicated code block. For a surface code at distance 5, preparing one noisy magic state requires roughly 50 physical qubits and several rounds of syndrome measurement. The 15-to-1 factory therefore needs ~750 physical qubits to produce a single logical T gate. More conservative estimates for practical computation target distances of 7-15, pushing the factory to thousands of physical qubits per logical T gate consumed.
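The footprint arithmetic can be sketched as follows. It assumes 2d^2 - 1 physical qubits per distance-d surface-code patch (data plus ancilla) and exactly one patch per input state. Real factory layouts add routing space and reuse patches over time, so these are order-of-magnitude figures only, and the function names are illustrative.

```python
def patch_qubits(d):
    """Physical qubits in one distance-d surface-code patch (data + ancilla)."""
    return 2 * d * d - 1


def factory_qubits(d, inputs=15):
    """Rough footprint: one patch per input state, nothing else counted."""
    return inputs * patch_qubits(d)


for d in (5, 7, 15):
    print(d, patch_qubits(d), factory_qubits(d))
# 5 49 735
# 7 97 1455
# 15 449 6735
```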

For a 100-logical-qubit algorithm with a T count of 10^6 (modest by Shor’s algorithm standards), the total physical qubit requirement can exceed 100,000 qubits with a substantial fraction dedicated to T gate factories running in parallel.

Simulating a Logical CNOT on a 3-Qubit Repetition Code

The 3-qubit bit-flip repetition code encodes one logical qubit into three: |0_L> = |000>, |1_L> = |111>. A logical CNOT between two such code blocks is transversal: apply physical CNOT from qubit i of block A to qubit i of block B, for i in {0,1,2}.

```python
from qiskit import QuantumCircuit, QuantumRegister, ClassicalRegister
from qiskit_aer import AerSimulator

# Two logical qubits, each encoded in 3 physical qubits,
# plus 4 ancillas for syndrome measurement (2 per block).
ctrl = QuantumRegister(3, 'ctrl')
tgt = QuantumRegister(3, 'tgt')
anc = QuantumRegister(4, 'anc')  # anc[0,1] for ctrl (unused here), anc[2,3] for tgt
c_out = ClassicalRegister(3, 'c_out')
syn = ClassicalRegister(2, 'syn')  # syndrome bits of the target block
qc = QuantumCircuit(ctrl, tgt, anc, c_out, syn)

# --- Encode logical |1> in control block: |1_L> = |111> ---
qc.x(ctrl[0])
qc.cx(ctrl[0], ctrl[1])
qc.cx(ctrl[0], ctrl[2])

# --- Encode logical |0> in target block: |0_L> = |000> (already |000>) ---
qc.barrier(label="encoded")

# --- Transversal logical CNOT ---
# Each physical qubit in ctrl controls the corresponding qubit in tgt
for i in range(3):
    qc.cx(ctrl[i], tgt[i])
qc.barrier(label="after logical CNOT")

# --- Syndrome measurement on target block ---
# The bit-flip code uses Z-type parity checks, so the data qubits act as
# controls and the ancilla as target; this leaves the data undisturbed.
# Ancilla anc[2] measures parity of tgt[0] ^ tgt[1]
# Ancilla anc[3] measures parity of tgt[1] ^ tgt[2]
qc.cx(tgt[0], anc[2])
qc.cx(tgt[1], anc[2])
qc.cx(tgt[1], anc[3])
qc.cx(tgt[2], anc[3])
qc.measure(anc[2], syn[0])
qc.measure(anc[3], syn[1])

# --- Measure target data qubits to verify logical state ---
qc.measure(tgt, c_out)

print(qc.draw(output='text', fold=90))

# Simulate
sim = AerSimulator()
result = sim.run(qc, shots=1024).result()
counts = result.get_counts()

print("\nMeasurement outcomes (target block):")
for outcome, count in sorted(counts.items(), key=lambda x: -x[1]):
    # Keys list the classical registers separated by a space, last-added first
    syndrome, data = outcome.split()
    print(f"  data={data} syndrome={syndrome}: {count}")

print("\nExpected: all shots should show '111' (logical |1>) with syndrome '00'")
print("A logical CNOT on |1_L>|0_L> produces |1_L>|1_L>.")
```

The target block starts as |000> (logical |0>). After the transversal CNOT controlled on logical |1>, the target should be |111> (logical |1>). All 1024 shots should read 111, confirming that the transversal gate worked correctly with no errors injected.
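To see what happens when an error is injected, a classical bit-level sketch is enough here, because every state involved is a computational basis state. It flips one control qubit before the transversal CNOT, lets the error copy onto the target block, and then repairs each block from its two parity checks. The lookup table `CORRECTION` and the function names are illustrative assumptions. This is a plain-Python model and not a Qiskit simulation.

```python
def transversal_cnot(ctrl, tgt):
    """Bitwise CNOT: target bit i is XORed with control bit i."""
    return [t ^ c for c, t in zip(ctrl, tgt)]


def syndrome(block):
    """Parity checks (q0 ^ q1, q1 ^ q2) of the 3-qubit bit-flip code."""
    return (block[0] ^ block[1], block[1] ^ block[2])


# Which qubit to flip for each syndrome; (0, 0) means no error detected.
CORRECTION = {(0, 0): None, (1, 0): 0, (1, 1): 1, (0, 1): 2}


def correct(block):
    """Apply the single-qubit correction indicated by the syndrome."""
    block = list(block)
    flip = CORRECTION[syndrome(block)]
    if flip is not None:
        block[flip] ^= 1
    return block


ctrl = [1, 1, 1]  # logical |1>
tgt = [0, 0, 0]   # logical |0>

ctrl[1] ^= 1      # inject a single bit flip on ctrl[1] before the gate
tgt = transversal_cnot(ctrl, tgt)

print("before correction:", ctrl, tgt, "target syndrome:", syndrome(tgt))
print("after correction: ", correct(ctrl), correct(tgt))
```

The single fault shows up as one flipped bit in each block, never two in the same block, so both blocks decode back to logical |1>.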

Resource Overhead and the Path to Fault-Tolerant Advantage

Current NISQ devices operate with physical error rates at or near the fault-tolerance threshold (~1% for surface codes). Sitting at this boundary is not enough: practical fault-tolerant computation requires error rates well below threshold to achieve useful suppression at moderate code distances. Crossing into the practical fault-tolerant regime requires:

- Physical error rates well below threshold (targeting sub-0.1% two-qubit gate error for efficient fault tolerance, roughly 10x below the ~1% threshold).

- Enough physical qubits to encode logical qubits at sufficient code distance. A d=7 surface code requires 2*7^2 - 1 = 97 physical qubits per logical qubit.

- Fast syndrome measurement with classical decoding completing faster than the next round begins (real-time decoding).

- T gate factories operating in parallel with the main computation.
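The first two requirements interact, and a rough sketch shows how. It uses a commonly quoted heuristic for surface-code scaling, p_L ~ A * (p / p_th)^((d + 1) / 2). The prefactor A = 0.1, the threshold p_th = 1%, and the target logical error rate of 10^-9 are all assumptions chosen for illustration, not measured values, and the function names are hypothetical.

```python
def logical_error_rate(p, d, p_th=1e-2, prefactor=0.1):
    """Heuristic logical error rate per round for a distance-d surface code."""
    return prefactor * (p / p_th) ** ((d + 1) / 2)


def min_distance(p, target, max_d=101):
    """Smallest odd distance whose heuristic error rate meets `target`."""
    for d in range(3, max_d + 1, 2):
        if logical_error_rate(p, d) <= target:
            return d
    return None  # target not reachable within max_d under this model


for p in (5e-3, 2e-3, 5e-4):
    d = min_distance(p, target=1e-9)
    qubits = None if d is None else 2 * d * d - 1
    print(f"p={p:.0e}  distance={d}  physical qubits per logical qubit={qubits}")
```

Under this model, lowering the physical error rate shrinks the required distance, and the qubit count per logical qubit falls quadratically with it. That is why sub-threshold margins matter as much as raw qubit numbers.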

Conservative estimates place fault-tolerant quantum advantage in chemistry and optimization at 1-10 million physical qubits. Google’s Willow (105 qubits) and IBM’s Heron series are early demonstrations of below-threshold operation at small scale. The engineering roadmap to millions of qubits involves new modular architectures, cryogenic interconnects, and co-designed classical control stacks.

The fault-tolerant era is not a distant abstraction. It is an engineering program with specific milestones, and understanding the gate-level resource requirements is essential context for evaluating quantum hardware progress.
