Quantum Monte Carlo for Options Pricing

Learn how quantum amplitude estimation delivers a quadratic speedup over classical Monte Carlo for pricing European call options, using Qiskit Finance's built-in circuits and AerSimulator.

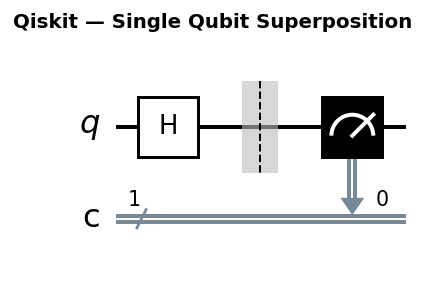

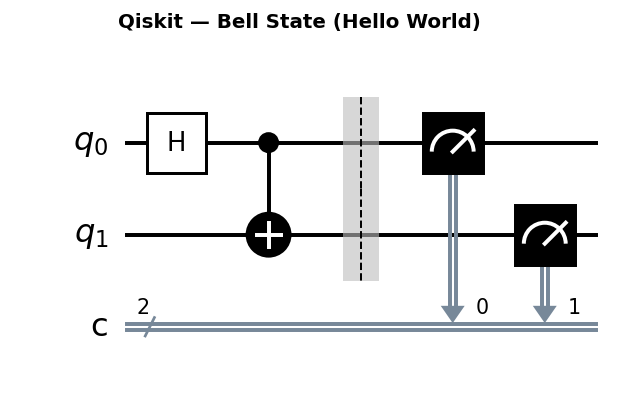

Circuit diagrams

Why Finance Cares About Monte Carlo

Options pricing is a cornerstone of quantitative finance. A European call option gives the holder the right, but not the obligation, to buy an asset at a fixed strike price K at expiration. Its fair value today depends on the probability-weighted average of all possible future asset prices, discounted to the present.

The standard approach is Monte Carlo simulation: sample thousands of random paths for the underlying asset price, compute the payoff on each path, and average the results. The payoff for a European call is max(S_T - K, 0) where S_T is the asset price at maturity.

Classical Monte Carlo has a well-known limitation: to halve the estimation error, you must quadruple the number of samples. More precisely, the root-mean-square error scales as O(1 / sqrt(N)), which means achieving accuracy epsilon requires O(1 / epsilon^2) samples. For real-time risk management systems repricing thousands of instruments simultaneously, this cost is substantial.

The Quantum Speedup

Quantum amplitude estimation (QAE), introduced by Brassard, Hoyer, Mosca, and Tapp, achieves the same accuracy with only O(1 / epsilon) circuit evaluations. This is a genuine quadratic speedup in query complexity. Instead of estimating an expectation by repeated random sampling, QAE encodes the probability distribution into a quantum state and uses quantum phase estimation to read out the amplitude of the target outcome.

The key insight: if you can prepare a quantum state where the amplitude of a marked basis state encodes the quantity of interest (here, the expected payoff), then QAE extracts that amplitude with provably fewer queries to the circuit than any classical estimator can achieve with the equivalent random number generator.

Installing Qiskit Finance

pip install qiskit qiskit-finance qiskit-aer qiskit-algorithms scipy matplotlib

Qiskit Finance provides ready-made distribution loaders and payoff circuits. Qiskit Aer provides the statevector and QASM simulators.

The Black-Scholes Model from First Principles

Before building quantum circuits, we need to understand the mathematical model that defines our pricing problem.

Under the risk-neutral measure, the stock price at maturity follows a geometric Brownian motion. The solution to the stochastic differential equation dS = r*S*dt + sigma*S*dW gives the terminal price:

S_T = S_0 * exp((r - sigma^2 / 2) * T + sigma * sqrt(T) * Z)

where Z ~ N(0,1) is a standard normal random variable, r is the risk-free interest rate, sigma is the annualized volatility, and T is the time to maturity. Taking the logarithm of both sides:

log(S_T / S_0) ~ N(mu_drift, sigma_dist^2)

where mu_drift = (r - sigma^2/2) * T and sigma_dist = sigma * sqrt(T). This means the log-return follows a normal distribution, and therefore S_T itself follows a log-normal distribution.

The Black-Scholes analytical formula for a European call option price is:

C = S_0 * N(d1) - K * exp(-r * T) * N(d2)

where:

d1 = (log(S_0 / K) + (r + sigma^2/2) * T) / (sigma * sqrt(T))d2 = d1 - sigma * sqrt(T)N(x)is the cumulative distribution function of the standard normal

Let us plot the log-normal distribution and compute the Black-Scholes price:

import numpy as np

import matplotlib.pyplot as plt

from scipy.stats import norm, lognorm

# Parameters for the underlying asset

S0 = 2.0 # current stock price

vol = 0.4 # annualized volatility

r = 0.05 # risk-free rate

T = 40 / 365 # time to maturity (40 trading days)

K = 1.896 # strike price (approximately at the money)

# Log-normal distribution parameters

mu = (r - 0.5 * vol ** 2) * T + np.log(S0)

sigma_dist = vol * np.sqrt(T)

# Black-Scholes analytical price

d1 = (np.log(S0 / K) + (r + 0.5 * vol ** 2) * T) / (vol * np.sqrt(T))

d2 = d1 - vol * np.sqrt(T)

bs_price = S0 * norm.cdf(d1) - K * np.exp(-r * T) * norm.cdf(d2)

print(f"Black-Scholes analytical price: ${bs_price:.6f}")

# Plot the log-normal PDF for S_T

s_values = np.linspace(0.5, 4.0, 500)

pdf_values = lognorm.pdf(s_values, s=sigma_dist, scale=np.exp(mu))

plt.figure(figsize=(10, 5))

plt.plot(s_values, pdf_values, 'b-', linewidth=2, label='Log-normal PDF of $S_T$')

plt.axvline(x=K, color='r', linestyle='--', linewidth=1.5, label=f'Strike K = {K}')

plt.fill_between(s_values, pdf_values, where=(s_values > K), alpha=0.3, color='green',

label='In-the-money region')

plt.xlabel('Stock Price at Maturity ($S_T$)')

plt.ylabel('Probability Density')

plt.title('Log-Normal Distribution of Terminal Stock Price')

plt.legend()

plt.grid(True, alpha=0.3)

plt.tight_layout()

plt.savefig('lognormal_distribution.png', dpi=150)

plt.show()

The green shaded region represents states where the option finishes in the money (S_T > K). The expected payoff is the integral of (S_T - K) * f(S_T) over this region, discounted by exp(-r*T).

Classical Monte Carlo Baseline

Before diving into the quantum approach, let us implement a classical Monte Carlo pricer from scratch. This gives us a concrete baseline for comparison.

import numpy as np

from scipy.stats import norm

# Parameters (same as above)

S0 = 2.0

vol = 0.4

r = 0.05

T = 40 / 365

K = 1.896

# Black-Scholes analytical price for validation

d1 = (np.log(S0 / K) + (r + 0.5 * vol ** 2) * T) / (vol * np.sqrt(T))

d2 = d1 - vol * np.sqrt(T)

bs_price = S0 * norm.cdf(d1) - K * np.exp(-r * T) * norm.cdf(d2)

np.random.seed(42)

print(f"{'N':>8} | {'MC Price':>10} | {'95% CI Width':>12} | {'Error vs BS':>12}")

print("-" * 52)

for N in [100, 1_000, 10_000, 100_000, 1_000_000]:

# Draw N samples from the standard normal distribution

Z = np.random.standard_normal(N)

# Simulate terminal stock prices using the exact log-normal formula

S_T = S0 * np.exp((r - 0.5 * vol ** 2) * T + vol * np.sqrt(T) * Z)

# Compute the payoff for each sample

payoffs = np.maximum(S_T - K, 0)

# Discount to present value

discounted_payoffs = np.exp(-r * T) * payoffs

# Monte Carlo estimate: sample mean

mc_price = np.mean(discounted_payoffs)

# Standard error of the mean

std_error = np.std(discounted_payoffs, ddof=1) / np.sqrt(N)

# 95% confidence interval

ci_low = mc_price - 1.96 * std_error

ci_high = mc_price + 1.96 * std_error

ci_width = ci_high - ci_low

error = abs(mc_price - bs_price)

print(f"{N:>8} | ${mc_price:>8.4f} | ${ci_width:>10.6f} | ${error:>10.6f}")

print(f"\nBlack-Scholes: ${bs_price:.6f}")

print(f"\nCI width scaling: 1/sqrt(N)")

print(f" N=100 -> N=10000: ratio = {np.sqrt(10000/100):.1f}x more samples, "

f"CI width should shrink ~{np.sqrt(100/10000):.2f}x")

The confidence interval width shrinks proportionally to 1/sqrt(N). Achieving 10x better accuracy requires 100x more samples. This is the fundamental bottleneck that quantum amplitude estimation addresses.

Amplitude Estimation Theory

Quantum amplitude estimation turns a probability estimation problem into a phase estimation problem. Here is the mathematical framework.

The Setup

Suppose we have a unitary operator A acting on (n+1) qubits such that:

A|0> = sqrt(a)|psi_1>|1> + sqrt(1-a)|psi_0>|0>

where the last qubit is the “objective” qubit. The value a is the quantity we want to estimate (in our case, the normalized expected payoff). We can write a = sin^2(theta_a) where theta_a = arcsin(sqrt(a)).

The Grover Operator

QAE constructs the Grover operator G from two reflections:

G = A * (I - 2|0><0|) * A^dagger * (I - 2|psi_1><psi_1| tensor |1><1|)

The first reflection (I - 2|psi_1><psi_1| tensor |1><1|) flips the sign of the “good” states (where the objective qubit is |1>). The second part A * (I - 2|0><0|) * A^dagger reflects about the state A|0>.

The eigenvalues of G are exp(+/- 2i * theta_a). This is exactly the same structure as in Grover’s search, but here we use it for estimation rather than amplification.

Phase Estimation on G

Canonical QAE applies quantum phase estimation (QPE) to the Grover operator G using m additional “counting” qubits. QPE produces the state:

|k/2^m> (approximately)

where k * pi / 2^m is the closest multiple of pi/2^m to theta_a. Measuring the counting register gives k, from which we compute:

theta_estimate = k * pi / 2^m

a_estimate = sin^2(theta_estimate)

The estimation error satisfies:

|a - a_estimate| = O(1/2^m)

Since each application of QPE uses O(2^m) applications of G, and each G uses one call to A and one call to A^dagger, the total number of oracle calls is O(2^m) = O(1/epsilon). Compare this to classical Monte Carlo, which needs O(1/epsilon^2) samples. That is the quadratic speedup.

Loading a Log-Normal Distribution

Asset prices under the Black-Scholes model follow a log-normal distribution. Qiskit Finance encodes this distribution into the amplitudes of a quantum register using the LogNormalDistribution class.

import numpy as np

from qiskit_finance.circuit.library import LogNormalDistribution

# Parameters for the underlying asset

num_uncertainty_qubits = 3 # 2^3 = 8 discretization points

S0 = 2.0 # current stock price

vol = 0.4 # annualized volatility

r = 0.05 # risk-free rate

T = 40/365 # time to maturity (40 trading days)

# Log-normal parameters

mu = (r - 0.5 * vol**2) * T + np.log(S0)

sigma = vol * np.sqrt(T)

mean = np.exp(mu + sigma**2 / 2)

# Price bounds for the discretization

low = 0.0

high = 3.0 * mean

# Build the distribution circuit

uncertainty_model = LogNormalDistribution(

num_uncertainty_qubits,

mu=mu,

sigma=sigma,

bounds=(low, high)

)

print(f"Distribution circuit depth: {uncertainty_model.decompose().depth()}")

print(f"Price points: {uncertainty_model.values}")

The circuit prepares a superposition where each computational basis state corresponds to a discretized price, and the amplitude squared equals the probability of that price under the log-normal model.

Building a Distribution Loader Manually

To demystify what LogNormalDistribution does internally, let us build a simple 2-qubit distribution loader by hand. With 2 qubits, we encode 4 price points. The idea is to set the amplitudes so that |amplitude_i|^2 = p_i for each target probability p_i.

import numpy as np

from qiskit import QuantumCircuit

from qiskit_aer import AerSimulator

from scipy.stats import lognorm

# Discretize the log-normal distribution into 4 bins

num_qubits = 2

num_bins = 2 ** num_qubits # 4 bins

S0 = 2.0

vol = 0.4

r = 0.05

T = 40 / 365

mu = (r - 0.5 * vol ** 2) * T + np.log(S0)

sigma_dist = vol * np.sqrt(T)

low = 0.5

high = 3.5

bin_width = (high - low) / num_bins

bin_centers = [low + (i + 0.5) * bin_width for i in range(num_bins)]

# Compute target probabilities by integrating the log-normal PDF over each bin

target_probs = []

for i in range(num_bins):

bin_low = low + i * bin_width

bin_high = low + (i + 1) * bin_width

p = lognorm.cdf(bin_high, s=sigma_dist, scale=np.exp(mu)) - \

lognorm.cdf(bin_low, s=sigma_dist, scale=np.exp(mu))

target_probs.append(p)

# Normalize (probabilities may not sum to exactly 1 due to truncation)

target_probs = np.array(target_probs)

target_probs = target_probs / target_probs.sum()

print("Target probabilities:", target_probs)

print("Bin centers (prices):", bin_centers)

# Build the circuit using a binary tree of Ry rotations

# Step 1: The first qubit splits probability between |0x> and |1x>

# p(qubit_0 = 0) = p0 + p1, p(qubit_0 = 1) = p2 + p3

p_left = target_probs[0] + target_probs[1]

p_right = target_probs[2] + target_probs[3]

theta_0 = 2 * np.arcsin(np.sqrt(p_right))

# Step 2: Conditioned on qubit_0 = 0, split between |00> and |01>

# p(01 | qubit_0=0) = p1 / (p0 + p1)

if p_left > 1e-10:

theta_1_left = 2 * np.arcsin(np.sqrt(target_probs[1] / p_left))

else:

theta_1_left = 0.0

# Step 3: Conditioned on qubit_0 = 1, split between |10> and |11>

# p(11 | qubit_0=1) = p3 / (p2 + p3)

if p_right > 1e-10:

theta_1_right = 2 * np.arcsin(np.sqrt(target_probs[3] / p_right))

else:

theta_1_right = 0.0

# Construct the circuit

qc = QuantumCircuit(num_qubits)

# Ry on qubit 0 to set the top-level split

qc.ry(theta_0, 0)

# Controlled Ry on qubit 1, conditioned on qubit 0 = |0>

# Apply X to qubit 0, then CRy, then X again

qc.x(0)

qc.cry(theta_1_left, 0, 1)

qc.x(0)

# Controlled Ry on qubit 1, conditioned on qubit 0 = |1>

qc.cry(theta_1_right, 0, 1)

print("\nDistribution loader circuit:")

print(qc.draw("text"))

# Verify by running statevector simulation

from qiskit.quantum_info import Statevector

sv = Statevector.from_instruction(qc)

probs = sv.probabilities()

print("\nLoaded probabilities vs target:")

for i in range(num_bins):

print(f" |{i:02b}> = {probs[i]:.4f} (target: {target_probs[i]:.4f})")

This binary tree approach is the core technique behind LogNormalDistribution. For n qubits, it uses a tree of depth n with 2^n - 1 controlled rotations, each setting the conditional probability at one node in the tree.

The Grover Oracle for Options Pricing

The amplitude estimation circuit needs an oracle that marks “in-the-money” states. For options pricing, the oracle identifies basis states where the price exceeds the strike (S_T > K).

Here is how to build a simple comparator circuit for a 3-qubit price register. The comparator checks if the encoded price exceeds K and flips an ancilla qubit accordingly.

import numpy as np

from qiskit import QuantumCircuit, QuantumRegister, AncillaRegister

num_price_qubits = 3

num_prices = 2 ** num_price_qubits # 8 prices

# Define the price grid

low = 0.5

high = 3.5

prices = [low + (i + 0.5) * (high - low) / num_prices for i in range(num_prices)]

K = 1.896

# Determine which states are in the money

in_the_money = [1 if p > K else 0 for p in prices]

print("Prices:", [f"{p:.3f}" for p in prices])

print("In the money:", in_the_money)

print(f"States |{min(i for i, x in enumerate(in_the_money) if x):03b}> "

f"through |{num_prices-1:03b}> are in the money")

# Build a comparator: mark states >= threshold index

# For this example, find the threshold index

threshold_idx = min(i for i, x in enumerate(in_the_money) if x)

print(f"Threshold index: {threshold_idx} (binary: {threshold_idx:03b})")

# Simple approach: use multi-controlled X gates to flip ancilla

# for each in-the-money state

price_reg = QuantumRegister(num_price_qubits, 'price')

ancilla = QuantumRegister(1, 'objective')

qc = QuantumCircuit(price_reg, ancilla)

# For each in-the-money state, add a multi-controlled X

for i in range(num_prices):

if in_the_money[i]:

# Convert index to binary and apply X gates for 0-bits

binary = format(i, f'0{num_price_qubits}b')

# Flip qubits that should be |0> in this state

for bit_idx, bit in enumerate(reversed(binary)):

if bit == '0':

qc.x(price_reg[bit_idx])

# Multi-controlled X targeting the ancilla

qc.mcx(list(price_reg), ancilla[0])

# Uncompute the X gates

for bit_idx, bit in enumerate(reversed(binary)):

if bit == '0':

qc.x(price_reg[bit_idx])

print(f"\nComparator circuit depth: {qc.depth()}")

print(f"Comparator circuit gate count: {qc.size()}")

print(qc.draw("text"))

In practice, Qiskit Finance uses a more efficient comparator based on quantum arithmetic circuits (ripple-carry adders). The naive approach above uses O(2^n) multi-controlled gates for the in-the-money states, while the arithmetic comparator uses O(n) gates with O(n) ancillas. The EuropeanCallPricing class handles this automatically.

Building the Payoff Circuit

The payoff function max(S - K, 0) must be encoded as a quantum operation. Qiskit Finance provides EuropeanCallPricing, which combines the distribution loader with a comparator circuit and a linear payoff approximation.

from qiskit_finance.circuit.library import EuropeanCallPricing

strike_price = 1.896 # at-the-money approximately

rescaling_factor = 0.25 # controls linear approximation accuracy

european_call = EuropeanCallPricing(

num_state_qubits=num_uncertainty_qubits,

strike_price=strike_price,

rescaling_factor=rescaling_factor,

bounds=(low, high),

uncertainty_model=uncertainty_model

)

print(f"Total qubits needed: {european_call.num_qubits}")

european_call.decompose().draw("text")

Internally, EuropeanCallPricing uses a piecewise linear approximation to the payoff, encoded via controlled rotations on an ancilla qubit. The probability of measuring that ancilla in the |1> state encodes the normalized expected payoff.

Iterative vs Canonical Amplitude Estimation

Qiskit provides two main QAE variants, each with different tradeoffs.

Canonical QAE uses quantum phase estimation with m additional “counting” qubits. It builds a single deep circuit that applies the Grover operator 2^m - 1 times in superposition. The circuit depth scales as O(1/epsilon), but it produces the estimate in a single run.

Iterative QAE (IQAE) avoids the QPE overhead entirely. Instead of m extra qubits and one deep circuit, IQAE uses O(log(1/epsilon)) rounds. In each round, it applies the Grover operator a specific number of times (a “power” of G) and measures many shots to estimate the success probability. A classical algorithm then narrows the confidence interval for theta after each round.

The practical difference is significant:

| Property | Canonical QAE | Iterative QAE |

|---|---|---|

| Extra qubits | m counting qubits | 0 extra qubits |

| Max circuit depth | O(1/epsilon) | O(1/epsilon) per round |

| Number of rounds | 1 | O(log(1/epsilon)) |

| Total oracle calls | O(1/epsilon) | O(1/epsilon) |

| NISQ friendliness | Poor (very deep circuit) | Better (shorter per-round circuits) |

Both achieve the same O(1/epsilon) query complexity. The advantage of IQAE is that individual circuits are shallower, making it more practical on noisy hardware where deep circuits accumulate errors.

from qiskit_algorithms import (

IterativeAmplitudeEstimation,

AmplitudeEstimation,

EstimationProblem,

)

from qiskit_aer.primitives import Sampler

# Define the estimation problem (shared by both algorithms)

problem = EstimationProblem(

state_preparation=european_call,

objective_qubits=[european_call.num_qubits - 1],

)

sampler = Sampler()

# --- Canonical QAE ---

num_eval_qubits = 4 # m = 4 counting qubits, giving precision ~1/2^4

canonical_qae = AmplitudeEstimation(

num_eval_qubits=num_eval_qubits,

sampler=sampler,

)

canonical_result = canonical_qae.estimate(problem)

print("=== Canonical QAE ===")

print(f"Estimate: {canonical_result.estimation:.6f}")

print(f"Number of oracle queries: {canonical_result.num_oracle_queries}")

# --- Iterative QAE ---

epsilon_target = 0.01

alpha = 0.05

iae = IterativeAmplitudeEstimation(

epsilon_target=epsilon_target,

alpha=alpha,

sampler=sampler,

)

iae_result = iae.estimate(problem)

print("\n=== Iterative QAE ===")

print(f"Estimate: {iae_result.estimation:.6f}")

print(f"Confidence interval: [{iae_result.confidence_interval[0]:.6f}, "

f"{iae_result.confidence_interval[1]:.6f}]")

print(f"Number of oracle queries: {iae_result.num_oracle_queries}")

For NISQ-era experiments, prefer IQAE. For theoretical analysis and fault-tolerant projections, canonical QAE gives cleaner bounds.

Interpreting the Result

The raw amplitude estimate is a normalized value between 0 and 1. To recover the actual option price, rescale it back to the original price domain:

import numpy as np

# Rescale from [0, 1] to actual price domain

conf_int = np.array(iae_result.confidence_interval)

# The pricing circuit normalizes payoffs to [0, 1]; invert that

price_scale = (high - low)

estimated_price = iae_result.estimation * price_scale

conf_low = conf_int[0] * price_scale

conf_high = conf_int[1] * price_scale

print(f"Estimated option price: ${estimated_price:.4f}")

print(f"95% confidence interval: [${conf_low:.4f}, ${conf_high:.4f}]")

print(f"Total circuit evaluations used: {iae_result.num_oracle_queries}")

For reference, compute the Black-Scholes analytical price to validate:

from scipy.stats import norm

d1 = (np.log(S0 / strike_price) + (r + 0.5 * vol**2) * T) / (vol * np.sqrt(T))

d2 = d1 - vol * np.sqrt(T)

bs_price = S0 * norm.cdf(d1) - strike_price * np.exp(-r * T) * norm.cdf(d2)

print(f"Black-Scholes analytical price: ${bs_price:.4f}")

A well-tuned amplitude estimation run should match the analytical price to within the stated confidence interval.

European Put Option Pricing

The framework extends naturally to put options. A European put has payoff max(K - S_T, 0). There are two approaches.

Approach 1: Put-Call Parity

If you already have the call price from QAE, compute the put price using put-call parity:

C - P = S_0 - K * exp(-r * T)

Rearranging:

P = C - S_0 + K * exp(-r * T)

import numpy as np

from scipy.stats import norm

S0 = 2.0

vol = 0.4

r = 0.05

T = 40 / 365

K = 1.896

# Black-Scholes call and put prices for validation

d1 = (np.log(S0 / K) + (r + 0.5 * vol ** 2) * T) / (vol * np.sqrt(T))

d2 = d1 - vol * np.sqrt(T)

bs_call = S0 * norm.cdf(d1) - K * np.exp(-r * T) * norm.cdf(d2)

bs_put = K * np.exp(-r * T) * norm.cdf(-d2) - S0 * norm.cdf(-d1)

# Put from put-call parity using the QAE call estimate

qae_call_price = estimated_price # from previous QAE run

put_from_parity = qae_call_price - S0 + K * np.exp(-r * T)

print(f"QAE call price: ${qae_call_price:.4f}")

print(f"Put via put-call parity: ${put_from_parity:.4f}")

print(f"Black-Scholes put price: ${bs_put:.4f}")

# Verify put-call parity holds for Black-Scholes prices

parity_check = bs_call - bs_put - (S0 - K * np.exp(-r * T))

print(f"\nPut-call parity residual: {parity_check:.10f} (should be ~0)")

Approach 2: Direct QAE for the Put

You can also price the put directly by modifying the payoff circuit. Qiskit Finance’s EuropeanCallPricing can be adapted by reversing the comparator logic (mark states where S_T < K) and computing K - S_T for those states. The direct approach is useful when you do not already have a call price estimate.

from qiskit_finance.circuit.library import EuropeanCallPricing

# For a put, we can use the same framework but adjust the payoff

# by inverting the comparator and the linear function

# Alternatively, compute the put as: put_payoff = K - S_T for S_T < K

# This is equivalent to pricing a "reversed" call from K's perspective

# Using put-call parity is generally simpler and avoids building

# a separate circuit, so it is the recommended approach for puts

Sensitivity Analysis: Computing the Greeks

Options traders need more than just the price. The “Greeks” measure how the option price responds to changes in the underlying parameters. Quantum amplitude estimation can compute these via finite differences.

Delta: dC/dS_0

Delta measures the sensitivity of the option price to changes in the underlying stock price. The finite-difference approximation is:

Delta = (C(S_0 + dS) - C(S_0 - dS)) / (2 * dS)

import numpy as np

from scipy.stats import norm

from qiskit_finance.circuit.library import LogNormalDistribution, EuropeanCallPricing

from qiskit_algorithms import IterativeAmplitudeEstimation, EstimationProblem

from qiskit_aer.primitives import Sampler

S0 = 2.0

vol = 0.4

r = 0.05

T = 40 / 365

K = 1.896

num_uncertainty_qubits = 3

rescaling_factor = 0.25

dS = 0.1 # perturbation size

sampler = Sampler()

prices_for_delta = {}

for S0_shifted in [S0 + dS, S0 - dS]:

mu_shifted = (r - 0.5 * vol ** 2) * T + np.log(S0_shifted)

sigma_shifted = vol * np.sqrt(T)

mean_shifted = np.exp(mu_shifted + sigma_shifted ** 2 / 2)

low_shifted = 0.0

high_shifted = 3.0 * mean_shifted

dist = LogNormalDistribution(

num_uncertainty_qubits, mu=mu_shifted,

sigma=sigma_shifted, bounds=(low_shifted, high_shifted)

)

call_circuit = EuropeanCallPricing(

num_state_qubits=num_uncertainty_qubits,

strike_price=K,

rescaling_factor=rescaling_factor,

bounds=(low_shifted, high_shifted),

uncertainty_model=dist,

)

problem = EstimationProblem(

state_preparation=call_circuit,

objective_qubits=[call_circuit.num_qubits - 1],

)

iae = IterativeAmplitudeEstimation(

epsilon_target=0.01, alpha=0.05, sampler=sampler

)

result = iae.estimate(problem)

price_scale = high_shifted - low_shifted

prices_for_delta[S0_shifted] = result.estimation * price_scale

# Compute numerical Delta

qae_delta = (prices_for_delta[S0 + dS] - prices_for_delta[S0 - dS]) / (2 * dS)

# Black-Scholes analytical Delta = N(d1)

d1 = (np.log(S0 / K) + (r + 0.5 * vol ** 2) * T) / (vol * np.sqrt(T))

bs_delta = norm.cdf(d1)

print(f"QAE numerical Delta: {qae_delta:.4f}")

print(f"Black-Scholes Delta N(d1): {bs_delta:.4f}")

Vega: dC/dsigma

Vega measures the sensitivity of the option price to changes in volatility. The same finite-difference approach applies:

d_sigma = 0.01 # 1% volatility perturbation

prices_for_vega = {}

for vol_shifted in [vol + d_sigma, vol - d_sigma]:

mu_shifted = (r - 0.5 * vol_shifted ** 2) * T + np.log(S0)

sigma_shifted = vol_shifted * np.sqrt(T)

mean_shifted = np.exp(mu_shifted + sigma_shifted ** 2 / 2)

low_shifted = 0.0

high_shifted = 3.0 * mean_shifted

dist = LogNormalDistribution(

num_uncertainty_qubits, mu=mu_shifted,

sigma=sigma_shifted, bounds=(low_shifted, high_shifted)

)

call_circuit = EuropeanCallPricing(

num_state_qubits=num_uncertainty_qubits,

strike_price=K,

rescaling_factor=rescaling_factor,

bounds=(low_shifted, high_shifted),

uncertainty_model=dist,

)

problem = EstimationProblem(

state_preparation=call_circuit,

objective_qubits=[call_circuit.num_qubits - 1],

)

iae = IterativeAmplitudeEstimation(

epsilon_target=0.01, alpha=0.05, sampler=sampler

)

result = iae.estimate(problem)

price_scale = high_shifted - low_shifted

prices_for_vega[vol_shifted] = result.estimation * price_scale

qae_vega = (prices_for_vega[vol + d_sigma] - prices_for_vega[vol - d_sigma]) / (2 * d_sigma)

# Black-Scholes analytical Vega = S0 * sqrt(T) * N'(d1)

d1 = (np.log(S0 / K) + (r + 0.5 * vol ** 2) * T) / (vol * np.sqrt(T))

bs_vega = S0 * np.sqrt(T) * norm.pdf(d1)

print(f"\nQAE numerical Vega: {qae_vega:.4f}")

print(f"Black-Scholes Vega: {bs_vega:.4f}")

The finite-difference approach requires running QAE twice for each Greek. This doubles the quantum resource cost per sensitivity. Research into quantum gradient estimation may eventually provide more efficient alternatives, but for now, the finite-difference method is the standard approach.

Multi-Asset Basket Option

Real portfolios contain multiple correlated assets. A basket option pays max(w1*S1_T + w2*S2_T - K, 0) where w1 and w2 are weights, and S1, S2 follow correlated log-normal processes.

Classical Monte Carlo for Correlated Assets

For correlated assets, we use the Cholesky decomposition of the correlation matrix to generate correlated normal samples:

import numpy as np

from scipy.stats import norm

# Two correlated assets

S1_0 = 2.0

S2_0 = 2.0

vol1 = 0.3

vol2 = 0.4

r = 0.05

T = 40 / 365

rho = 0.5 # correlation between the two assets

K = 2.0 # basket strike

w1, w2 = 0.5, 0.5 # equal weights

# Cholesky decomposition of the correlation matrix

# [[1, rho], [rho, 1]] = L * L^T

# L = [[1, 0], [rho, sqrt(1-rho^2)]]

L = np.array([[1.0, 0.0],

[rho, np.sqrt(1 - rho ** 2)]])

np.random.seed(42)

N = 100_000

# Generate independent standard normals

Z_independent = np.random.standard_normal((2, N))

# Correlate them using Cholesky

Z_correlated = L @ Z_independent

# Simulate terminal prices

S1_T = S1_0 * np.exp((r - 0.5 * vol1 ** 2) * T + vol1 * np.sqrt(T) * Z_correlated[0])

S2_T = S2_0 * np.exp((r - 0.5 * vol2 ** 2) * T + vol2 * np.sqrt(T) * Z_correlated[1])

# Basket payoff

basket_value = w1 * S1_T + w2 * S2_T

payoffs = np.maximum(basket_value - K, 0)

basket_price = np.exp(-r * T) * np.mean(payoffs)

print(f"Classical MC basket option price: ${basket_price:.4f}")

print(f"Correlation between simulated log-returns: "

f"{np.corrcoef(np.log(S1_T/S1_0), np.log(S2_T/S2_0))[0,1]:.3f} "

f"(target: {rho})")

Quantum Approach for Basket Options

On the quantum side, encoding a multi-asset distribution requires qubits for each asset. For 2 assets with n qubits each, we need 2n qubits for the distribution alone, plus ancillas for the comparator and payoff circuits.

# Qiskit Finance provides GaussianConditionalIndependenceModel

# for modeling correlated defaults (credit risk).

# For basket options, we construct the multi-asset distribution

# using correlated Ry rotations on separate registers.

# Conceptual outline (the full implementation requires careful

# circuit construction):

#

# 1. Allocate n qubits for asset 1, n qubits for asset 2

# 2. Load the marginal distribution for asset 1 using Ry tree

# 3. Load the conditional distribution for asset 2 given asset 1

# using controlled Ry rotations (this encodes the correlation)

# 4. Combine weighted sum w1*S1 + w2*S2 using quantum arithmetic

# 5. Compare the sum against K using a comparator circuit

# 6. Apply amplitude estimation as before

# Qubit count for 2 assets with n=3 qubits each:

n_per_asset = 3

n_assets = 2

n_distribution = n_per_asset * n_assets # 6 qubits

n_arithmetic = n_per_asset + 1 # for weighted sum

n_comparator = 1 # objective qubit

n_ancilla = n_per_asset # work qubits

total_qubits = n_distribution + n_arithmetic + n_comparator + n_ancilla

print(f"Estimated qubit count for 2-asset basket: {total_qubits}")

print(f"For 5 assets with 5 qubits each: ~{5*5 + 6 + 1 + 5} qubits")

print(f"For 10 assets with 5 qubits each: ~{10*5 + 6 + 1 + 5} qubits")

The qubit count scales linearly with the number of assets, but the circuit depth grows significantly because the conditional distribution loading requires controlled operations across asset registers. This makes large basket options one of the most challenging applications for near-term quantum hardware.

Noise Impact and Error Mitigation

On real quantum hardware, gate errors corrupt the amplitude estimation result. Let us simulate this effect using Qiskit Aer’s noise model.

import numpy as np

from qiskit_aer import AerSimulator

from qiskit_aer.noise import NoiseModel, depolarizing_error

from qiskit_aer.primitives import Sampler

from qiskit_algorithms import IterativeAmplitudeEstimation, EstimationProblem

from qiskit_finance.circuit.library import LogNormalDistribution, EuropeanCallPricing

# Rebuild the pricing circuit

S0, vol, r, T, K = 2.0, 0.4, 0.05, 40/365, 1.896

num_uncertainty_qubits = 3

mu = (r - 0.5 * vol**2) * T + np.log(S0)

sigma = vol * np.sqrt(T)

mean = np.exp(mu + sigma**2 / 2)

low, high = 0.0, 3.0 * mean

uncertainty_model = LogNormalDistribution(

num_uncertainty_qubits, mu=mu, sigma=sigma, bounds=(low, high)

)

european_call = EuropeanCallPricing(

num_state_qubits=num_uncertainty_qubits,

strike_price=K, rescaling_factor=0.25,

bounds=(low, high), uncertainty_model=uncertainty_model,

)

problem = EstimationProblem(

state_preparation=european_call,

objective_qubits=[european_call.num_qubits - 1],

)

# --- Noiseless run ---

sampler_ideal = Sampler()

iae_ideal = IterativeAmplitudeEstimation(

epsilon_target=0.01, alpha=0.05, sampler=sampler_ideal

)

result_ideal = iae_ideal.estimate(problem)

price_ideal = result_ideal.estimation * (high - low)

# --- Noisy run (1% depolarizing error on 2-qubit gates) ---

noise_model = NoiseModel()

error_2q = depolarizing_error(0.01, 2) # 1% error rate

error_1q = depolarizing_error(0.001, 1) # 0.1% error rate

noise_model.add_all_qubit_quantum_error(error_2q, ['cx', 'cz'])

noise_model.add_all_qubit_quantum_error(error_1q, ['u1', 'u2', 'u3', 'ry', 'rx', 'rz'])

noisy_backend = AerSimulator(noise_model=noise_model)

sampler_noisy = Sampler(backend=noisy_backend)

iae_noisy = IterativeAmplitudeEstimation(

epsilon_target=0.01, alpha=0.05, sampler=sampler_noisy

)

result_noisy = iae_noisy.estimate(problem)

price_noisy = result_noisy.estimation * (high - low)

# --- Compare ---

from scipy.stats import norm

d1 = (np.log(S0/K) + (r + 0.5*vol**2)*T) / (vol*np.sqrt(T))

d2 = d1 - vol*np.sqrt(T)

bs_price = S0 * norm.cdf(d1) - K * np.exp(-r*T) * norm.cdf(d2)

print(f"Black-Scholes price: ${bs_price:.4f}")

print(f"Noiseless QAE price: ${price_ideal:.4f}")

print(f"Noisy QAE price: ${price_noisy:.4f}")

print(f"Noise-induced bias: ${abs(price_noisy - price_ideal):.4f}")

Depolarizing noise systematically biases the amplitude estimate toward 0.5 (the maximally mixed state). This means noisy QAE tends to either overestimate small amplitudes or underestimate large ones. The bias grows with circuit depth, which is why shorter-depth IQAE circuits are preferable on noisy hardware.

Zero-Noise Extrapolation (ZNE)

Zero-noise extrapolation is a leading error mitigation technique. The idea is to run the circuit at multiple intentionally increased noise levels, then extrapolate back to the zero-noise limit. Qiskit Runtime supports this through the resilience_level parameter, and the open-source library mitiq provides a standalone implementation.

# Conceptual ZNE implementation using noise scaling

# (In production, use mitiq or Qiskit Runtime's resilience_level=2)

# Step 1: Run at noise scale factors 1, 2, 3

# Noise scaling is achieved by replacing each CNOT with 3 CNOTs (scale=3)

# or 5 CNOTs (scale=5), which increases the effective error rate

# Step 2: Fit a polynomial to (scale_factor, estimate) pairs

# Step 3: Extrapolate to scale_factor = 0

# Example with manual noise scaling:

scale_factors = [1.0, 2.0, 3.0]

noisy_estimates = []

for scale in scale_factors:

noise_scaled = NoiseModel()

error_2q_scaled = depolarizing_error(0.01 * scale, 2)

error_1q_scaled = depolarizing_error(0.001 * scale, 1)

noise_scaled.add_all_qubit_quantum_error(error_2q_scaled, ['cx', 'cz'])

noise_scaled.add_all_qubit_quantum_error(error_1q_scaled,

['u1', 'u2', 'u3', 'ry', 'rx', 'rz'])

backend_scaled = AerSimulator(noise_model=noise_scaled)

sampler_scaled = Sampler(backend=backend_scaled)

iae_scaled = IterativeAmplitudeEstimation(

epsilon_target=0.01, alpha=0.05, sampler=sampler_scaled

)

result_scaled = iae_scaled.estimate(problem)

noisy_estimates.append(result_scaled.estimation * (high - low))

# Linear extrapolation to zero noise (Richardson extrapolation)

# For scale factors [1, 2, 3], fit a quadratic and evaluate at 0

coeffs = np.polyfit(scale_factors, noisy_estimates, 2)

zne_estimate = np.polyval(coeffs, 0.0)

print(f"\nZero-Noise Extrapolation:")

for s, e in zip(scale_factors, noisy_estimates):

print(f" Scale {s:.0f}x: ${e:.4f}")

print(f" ZNE estimate: ${zne_estimate:.4f}")

print(f" Black-Scholes: ${bs_price:.4f}")

print(f" ZNE error: ${abs(zne_estimate - bs_price):.4f}")

ZNE partially corrects the noise bias but introduces additional variance from the extrapolation. It works best when the noise is well-characterized and the extrapolation model (linear, quadratic) matches the actual noise scaling behavior.

Practical Timeline and Hardware Requirements

The quadratic speedup from O(1/epsilon^2) to O(1/epsilon) is real but requires fault-tolerant hardware to deliver practical value. Here is a concrete analysis of the hardware requirements.

What “Practical Advantage” Means

In finance, pricing accuracy of 1 basis point (0.01%) is often the minimum acceptable threshold. For a $100 option, this means accuracy to $0.01.

Scaling Analysis

To achieve accuracy epsilon with quantum amplitude estimation:

-

Oracle calls needed:

N_oracle = O(1/epsilon). Forepsilon = 0.0001(1 basis point), we need roughly 10,000 oracle calls. -

Circuit depth per oracle call: Each application of the Grover operator G has depth proportional to the state preparation circuit A. For a 10-qubit distribution loader with arithmetic comparator, the depth of A is roughly 100-200 gates. Each Grover iteration applies A, A^dagger, and two reflections, giving depth approximately 400-500 per iteration.

-

Maximum circuit depth: For IQAE, the deepest circuit in the final round applies G roughly

N_oracle / log(N_oracle)times. WithN_oracle = 10,000, the deepest circuit has depth approximately10,000 / 13 * 500 = 385,000gates. -

Error budget: For a circuit of depth D on Q qubits, the total number of locations where an error can occur is approximately

D * Q. For the circuit to produce a meaningful result, the total error probability must be small:

gate_error_rate * D * Q < 1

With D = 385,000 and Q = 15 (distribution + ancillas), we need:

gate_error_rate < 1 / (385,000 * 15) = 1.7 * 10^-7

This is far below current hardware capabilities (error rates of 10^-3 to 10^-2).

Fault-Tolerant Requirements

With quantum error correction, the effective error rate per logical gate can be made arbitrarily small, at the cost of physical qubit overhead. Using the surface code with physical error rate p = 10^-3:

- Code distance needed:

d ~ log(D * Q) / log(1/p) ~ 20-30 - Physical qubits per logical qubit:

2d^2 ~ 800-1800 - Total physical qubits:

15 logical * 1000 physical/logical = 15,000

import numpy as np

# Hardware requirement calculator

epsilon = 0.0001 # 1 basis point

n_oracle = int(1 / epsilon)

n_qubits_logical = 15

depth_per_grover = 500

max_grover_power = n_oracle # worst case for canonical QAE

total_depth = max_grover_power * depth_per_grover

error_locations = total_depth * n_qubits_logical

required_gate_error = 1.0 / error_locations

print(f"Target accuracy: {epsilon} ({epsilon*10000:.0f} basis points)")

print(f"Oracle calls needed: {n_oracle:,}")

print(f"Max circuit depth: {total_depth:,} gates")

print(f"Required gate error rate (no QEC): {required_gate_error:.2e}")

print(f"Current best gate error rates: ~10^-3 to 10^-4")

print(f"Gap: {int(np.log10(required_gate_error / 1e-3)):.0f} orders of magnitude")

# With surface code error correction

physical_error_rate = 1e-3

code_distance = int(np.ceil(np.log(error_locations) / np.log(1 / physical_error_rate)))

physical_per_logical = 2 * code_distance ** 2

total_physical = n_qubits_logical * physical_per_logical

print(f"\nWith surface code (p_physical = {physical_error_rate}):")

print(f"Code distance needed: ~{code_distance}")

print(f"Physical qubits per logical qubit: ~{physical_per_logical}")

print(f"Total physical qubits: ~{total_physical:,}")

print(f"\nTimeline estimate: fault-tolerant hardware at this scale")

print(f"is projected for 2030-2035 by major hardware vendors.")

The bottom line: quantum advantage for options pricing requires fault-tolerant quantum computers with thousands of physical qubits running error-corrected logical operations. Current NISQ devices are useful for algorithm development and proof-of-concept demonstrations, but they cannot yet outperform classical Monte Carlo for production pricing.

Common Mistakes

Newcomers to quantum finance frequently encounter these pitfalls. Avoiding them will save you significant debugging time.

1. Forgetting to Rescale the Output

QAE returns a raw amplitude estimate in the range [0, 1]. The actual option price requires multiplying by the price range (high - low) and potentially applying additional normalization factors from the rescaling parameter. A common mistake is to report the raw amplitude as the option price. The result will be a small number that looks vaguely plausible (say, 0.03) but is actually a normalized probability, not a dollar value.

Always apply the rescaling:

# Wrong: reporting raw amplitude

wrong_price = result.estimation # This is in [0, 1], NOT dollars

# Correct: rescale to the original domain

correct_price = result.estimation * (high - low)

2. Using Too Few Uncertainty Qubits

With 3 qubits, you get only 8 price points to approximate a continuous distribution. The discretization error can be larger than the QAE estimation error, making the entire exercise pointless. For financial applications where accuracy matters, use 5-7 uncertainty qubits (32-128 price points). However, each additional qubit roughly doubles the circuit depth for the distribution loader, so there is a direct tradeoff between discretization accuracy and circuit feasibility.

3. Not Validating Against Black-Scholes First

Always compute the Black-Scholes analytical price and verify that your QAE estimate falls within its confidence interval. If they disagree, the most likely causes are: incorrect distribution parameters (mu, sigma), wrong price bounds (low, high) that truncate significant probability mass, a bug in the payoff circuit, or incorrect rescaling. Never claim a quantum speedup before confirming the quantum result is actually correct.

4. Ignoring NISQ Overhead

On current hardware, noise corrections (error mitigation, increased shot counts, circuit repetitions for ZNE) add 10-100x overhead that erases the theoretical quantum speedup. A classical Monte Carlo simulation with 1 million samples runs in milliseconds on a laptop. The equivalent QAE circuit, even simulated classically, takes longer due to circuit compilation and shot overhead. The quantum advantage is currently theoretical and only achievable on fault-tolerant hardware.

5. Confusing N_oracle with Total Shots

IQAE operates in multiple rounds. Each round runs the circuit with many measurement shots. The total number of circuit executions (shots across all rounds) is much larger than N_oracle (the number of Grover operator applications counted across the deepest circuits). When comparing quantum versus classical sample complexity, the correct comparison is oracle calls versus random samples. Reporting total shots instead of oracle calls makes the quantum algorithm look worse than it actually is.

# After running IQAE, report the correct metric:

print(f"Oracle queries (for complexity comparison): {result.num_oracle_queries}")

# Do NOT compare total shots to classical N; compare oracle queries to classical N

Next Steps

The same framework extends to other financial instruments. Basket options require multi-asset distributions. Credit risk models use Gaussian copulas. VaR (Value at Risk) calculations are a natural fit because they involve tail probability estimation: exactly the kind of amplitude extraction QAE performs.

Explore qiskit_finance.circuit.library for GaussianConditionalIndependenceModel (credit risk) and FixedIncomePricing (bond pricing) to see how the same QAE workflow adapts to other products.

Was this tutorial helpful?