Error Suppression with Qiskit Runtime

Use Qiskit Runtime's built-in error suppression and mitigation techniques, including dynamical decoupling, TREX readout error mitigation, and resilience levels, to improve results on real hardware.

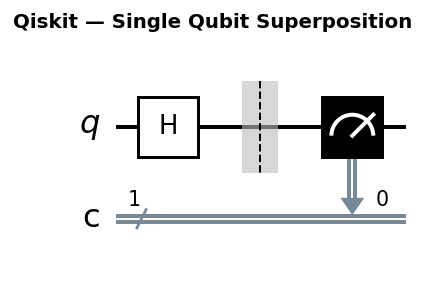

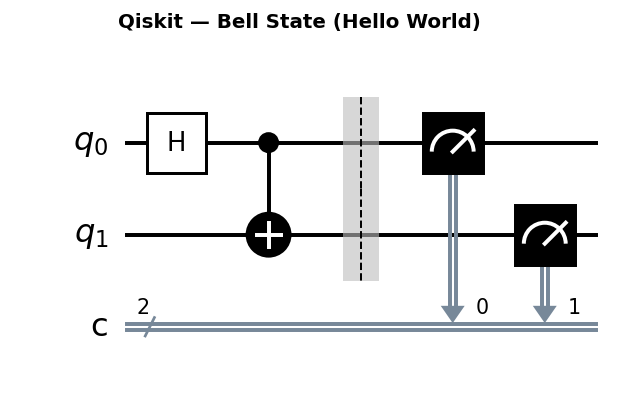

Circuit diagrams

Error Suppression vs Error Mitigation

Running circuits on real quantum hardware introduces errors from decoherence, gate imperfections, and measurement noise. Qiskit Runtime provides two complementary strategies for dealing with these errors.

Error suppression acts before or during circuit execution to reduce the number of errors that occur. Techniques include dynamical decoupling (inserting pulse sequences during idle periods) and gate twirling (randomizing coherent errors into a stochastic Pauli channel).

Error mitigation acts after execution by post-processing the noisy results to estimate the ideal noiseless output. Techniques include readout error mitigation, zero-noise extrapolation (ZNE), and probabilistic error cancellation (PEC).

This tutorial covers both sides in depth: the physics of why each technique works, the API surface for configuring them, and practical decision-making for real hardware runs.

Understanding the Noise You Are Fighting

Before choosing a suppression strategy, you need to understand the noise sources and their magnitudes on current hardware. Every technique in this tutorial targets a specific physical mechanism, and knowing the numbers helps you predict which techniques will matter most for your circuit.

Decoherence: T1 and T2

Every superconducting qubit has two fundamental timescales that limit how long it can hold quantum information.

T1 (energy relaxation) is the time for a qubit in the |1> state to decay to |0> by releasing energy to its environment. On IBM Eagle and Heron processors, T1 ranges from 100 to 500 microseconds depending on the qubit.

T2 (dephasing) is the time for a superposition state to lose its phase coherence. T2 is always less than or equal to 2*T1 (and usually significantly less). Typical values on current IBM hardware range from 50 to 300 microseconds.

Here is where these numbers become concrete. A single-qubit gate (like an X or SX gate) takes approximately 35 nanoseconds. A two-qubit CX (CNOT) gate takes 300 to 500 nanoseconds. Consider a circuit with 20 CX gates executed sequentially at 400 ns each:

Total circuit time = 20 * 400 ns = 8,000 ns = 8 microseconds

Fraction of T1 consumed = 8 / 100 = 8% (using T1 = 100 us, worst case)

Fraction of T2 consumed = 8 / 50 = 16% (using T2 = 50 us, worst case)

An 8% T1 loss means roughly 8% of your |1> population decays to |0> during execution. A 16% T2 loss means your superposition phases have degraded by about 16%. These are significant error sources, and they get worse for deeper circuits. A 100-gate circuit at the same rate would consume 40 microseconds, eating 40% of T1 and 80% of T2. At that point, the quantum state is mostly gone.
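The arithmetic above can be wrapped in a small helper to check other circuit depths. This is a minimal sketch: the function name `decoherence_budget` and its parameters are illustrative, not part of any Qiskit API, and the exponential-decay estimate assumes simple T1 relaxation with no other error sources.

```python
import math


def decoherence_budget(n_gates, gate_time_ns, t1_us, t2_us):
    """Return the circuit duration and the fraction of T1 and T2 it consumes."""
    duration_us = n_gates * gate_time_ns / 1000.0
    return {
        "duration_us": duration_us,
        "t1_fraction": duration_us / t1_us,
        "t2_fraction": duration_us / t2_us,
        # Exponential-decay estimate of the |1> population lost to relaxation
        "t1_decay_prob": 1.0 - math.exp(-duration_us / t1_us),
    }


# The two cases from the text: 20 and 100 sequential CX gates at 400 ns each
shallow = decoherence_budget(20, 400, t1_us=100, t2_us=50)
deep = decoherence_budget(100, 400, t1_us=100, t2_us=50)
print(shallow)
print(deep)
```

For the 20-gate case the exponential estimate is slightly below the linear 8% figure, which is why the text says "roughly".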

Crosstalk

Qubits on a chip are not perfectly isolated. Neighboring qubits can exchange energy through residual couplings that the device designers try to minimize but cannot eliminate entirely. When a CX gate operates on qubits (3, 4), nearby qubits (2 and 5, for example) experience a small unwanted rotation. This is crosstalk. On current hardware, crosstalk error rates range from 0.1% to 1% per neighboring gate operation. The effect compounds: circuits with many parallel CX gates on adjacent qubit pairs accumulate crosstalk errors faster than serial circuits.

Gate Errors

Every gate operation has an intrinsic error rate measured through randomized benchmarking. On current IBM processors:

- Single-qubit gate error: 0.01% to 0.05% (essentially negligible for most circuits)

- Two-qubit CX gate error: 0.3% to 1.5%, depending on the qubit pair

The CX error rate varies dramatically across different qubit pairs on the same chip. Some pairs achieve 99.7% fidelity while others sit at 98.5%. This is why qubit routing matters: the transpiler’s choice of which physical qubits to use can make or break your results.

For a circuit with N two-qubit gates, the approximate total gate error is:

Total fidelity ~ (1 - e)^N

Example: 20 CX gates at 0.5% error each

Fidelity ~ (0.995)^20 = 0.905, so about 9.5% total error
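The same estimate is easy to script for other gate counts. The sketch below assumes every two-qubit gate has the same error rate and that errors are independent; the helper name `circuit_fidelity` is hypothetical.

```python
def circuit_fidelity(error_per_gate, n_gates):
    """Approximate fidelity of n_gates independent gates with equal error."""
    return (1.0 - error_per_gate) ** n_gates


for n_gates in (20, 50, 100):
    fidelity = circuit_fidelity(0.005, n_gates)
    print(f"{n_gates} gates: fidelity ~ {fidelity:.3f}, error ~ {1 - fidelity:.1%}")
```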

Readout Errors

Measurement is itself noisy. The probability of correctly reading out a |0> state is typically 98% to 99.5%. The probability of correctly reading out a |1> state is usually worse, around 95% to 99%, because T1 decay during the readout pulse can flip |1> to |0>. This asymmetry between 0-to-1 and 1-to-0 errors is the key detail that TREX is designed to remove.

Setting Up Qiskit Runtime

from qiskit_ibm_runtime import QiskitRuntimeService, Session, EstimatorV2 as Estimator

from qiskit_ibm_runtime import SamplerV2 as Sampler

from qiskit_ibm_runtime.options import EstimatorOptions, SamplerOptions

from qiskit.circuit.library import EfficientSU2

from qiskit.quantum_info import SparsePauliOp

from qiskit.transpiler.preset_passmanagers import generate_preset_pass_manager

from qiskit import QuantumCircuit

import numpy as np

# Connect to IBM Quantum

service = QiskitRuntimeService()

backend = service.least_busy(operational=True, simulator=False, min_num_qubits=5)

print(f"Using backend: {backend.name}")

Dynamical Decoupling

Qubits that sit idle while other gates execute are vulnerable to decoherence. In a multi-qubit circuit, idle time is unavoidable: while a CX gate operates on qubits 0 and 1, qubits 2, 3, and 4 accumulate errors from their environment.

Dynamical decoupling (DD) fills those idle windows with carefully chosen pulse sequences that cancel out environmental noise to first order.

Why Dynamical Decoupling Works: The Physics

A qubit couples to its environment through fluctuating fields. In the rotating frame of the qubit, the dominant effect of these fields is a random Z rotation: the qubit’s phase drifts unpredictably over time. If left alone, this drift accumulates as a random walk in phase, degrading coherence.

A DD sequence counteracts this by periodically flipping the qubit. Consider the simplest case: a single X pulse applied halfway through an idle period. During the first half, the environment pushes the qubit’s phase by some angle +phi. The X pulse flips the sign of the Z axis. During the second half, the same environmental coupling now pushes the phase by -phi (because the X pulse inverted the frame). The net effect: the two halves cancel, and the qubit’s phase is preserved.
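The single-pulse echo argument can be checked with a toy classical model. The sketch below assumes quasi-static noise: the detuning is random from one idle window to the next but constant within a window. Real noise also has faster components that an echo only partly removes. The function name and parameters are illustrative.

```python
import math
import random


def mean_coherence(n_samples, idle_time, sigma, echo, seed=0):
    """Average cos(phase) over random static detunings (1.0 = fully coherent)."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n_samples):
        detuning = rng.gauss(0.0, sigma)  # constant during one idle window
        if echo:
            # X pulse at the midpoint: the second half accumulates -phi
            phase = detuning * idle_time / 2 - detuning * idle_time / 2
        else:
            phase = detuning * idle_time
        total += math.cos(phase)
    return total / n_samples


free = mean_coherence(2000, idle_time=1.0, sigma=2.0, echo=False)
echoed = mean_coherence(2000, idle_time=1.0, sigma=2.0, echo=True)
print(f"Coherence without echo: {free:.3f}")
print(f"Coherence with echo:    {echoed:.3f}")
```

In this idealized model the echo restores coherence completely, because the noise is perfectly correlated between the two halves of the idle window.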

For the XY4 sequence (X, Y, X, Y pulses at quarter intervals), the cancellation is more complete. The four pulses implement a cyclic symmetry group that averages out noise in both the X and Z directions. Mathematically, if the environment induces a small error operator:

E = I + epsilon * (a*X + b*Y + c*Z) + O(epsilon^2)

then after the XY4 sequence, the effective error becomes:

E_eff = I + O(epsilon^2)

The first-order noise term vanishes entirely. Only second-order effects survive, which are much smaller (epsilon squared instead of epsilon). This is why DD can significantly extend the effective coherence time of idle qubits.
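One way to see the first-order cancellation is to average each Pauli error term over the frames the qubit passes through during the sequence. The sketch below assumes ideal, instantaneous pulses and equal time in each frame. The four frames of XY4 are taken to be I, X, Y, and Z up to phase, and the helper names are illustrative.

```python
# 2x2 Pauli matrices as nested lists of complex numbers
I2 = [[1 + 0j, 0j], [0j, 1 + 0j]]
X = [[0j, 1 + 0j], [1 + 0j, 0j]]
Y = [[0j, -1j], [1j, 0j]]
Z = [[1 + 0j, 0j], [0j, -1 + 0j]]


def matmul(a, b):
    return [[sum(a[i][k] * b[k][j] for k in range(2)) for j in range(2)]
            for i in range(2)]


def dagger(a):
    return [[a[j][i].conjugate() for j in range(2)] for i in range(2)]


def frame_average(error_term, frames):
    """Average P^dagger * error_term * P over the given pulse frames."""
    total = [[0j, 0j], [0j, 0j]]
    for p in frames:
        rotated = matmul(dagger(p), matmul(error_term, p))
        for i in range(2):
            for j in range(2):
                total[i][j] += rotated[i][j] / len(frames)
    return total


def is_zero(m, tol=1e-12):
    return all(abs(m[i][j]) < tol for i in range(2) for j in range(2))


for name, sigma in (("X", X), ("Y", Y), ("Z", Z)):
    xy4 = is_zero(frame_average(sigma, [I2, X, Y, Z]))
    x_only = is_zero(frame_average(sigma, [I2, X]))
    print(f"{name} noise cancelled: XY4={xy4}, X-only sequence={x_only}")
```

In this model XY4 removes all three first-order terms, while a sequence built only from X pulses leaves the X term in place.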

DD Sequence Types and When to Use Each

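Several DD sequences are in common use, each suited to a different noise profile. Qiskit Runtime's sequence_type option accepts "XX", "XpXm", and "XY4"; the CPMG and Uhrig sequences described below are not built-in options and have to be constructed manually in a custom pass manager.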

XpXm (X, -X): The simplest sequence. The two X pulses cancel noise along Z (and Y) to first order but leave noise along X, which commutes with the pulses, untouched. Use this when idle windows are too short to fit four pulses (the minimum spacing between DD pulses is constrained by the hardware’s pulse resolution).

XY4 (X, Y, X, Y): Cancels noise in both the X and Z directions. This is the best general-purpose choice and the recommended default. The four-pulse structure provides robust first-order cancellation against a wide range of noise spectra.

CPMG (Carr-Purcell-Meiboom-Gill): A sequence originally developed in NMR spectroscopy. It uses Y, Y pulse pairs and is specifically optimized for extending T2 coherence time. It performs best when the dominant noise source is low-frequency dephasing.

UDD (Uhrig dynamical decoupling): Uses non-uniformly spaced pulses, with timing optimized to cancel higher orders of a specific noise spectrum. It can outperform XY4 when the noise is dominated by low-frequency components (1/f noise), which is common in superconducting qubits.

from qiskit_ibm_runtime.options import EstimatorOptions

# XY4: best general-purpose choice

options_xy4 = EstimatorOptions()

options_xy4.dynamical_decoupling.enable = True

options_xy4.dynamical_decoupling.sequence_type = "XY4"

# XpXm: for short idle windows

options_xpxm = EstimatorOptions()

options_xpxm.dynamical_decoupling.enable = True

options_xpxm.dynamical_decoupling.sequence_type = "XpXm"

# XX: two plain X pulses, the simplest built-in sequence
options_xx = EstimatorOptions()
options_xx.dynamical_decoupling.enable = True
options_xx.dynamical_decoupling.sequence_type = "XX"

# CPMG and Uhrig-style sequences are not accepted values of sequence_type
# (only "XX", "XpXm", and "XY4" are). To use them, build the pulse sequence
# yourself with PadDynamicalDecoupling in a custom pass manager.

Basic DD Example

# Build a test circuit with idle time on some qubits

qc = QuantumCircuit(4)

qc.h(0)

qc.cx(0, 1)

qc.barrier()

qc.delay(200, [2, 3], unit="dt") # Explicit idle: qubits 2,3 wait

qc.cx(2, 3)

qc.measure_all()

# Configure DD with XY4

options = EstimatorOptions()

options.dynamical_decoupling.enable = True

options.dynamical_decoupling.sequence_type = "XY4"

print("DD sequence: XY4")

print("Inserts X-Y-X-Y pulses during idle periods on qubits 2,3.")

Transpilation Interaction with DD

DD insertion happens after transpilation, not before. The transpiler first maps your abstract circuit onto the backend’s physical qubits, routes SWAP gates as needed, and schedules the operations. Only then does Qiskit Runtime identify idle windows and insert DD pulses.

This ordering matters. If you use a custom PassManager, you need to be aware that:

- Higher optimization levels (optimization_level=3) can aggressively consolidate and reschedule gates, sometimes eliminating idle windows that would have benefited from DD.

- If you build a fully custom pass manager, you need to add the PadDynamicalDecoupling pass after routing and scheduling.

Here is how to manually add DD to a custom pass manager:

from qiskit.transpiler import PassManager

from qiskit.transpiler.preset_passmanagers import generate_preset_pass_manager

from qiskit.transpiler.passes import PadDynamicalDecoupling, ALAPScheduleAnalysis

from qiskit.circuit.library import XGate, YGate

# Generate a base pass manager from the backend

pm = generate_preset_pass_manager(optimization_level=2, backend=backend)

# Define the DD sequence: XY4

dd_sequence = [XGate(), YGate(), XGate(), YGate()]

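# Create a scheduling + DD pass manager to run after transpilation.
# Pass the target by keyword: the first positional argument of both passes
# is a durations object, not the target.
# Every gate in dd_sequence must be supported by the target. If the backend
# has no native Y gate, fall back to an X-only sequence.
scheduling_pm = PassManager([
    ALAPScheduleAnalysis(target=backend.target),
    PadDynamicalDecoupling(target=backend.target, dd_sequence=dd_sequence),
])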

# Transpile, then apply DD

transpiled = pm.run(qc)

transpiled_with_dd = scheduling_pm.run(transpiled)

print(f"Circuit depth after DD insertion: {transpiled_with_dd.depth()}")

When using Qiskit Runtime’s built-in DD options (via options.dynamical_decoupling.enable = True), the Runtime service handles all of this automatically. The manual approach is only needed when you want full control over the scheduling pass.

TREX: Twirled Readout Error eXtinction

Readout errors are one of the largest noise sources on current hardware. A qubit prepared in |1> can be misread as |0> with probability 2% to 5%, and the reverse error (|0> misread as |1>) typically occurs at a lower rate, around 0.5% to 2%. This asymmetry between the two error directions makes raw correction difficult.

TREX (Twirled Readout Error eXtinction) solves this by symmetrizing the readout errors through randomized twirling.

How TREX Works: Step by Step

The TREX protocol operates on every shot of your circuit execution:

Step 1: Random bit generation. Before each shot, the system generates a random binary string of length n (where n is the number of measured qubits). Each bit is 0 or 1 with equal probability.

Step 2: Conditional X gates. For each qubit where the random bit is 1, an X gate is applied immediately before measurement. This flips that qubit’s state. The system records which qubits were flipped.

Step 3: Circuit execution and measurement. The modified circuit runs on hardware. The measurement result is a noisy bitstring that includes both the quantum signal and readout errors.

Step 4: Classical correction. After readout, the system flips back the classical bits corresponding to the qubits that were toggled in Step 2. This undoes the intentional X gates in the classical record.

Step 5: Averaging. Steps 1 through 4 repeat across many shots with different random toggle patterns.

The key insight is this: without twirling, the readout error matrix is asymmetric. The probability of misreading |0> as |1> differs from the probability of misreading |1> as |0>. By randomly toggling qubits before measurement, TREX ensures that each qubit spends roughly half its shots in the |0> state and half in the |1> state (from the readout hardware’s perspective). This averages the two error rates into a single symmetric error rate.

Symmetric (depolarizing) readout errors are much easier to correct. If the error rate is p in both directions, the correction is a simple rescaling:

corrected_expectation = raw_expectation / (1 - 2*p)

Compare this to the full asymmetric case, which requires calibrating a 2^n by 2^n matrix for n qubits. TREX reduces the problem from exponential to linear.
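The symmetrization argument can be verified analytically for a single qubit. In the sketch below, `e01` is the probability of reading 1 when the state is 0 and `e10` the probability of reading 0 when the state is 1; both names are illustrative. The flip rates are assumed known here, whereas on hardware they are estimated from calibration circuits.

```python
def measured_z(ideal_z, e01, e10):
    """<Z> as seen through an asymmetric readout channel."""
    return ideal_z * (1.0 - e01 - e10) + (e10 - e01)


def twirled_z(ideal_z, e01, e10):
    """Average over 'no flip' and 'X before measurement, bit flipped back'."""
    no_flip = measured_z(ideal_z, e01, e10)
    # The X gate maps <Z> to -<Z>; undoing it classically negates the result
    flipped = -measured_z(-ideal_z, e01, e10)
    return 0.5 * (no_flip + flipped)


def trex_corrected(ideal_z, e01, e10):
    """Rescale the twirled value by 1 / (1 - 2p), with p the mean flip rate."""
    p = 0.5 * (e01 + e10)
    return twirled_z(ideal_z, e01, e10) / (1.0 - 2.0 * p)


ideal = 0.6
print(f"Raw:       {measured_z(ideal, 0.01, 0.04):.4f}")
print(f"Twirled:   {twirled_z(ideal, 0.01, 0.04):.4f}")
print(f"Corrected: {trex_corrected(ideal, 0.01, 0.04):.4f}")
```

Without twirling, the asymmetry adds a constant offset (e10 - e01) to the measured value. Twirling removes the offset, leaving a pure rescaling that a single division undoes.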

# Enable TREX readout error mitigation

options = EstimatorOptions()

options.resilience.measure_mitigation = True # Enables TREX by default

# TREX is compatible with dynamical decoupling; both can be active simultaneously

options.dynamical_decoupling.enable = True

options.dynamical_decoupling.sequence_type = "XY4"

print("TREX + DD enabled simultaneously.")

Gate Twirling

Gate twirling randomizes coherent errors in two-qubit gates into incoherent (stochastic Pauli) errors. It does not remove errors, but it converts them into a form that is easier to characterize and cancel with ZNE or PEC.

Why Twirling Helps: Coherent vs Incoherent Errors

Coherent errors have a preferred direction. For example, a CX gate might consistently over-rotate by 0.5 degrees around the Z axis. This systematic rotation accumulates constructively over repeated applications: 20 CX gates produce a 10-degree over-rotation, not a random walk.

Incoherent (depolarizing) errors have no preferred direction. They push the qubit toward a random state with equal probability in all directions. These errors accumulate as a random walk: 20 gates produce an error that scales as sqrt(20), not linearly with 20.

This distinction matters for error mitigation. ZNE (zero-noise extrapolation) works by running circuits at artificially amplified noise levels and extrapolating back to zero noise. This extrapolation assumes that errors are incoherent, because depolarizing noise has a simple exponential decay that extrapolates cleanly. Coherent errors produce oscillatory behavior that confounds extrapolation.

Gate twirling converts the first case into the second, making ZNE and PEC work correctly.
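To make the extrapolation step concrete, here is a minimal sketch of exponential ZNE on synthetic data. It assumes the expectation value decays as A * exp(-b * noise_factor) and that all measured values are positive. The function name is hypothetical, and this is not the fitting routine Qiskit Runtime uses internally.

```python
import math


def zne_exponential(noise_factors, values):
    """Fit values ~ A * exp(-b * factor) via least squares on log(values).

    Returns A, the estimate at zero noise.
    """
    logs = [math.log(v) for v in values]
    n = len(noise_factors)
    mean_x = sum(noise_factors) / n
    mean_y = sum(logs) / n
    sxx = sum((x - mean_x) ** 2 for x in noise_factors)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(noise_factors, logs))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return math.exp(intercept)


# Synthetic data: ideal value 1.0, decaying by exp(-0.1) per unit of noise
factors = [1, 3, 5]
noisy = [1.0 * math.exp(-0.1 * f) for f in factors]
estimate = zne_exponential(factors, noisy)
print(f"Measured at factor 1: {noisy[0]:.4f}")
print(f"Zero-noise estimate:  {estimate:.4f}")
```

With perfectly exponential data the fit recovers the ideal value. Oscillatory behavior from coherent errors breaks the model assumption, which is the reason for twirling first.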

How Pauli Twirling Works

For each two-qubit gate (CX) in the circuit, Pauli twirling:

- Draws a random pair of Pauli operators (P1, P2) from a specific set

- Applies P1 to the control qubit and P2 to the target qubit before the CX gate

- Applies adjusted Paulis (Q1, Q2) after the CX gate, chosen so that the net ideal operation is still exactly CX

The adjustment works because CX has a specific commutation structure with Pauli operators. For each choice of (P1, P2), there exists a unique (Q1, Q2) such that:

(Q1 tensor Q2) * CX * (P1 tensor P2) = CX (up to a global phase)

By averaging over all 16 Pauli choices, any coherent error channel attached to the CX gate is converted into a stochastic Pauli channel. The off-diagonal (coherent) terms average to zero and only the probabilities of discrete Pauli errors remain.
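The (Q1, Q2) lookup can be generated from the standard rules for propagating Paulis through CX: X on the control copies to the target, and Z on the target copies to the control. The sketch below encodes each Pauli as a pair of (x, z) bits and ignores signs and global phases. The table layout and helper names are illustrative, not taken from Qiskit Runtime.

```python
from itertools import product

# Each Pauli as (x, z) bits: I=(0,0), X=(1,0), Y=(1,1), Z=(0,1)
LABELS = {(0, 0): "I", (1, 0): "X", (1, 1): "Y", (0, 1): "Z"}


def propagate_through_cx(control, target):
    """Conjugate the Pauli pair (control, target) by CX, ignoring signs."""
    xc, zc = control
    xt, zt = target
    # X on the control spreads to the target; Z on the target spreads to the control
    return (xc, zc ^ zt), (xt ^ xc, zt)


# Keys and values are two-letter strings: control Pauli, then target Pauli
twirl_table = {}
for p1, p2 in product(LABELS, repeat=2):
    q1, q2 = propagate_through_cx(p1, p2)
    twirl_table[LABELS[p1] + LABELS[p2]] = LABELS[q1] + LABELS[q2]

for before, after in sorted(twirl_table.items()):
    print(f"apply {before} before CX, {after} after CX")
```

Each of the 16 input pairs maps to a distinct output pair, which is the uniqueness property stated above.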

options = EstimatorOptions()

options.resilience_level = 2 # ZNE enabled

options.twirling.enable_gates = True # Twirl 2-qubit gates

options.twirling.enable_measure = True # Twirl measurements (TREX)

options.twirling.num_randomizations = 32 # Number of random twirl instances

# num_randomizations controls the variance-overhead trade-off:

# - 8 randomizations: low overhead, higher variance in results

# - 32 randomizations: moderate overhead, good variance reduction (recommended)

# - 128 randomizations: high overhead, minimal additional variance reduction

# Doubling randomizations doubles the number of circuits submitted.

Resilience Levels

Qiskit Runtime’s Estimator primitive provides a convenience interface through resilience levels that bundle multiple suppression and mitigation techniques together.

Resilience Level Reference

| Level | Techniques Enabled | Circuit Overhead | Shot Overhead | Typical Runtime Multiplier | Best Use Case |
|---|---|---|---|---|---|
| 0 | None | 1x | 1x | 1x | Fast exploration, debugging, simulator comparison |
| 1 | TREX readout mitigation | 1x | ~1.5x (twirl repetitions) | 1.2x to 1.5x | Default for most work, good accuracy/cost balance |
| 2 | TREX + ZNE + gate twirling | 3x to 5x (noise amplification factors) | ~1.5x per circuit | 4x to 8x | Energy estimation, expectation values requiring accuracy |
| 3 | TREX + PEC + gate twirling | 1x to 3x | 10x to 100x (quasi-probability sampling) | 20x to 200x | Final verification, publishable results |

The shot overhead for Level 3 deserves explanation. PEC works by sampling from a quasi-probability distribution, and the sampling overhead (called gamma) grows exponentially with the total noise in the circuit. For low-noise circuits, the overhead is modest. For noisy circuits, it can be prohibitive.

# Resilience level 0: no error mitigation

options_level0 = EstimatorOptions()

options_level0.resilience_level = 0

# Resilience level 1: TREX readout mitigation only (default)

options_level1 = EstimatorOptions()

options_level1.resilience_level = 1

# Resilience level 2: TREX + ZNE gate noise mitigation

options_level2 = EstimatorOptions()

options_level2.resilience_level = 2

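# "Level 3": TREX + PEC (most accurate, most expensive).
# EstimatorV2 accepts resilience_level 0 to 2 only, so PEC is enabled
# explicitly on top of level 1 instead of through a level number.
options_level3 = EstimatorOptions()
options_level3.resilience_level = 1
options_level3.resilience.pec_mitigation = True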

Probabilistic Error Cancellation (PEC): Level 3 in Depth

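Level 3 uses Probabilistic Error Cancellation, the most powerful (and most expensive) mitigation technique. With the V2 Estimator, resilience_level itself accepts only 0 through 2, so PEC is switched on through options.resilience.pec_mitigation; "Level 3" in this tutorial refers to that configuration. Understanding its cost model helps you decide when it is worthwhile.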

How PEC Works

PEC characterizes the noise channel E for each gate in your circuit, then mathematically constructs the inverse channel E^(-1). Since E^(-1) is not a physical quantum operation (it is not completely positive), PEC implements it by sampling from a quasi-probability distribution over physical operations. Some samples carry negative weights, which means the final expectation value is computed as a weighted average that includes subtractions.
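A one-qubit toy case shows how negative weights undo a noise channel. The sketch below assumes a pure bit-flip channel with known probability p acting before a Z measurement. This is a simpler noise model than the depolarizing one used for the overhead estimates in this tutorial, so its gamma has a different closed form. All names are illustrative.

```python
def pec_bit_flip(ideal_z, p):
    """Cancel a bit-flip channel of strength p with quasi-probability weights.

    Returns (mitigated <Z>, gamma).
    """
    noisy_scale = 1.0 - 2.0 * p  # the bit-flip channel shrinks <Z> by this factor

    # Inverse channel = c_identity * (do nothing) + c_flip * (apply X)
    c_identity = (1.0 - p) / (1.0 - 2.0 * p)
    c_flip = -p / (1.0 - 2.0 * p)  # negative weight: not a physical operation

    z_identity = noisy_scale * ideal_z  # noisy result with no extra gate
    z_flip = -noisy_scale * ideal_z     # noisy result with an extra X gate

    estimate = c_identity * z_identity + c_flip * z_flip
    gamma = abs(c_identity) + abs(c_flip)  # one-norm of the weights
    return estimate, gamma


estimate, gamma = pec_bit_flip(ideal_z=0.8, p=0.05)
print(f"Mitigated <Z> = {estimate:.4f}, gamma = {gamma:.4f}")
```

The weights sum to 1 but their absolute values sum to gamma > 1, and that excess is what inflates the variance of the sampled estimate.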

The Gamma Overhead

The sampling overhead is governed by the one-norm of the quasi-probability distribution, denoted gamma. For a single gate with depolarizing error rate p:

gamma_gate = (1 + 2p) / (1 - 2p) ≈ 1 + 4p for small p

For a circuit with N independent noisy gates, the total overhead multiplies:

gamma_total = product of gamma_gate for all N gates

≈ exp(4 * p * N) for small p

The number of samples needed for a given statistical precision scales as gamma_total squared. Let’s compute some examples:

20 CX gates at 0.5% error each:

gamma = exp(4 * 0.005 * 20) = exp(0.4) = 1.49

Overhead: 1.49^2 ≈ 2.2x shots needed. Very manageable.

50 CX gates at 0.5% error each:

gamma = exp(4 * 0.005 * 50) = exp(1.0) = 2.72

Overhead: 2.72^2 ≈ 7.4x shots needed. Moderate.

100 CX gates at 1.0% error each:

gamma = exp(4 * 0.01 * 100) = exp(4.0) = 54.6

Overhead: 54.6^2 ≈ 2981x shots needed. Impractical.

PEC is only viable for relatively shallow circuits with low gate error rates. For deep or noisy circuits, Level 2 (ZNE) is the practical ceiling.
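The worked examples above follow directly from the two formulas, so they are easy to reproduce for your own gate counts. This is a minimal sketch that assumes the same depolarizing model and an identical error rate on every gate; the function name is hypothetical.

```python
import math


def pec_overhead(error_per_gate, n_gates):
    """Return (exact gamma, small-p approximation, shot multiplier)."""
    gamma_gate = (1 + 2 * error_per_gate) / (1 - 2 * error_per_gate)
    gamma_exact = gamma_gate ** n_gates
    gamma_approx = math.exp(4 * error_per_gate * n_gates)
    return gamma_exact, gamma_approx, gamma_approx ** 2


for p, n in ((0.005, 20), (0.005, 50), (0.01, 100)):
    exact, approx, shots = pec_overhead(p, n)
    print(f"{n} gates at {p:.1%}: gamma ~ {approx:.2f} "
          f"(exact {exact:.2f}), shots x{shots:.1f}")
```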

PEC Noise Model Freshness

Qiskit Runtime performs automatic noise characterization for PEC, but this characterization reflects the hardware state at calibration time. Superconducting qubits drift over hours. If the hardware has drifted significantly since its last calibration, PEC can actually produce worse results than ZNE, because the inverse channel no longer matches the actual noise. For best results with Level 3, run on a freshly calibrated backend and complete your jobs within a few hours.

Error Budget Analysis

Before choosing a resilience level, analyze your circuit’s error budget. This tells you where the noise comes from and how much mitigation can help.

# Extract gate error rates from the backend's target
target = backend.target

# Find the native two-qubit gate and its error rate for all qubit pairs
cx_errors = {}
if "cx" in target.operation_names:
    gate_name = "cx"
elif "ecr" in target.operation_names:
    gate_name = "ecr"  # Some backends use ECR as the native 2q gate
elif "cz" in target.operation_names:
    gate_name = "cz"  # Heron-class backends use CZ as the native 2q gate
else:
    gate_name = None

if gate_name:
    for qargs in target.qargs:
        if len(qargs) == 2:  # Two-qubit gate
            props = target[gate_name].get(qargs, None)
            if props and props.error is not None:
                cx_errors[qargs] = props.error

# Print sorted by error rate
sorted_pairs = sorted(cx_errors.items(), key=lambda x: x[1])

print("Best qubit pairs (lowest 2q gate error):")
for pair, err in sorted_pairs[:5]:
    print(f" Qubits {pair}: error = {err:.4%}")

print("\nWorst qubit pairs (highest 2q gate error):")
for pair, err in sorted_pairs[-5:]:
    print(f" Qubits {pair}: error = {err:.4%}")

# Estimate total circuit error for a given circuit
def estimate_circuit_error(transpiled_circuit, backend):
    """Estimate total fidelity from gate errors."""
    target = backend.target
    total_fidelity = 1.0
    ops = transpiled_circuit.count_ops()

    for gate_name, count in ops.items():
        if gate_name in ("barrier", "measure", "delay"):
            continue
        if gate_name in target.operation_names:
            # Average error across all qargs for this gate
            errors = []
            for qargs in target.qargs:
                props = target[gate_name].get(qargs, None)
                if props and props.error is not None:
                    errors.append(props.error)
            if errors:
                avg_error = np.mean(errors)
                total_fidelity *= (1 - avg_error) ** count

    return total_fidelity

# Example usage after transpilation

pm = generate_preset_pass_manager(optimization_level=2, backend=backend)

bell = QuantumCircuit(2)

bell.h(0)

bell.cx(0, 1)

transpiled_bell = pm.run(bell)

fidelity = estimate_circuit_error(transpiled_bell, backend)

print(f"\nEstimated circuit fidelity: {fidelity:.4f}")

print(f"Estimated total error: {1 - fidelity:.4%}")

if fidelity > 0.90:
    print("Recommendation: Level 2 (ZNE) or Level 3 (PEC) will work well.")
elif fidelity > 0.70:
    print("Recommendation: Level 2 (ZNE) is appropriate. PEC overhead may be high.")
else:
    print("Recommendation: Level 1 (TREX) only. ZNE extrapolation is unreliable at >30% error.")

The 70% fidelity threshold for ZNE is an empirical guideline, not a hard cutoff. ZNE extrapolates an exponential decay curve, and when the signal has decayed by more than about 30%, the extrapolation becomes sensitive to the assumed noise model. Below 70% fidelity, you are likely better off reducing circuit depth (through circuit optimization or different algorithm design) rather than relying on post-processing.

Comparing Suppression Strategies: Full Example

This example runs the same observable estimation at different resilience levels on real hardware, using best practices for transpilation, qubit selection, and session management.

from qiskit_ibm_runtime import QiskitRuntimeService, Session

from qiskit_ibm_runtime import EstimatorV2 as Estimator

from qiskit_ibm_runtime import SamplerV2 as Sampler

from qiskit_ibm_runtime.options import EstimatorOptions, SamplerOptions

from qiskit.transpiler.preset_passmanagers import generate_preset_pass_manager

from qiskit.quantum_info import SparsePauliOp

from qiskit import QuantumCircuit

service = QiskitRuntimeService()

backend = service.least_busy(operational=True, simulator=False, min_num_qubits=5)

print(f"Backend: {backend.name}")

# Step 1: Find the best qubit pair for a 2-qubit experiment
target = backend.target

# Native 2q gate: "cx", "ecr", or "cz" (Heron-class backends), depending on the processor
gate_name = next(name for name in ("cx", "ecr", "cz") if name in target.operation_names)

best_pair = None
best_error = 1.0
for qargs in target.qargs:
    if len(qargs) == 2:
        props = target[gate_name].get(qargs, None)
        if props and props.error is not None and props.error < best_error:
            best_error = props.error
            best_pair = qargs

print(f"Best qubit pair: {best_pair} with {gate_name} error = {best_error:.4%}")

# Step 2: Build and transpile the circuit

bell = QuantumCircuit(2)

bell.h(0)

bell.cx(0, 1)

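pm = generate_preset_pass_manager(
    optimization_level=2,
    backend=backend,
    initial_layout=list(best_pair),  # Pin to the best qubit pair
)
transpiled_bell = pm.run(bell)

print(f"Transpiled depth: {transpiled_bell.depth()}")
print(f"Gate counts: {transpiled_bell.count_ops()}")

# Step 3: Define the observable and map it onto the transpiled layout.
# The transpiled circuit spans every physical qubit on the backend, so the
# 2-qubit observable must be expanded to match before it is submitted.
observable = SparsePauliOp("ZZ").apply_layout(transpiled_bell.layout)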

# Step 4: Run at multiple resilience levels within a single Session
# A Session keeps the backend reserved between jobs, avoiding re-queuing.
with Session(backend=backend) as session:
    results = {}

    for level in [0, 1, 2]:
        opts = EstimatorOptions()
        opts.resilience_level = level
        opts.dynamical_decoupling.enable = True
        opts.dynamical_decoupling.sequence_type = "XY4"
        opts.default_shots = 8192

        # For level 2, also enable gate twirling explicitly
        if level == 2:
            opts.twirling.enable_gates = True
            opts.twirling.num_randomizations = 32

        estimator = Estimator(mode=session, options=opts)
        job = estimator.run([(transpiled_bell, observable)])
        result = job.result()

        ev = float(result[0].data.evs)
        std = float(result[0].data.stds)
        results[level] = (ev, std)
        print(f"Level {level}: <ZZ> = {ev:.4f} +/- {std:.4f}")

# The ideal value of <ZZ> for the Bell state |Phi+> = (|00> + |11>)/sqrt(2) is +1.0

# (Both qubits always agree, so ZZ eigenvalue is always +1)

print("\nIdeal <ZZ> = 1.0")

print("Level 0 shows the largest deviation from ideal.")

print("Level 2 should be closest to 1.0.")

Using SamplerV2 with the Same Options

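The Sampler primitive accepts the same dynamical decoupling and twirling options as the Estimator, but not the resilience options: mitigation of expectation values is specific to the Estimator. If you need raw measurement counts instead of expectation values, use SamplerV2: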

from qiskit_ibm_runtime.options import SamplerOptions

# Sampler accepts the same DD and twirling options

sampler_opts = SamplerOptions()

sampler_opts.dynamical_decoupling.enable = True

sampler_opts.dynamical_decoupling.sequence_type = "XY4"

sampler_opts.default_shots = 8192

# Add measurements to the 2-qubit circuit, then transpile it for the backend.
# Calling measure_all() on the already-transpiled circuit would measure every
# physical qubit on the device, not just the two in the Bell pair.
bell_measured = bell.copy()
bell_measured.measure_all()
isa_bell_measured = pm.run(bell_measured)

with Session(backend=backend) as session:
    sampler = Sampler(mode=session, options=sampler_opts)
    job = sampler.run([isa_bell_measured])
    result = job.result()

# Access the counts
counts = result[0].data.meas.get_counts()
print(f"Counts: {counts}")
# Expected: mostly '00' and '11' entries for a Bell state

Session Mode vs Batch Mode

When comparing multiple configurations (as in the example above), the choice of execution mode affects both wall-clock time and cost.

Session mode (Session(backend=backend)) reserves a backend for the duration of the session. All jobs within the session run on the same device without re-entering the queue. This minimizes total wall-clock time and ensures consistent hardware conditions across jobs.

Batch mode (Batch(backend=backend)) groups jobs for scheduling but does not guarantee they run consecutively. Jobs may be interleaved with other users’ jobs. Batch mode is appropriate for independent jobs that do not need to share hardware state.

For comparing resilience levels, always use Session mode. Hardware noise drifts over time, so running all configurations on the same device in quick succession eliminates drift as a confounding variable.

from qiskit_ibm_runtime import Session, Batch

# Session: reserved backend, sequential execution, no queue re-entry
with Session(backend=backend) as session:
    estimator = Estimator(mode=session, options=opts)
    # All jobs here run on the same device consecutively

# Batch: grouped scheduling, may interleave with other users
with Batch(backend=backend) as batch:
    estimator = Estimator(mode=batch, options=opts)
    # Jobs are grouped but not guaranteed sequential

Common Mistakes

1. Enabling DD on simulators

Simulators do not model idle-time noise by default. Enabling DD on a simulator inserts extra gates that add to the circuit depth and execution time without providing any benefit. Worse, if the simulator does model gate errors, the additional DD gates introduce new errors that would not exist otherwise. Only enable DD when running on real hardware.

2. Confusing resilience_level with optimization_level

These are completely independent settings that control different stages of the pipeline.

optimization_level (set during transpilation) controls how aggressively the transpiler optimizes your circuit: gate cancellation, routing efficiency, and gate decomposition. Values range from 0 (minimal optimization) to 3 (maximum optimization). Higher levels reduce circuit depth and gate count.

resilience_level (set in EstimatorOptions) controls post-transpilation error suppression and mitigation: readout correction, noise extrapolation, and error cancellation. Values range from 0 (no mitigation) to 3 (maximum mitigation). Higher levels improve accuracy at the cost of more shots.

Both should be tuned independently. A common good combination is optimization_level=2 (during transpilation) with resilience_level=1 or 2 (during execution).

3. PEC on drifted hardware

PEC (Level 3) relies on an accurate noise model. Qiskit Runtime calibrates this model automatically, but hardware drifts over time. If you start a long PEC job hours after calibration, the noise model may no longer match reality. In this situation, PEC can amplify errors instead of canceling them. For best PEC results:

- Use a freshly calibrated backend

- Complete your PEC jobs within 2 to 3 hours of calibration

- Compare PEC results against Level 2 (ZNE) as a sanity check

4. Insufficient shots for TREX

TREX averages over multiple twirled circuits. Each twirl configuration needs enough shots to produce reliable statistics. If you set default_shots=100, each twirl might only get 50 to 70 shots (after splitting across configurations), which produces high-variance results. For TREX to work well:

- Use at least 4,096 shots for simple circuits

- Use 8,192 or more shots for circuits with many qubits (the readout error correction becomes more sensitive with more qubits)

- If your results have large error bars, increase shots before increasing resilience level
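The shot recommendations follow from how sampling error scales. The sketch below assumes a Pauli-Z product observable on n qubits, independent readout errors, and the same symmetric flip rate p on every qubit, so the correction divides by (1 - 2p)^n. The function names are hypothetical.

```python
import math


def z_standard_error(measured_expectation, shots):
    """Standard error of a +/-1-valued estimate from `shots` samples."""
    return math.sqrt((1.0 - measured_expectation ** 2) / shots)


def corrected_standard_error(measured_expectation, shots, flip_rate, n_qubits):
    """Error bar after dividing by the readout rescaling factor (1 - 2p)^n."""
    scale = (1.0 - 2.0 * flip_rate) ** n_qubits
    return z_standard_error(measured_expectation, shots) / scale


for shots in (100, 4096, 8192):
    raw = z_standard_error(0.0, shots)
    corrected = corrected_standard_error(0.0, shots, flip_rate=0.02, n_qubits=10)
    print(f"{shots:5d} shots: raw +/- {raw:.4f}, "
          f"corrected (10 qubits) +/- {corrected:.4f}")
```

The error bar shrinks only as 1/sqrt(shots), while the correction factor grows with qubit count. Under these assumptions, wider observables need more shots for the same precision.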

5. Ignoring qubit selection

Not all qubits on a device perform equally. The error rate difference between the best and worst CX pairs can be 5x or more. Always check the backend’s calibration data and route your circuit to the lowest-error qubits. The error budget analysis code in this tutorial shows how to extract per-pair error rates programmatically.

Practical Guidelines

Start with Level 1 and DD. For exploratory work, resilience_level=1 with dynamical_decoupling.enable=True and sequence_type="XY4" removes most readout bias and idle-time decoherence at minimal extra cost. This is a sensible default for most workloads.

Analyze your error budget before escalating. Use the estimate_circuit_error function to check whether your circuit’s fidelity is high enough for ZNE or PEC to help. If estimated fidelity is below 70%, focus on reducing circuit depth instead of increasing resilience level.

Use Level 2 for energy estimation. When computing expectation values for variational algorithms (VQE, QAOA), Level 2 provides a meaningful accuracy improvement. Enable gate twirling with at least 32 randomizations to ensure the noise is well-depolarized before ZNE extrapolation.

Reserve Level 3 for final results. PEC at Level 3 gives the most accurate results but at 10x to 100x cost. Use it only after you have confirmed with Level 2 that your circuit is in the right regime, and when you need results accurate enough for publication or decision-making.

Always run inside a Session. Sessions prevent queue re-entry between jobs and ensure consistent hardware conditions. This is especially important when comparing different configurations, because hardware drift between jobs would confound the comparison.

Check backend calibration data. Gate error rates, T1, and T2 values vary across qubits and drift over time. Route your circuits to the best-performing qubits, and verify that the backend was recently calibrated before running expensive Level 2 or Level 3 jobs.
