Qiskit Patterns: A Structured Workflow for Quantum Algorithms

Learn IBM's 4-step Qiskit Patterns methodology and implement a complete VQE using SparsePauliOp, parametric ansatz, ISA transpilation, and RuntimeEstimator.

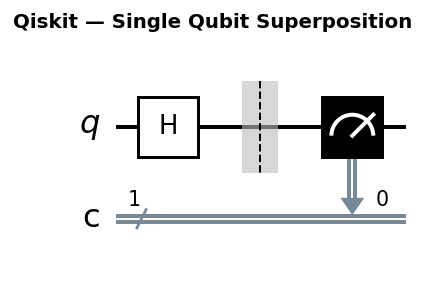

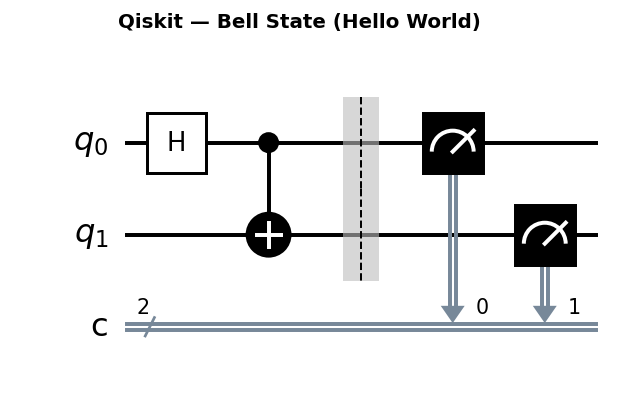

Circuit diagrams

What Are Qiskit Patterns?

As quantum algorithms grow more complex, ad-hoc code quickly becomes difficult to reason about, reproduce, or extend. IBM’s Qiskit Patterns framework addresses this by prescribing a four-step methodology that cleanly separates concerns in a quantum workflow:

- Map the classical problem to a quantum representation

- Optimize circuits for a specific target backend

- Execute on real hardware or a simulator

- Post-process raw results into a meaningful answer

This structure might seem like overhead for small experiments, but it pays dividends the moment you need to swap a backend, try a different ansatz, or scale to larger problem sizes. Each step has a well-defined interface, making the entire pipeline modular and testable.

Why This Structure Matters

Traditional quantum programming often blurs these boundaries. A developer might build a circuit, apply a noise model, run it, and then parse outputs all in one function. When something breaks, it is not obvious which step failed. Qiskit Patterns enforces separation so that:

- Circuit construction is decoupled from hardware-specific transpilation

- Primitive selection (Estimator vs Sampler) is an explicit choice, not an afterthought

- Classical post-processing logic is isolated and unit-testable

The pattern also maps cleanly onto IBM Quantum’s Runtime primitives, making it straightforward to move from local simulation to cloud execution without rewriting core logic.

Setting Up

Install the required packages:

pip install qiskit qiskit-aer qiskit-ibm-runtime scipy matplotlib

All code in this tutorial runs on Qiskit 1.x with the V2 primitive interface.

Step 1: Map the Problem to Quantum

The mapping step translates a classical problem into two quantum objects: a Hamiltonian (the operator whose expectation value you want to compute) and a parameterized ansatz circuit (the family of quantum states you search over).

Pauli Operator Theory and SparsePauliOp

Any Hermitian operator acting on n qubits can be decomposed as a linear combination of n-qubit Pauli strings. A Pauli string is a tensor product of single-qubit Pauli matrices chosen from {I, X, Y, Z}. For n qubits, there are 4^n possible Pauli strings, and together they form a complete basis for the space of 2^n x 2^n Hermitian matrices. This means you can write any observable H as:

H = sum_k c_k P_k

where each P_k is a Pauli string and each c_k is a real coefficient. For a typical physical Hamiltonian, most of the 4^n coefficients are zero. A two-qubit system has 4^2 = 16 possible Pauli strings, but the H2 molecular Hamiltonian only requires 5 non-zero terms. This is why SparsePauliOp is the right data structure: it stores only the non-zero terms, keeping memory and computation efficient.

Constructing the H2 Hamiltonian

The simplified electronic Hamiltonian for the hydrogen molecule (H2) at equilibrium bond length (0.735 angstroms) in the STO-3G basis, after qubit mapping via the Jordan-Wigner transformation, is:

from qiskit.quantum_info import SparsePauliOp

import numpy as np

# H2 Hamiltonian in the STO-3G basis at equilibrium bond length

# These coefficients come from quantum chemistry calculations

h2_hamiltonian = SparsePauliOp.from_list([

("II", -1.0523),

("ZI", 0.3979),

("IZ", -0.3979),

("ZZ", -0.0112),

("XX", 0.1809),

])

print("H2 Hamiltonian:")

print(h2_hamiltonian)

print(f"\nNumber of Pauli terms: {len(h2_hamiltonian)}")

print(f"Number of qubits: {h2_hamiltonian.num_qubits}")

The from_list constructor accepts tuples of (pauli_string, coefficient). Qiskit uses little-endian qubit ordering, so "ZI" means Z on qubit 1 and I on qubit 0.

To verify this Hamiltonian, convert it to a dense matrix and compute its eigenvalues:

# Convert to a 4x4 matrix and compute eigenvalues

h2_matrix = h2_hamiltonian.to_matrix()

eigenvalues = np.linalg.eigvalsh(h2_matrix)

print("Eigenvalues of H2 Hamiltonian:")

for i, ev in enumerate(eigenvalues):

print(f" E_{i} = {ev:.6f} Ha")

print(f"\nGround state energy: {eigenvalues[0]:.6f} Ha")

print(f"Reference value (FCI): -1.8573 Ha")

The ground state eigenvalue should be approximately -1.8573 Hartree, matching the Full Configuration Interaction (FCI) result for H2 in this basis. This exact diagonalization serves as the benchmark against which we compare our VQE result.

SparsePauliOp Manipulation

Before moving to the ansatz, it is worth exploring several SparsePauliOp operations that become essential in larger workflows.

# Simplify: combine duplicate Pauli terms

h_duplicate = SparsePauliOp.from_list([

("ZZ", 0.3),

("XX", 0.2),

("ZZ", 0.2), # duplicate ZZ term

])

h_simplified = h_duplicate.simplify()

print("Before simplify:", h_duplicate)

print("After simplify:", h_simplified)

# ZZ now has coefficient 0.5

# Chop: zero out terms with small coefficients

h_noisy = SparsePauliOp.from_list([

("ZZ", 0.5),

("XX", 1e-12), # numerically negligible

])

h_chopped = h_noisy.chop(threshold=1e-10)

print("After chop:", h_chopped)

# XX term is removed

# Arithmetic: add Hamiltonians and scale them

h1 = SparsePauliOp.from_list([("ZZ", 1.0), ("XI", 0.5)])

h2 = SparsePauliOp.from_list([("ZZ", -0.3), ("IY", 0.2)])

h_sum = (h1 + h2).simplify()

h_scaled = (0.5 * h1).simplify()

print("h1 + h2 =", h_sum)

print("0.5 * h1 =", h_scaled)

# Commutator: [H1, H2] = H1 @ H2 - H2 @ H1

commutator = (h1 @ h2 - h2 @ h1).simplify()

print("Commutator [h1, h2]:", commutator)

# Check if two operators commute (commutator is zero)

commutator_chopped = commutator.chop(threshold=1e-10)

operators_commute = len(commutator_chopped) == 0

print(f"h1 and h2 commute: {operators_commute}")

These operations are essential for constructing Hamiltonians programmatically, grouping Pauli terms that commute (to reduce the number of measurement circuits), and verifying algebraic properties of your operators.

Ansatz Design Principles

The ansatz defines the family of quantum states that the optimizer searches over. Choosing a good ansatz is one of the most important decisions in variational quantum algorithms. The central tradeoff is between expressibility (the ability to represent the true ground state) and trainability (the ability of a classical optimizer to find good parameters).

Hardware-efficient ansatze use gates native to the hardware (single-qubit rotations and nearest-neighbor entangling gates). They have low circuit depth, which reduces noise on real devices, but they may not be expressive enough to reach chemically accurate energies. They can also suffer from barren plateaus, where the cost function landscape becomes exponentially flat.

Chemically-motivated ansatze like UCCSD (Unitary Coupled Cluster Singles and Doubles) are designed to capture the physics of electron correlation. They are highly expressive for chemistry problems but require deep circuits that are impractical on current hardware.

Here are three ansatz options for a 2-qubit system:

Option A: Manual Ry-CNOT ansatz

from qiskit.circuit import QuantumCircuit, ParameterVector

# Simple hardware-efficient ansatz: Ry rotations + CNOT entangler

num_qubits = 2

depth = 2

theta = ParameterVector("theta", length=num_qubits * depth)

ansatz_manual = QuantumCircuit(num_qubits)

idx = 0

for d in range(depth):

for q in range(num_qubits):

ansatz_manual.ry(theta[idx], q)

idx += 1

if d < depth - 1:

ansatz_manual.cx(0, 1)

print("Manual Ry-CNOT ansatz:")

print(ansatz_manual.draw("text"))

print(f"Parameters: {ansatz_manual.num_parameters}")

print(f"Depth: {ansatz_manual.depth()}")

print(f"CX count: {ansatz_manual.count_ops().get('cx', 0)}")

Option B: TwoLocal for flexible layered ansatze

from qiskit.circuit.library import TwoLocal

# TwoLocal lets you specify rotation and entanglement gates

ansatz_twolocal = TwoLocal(

num_qubits=2,

rotation_blocks=["ry", "rz"], # two rotation gates per qubit per layer

entanglement_blocks="cx",

entanglement="linear",

reps=2, # number of repetition layers

insert_barriers=True,

)

print("TwoLocal ansatz:")

print(ansatz_twolocal.decompose().draw("text"))

print(f"Parameters: {ansatz_twolocal.num_parameters}")

print(f"Depth: {ansatz_twolocal.decompose().depth()}")

Option C: EfficientSU2

from qiskit.circuit.library import EfficientSU2

# EfficientSU2: Ry + Rz rotations with CX entanglement

# A common default for 2-qubit variational algorithms

ansatz_su2 = EfficientSU2(

num_qubits=2,

reps=2,

entanglement="linear",

)

print("EfficientSU2 ansatz:")

print(ansatz_su2.decompose().draw("text"))

print(f"Parameters: {ansatz_su2.num_parameters}")

print(f"Depth: {ansatz_su2.decompose().depth()}")

For a 2-qubit system, the manual Ry-CNOT ansatz with depth 2 has 4 parameters and 1 CX gate. TwoLocal with ["ry", "rz"] rotations and 2 reps has 12 parameters and 2 CX gates. EfficientSU2 with 2 reps has 12 parameters and 2 CX gates. More parameters and entangling gates generally increase expressibility, but they also increase circuit depth and the risk of barren plateaus.

For the rest of this tutorial, we use the simple Ry-CNOT ansatz, which is sufficient for the 2-qubit H2 problem:

# Use the manual ansatz for the VQE workflow

ansatz = ansatz_manual

hamiltonian = h2_hamiltonian

This completes the Map step. We have a quantum Hamiltonian (SparsePauliOp) and a parameterized ansatz (QuantumCircuit with ParameterVector) representing the original problem.

Step 2: Optimize Circuits

The Optimize step prepares the circuit for a specific backend. This includes transpilation (decomposing gates into the backend’s native gate set), layout selection (mapping logical qubits to physical qubits), and routing (inserting SWAP gates when the circuit requires connectivity that the hardware does not natively support). In Qiskit Runtime, circuits must be in ISA (Instruction Set Architecture) form before they can be passed to a primitive.

ISA Compliance Explained

Real quantum devices support only a limited set of native gates. A typical IBM superconducting processor supports: ECR (or CX) as the two-qubit gate, plus Rz, SX, and X as single-qubit gates. The device also has a fixed qubit connectivity graph: not every pair of qubits can directly interact. An ISA-compliant circuit uses only these native gates and respects the connectivity constraints.

When you write a circuit with abstract H and CNOT gates, these are not ISA-compliant. The transpiler decomposes H into Rz and SX sequences, maps CNOT to ECR (or CX, depending on the backend), and inserts SWAP gates where needed to satisfy connectivity. If you attempt to pass a non-ISA circuit to a Runtime primitive, it raises an error.

Transpilation with generate_preset_pass_manager

from qiskit.providers.fake_provider import GenericBackendV2

from qiskit.transpiler.preset_passmanagers import generate_preset_pass_manager

# Create a 5-qubit backend with linear connectivity

# This forces SWAP insertion for non-adjacent qubit interactions

backend = GenericBackendV2(num_qubits=5, seed=42)

pass_manager = generate_preset_pass_manager(

optimization_level=1,

backend=backend,

)

isa_ansatz = pass_manager.run(ansatz)

# The Hamiltonian must be mapped to physical qubit indices

# After transpilation, logical qubit 0 might be placed on physical qubit 3

# apply_layout transforms the Hamiltonian's qubit indices accordingly

isa_hamiltonian = hamiltonian.apply_layout(isa_ansatz.layout)

print(f"Original circuit depth: {ansatz.depth()}")

print(f"ISA circuit depth: {isa_ansatz.depth()}")

print(f"Original gate counts: {dict(ansatz.count_ops())}")

print(f"ISA gate counts: {dict(isa_ansatz.count_ops())}")

The apply_layout step is critical and easy to forget. Without it, the Hamiltonian’s qubit indices refer to the original abstract qubits, while the circuit operates on physical qubits. If logical qubit 0 was placed on physical qubit 3 during transpilation, measuring ZI without layout correction means you measure the wrong qubit, producing a completely incorrect energy.

Optimization Level Comparison

The optimization_level parameter (0, 1, 2, 3) controls how aggressively the transpiler optimizes your circuit:

- Level 0: No optimization. Maps gates to the native set and inserts SWAPs, but does not simplify the circuit.

- Level 1: Layout selection plus basic gate cancellation and commutation-based optimization. This is the default and a good starting point.

- Level 2: Noise-adaptive layout (places qubits on the least noisy physical qubits) plus additional optimization passes.

- Level 3: Heaviest optimization, including unitary synthesis. The transpiler may resynthesize blocks of gates to find shorter decompositions.

import time

print(f"{'Level':<8} {'Depth':<8} {'2Q Gates':<10} {'Time (ms)':<10}")

print("-" * 36)

for opt_level in range(4):

pm = generate_preset_pass_manager(

optimization_level=opt_level,

backend=backend,

)

t0 = time.time()

isa_circ = pm.run(ansatz)

elapsed = (time.time() - t0) * 1000

# Count two-qubit gates (ECR, CX, or CZ depending on backend)

ops = isa_circ.count_ops()

two_q_gates = sum(

count for gate, count in ops.items()

if gate in ("ecr", "cx", "cz")

)

print(f"{opt_level:<8} {isa_circ.depth():<8} {two_q_gates:<10} {elapsed:<10.1f}")

For a small 2-qubit circuit, the differences between optimization levels are modest. The benefit becomes dramatic for larger circuits (10+ qubits) where SWAP insertion and gate cancellation can reduce circuit depth by 2-5x. Level 3 is the slowest and can sometimes produce longer circuits than level 2 due to synthesis heuristics that do not always converge to the global optimum. Benchmark levels 1 and 2 before defaulting to level 3.

For the rest of this tutorial, we use optimization level 1:

# Finalize the ISA circuit and Hamiltonian for execution

pass_manager = generate_preset_pass_manager(optimization_level=1, backend=backend)

isa_ansatz = pass_manager.run(ansatz)

isa_hamiltonian = hamiltonian.apply_layout(isa_ansatz.layout)

Step 3: Execute

With ISA circuits and observables in hand, we can execute using a primitive. Qiskit provides two V2 primitives:

Estimator vs Sampler: Choosing the Right Primitive

EstimatorV2 computes expectation values <psi|O|psi> directly, returning a scalar for each observable. This is the right choice for VQE, QAOA energy evaluation, and any algorithm that needs an observable’s expectation value. You never see individual measurement outcomes; the primitive handles the statistics internally.

SamplerV2 returns quasi-probability distributions over measurement outcomes. This is the right choice for algorithms that need bit-string statistics: Grover’s search (looking for specific marked states), QFT-based algorithms (extracting phase information from measurement patterns), and QAOA state sampling (reading off the solution bitstring).

Here is a comparison using a Bell state:

from qiskit.primitives import StatevectorEstimator, StatevectorSampler

from qiskit.circuit import QuantumCircuit

from qiskit.quantum_info import SparsePauliOp

# Create a Bell state circuit

bell = QuantumCircuit(2)

bell.h(0)

bell.cx(0, 1)

# Estimator: compute <ZZ> expectation value

estimator = StatevectorEstimator()

zz_observable = SparsePauliOp.from_list([("ZZ", 1.0)])

est_pub = (bell, [zz_observable])

est_result = estimator.run([est_pub]).result()

print(f"<ZZ> for Bell state: {est_result[0].data.evs[0]:.4f}")

# Expected: 1.0 (|00> + |11> are both +1 eigenstates of ZZ)

# Sampler: get measurement probability distribution

sampler = StatevectorSampler()

bell_measured = bell.copy()

bell_measured.measure_all()

sam_result = sampler.run([bell_measured]).result()

counts = sam_result[0].data.meas.get_counts()

print(f"Sampler counts: {counts}")

# Expected: {'00': ~0.5, '11': ~0.5}

For VQE, we use the Estimator because we need the scalar energy value <psi|H|psi> at each optimizer step.

Running the VQE Optimization

from qiskit.primitives import StatevectorEstimator

import numpy as np

from scipy.optimize import minimize

# StatevectorEstimator runs locally without an IBM Quantum account

# For real hardware, use EstimatorV2 from qiskit_ibm_runtime

estimator = StatevectorEstimator()

def compute_energy(params):

"""Evaluate <psi(params)|H|psi(params)> using the Estimator primitive."""

pub = (isa_ansatz, [isa_hamiltonian], [params])

job = estimator.run([pub])

result = job.result()

return result[0].data.evs[0].real

# Initial random parameters

np.random.seed(42)

x0 = np.random.uniform(-np.pi, np.pi, len(theta))

print(f"Initial energy: {compute_energy(x0):.6f} Ha")

Now wrap this in a classical optimizer with logging:

history = []

def cost_with_logging(params):

"""Cost function that records energy at each step."""

energy = compute_energy(params)

history.append(energy)

return energy

result = minimize(

cost_with_logging,

x0,

method="COBYLA",

options={"maxiter": 200, "rhobeg": 0.5},

)

print(f"Converged: {result.success}")

print(f"VQE ground state energy: {result.fun:.6f} Ha")

print(f"Number of function evaluations: {result.nfev}")

Parallel Circuit Execution with PUBs

The PUB (Primitive Unified Bloc) format in Qiskit Runtime V2 enables efficient batched execution. A PUB is a tuple of (circuit, observables, parameter_values, precision). When you pass multiple PUBs to EstimatorV2.run(), they are evaluated in parallel within a single job submission. This avoids the overhead of multiple job submissions (queuing, initialization, result retrieval).

Here is how to evaluate 10 different parameter sets in a single call:

# Generate 10 random parameter sets

np.random.seed(0)

param_sets = [

np.random.uniform(-np.pi, np.pi, len(theta))

for _ in range(10)

]

# Create one PUB per parameter set

pubs = [

(isa_ansatz, [isa_hamiltonian], [params])

for params in param_sets

]

# Submit all 10 evaluations as a single job

job = estimator.run(pubs)

results = job.result()

print("Energies from batched execution:")

for i, res in enumerate(results):

energy = res.data.evs[0].real

print(f" Parameter set {i}: {energy:.6f} Ha")

On real hardware, batching 10 evaluations into one job can be 5-10x faster than submitting 10 individual jobs, because job submission overhead dominates for small circuits. This is especially important inside optimizer loops where hundreds of evaluations are needed.

Session-Based Hardware Execution

For real IBM Quantum hardware, you use a Session to keep the backend reserved across multiple job submissions. This eliminates queue wait time between optimizer steps.

# Hardware execution workflow (requires IBM Quantum account)

# Uncomment and fill in your credentials to run on real hardware

# from qiskit_ibm_runtime import QiskitRuntimeService, Session, EstimatorV2

# service = QiskitRuntimeService(channel="ibm_quantum")

# backend = service.least_busy(simulator=False, min_num_qubits=5)

# with Session(service=service, backend=backend) as session:

# estimator = EstimatorV2(session=session)

#

# def hardware_cost(params):

# pub = (isa_ansatz, [isa_hamiltonian], [params])

# job = estimator.run([pub])

# try:

# result = job.result()

# return result[0].data.evs[0].real

# except Exception as e:

# print(f"Job failed: {e}")

# return float('inf') # return high energy on failure

#

# result = minimize(

# hardware_cost,

# x0,

# method="COBYLA",

# options={"maxiter": 100, "rhobeg": 0.5},

# )

#

# print(f"Hardware VQE energy: {result.fun:.6f} Ha")

The Session context manager ensures that all jobs submitted within the block are routed to the same backend instance. When the session closes, the backend reservation is released. The try/except block handles transient hardware errors gracefully: returning a high energy value tells the optimizer that this parameter set is bad, allowing it to recover on subsequent steps.

Error Mitigation in the Execute Step

Qiskit Runtime’s EstimatorV2 supports built-in error mitigation through the resilience_level parameter:

- resilience_level=0: No mitigation. Raw expectation values from shot statistics.

- resilience_level=1: M3 (Matrix-free Measurement Mitigation) readout error correction. Adds approximately 2x shot overhead.

- resilience_level=2: Zero Noise Extrapolation (ZNE) for gate noise. Adds approximately 5-10x overhead because the circuit is run at multiple artificially amplified noise levels and extrapolated to zero noise.

# Error mitigation example (requires qiskit-ibm-runtime)

# from qiskit_ibm_runtime import EstimatorV2, EstimatorOptions

# # No mitigation

# options_raw = EstimatorOptions(resilience_level=0)

# estimator_raw = EstimatorV2(session=session, options=options_raw)

# # Readout mitigation only

# options_m3 = EstimatorOptions(resilience_level=1)

# estimator_m3 = EstimatorV2(session=session, options=options_m3)

# # Full ZNE + readout mitigation

# options_zne = EstimatorOptions(resilience_level=2)

# estimator_zne = EstimatorV2(session=session, options=options_zne)

# # Compare energies at the same parameters

# pub = (isa_ansatz, [isa_hamiltonian], [optimal_params])

#

# energy_raw = estimator_raw.run([pub]).result()[0].data.evs[0].real

# energy_m3 = estimator_m3.run([pub]).result()[0].data.evs[0].real

# energy_zne = estimator_zne.run([pub]).result()[0].data.evs[0].real

#

# print(f"Raw energy: {energy_raw:.6f} Ha")

# print(f"M3 mitigated: {energy_m3:.6f} Ha")

# print(f"ZNE mitigated: {energy_zne:.6f} Ha")

# print(f"Exact: {exact_ground_state:.6f} Ha")

For VQE on noisy hardware, resilience_level=1 is the usual starting point. It corrects the most common error source (measurement bit-flip errors) at a modest cost. Level 2 is reserved for cases where gate noise dominates and you need the highest accuracy, because the additional shot overhead translates directly into longer execution time and higher cost.

Step 4: Post-process

Post-processing extracts the final answer from the raw optimization result and interprets it in the problem’s original language.

Comparing VQE to Exact Diagonalization

optimal_params = result.x

ground_state_energy = result.fun

# Exact eigenvalues from the Hamiltonian matrix

exact_eigenvalues = np.linalg.eigvalsh(hamiltonian.to_matrix())

exact_ground_state = exact_eigenvalues[0]

print(f"VQE energy: {ground_state_energy:.6f} Ha")

print(f"Exact ground state: {exact_ground_state:.6f} Ha")

print(f"Error: {abs(ground_state_energy - exact_ground_state):.6f} Ha")

print(f"Chemical accuracy (1.6 mHa): "

f"{'ACHIEVED' if abs(ground_state_energy - exact_ground_state) < 0.0016 else 'NOT achieved'}")

# Reconstruct the optimal circuit for inspection

optimal_circuit = isa_ansatz.assign_parameters(optimal_params)

print("\nOptimal circuit:")

print(optimal_circuit.draw("text"))

Chemical accuracy (1.6 milliHartree, or about 1 kcal/mol) is the standard threshold for quantum chemistry. If the VQE energy is within this tolerance of the exact answer, the result is useful for predicting chemical properties.

Energy Convergence Analysis

Visualizing the optimization trajectory reveals how the optimizer explores the energy landscape:

import matplotlib.pyplot as plt

fig, axes = plt.subplots(1, 2, figsize=(12, 5))

# Energy vs iteration

axes[0].plot(history, linewidth=1.5)

axes[0].axhline(y=exact_ground_state, color='r', linestyle='--', label='Exact ground state')

axes[0].set_xlabel("Optimizer step")

axes[0].set_ylabel("Energy (Ha)")

axes[0].set_title("VQE Energy Convergence")

axes[0].legend()

# Error vs iteration (log scale)

errors = [abs(e - exact_ground_state) for e in history]

axes[1].semilogy(errors, linewidth=1.5)

axes[1].axhline(y=0.0016, color='orange', linestyle='--', label='Chemical accuracy')

axes[1].set_xlabel("Optimizer step")

axes[1].set_ylabel("Absolute error (Ha)")

axes[1].set_title("Convergence to Exact Ground State")

axes[1].legend()

plt.tight_layout()

plt.savefig("vqe_convergence.png", dpi=150)

plt.show()

# Compute convergence metrics

for threshold in [0.01, 0.001, 0.0001]:

steps_to_reach = next(

(i for i, e in enumerate(errors) if e < threshold),

None

)

status = f"step {steps_to_reach}" if steps_to_reach is not None else "not reached"

print(f"Steps to reach {threshold:.4f} Ha accuracy: {status}")

Classical Optimizer Comparison

Different classical optimizers have different strengths. The choice of optimizer significantly affects convergence speed and reliability.

from scipy.optimize import minimize

optimizers = {

"COBYLA": {"method": "COBYLA", "options": {"maxiter": 200, "rhobeg": 0.5}},

"Nelder-Mead": {"method": "Nelder-Mead", "options": {"maxiter": 200}},

"SLSQP": {"method": "SLSQP", "options": {"maxiter": 200}},

}

np.random.seed(42)

x0 = np.random.uniform(-np.pi, np.pi, len(theta))

print(f"{'Optimizer':<15} {'Energy':<12} {'Evals':<8} {'Converged':<10} {'Error':<10}")

print("-" * 55)

for name, kwargs in optimizers.items():

eval_count = [0]

def cost(params, counter=eval_count):

counter[0] += 1

return compute_energy(params)

res = minimize(cost, x0, **kwargs)

error = abs(res.fun - exact_ground_state)

print(f"{name:<15} {res.fun:<12.6f} {eval_count[0]:<8} {str(res.success):<10} {error:<10.6f}")

COBYLA is gradient-free and handles noisy function evaluations well, making it the default choice for hardware execution. SLSQP uses finite-difference gradients, which can converge faster on noiseless simulators but may struggle with shot noise on real devices. Nelder-Mead is another gradient-free option that can be effective for low-dimensional parameter spaces.

For real hardware, SPSA (Simultaneous Perturbation Stochastic Approximation) is often preferred. SPSA estimates the gradient using only 2 function evaluations per step, regardless of the number of parameters. This makes it efficient in high-dimensional parameter spaces and robust to stochastic noise. Qiskit provides SPSA through qiskit_algorithms.optimizers.SPSA.

Scaling: QAOA with Qiskit Patterns

To demonstrate that Qiskit Patterns generalizes beyond VQE, here is a complete QAOA (Quantum Approximate Optimization Algorithm) example for the MaxCut problem on a 3-node graph.

Step 1 (Map): MaxCut Hamiltonian

The MaxCut objective for a graph with edges {(0,1), (1,2), (0,2)} is:

C = (1/2) * sum_{(i,j) in edges} (1 - Z_i Z_j)

Each edge contributes a term that evaluates to +1 when the two qubits are in different states (contributing to the cut) and 0 when they are in the same state.

from qiskit.quantum_info import SparsePauliOp

from qiskit.circuit import QuantumCircuit, Parameter

import numpy as np

# 3-node triangle graph: edges (0,1), (1,2), (0,2)

edges = [(0, 1), (1, 2), (0, 2)]

num_qubits = 3

# Build the MaxCut cost Hamiltonian

pauli_terms = []

for i, j in edges:

# (1 - Z_i Z_j) / 2 per edge

# The constant 1/2 per edge shifts energy; we track the ZZ terms

z_string = ["I"] * num_qubits

z_string[i] = "Z"

z_string[j] = "Z"

pauli_terms.append(("".join(z_string), -0.5))

# Add the constant offset: len(edges) / 2

pauli_terms.append(("I" * num_qubits, len(edges) / 2))

cost_hamiltonian = SparsePauliOp.from_list(pauli_terms).simplify()

print("MaxCut Hamiltonian:")

print(cost_hamiltonian)

# Verify: eigenvalues give the cut values for all 2^3 = 8 bitstrings

eigenvalues = np.linalg.eigvalsh(cost_hamiltonian.to_matrix())

print(f"\nAll cut values: {sorted(set(np.round(eigenvalues, 4)))}")

print(f"Maximum cut: {max(eigenvalues):.1f} edges")

Step 1 (Map): QAOA Circuit

The QAOA circuit alternates between the cost unitary exp(-i * gamma * C) and the mixer unitary exp(-i * beta * sum(X_i)):

gamma = Parameter("gamma")

beta = Parameter("beta")

# QAOA circuit with p=1 layer

qaoa_circuit = QuantumCircuit(num_qubits)

# Initial superposition

for q in range(num_qubits):

qaoa_circuit.h(q)

# Cost unitary: exp(-i * gamma * C)

# For ZZ terms, this decomposes into CNOT-Rz-CNOT

for i, j in edges:

qaoa_circuit.cx(i, j)

qaoa_circuit.rz(gamma, j)

qaoa_circuit.cx(i, j)

# Mixer unitary: exp(-i * beta * sum(X_i))

for q in range(num_qubits):

qaoa_circuit.rx(2 * beta, q)

print("QAOA circuit (p=1):")

print(qaoa_circuit.draw("text"))

Step 2 (Optimize): Transpile

from qiskit.providers.fake_provider import GenericBackendV2

from qiskit.transpiler.preset_passmanagers import generate_preset_pass_manager

qaoa_backend = GenericBackendV2(num_qubits=5, seed=42)

qaoa_pm = generate_preset_pass_manager(optimization_level=1, backend=qaoa_backend)

isa_qaoa = qaoa_pm.run(qaoa_circuit)

isa_cost_ham = cost_hamiltonian.apply_layout(isa_qaoa.layout)

print(f"QAOA ISA circuit depth: {isa_qaoa.depth()}")

print(f"QAOA ISA gate counts: {dict(isa_qaoa.count_ops())}")

Step 3 (Execute): Optimize QAOA Parameters

For QAOA, we can use either the Estimator (to maximize the expected cut value) or the Sampler (to find the most probable bitstring). Here we use the Estimator to find optimal gamma and beta, then the Sampler to read out the solution:

from qiskit.primitives import StatevectorEstimator, StatevectorSampler

estimator = StatevectorEstimator()

def qaoa_cost(params):

"""Negative expected cut value (we minimize, so negate the MaxCut objective)."""

gamma_val, beta_val = params

pub = (isa_qaoa, [isa_cost_ham], [[gamma_val, beta_val]])

result = estimator.run([pub]).result()

return -result[0].data.evs[0].real # negate because we minimize

# Grid search for initial guess, then refine with optimizer

best_cost = float('inf')

best_params = None

for g in np.linspace(0, 2 * np.pi, 12):

for b in np.linspace(0, np.pi, 12):

c = qaoa_cost([g, b])

if c < best_cost:

best_cost = c

best_params = [g, b]

# Refine with COBYLA

qaoa_result = minimize(

qaoa_cost,

best_params,

method="COBYLA",

options={"maxiter": 100},

)

print(f"Optimal gamma: {qaoa_result.x[0]:.4f}")

print(f"Optimal beta: {qaoa_result.x[1]:.4f}")

print(f"Expected cut value: {-qaoa_result.fun:.4f}")

Step 4 (Post-process): Extract the Solution Bitstring

sampler = StatevectorSampler()

# Build the measurement circuit with optimal parameters

qaoa_meas = qaoa_circuit.copy()

qaoa_meas.measure_all()

# Transpile the measurement circuit

isa_qaoa_meas = qaoa_pm.run(qaoa_meas)

# Run the sampler

sam_result = sampler.run(

[(isa_qaoa_meas, qaoa_result.x)]

).result()

counts = sam_result[0].data.meas.get_counts()

# Sort by probability

sorted_counts = sorted(counts.items(), key=lambda x: x[1], reverse=True)

print("\nMeasurement results (sorted by probability):")

for bitstring, count in sorted_counts:

# Compute cut value for this bitstring

bits = [int(b) for b in bitstring]

cut_value = sum(1 for i, j in edges if bits[i] != bits[j])

print(f" {bitstring}: probability={count/sum(counts.values()):.3f}, cut={cut_value}")

# Best solution

best_bitstring = sorted_counts[0][0]

best_bits = [int(b) for b in best_bitstring]

best_cut = sum(1 for i, j in edges if best_bits[i] != best_bits[j])

print(f"\nBest solution: {best_bitstring} with cut value {best_cut}")

For the 3-node triangle graph, the maximum cut is 2 (you cannot cut all 3 edges of a triangle). The optimal bitstrings are 001, 010, 100, 011, 101, and 110, each of which cuts exactly 2 edges.

Scaling to Complex Workflows

The real power of Qiskit Patterns emerges when you chain multiple primitives or repeat the pattern across a parameter sweep. For example, a potential energy surface scan wraps the entire workflow in a loop over bond lengths:

# Pseudocode for a PES scan

# build_hamiltonian and run_vqe are your problem-specific implementations

def build_hamiltonian(r): ... # returns SparsePauliOp for bond length r

def run_vqe(h_isa, ansatz): ... # returns ground state energy

bond_lengths = np.linspace(0.5, 3.0, 20)

energies = []

for r in bond_lengths:

# Step 1: Rebuild Hamiltonian at this geometry

h = build_hamiltonian(r)

# Step 2: Re-apply layout (transpilation done once outside the loop)

h_isa = h.apply_layout(isa_ansatz.layout)

# Step 3: Run VQE

e = run_vqe(h_isa, isa_ansatz)

# Step 4: Record

energies.append(e)

Each iteration follows the same four-step structure. The Optimize step only needs to run once if the ansatz topology does not change, and only the Hamiltonian coefficients are updated at each bond length.

Common Mistakes and How to Avoid Them

1. Forgetting apply_layout on the Hamiltonian

This is the most common and most confusing mistake. After transpilation, logical qubit 0 might be placed on physical qubit 3. If you pass the original Hamiltonian (which references logical qubit 0), the Estimator measures the wrong physical qubit. The resulting energy is not just slightly wrong; it can be completely meaningless.

Always call hamiltonian.apply_layout(isa_circuit.layout) after transpilation, and verify that the Hamiltonian’s qubit count matches the ISA circuit’s qubit count.

2. Passing a Fully-Bound Circuit to the Estimator

The Estimator expects a parametrized circuit so it can bind different parameter values efficiently. If you call circuit.assign_parameters(values) before passing the circuit to the Estimator, you lose the ability to sweep parameters and must create a new job for each evaluation. Keep parameters symbolic and pass the values through the PUB tuple.

# Wrong: pre-assigning parameters

bound_circuit = isa_ansatz.assign_parameters(x0)

# pub = (bound_circuit, [isa_hamiltonian]) # No parameter slot!

# Correct: keep parameters symbolic, pass values in the PUB

pub = (isa_ansatz, [isa_hamiltonian], [x0])

3. Using optimization_level=3 by Default

Level 3 applies unitary synthesis, which can be very slow for large circuits. Worse, the synthesis heuristics do not always find the optimal decomposition, so level 3 can sometimes produce circuits with more gates than level 2. Always benchmark levels 1 and 2 first. Reserve level 3 for cases where you have confirmed that extra synthesis effort reduces circuit depth.

4. Re-running Map and Optimize Inside the Optimizer Loop

The Hamiltonian construction and transpilation should happen once, outside the optimizer loop. If you re-transpile inside the cost_function, you waste time and risk getting a different physical qubit layout on each call. Different layouts mean the Hamiltonian needs different apply_layout transformations, potentially introducing bugs.

# Wrong: transpiling inside the cost function

def bad_cost(params):

isa = pass_manager.run(ansatz) # re-transpiles every call

h_isa = hamiltonian.apply_layout(isa.layout)

pub = (isa, [h_isa], [params])

return estimator.run([pub]).result()[0].data.evs[0].real

# Correct: transpile once, reuse

isa_ansatz = pass_manager.run(ansatz)

isa_hamiltonian = hamiltonian.apply_layout(isa_ansatz.layout)

def good_cost(params):

pub = (isa_ansatz, [isa_hamiltonian], [params])

return estimator.run([pub]).result()[0].data.evs[0].real

5. Not Handling Optimizer Convergence Failures

COBYLA and other gradient-free optimizers do not always converge. The result.success flag is False when the optimizer exits due to reaching maxiter without satisfying the convergence criteria. Always check this flag before trusting result.fun. For better reliability, run the optimization from multiple random initial points and take the lowest energy:

best_energy = float('inf')

best_result = None

for trial in range(5):

np.random.seed(trial)

x0_trial = np.random.uniform(-np.pi, np.pi, len(theta))

res = minimize(

compute_energy,

x0_trial,

method="COBYLA",

options={"maxiter": 300, "rhobeg": 0.5},

)

if res.fun < best_energy:

best_energy = res.fun

best_result = res

print(f"Best energy across 5 trials: {best_result.fun:.6f} Ha")

print(f"Converged: {best_result.success}")

This multi-start strategy is especially important for ansatze with many parameters, where the energy landscape has many local minima.

Was this tutorial helpful?