Measuring Quantum Volume with Qiskit

Implement the quantum volume protocol in Qiskit to compare hardware quality across devices. Understand how QV relates to circuit depth, qubit count, and gate fidelity.

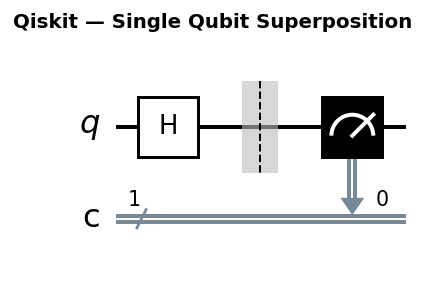

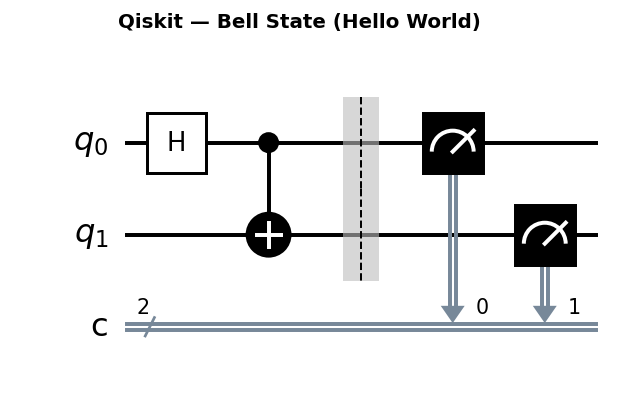

Circuit diagrams

Raw qubit count is not a useful measure of quantum computer quality. A 1,000-qubit device with 10% gate error rates performs worse than a 100-qubit device with 0.1% error rates, because nearly every circuit on the noisy device produces garbage outputs. The number that matters is how many qubits can work together reliably on circuits of meaningful depth.
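
The comparison above can be made concrete with a deliberately crude model: assume every gate fails independently with the same probability, so a circuit only succeeds if all of its gates do. The function name and the 50-gate circuit size are illustrative assumptions, not part of the QV protocol.

```python
def circuit_success_estimate(gate_error, num_gates):
    """Crude estimate: probability that every gate in the circuit succeeds,
    assuming independent, identical gate errors."""
    return (1.0 - gate_error) ** num_gates


for label, err in [("10% gate error", 0.10), ("0.1% gate error", 0.001)]:
    estimate = circuit_success_estimate(err, num_gates=50)
    print(f"{label}: ~{estimate:.3f} chance that a 50-gate circuit runs error-free")
```

Under this model the noisy device almost never completes even a modest circuit without an error, while the low-error device does so most of the time, regardless of how many qubits each one has.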

IBM introduced Quantum Volume (QV) in 2018 to capture exactly this. The protocol looks for the largest square random circuit (m qubits wide, m layers deep) that a device can execute while producing a “heavy output” more than 2/3 of the time, and reports QV = 2^m for that m. The square constraint is deliberate: it tests whether the device can sustain coherence and gate fidelity across both the width dimension (many qubits participating) and the depth dimension (many sequential gate layers) simultaneously. A device that handles m qubits in shallow circuits but fails once the depth also reaches m reveals that its qubits decohere too quickly or its gates accumulate too much error over sequential operations.

The resulting number, QV = 2^m, grows exponentially with the number of reliable qubits. A device with QV = 64 can reliably execute 6-qubit, 6-layer random circuits. A device with QV = 1024 handles 10-qubit, 10-layer circuits. Because of this exponential scaling, each doubling of QV corresponds to one more qubit and one more layer of reliable computation.
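
As a small illustration of the relation QV = 2^m, the helper below converts a reported QV back into the size of the square circuit it certifies. The function name is hypothetical.

```python
def qv_to_width(qv):
    """Return m such that qv == 2**m, i.e. the width and depth of the square circuit."""
    m = qv.bit_length() - 1
    if qv < 1 or 2 ** m != qv:
        raise ValueError("Quantum Volume is always a power of two")
    return m


for qv in (64, 1024):
    m = qv_to_width(qv)
    print(f"QV = {qv}: {m} qubits x {m} layers")
```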

What Quantum Volume Measures

A device with quantum volume QV = 2^m can successfully execute m x m random circuits (m qubits wide, m layers deep) with heavy output probability greater than 2/3.

The protocol:

- Generate random m x m SU(4) circuits (ideal two-qubit unitaries composed from random single-qubit rotations and CNOT pairs).

- Classically simulate each circuit to find the “heavy” output bitstrings (those with above-median probability).

- Run the same circuits on hardware and check what fraction of outputs are heavy.

- If the heavy output probability exceeds 2/3 with statistical confidence, the device achieves QV = 2^m.

Each step involves concepts that deserve closer examination.

Why Random SU(4) Circuits?

SU(4) is the group of 4 x 4 unitary matrices with determinant 1. Up to an unobservable global phase, it contains every possible two-qubit unitary operation. When the QV protocol generates a random circuit, it samples two-qubit gates uniformly (according to the Haar measure) from SU(4). Each gate is then decomposed into the device’s native gate set (typically single-qubit rotations plus CNOT or CZ gates) during transpilation.

Using random SU(4) gates is a deliberate design choice. Because the circuits are random, QV measures the device’s average-case performance across all possible gate sequences rather than its performance on any specific algorithm. This makes QV hardware-agnostic: it does not favor devices whose native gate set happens to match a particular algorithm’s requirements. A device that excels at QV genuinely handles arbitrary quantum computations well at that scale.
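
To make “sampling uniformly from SU(4)” concrete, here is a minimal NumPy sketch. It assumes the standard QR-based recipe for Haar-random unitaries (QR-decompose a complex Gaussian matrix and fix the column phases) and then rescales the determinant to 1. The function name is illustrative, and this is not necessarily how Qiskit generates its gates internally.

```python
import numpy as np


def random_su4(rng):
    """Draw a Haar-random 4x4 unitary and rescale it to determinant 1."""
    # Complex Gaussian matrix
    z = (rng.normal(size=(4, 4)) + 1j * rng.normal(size=(4, 4))) / np.sqrt(2)
    q, r = np.linalg.qr(z)
    # Fix the phase ambiguity of the QR decomposition so q is Haar-distributed
    d = np.diagonal(r)
    q = q * (d / np.abs(d))
    # Remove the global phase so that det(q) == 1
    return q / np.linalg.det(q) ** 0.25


rng = np.random.default_rng(7)
u = random_su4(rng)
print("unitary:", np.allclose(u.conj().T @ u, np.eye(4)))
print("determinant:", np.round(np.linalg.det(u), 6))
```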

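Each of the m layers in an m-qubit QV circuit first applies a random permutation to the qubits and then applies independent random SU(4) gates to neighboring pairs in the permuted order (when m is odd, one qubit sits out the layer). The result is a circuit that is both wide and deep enough to stress-test the device across multiple dimensions of quality.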
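
The layer structure can be sketched without any quantum library. The snippet below only decides which qubit pairs receive an SU(4) gate in each layer, assuming the usual construction of a random permutation followed by pairing of neighbors. The function names are hypothetical.

```python
import random


def qv_layer_pairs(m, rng):
    """Return the disjoint qubit pairs that receive an SU(4) gate in one layer."""
    order = list(range(m))
    rng.shuffle(order)  # random permutation of the qubits
    # Pair neighbors in the permuted order; with odd m the last qubit idles
    return [(order[i], order[i + 1]) for i in range(0, m - 1, 2)]


def qv_circuit_layout(m, seed=None):
    """Return m layers of qubit pairs for an m-qubit QV circuit."""
    rng = random.Random(seed)
    return [qv_layer_pairs(m, rng) for _ in range(m)]


layout = qv_circuit_layout(5, seed=1)
for depth, pairs in enumerate(layout):
    print(f"layer {depth}: SU(4) gates on pairs {pairs}")
```

Each layer holds floor(m/2) two-qubit gates, so an m-qubit QV circuit contains about m²/2 random SU(4) gates before transpilation.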

What Is a “Heavy Output”?

For a given random circuit, the QV protocol uses a classical simulator to compute the ideal output probability distribution over all 2^m possible bitstrings. It then sorts these probabilities and splits them at the median. The bitstrings with above-median probability are called “heavy outputs,” and they make up roughly half of all possible outputs.

The intuition is straightforward: if the quantum device is working well, it should mostly produce the same high-probability outputs that the ideal simulation predicts. A perfectly noiseless device running large random circuits would produce heavy outputs with probability approaching (1 + ln 2)/2, which is about 0.85. A completely broken device (one that outputs uniformly random bitstrings) would produce heavy outputs with probability 0.5, since half of all bitstrings are heavy by definition.

The heavy output probability (HOP) therefore sits on a scale from 0.5 (device is producing random noise) to roughly 0.85 (device is performing ideally). Individual circuits, especially at small m, can land above or below that asymptotic value, but it is the expected average for wide random circuits.
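
The definitions above translate directly into code. The following sketch computes the heavy set from an ideal distribution and the HOP from measured counts, using a made-up 2-qubit distribution and made-up counts. It then estimates the ideal HOP numerically, assuming the output probabilities of a large random circuit follow an exponential (Porter-Thomas) distribution. All names and numbers are illustrative.

```python
import math
import random


def heavy_set(ideal_probs):
    """Outcomes whose ideal probability lies above the median (even number of outcomes)."""
    values = sorted(ideal_probs.values())
    half = len(values) // 2
    median = (values[half - 1] + values[half]) / 2
    return {outcome for outcome, p in ideal_probs.items() if p > median}


def heavy_output_probability(counts, heavy):
    """Fraction of measured shots that landed on a heavy outcome."""
    shots = sum(counts.values())
    return sum(n for outcome, n in counts.items() if outcome in heavy) / shots


# Toy 2-qubit example with made-up numbers
ideal = {"00": 0.45, "01": 0.05, "10": 0.35, "11": 0.15}
heavy = heavy_set(ideal)
counts = {"00": 410, "01": 90, "10": 330, "11": 170}
hop = heavy_output_probability(counts, heavy)
print(f"heavy outputs: {sorted(heavy)}, measured HOP: {hop:.2f}")

# Ideal HOP for a wide random circuit, assuming exponentially distributed probabilities
rng = random.Random(0)
weights = [rng.expovariate(1.0) for _ in range(2 ** 14)]
total = sum(weights)
probs = {i: w / total for i, w in enumerate(weights)}
simulated_ideal_hop = sum(probs[i] for i in heavy_set(probs))
print(f"simulated ideal HOP: {simulated_ideal_hop:.3f}")
print(f"(1 + ln 2) / 2     : {(1 + math.log(2)) / 2:.3f}")
```

A uniformly random sampler would instead hit the heavy set half of the time, which is where the 0.5 floor comes from.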

Why the 2/3 Threshold?

The threshold of 2/3 (approximately 0.667) sits between the 0.5 of a device producing pure noise and the roughly 0.85 of an ideal device. A device therefore has to preserve a substantial fraction of the ideal signal to pass, but it does not have to be perfect. The QV protocol also does not accept a raw measured HOP that merely exceeds 2/3. Instead, it requires statistical confidence that the true HOP exceeds 2/3, using a one-sided confidence bound.

In practice, the mean measured HOP has to clear 2/3 by a margin that depends on how many circuits were run. With 100 random circuits and a two-standard-error margin, that means a mean HOP of roughly 0.75 or higher. The margin shrinks as more circuits are added.

The Statistical Confidence Test

A single QV experiment generates a set of random circuits, runs each on hardware, and measures the HOP for each circuit. The protocol then computes a one-sided confidence bound on the mean HOP across all circuits. In the original protocol, and in Qiskit Experiments, the mean HOP minus two standard errors (a confidence level of about 97.7%) must exceed 2/3, and at least 100 circuits must be run, for the test to pass.

This statistical machinery protects against a real risk: with a small number of shots or circuits, random fluctuations can push the measured HOP above the threshold even when the device’s true performance falls below it. The confidence interval requirement ensures that passing the QV test reflects genuine device capability, not statistical luck.

More circuits produce a tighter confidence bound, and more shots per circuit reduce the sampling noise in each circuit’s HOP. Together they make the test both harder to pass by luck and easier to pass when the device genuinely qualifies.
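
One simple way to implement such a check is a normal-approximation bound rather than a resampling-based interval: treat the mean HOP as a binomial proportion over the circuits and require it to clear 2/3 by z standard errors. The sketch below assumes z = 2 and a minimum of 100 circuits. The function name and defaults are illustrative.

```python
import math


def qv_passes(mean_hop, n_circuits, z=2.0, min_circuits=100):
    """Return (passed, lower_bound) for a one-sided z-sigma test against 2/3."""
    sigma = math.sqrt(mean_hop * (1.0 - mean_hop) / n_circuits)
    lower_bound = mean_hop - z * sigma
    passed = lower_bound > 2 / 3 and n_circuits >= min_circuits
    return passed, lower_bound


for mean_hop, n in [(0.80, 100), (0.70, 100), (0.70, 1000)]:
    passed, lower = qv_passes(mean_hop, n)
    print(f"mean HOP={mean_hop:.2f}, circuits={n}: lower bound={lower:.3f}, passed={passed}")
```

The second and third cases show the effect of the number of circuits: the same mean HOP of 0.70 fails with 100 circuits but passes with 1,000, because the bound tightens.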

Installation

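```shell
pip install qiskit qiskit-aer qiskit-experiments qiskit-ibm-runtime
```

Note: All code in this tutorial requires qiskit-experiments, and the noisy fake backends used below come from qiskit-ibm-runtime. Install both before running any blocks.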

Running the Quantum Volume Experiment

```python
from qiskit_aer import AerSimulator
from qiskit_experiments.library import QuantumVolume
from qiskit_ibm_runtime.fake_provider import FakeSherbrooke

# Test QV for m=4 qubits
qubits = [0, 1, 2, 3]
qv_exp = QuantumVolume(qubits, seed=42)

# Use a noisy fake backend for a realistic result
noisy_sim = AerSimulator.from_backend(FakeSherbrooke())

job = qv_exp.run(noisy_sim, shots=1024)
result = job.block_for_results()

# "quantum_volume" is 2**m if the test passed and 1 otherwise
qv_result = result.analysis_results("quantum_volume")
print(f"Quantum Volume: {qv_result.value}")

# "mean_HOP" holds the mean heavy output probability with its standard error
hop = result.analysis_results("mean_HOP")
print(f"Heavy Output Probability: {hop.value.nominal_value:.4f}")
```

A heavy output probability above 2/3 (0.6667) with sufficient statistical confidence means the device achieves the tested QV level.

Choosing Which Qubits to Test

QV is always measured on a specific subset of qubits. For a device with dozens or hundreds of qubits, the choice of which m qubits to test directly affects the result. You typically want to select the m best-connected, lowest-error qubits on the device.

IBM backends expose calibration data that you can use to make this selection. The key metrics to look at are CNOT (or ECR) error rates between qubit pairs and single-qubit readout error rates.

```python
from qiskit_ibm_runtime.fake_provider import FakeSherbrooke

backend = FakeSherbrooke()
props = backend.properties()

# Gather two-qubit gate error rates for each pair
gate_errors = {}
for gate in props.gates:
    if gate.gate in ("cx", "ecr") and len(gate.qubits) == 2:
        # Look the error up by name instead of relying on parameter order
        error = next(p.value for p in gate.parameters if p.name == "gate_error")
        gate_errors[tuple(gate.qubits)] = error

# Sort pairs by error rate (lowest first)
sorted_pairs = sorted(gate_errors.items(), key=lambda x: x[1])

print("Top 10 lowest-error qubit pairs:")
for pair, err in sorted_pairs[:10]:
    print(f"  Qubits {pair}: error = {err:.5f}")

# Build a candidate qubit set from the best pairs:
# start with the best pair and greedily add connected low-error qubits
target_size = 6  # target 6 qubits for QV=64
best_qubits = set(sorted_pairs[0][0])
while len(best_qubits) < target_size:
    for pair, err in sorted_pairs:
        # Lowest-error pair that links exactly one new qubit to the current set
        if len(set(pair) & best_qubits) == 1:
            best_qubits.update(pair)
            break
    else:
        break  # no connected pair left

qubits = sorted(best_qubits)
print(f"\nSelected qubits for QV test: {qubits}")
```

This greedy approach is a reasonable starting point. For production QV measurements, IBM’s own tooling performs more sophisticated qubit selection that accounts for the full connectivity graph, crosstalk between neighboring qubits, and the specific transpilation routing that the compiler will use.

Keep in mind that calibration data changes over time. Devices are recalibrated regularly (often daily), and a qubit subset that performs well today may perform differently tomorrow. For the most reliable QV measurement, pull fresh calibration data immediately before running the experiment.

Interpreting the Result

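```python
# Requires: qiskit_experiments (result is from the QV experiment above)
qv_result = result.analysis_results("quantum_volume")
hop = result.analysis_results("mean_HOP")

# Check if the QV test passed
passed = qv_result.extra["success"]
print(f"QV test passed: {passed}")

# View the statistical margin: mean HOP, its standard error, and the confidence level
print(f"Mean HOP: {hop.value.nominal_value:.4f} +/- {hop.value.std_dev:.4f}")
print(f"Confidence that HOP > 2/3: {qv_result.extra['confidence']:.3f}")
```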

The test requires statistical confidence: the lower end of a one-sided confidence bound on the mean HOP (two standard errors below the mean) must exceed 2/3. This protects against lucky results from a finite number of circuits and shots.

Reading the Numbers

The raw HOP value tells you how the device performed, but the pass/fail decision depends on the confidence bound. Here is how to interpret different HOP ranges:

HOP around 0.52 to 0.55 (just above random chance). The device is struggling heavily at this QV level. An ideal device would produce HOP near 0.85, and a completely random device would produce 0.5. A measured HOP barely above 0.5 means the device’s noise is drowning out nearly all of the circuit’s quantum signal. This QV level is far out of reach for this qubit subset. Try reducing m by 2 or more.

HOP around 0.60 to 0.65 (below the threshold). The device retains some quantum signal, but the mean HOP is under 2/3, so the test fails no matter how many shots or circuits you add. In this situation, you have several options: select a different qubit subset with lower error rates, run the experiment shortly after the device completes a calibration cycle when gate fidelities tend to be highest, or reduce m by 1.

HOP around 0.66 to 0.70 (at or just above the threshold). This is a borderline result. The mean exceeds 2/3, but with 100 circuits the two-standard-error margin is roughly 0.09, so the lower bound still falls below 2/3 and the test fails. Running many more random circuits tightens the bound and may turn the result into a pass. Better qubit selection is usually the more effective fix.

HOP around 0.80 to 0.90 (comfortably above threshold). This is a clean pass, close to the theoretical ideal of about 0.85. The device reliably handles this QV level, and you should increase m by 1 and test the next level to find the device’s ceiling. If you see values this high on a simulator, also check that the noise model is not artificially weak, and verify your backend configuration if results seem too good.
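
For borderline results it helps to know how many circuits would be needed before a given mean HOP can pass at all. The sketch below inverts a two-standard-error criterion with a binomial standard error, which is an assumption about the analysis being used. The function name is hypothetical.

```python
import math


def circuits_needed(mean_hop, z=2.0, threshold=2 / 3, min_circuits=100):
    """Smallest number of circuits for which mean_hop - z*sigma exceeds the threshold.

    Returns None when the mean HOP is at or below the threshold, because no
    amount of additional data can produce a pass in that case.
    """
    if mean_hop <= threshold:
        return None
    n = z ** 2 * mean_hop * (1.0 - mean_hop) / (mean_hop - threshold) ** 2
    return max(min_circuits, math.floor(n) + 1)


for mean_hop in (0.60, 0.70, 0.75, 0.85):
    print(f"mean HOP {mean_hop:.2f}: circuits needed = {circuits_needed(mean_hop)}")
```

A mean HOP of 0.70 needs several hundred circuits, while values near the ideal pass with the minimum of 100.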

Scanning Across QV Levels

To find a device’s QV, sweep over increasing qubit counts:

```python
from qiskit_experiments.library import QuantumVolume

# noisy_sim is the noisy AerSimulator created earlier
for n_qubits in [2, 3, 4]:  # QV = 2^n_qubits: 4, 8, 16
    qubits = list(range(n_qubits))
    qv = QuantumVolume(qubits, seed=42)
    job = qv.run(noisy_sim, shots=2048)
    result = job.block_for_results()

    hop_val = result.analysis_results("mean_HOP").value.nominal_value
    passed = result.analysis_results("quantum_volume").extra["success"]
    print(f"QV={2**n_qubits}: HOP={hop_val:.3f}, passed={passed}")
```

The QV of the device is the largest power-of-two for which the test passes.

When scanning, start from a small m where the device easily passes and increment by 1 until the test fails. The last passing level determines the device’s QV. Do not skip levels, because QV is defined as 2^m for the largest m in an unbroken run of passes, and a device that fails at m = 5 should not be reported as having QV = 2^6 even if some lucky trial at m = 6 happens to pass.

For each level, use consistent experimental parameters (same number of shots, same number of random circuits, same confidence level) so the results are comparable across levels.
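
The stopping rule can be written down as a small helper that takes the pass/fail outcome per level and returns the QV to report. This is a sketch with a hypothetical function name. It stops at the first failed or missing level and ignores any later passes.

```python
def quantum_volume_from_scan(passed_by_m):
    """Return 2**m for the last level in the unbroken run of passes, or 1 if none."""
    qv = 1  # convention: no level passed
    expected = min(passed_by_m) if passed_by_m else None
    for m in sorted(passed_by_m):
        if m != expected or not passed_by_m[m]:
            break  # a failed or skipped level ends the scan
        qv = 2 ** m
        expected += 1
    return qv


scan = {2: True, 3: True, 4: True, 5: False, 6: True}
print(f"Reported QV: {quantum_volume_from_scan(scan)}")  # the pass at m=6 is ignored
```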

QV and CLOPS

QV measures quality; CLOPS (Circuit Layer Operations Per Second) measures speed. IBM defines CLOPS as the number of QV circuit layers a device can execute per second, using the QV protocol as the benchmark workload.

```python
import time

# qiskit_experiments has no built-in CLOPS experiment,
# but you can make a very rough estimate by timing a QV job
shots = 100
start = time.time()
job = qv_exp.run(noisy_sim, shots=shots)
job.block_for_results()
elapsed = time.time() - start  # includes transpilation and analysis time

# Very rough CLOPS estimate: circuit-layer executions per second
num_layers = len(qv_exp.physical_qubits)  # m layers for m qubits
num_circuits = qv_exp.experiment_options.trials  # number of QV circuits (100 by default)
clops_estimate = (num_circuits * shots * num_layers) / elapsed
print(f"Rough CLOPS estimate: {clops_estimate:.0f}")
```

Real CLOPS measurements require the full IBM-defined protocol with exact timing methodology.
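
For reference, IBM’s published CLOPS definition is the product of the number of circuit templates (M), parameter updates per template (K), shots (S), and QV layers (D = log2 QV), divided by the total run time. The sketch below just evaluates that formula. The 350-second run time is a made-up number for illustration, not a measurement.

```python
import math


def clops(num_templates, num_updates, shots, quantum_volume, elapsed_seconds):
    """CLOPS = M * K * S * D / time, with D = log2(QV) layers per circuit."""
    layers = int(math.log2(quantum_volume))
    return num_templates * num_updates * shots * layers / elapsed_seconds


# M=100 templates, K=10 updates, S=100 shots on a QV=128 device (hypothetical timing)
value = clops(100, 10, 100, 128, elapsed_seconds=350.0)
print(f"CLOPS: {value:.0f}")
```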

QV, CLOPS, and Application-Level Benchmarks

QV and CLOPS answer fundamentally different questions, and neither tells the full story on its own. Understanding where each benchmark sits in the hierarchy helps you choose the right metric for your decision.

QV: the quality ceiling. QV answers the question “can this device execute a circuit of this size and depth at all?” It measures the maximum scale at which the device produces meaningful quantum output rather than noise. Think of QV as a pass/fail gate: if your algorithm requires circuits on 8 qubits with depth 8, a device with QV below 256 is unlikely to produce useful results. QV does not tell you how fast the device runs or how well it performs on your specific algorithm. It only tells you whether the device’s quality is sufficient for circuits of a given scale.

CLOPS: throughput. CLOPS answers “how many circuit layers per second can this device process?” Two devices with identical QV can have dramatically different CLOPS. A superconducting device might achieve 10,000+ CLOPS while a trapped-ion device with the same QV might achieve 100 CLOPS, because ion trap gate operations are physically slower. For variational algorithms like VQE and QAOA that require thousands of circuit evaluations in an optimization loop, CLOPS directly determines wall-clock runtime. A high-QV, low-CLOPS device might take days to finish an optimization that a moderate-QV, high-CLOPS device completes in hours.

Application-level benchmarks: real workload performance. Benchmarks like mirror circuits, randomized benchmarking on specific gate sequences, and algorithmic benchmarks (running a small instance of Grover’s or VQE and checking the solution quality) test how the device performs on workloads that resemble actual use cases. These benchmarks capture effects that QV misses: the impact of specific native gate sets, the compiler’s ability to optimize for a particular circuit structure, and the device’s performance on non-random circuit patterns that may trigger correlated errors or crosstalk in ways that random SU(4) circuits do not.

For a complete picture of device suitability, check all three: QV to verify the device meets the minimum quality bar, CLOPS to estimate runtime, and application-level benchmarks to validate that the device handles your specific workload.
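
The runtime impact of CLOPS can be estimated with simple arithmetic. The sketch below assumes the workload is dominated by quantum layer executions and ignores queueing and classical overhead. The workload sizes are made-up examples, and the two CLOPS values are the illustrative figures from the comparison above.

```python
def estimated_runtime_hours(iterations, circuits_per_iteration, shots, layers, clops):
    """Rough wall-clock estimate: total layer executions divided by CLOPS."""
    layer_executions = iterations * circuits_per_iteration * shots * layers
    return layer_executions / clops / 3600


# Hypothetical variational workload: 1,000 iterations of 20 circuits, 1,000 shots, depth 8
fast = estimated_runtime_hours(1000, 20, 1000, 8, clops=10_000)
slow = estimated_runtime_hours(1000, 20, 1000, 8, clops=100)
print(f"10,000 CLOPS: about {fast:.1f} hours")
print(f"   100 CLOPS: about {slow / 24:.1f} days")
```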

Known QV Values for Reference

| Device Generation | Approximate QV |
|---|---|
| Early 5-qubit IBM devices | 8 - 16 |
| IBM Falcon (2020) | 32 - 64 |
| IBM Eagle / Heron (2022+) | 128 - 512+ |
| Ion trap devices (IonQ, Quantinuum) | 512 - 8192+ |

Ion trap devices tend to have higher QV than superconducting devices due to lower gate error rates and all-to-all connectivity, despite being slower. This is a direct illustration of the QV vs. CLOPS tradeoff: ion traps win on quality but lose on throughput.

Limitations of QV in Detail

QV is a useful single-number comparison, but treating it as the only metric leads to blind spots. Here are the specific limitations you should keep in mind.

QV was designed for NISQ-era devices. The protocol assumes physical qubits running without error correction, with gate error rates in the 0.1% to 1% range and qubit counts in the tens to low hundreds. For fault-tolerant quantum computers that use quantum error correction, the relevant metric is the logical error rate per logical gate, not the physical gate fidelity. A fault-tolerant device with 1,000 physical qubits encoding 10 logical qubits at very low logical error rates is fundamentally different from a NISQ device with 1,000 noisy physical qubits. QV does not capture this distinction. As the field moves toward error-corrected systems, QV will become less relevant as a primary benchmark.

QV is blind to qubit count beyond m. A device that passes QV = 64 (m = 6) demonstrates that it has at least 6 high-quality qubits, but it says nothing about the remaining qubits. A 1,000-qubit device might achieve QV = 64 using its 6 best qubits while the other 994 qubits have error rates ten times higher. If your algorithm requires 100 qubits, the device’s QV = 64 tells you almost nothing about whether those 100 qubits can work together effectively. Always check per-qubit and per-gate error rates for the specific qubits your algorithm will use, not just the QV headline number.

QV does not capture coherence times directly. T1 (energy relaxation) and T2 (dephasing) coherence times only affect QV through their impact on the specific m-depth circuit structure used in the protocol. A device with short T2 times might pass QV at depth m because the circuit completes within the coherence window, but fail on a different circuit of the same depth that happens to be more sensitive to dephasing. QV averages over random circuit instances, which smooths out these effects but also hides them.

QV circuits may not match your algorithm’s gate requirements. QV uses random SU(4) gates, which get decomposed into the device’s native gate set during transpilation. Your actual algorithm likely uses a specific, non-random pattern of gates. A device that performs well on random SU(4) circuits might perform poorly on circuits that heavily use a particular gate type (for example, many consecutive Toffoli gates decomposed into long CNOT sequences). Conversely, a device with a native gate set that matches your algorithm’s needs might outperform its QV on that specific workload.

QV does not account for classical processing overhead. Real quantum algorithms involve classical pre-processing (circuit compilation, transpilation, optimization) and post-processing (measurement decoding, error mitigation, classical optimization loops). QV only measures the quantum execution step. A device with high QV but poor compiler support, slow job queuing, or limited classical integration may still deliver poor end-to-end performance.

Use QV alongside application-level benchmarks, per-qubit error data, and CLOPS for a complete picture of device quality.

Common Mistakes

Several pitfalls frequently lead to misleading QV results. Avoiding them saves time and produces measurements you can actually trust.

Using too few shots or circuits. The QV protocol requires enough measurement shots per circuit to estimate the heavy output probability with reasonable precision. The minimum commonly used is 1,024 shots, but this leaves noticeable sampling noise in each circuit’s HOP and makes borderline results unreliable. Using 2,048 to 4,096 shots per circuit gives more reproducible pass/fail decisions. The confidence bound itself tightens mainly with the number of random circuits, so run at least 100 circuits (the trials option of QuantumVolume) and more for borderline cases. If you are reporting QV results in a publication or comparison, use at least 2,048 shots.

Testing on a fixed qubit layout without optimizing qubit selection. The default list(range(m)) qubit selection uses qubits 0 through m-1, which may not be the best qubits on the device. Always check calibration data and select qubits with the lowest two-qubit gate errors and best connectivity. The difference between a naive qubit selection and an optimized one can easily be the difference between passing and failing a QV level.

Reporting QV without specifying the confidence interval. A bare statement like “this device has QV = 128” is incomplete. The QV number is meaningful only when accompanied by the confidence level and the experimental parameters (number of circuits, number of shots, qubit selection). Two groups can measure different QV values on the same device simply by choosing different qubit subsets or different confidence levels. Always report the full experimental setup.
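
One lightweight way to keep these parameters together is a small record type that travels with the headline number. The class and field names below are hypothetical, and the values are placeholders rather than measurements.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class QVReport:
    """Headline QV together with the parameters needed to interpret it."""
    quantum_volume: int
    qubits: tuple
    num_circuits: int
    shots: int
    mean_hop: float
    hop_std_error: float
    confidence: float

    def summary(self):
        return (
            f"QV = {self.quantum_volume} on qubits {list(self.qubits)} "
            f"({self.num_circuits} circuits x {self.shots} shots, "
            f"mean HOP = {self.mean_hop:.3f} +/- {self.hop_std_error:.3f}, "
            f"confidence = {self.confidence:.3f})"
        )


# Placeholder values for illustration only
report = QVReport(16, (0, 1, 2, 3), 100, 2048, 0.78, 0.041, 0.997)
print(report.summary())
```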

Confusing Quantum Volume with circuit volume. Quantum Volume (capital Q, capital V) is a specific benchmarking protocol with a defined statistical test. Circuit volume is a general property of a quantum circuit (roughly, qubit count times depth). A circuit with volume 64 is not the same as a device with QV = 64. The former describes a circuit’s size; the latter describes a device’s verified capability. Mixing these terms leads to incorrect comparisons.

Running QV on a simulator without a noise model. If you run QV on a perfect (noiseless) simulator, the test will pass at every level you try, up to the limit of classical simulation. This tells you nothing useful. Always use a noise model (via AerSimulator.from_backend() with a real or fake backend) or run on actual hardware to get meaningful QV results.
