Solving Optimization Problems with Qiskit Optimization

Use Qiskit's optimization module to formulate and solve combinatorial problems: Max-Cut, knapsack, and TSP using QAOA and the MinimumEigenOptimizer.

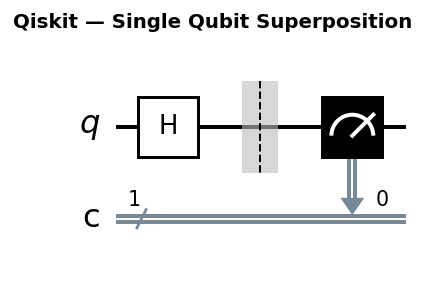

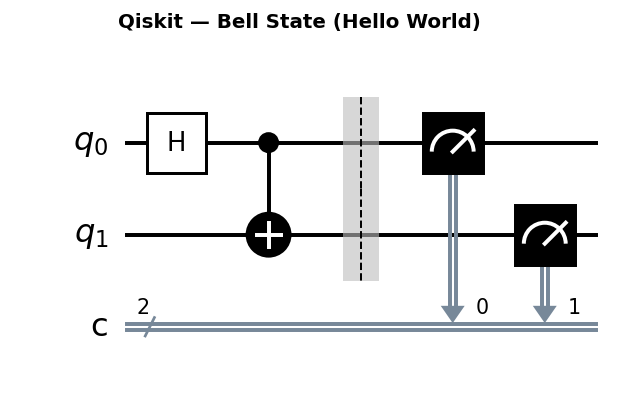

Circuit diagrams

Why Quantum Optimization?

Combinatorial optimization problems (finding the best configuration out of an exponentially large set of possibilities) are candidates for quantum speedup. Algorithms like QAOA (Quantum Approximate Optimization Algorithm) approximate solutions by running a parameterized quantum circuit and measuring the resulting state distribution.

Qiskit Optimization provides a high-level toolkit for this workflow: define the problem in a familiar form, convert it automatically to a QUBO (Quadratic Unconstrained Binary Optimization) that quantum algorithms can handle, and hand it off to a solver.

Installing

pip install qiskit qiskit-optimization qiskit-algorithms networkx matplotlib

The qiskit-optimization package provides QuadraticProgram, converters, and optimizers. qiskit-algorithms provides the QAOA implementation. We add matplotlib for visualization later in the tutorial.

QUBO Formulation Theory

Before diving into code, it helps to understand the mathematical object that sits at the center of quantum optimization: the QUBO.

The QUBO Objective

A Quadratic Unconstrained Binary Optimization problem minimizes:

f(x) = x^T Q x

where x is a binary vector (each x_i is 0 or 1) and Q is an upper-triangular matrix of real coefficients. Expanding this out for a 3-variable problem:

f(x) = Q_00 * x_0 + Q_11 * x_1 + Q_22 * x_2

+ Q_01 * x_0 * x_1 + Q_02 * x_0 * x_2 + Q_12 * x_1 * x_2

The diagonal entries Q_ii act as linear coefficients because x_i^2 = x_i for binary variables. The off-diagonal entries Q_ij capture pairwise interactions between variables.

Converting Constraints to Penalty Terms

Real optimization problems have constraints, but QUBO is unconstrained by definition. The standard technique is to add penalty terms to the objective that make constraint-violating solutions very expensive.

For an equality constraint like sum(x_i) = k, the penalty term is:

P(x) = A * (sum(x_i) - k)^2

where A is a large positive penalty coefficient. When the constraint is satisfied, the penalty is zero. When it is violated, the penalty adds A times the squared violation to the objective value, pushing the optimizer away from that solution.

Choosing the penalty coefficient A: Set A larger than the maximum absolute value of any coefficient in the original objective function. This guarantees that violating a constraint always costs more than any gain from the objective. A common rule of thumb is A = 1 + max(|objective coefficients|). Setting A too large creates numerical issues; setting A too small lets the optimizer violate constraints.

Max-Cut QUBO Matrix Example

Consider Max-Cut on a 4-node path graph: 0-1-2-3 (edges: {0,1}, {1,2}, {2,3}). The Max-Cut objective counts edges (i,j) where x_i differs from x_j. In QUBO form, each edge contributes (x_i + x_j - 2x_ix_j), which equals 1 when exactly one endpoint is in the cut.

Since QUBO minimizes, we negate to convert the maximization. The QUBO matrix Q for this 4-node path graph is:

x_0 x_1 x_2 x_3

x_0 [ -1 2 0 0 ]

x_1 [ 0 -2 2 0 ]

x_2 [ 0 0 -2 2 ]

x_3 [ 0 0 0 -1 ]

The diagonal entry Q_ii equals the negative degree of node i (number of edges incident to i). The off-diagonal entry Q_ij equals +2 for each edge (i,j). The global minimum of this QUBO corresponds to the maximum cut.

The QuadraticProgram Class

QuadraticProgram is the central abstraction for encoding optimization problems. You add binary or continuous variables, set an objective (minimize or maximize), and add linear or quadratic constraints.

from qiskit_optimization import QuadraticProgram

qp = QuadraticProgram("my_problem")

qp.binary_var("x0")

qp.binary_var("x1")

qp.binary_var("x2")

# Minimize: x0 + x1 + x2

qp.minimize(linear={"x0": 1, "x1": 1, "x2": 1})

# Constraint: x0 + x1 >= 1

qp.linear_constraint(linear={"x0": 1, "x1": 1}, sense=">=", rhs=1, name="c1")

print(qp.export_as_lp_string())

QuadraticProgram in Depth

Beyond binary variables and linear objectives, QuadraticProgram supports integer variables, quadratic terms in the objective, and a rich set of inspection methods.

Adding Integer Variables

from qiskit_optimization import QuadraticProgram

qp = QuadraticProgram("integer_example")

# Binary variable: takes values 0 or 1

qp.binary_var("b0")

# Integer variable: takes values in [0, 5]

qp.integer_var(name="y", lowerbound=0, upperbound=5)

# Continuous variable: takes values in [0.0, 10.0]

qp.continuous_var(name="z", lowerbound=0.0, upperbound=10.0)

Note that integer and continuous variables get encoded into binary variables during QUBO conversion. An integer variable with range [0, 5] requires ceil(log2(6)) = 3 binary variables. This encoding overhead means that integer variables expand the qubit count.

Quadratic Terms in the Objective

qp2 = QuadraticProgram("quadratic_objective")

qp2.binary_var("x0")

qp2.binary_var("x1")

qp2.binary_var("x2")

# Minimize: 2*x0 + 3*x1 - x2 + 4*x0*x1 - 2*x1*x2

qp2.minimize(

linear={"x0": 2, "x1": 3, "x2": -1},

quadratic={("x0", "x1"): 4, ("x1", "x2"): -2}

)

print(qp2.export_as_lp_string())

Inspecting Problem Statistics

print(f"Number of variables: {qp2.get_num_vars()}")

print(f"Number of binary variables: {qp2.get_num_binary_vars()}")

print(f"Number of linear constraints: {qp2.get_num_linear_constraints()}")

print(f"Number of quadratic constraints: {qp2.get_num_quadratic_constraints()}")

print(f"Variable names: {[v.name for v in qp2.variables]}")

print(f"Objective sense: {qp2.objective.sense}")

Substituting Variables

The substitute_variables method fixes specific variables to known values, reducing the problem size. This is useful for testing, for warm starting, or when you know part of the solution in advance.

from qiskit_optimization import QuadraticProgram

qp3 = QuadraticProgram("substitution_demo")

qp3.binary_var("x0")

qp3.binary_var("x1")

qp3.binary_var("x2")

qp3.minimize(

linear={"x0": 1, "x1": 2, "x2": 3},

quadratic={("x0", "x1"): 4}

)

# Fix x0 = 1, leaving x1 and x2 free

reduced = qp3.substitute_variables(constants={"x0": 1})

print("Original problem:")

print(qp3.export_as_lp_string())

print("\nAfter fixing x0 = 1:")

print(reduced.export_as_lp_string())

# The objective becomes: (1) + 2*x1 + 3*x2 + 4*(1)*x1 = 1 + 6*x1 + 3*x2

# The constant 1 appears in the LP output as an offset

Worked Example: Verifying the Mathematical Form

Consider a simple problem: minimize x0 + 2*x1 subject to x0 + x1 = 1. We encode it and verify:

from qiskit_optimization import QuadraticProgram

from qiskit_optimization.converters import QuadraticProgramToQubo

qp_verify = QuadraticProgram("verify_example")

qp_verify.binary_var("x0")

qp_verify.binary_var("x1")

qp_verify.minimize(linear={"x0": 1, "x1": 2})

qp_verify.linear_constraint(

linear={"x0": 1, "x1": 1}, sense="==", rhs=1, name="exactly_one"

)

print("Original LP:")

print(qp_verify.export_as_lp_string())

# Convert to QUBO and verify

converter = QuadraticProgramToQubo()

qubo = converter.convert(qp_verify)

print("\nQUBO form:")

print(qubo.export_as_lp_string())

# Manually check: for the constraint x0 + x1 = 1 with penalty A,

# the QUBO objective should be:

# x0 + 2*x1 + A*(x0 + x1 - 1)^2

# Expanding: x0 + 2*x1 + A*(x0^2 + x1^2 + 1 + 2*x0*x1 - 2*x0 - 2*x1)

# Since x_i^2 = x_i: (1 - 2A + A)*x0 + (2 - 2A + A)*x1 + 2A*x0*x1 + A

# = (1 - A)*x0 + (2 - A)*x1 + 2A*x0*x1 + A

Problem 1: Max-Cut on a 5-Node Graph

Max-Cut asks: partition graph nodes into two sets to maximize the number of edges that cross the partition boundary. This maps naturally to binary variables (which side each node is on).

import networkx as nx

from qiskit_optimization import QuadraticProgram

from qiskit_optimization.applications import Maxcut

# Build a random 5-node graph

G = nx.gnm_random_graph(5, 6, seed=42)

# The Maxcut application class builds the QuadraticProgram automatically

maxcut = Maxcut(G)

qp = maxcut.to_quadratic_program()

print(qp.export_as_lp_string())

Convert to QUBO and Solve with QAOA

from qiskit_optimization.converters import QuadraticProgramToQubo

from qiskit_optimization.algorithms import MinimumEigenOptimizer

from qiskit_algorithms import QAOA

from qiskit_algorithms.optimizers import COBYLA

from qiskit.primitives import Sampler

# Convert to QUBO (handles constraints via penalty terms automatically)

converter = QuadraticProgramToQubo()

qubo = converter.convert(qp)

# Set up QAOA with p=2 layers

sampler = Sampler()

qaoa = QAOA(sampler=sampler, optimizer=COBYLA(maxiter=200), reps=2)

optimizer = MinimumEigenOptimizer(qaoa)

result = optimizer.solve(qp) # pass original qp, not qubo

print(result)

print("Cut value:", maxcut.interpret(result))

MinimumEigenOptimizer handles the QUBO conversion internally. The result object contains the binary assignment for each node and the objective value.

QUBO Conversion Details

The QuadraticProgramToQubo converter does several things behind the scenes. Understanding its internals helps you debug problems where the optimizer returns constraint-violating solutions.

What the Converter Handles

-

Maximization to minimization: If the original problem maximizes, the converter negates all objective coefficients. QUBO is always a minimization problem.

-

Linear constraints to quadratic penalties: Each equality constraint c^T x = b becomes a penalty term A * (c^T x - b)^2 added to the objective. Each inequality constraint c^T x <= b is first converted to an equality by introducing slack variables, then penalized the same way.

-

Slack variables for inequality constraints: An inequality constraint like x0 + x1 <= 3 becomes x0 + x1 + s = 3, where s is a new integer variable in [0, 3] that gets binary-encoded. This adds ceil(log2(4)) = 2 extra binary variables to the problem.

Inspecting Penalty Coefficients

import networkx as nx

from qiskit_optimization.applications import Maxcut

from qiskit_optimization.converters import QuadraticProgramToQubo

G = nx.gnm_random_graph(5, 6, seed=42)

maxcut = Maxcut(G)

qp = maxcut.to_quadratic_program()

# Convert with a specific penalty factor

converter = QuadraticProgramToQubo(penalty=10.0)

qubo = converter.convert(qp)

# Compare the original and converted problems

print(f"Original variables: {qp.get_num_vars()}")

print(f"QUBO variables: {qubo.get_num_vars()}")

print(f"Penalty used: {converter.penalty}")

print()

print("QUBO objective (LP form):")

print(qubo.export_as_lp_string())

If you leave the penalty parameter as None, the converter selects a penalty automatically based on the problem coefficients. You can override it when the auto-selected penalty is too small (leading to constraint violations in the solution) or too large (creating numerical instability).

QUBO Expansion for the Knapsack Problem

The knapsack problem has one inequality constraint (total weight <= capacity), which requires slack variables. Examine how the conversion expands the variable count:

from qiskit_optimization import QuadraticProgram

from qiskit_optimization.converters import QuadraticProgramToQubo

weights = [2, 3, 4, 5, 1]

values = [3, 4, 5, 7, 2]

capacity = 8

qp_ks = QuadraticProgram("knapsack_qubo_demo")

for i in range(len(weights)):

qp_ks.binary_var(f"x{i}")

qp_ks.maximize(linear={f"x{i}": values[i] for i in range(len(values))})

qp_ks.linear_constraint(

linear={f"x{i}": weights[i] for i in range(len(weights))},

sense="<=",

rhs=capacity,

name="weight_limit"

)

converter = QuadraticProgramToQubo()

qubo_ks = converter.convert(qp_ks)

print(f"Original: {qp_ks.get_num_vars()} variables, "

f"{qp_ks.get_num_linear_constraints()} constraints")

print(f"QUBO: {qubo_ks.get_num_vars()} variables, "

f"{qubo_ks.get_num_linear_constraints()} constraints")

print(f"\nThe slack variable encoding added "

f"{qubo_ks.get_num_vars() - qp_ks.get_num_vars()} extra binary variables")

# The inequality x0*2 + x1*3 + x2*4 + x3*5 + x4*1 <= 8

# requires a slack variable s in [0, 8], encoded with ceil(log2(9)) = 4 bits

# Total: 5 original + 4 slack = 9 QUBO variables

The original 5-variable knapsack problem becomes a 9-variable QUBO after the slack variable encoding. Each additional qubit doubles the Hilbert space, so this expansion directly impacts the quantum resources required.

Problem 2: 0/1 Knapsack

The knapsack problem: given items with weights and values, choose which to pack to maximize total value without exceeding a weight capacity.

from qiskit_optimization import QuadraticProgram

from qiskit_optimization.algorithms import MinimumEigenOptimizer

weights = [2, 3, 4, 5, 1]

values = [3, 4, 5, 7, 2]

capacity = 8

qp = QuadraticProgram("knapsack")

for i in range(len(weights)):

qp.binary_var(f"x{i}")

# Maximize total value

qp.maximize(linear={f"x{i}": values[i] for i in range(len(values))})

# Weight constraint

qp.linear_constraint(

linear={f"x{i}": weights[i] for i in range(len(weights))},

sense="<=",

rhs=capacity,

name="weight_limit"

)

# Solve with QAOA

sampler = Sampler()

qaoa = QAOA(sampler=sampler, optimizer=COBYLA(maxiter=300), reps=2)

optimizer = MinimumEigenOptimizer(qaoa)

result = optimizer.solve(qp)

print(result)

Compare Against Brute Force

# Requires: qiskit_optimization (weights/values/result from above)

from itertools import product

best_val, best_combo = 0, None

for combo in product([0, 1], repeat=len(weights)):

total_weight = sum(w * x for w, x in zip(weights, combo))

total_value = sum(v * x for v, x in zip(values, combo))

if total_weight <= capacity and total_value > best_val:

best_val, best_combo = total_value, combo

print(f"Brute force optimal: {best_val}, items: {best_combo}")

print(f"QAOA result: {-result.fval}") # objective is stored as negated

QAOA is a heuristic and does not guarantee the optimal solution, especially at low circuit depth (small reps). Increasing reps and maxiter improves approximation quality at the cost of longer runtime.

Problem 3: Traveling Salesman (3 Cities)

TSP asks for the shortest tour that visits every city exactly once. The QuadraticProgram formulation uses binary variables x_{i,t} = 1 if city i is visited at time step t.

from qiskit_optimization.applications import Tsp

import numpy as np

# Distance matrix for 3 cities

distances = np.array([

[0, 10, 15],

[10, 0, 12],

[15, 12, 0],

])

tsp = Tsp(distances)

qp_tsp = tsp.to_quadratic_program()

print(f"TSP with 3 cities: {qp_tsp.get_num_binary_vars()} binary variables")

# 3 cities * 3 time steps = 9 binary variables

TSP scales quadratically in qubit count: n cities need n^2 qubits. For 5 cities you need 25 qubits, which is already beyond what current NISQ hardware can handle accurately with QAOA.

Problem 4: Vehicle Routing

The Vehicle Routing Problem (VRP) generalizes TSP to multiple vehicles. Given n customers and k vehicles starting from a depot, find routes for each vehicle so that every customer is visited exactly once and total travel distance is minimized.

Binary Variable Encoding

VRP uses binary variables x_{v,i,j} = 1 to indicate that vehicle v travels directly from location i to location j. For n customers plus one depot (n+1 locations) and k vehicles, the number of binary variables is k * (n+1)^2. Even a small instance grows quickly:

| Customers | Vehicles | Binary Variables |

|---|---|---|

| 3 | 1 | 16 |

| 4 | 2 | 50 |

| 6 | 2 | 98 |

| 10 | 3 | 363 |

Solving VRP with Qiskit

import numpy as np

from qiskit_optimization.applications import VehicleRouting

from qiskit_optimization.algorithms import MinimumEigenOptimizer

from qiskit_algorithms import QAOA, NumPyMinimumEigensolver

from qiskit_algorithms.optimizers import COBYLA

from qiskit.primitives import Sampler

# Create a small VRP: 3 customers + 1 depot, 2 vehicles

# The first node (index 0) is the depot

n_customers = 3

n_vehicles = 2

# Distance matrix (depot + 3 customers = 4 locations)

distances = np.array([

[0, 5, 8, 3],

[5, 0, 6, 7],

[8, 6, 0, 4],

[3, 7, 4, 0],

])

vrp = VehicleRouting(

num_vehicles=n_vehicles,

num_nodes=n_customers + 1, # customers + depot

cost_matrix=distances,

)

qp_vrp = vrp.to_quadratic_program()

print(f"VRP binary variables: {qp_vrp.get_num_binary_vars()}")

print(f"VRP constraints: {qp_vrp.get_num_linear_constraints()}")

# Solve exactly first (feasible for small instances)

exact = MinimumEigenOptimizer(NumPyMinimumEigensolver())

exact_result = exact.solve(qp_vrp)

print(f"\nExact result: {exact_result.fval}")

print(f"Route assignment: {exact_result.x}")

The large variable count makes VRP one of the most resource-hungry optimization problems for quantum approaches. A 4-customer, 2-vehicle VRP already requires more qubits than most NISQ devices can handle with QAOA, which is why VRP research focuses on decomposition strategies that break the problem into smaller subproblems.

QAOA Circuit Structure

Understanding the QAOA circuit helps you reason about circuit depth, parameter counts, and hardware requirements.

Circuit Components

For a Max-Cut problem with n nodes, m edges, and p layers, the QAOA circuit consists of:

-

Initialization: n Hadamard gates create an equal superposition of all 2^n bitstrings.

-

Cost unitary (repeated p times): For each edge (i,j) in the graph, apply a ZZ interaction parameterized by gamma. This is implemented as CNOT-Rz-CNOT (or equivalent decomposition). The cost unitary encodes the Max-Cut objective into the quantum state.

-

Mixer unitary (repeated p times): Apply Rx(2*beta) on every qubit. The mixer drives transitions between bitstrings, enabling the optimizer to explore the solution space.

-

Measurement: Measure all qubits in the computational basis to obtain a candidate solution.

The circuit has 2p parameters total: gamma_1, …, gamma_p and beta_1, …, beta_p. Finding the optimal values for these parameters is the classical optimization task.

Explicit Circuit for a 4-Node Ring Graph (p=1)

import networkx as nx

from qiskit_optimization.applications import Maxcut

from qiskit_optimization.converters import QuadraticProgramToQubo

from qiskit_algorithms import QAOA

from qiskit_algorithms.optimizers import COBYLA

from qiskit.primitives import Sampler

# 4-node ring graph: 0-1-2-3-0

G_ring = nx.cycle_graph(4)

maxcut_ring = Maxcut(G_ring)

qp_ring = maxcut_ring.to_quadratic_program()

# Build QAOA with p=1

sampler = Sampler()

qaoa_ring = QAOA(sampler=sampler, optimizer=COBYLA(maxiter=100), reps=1)

# Convert to operator to see the circuit

converter = QuadraticProgramToQubo()

qubo_ring = converter.convert(qp_ring)

from qiskit_optimization.translators import to_ising

operator, offset = to_ising(qubo_ring)

print("Ising Hamiltonian:")

print(operator)

print(f"Offset: {offset}")

print(f"\nCircuit parameters: 2 * p = 2 * 1 = 2 (one gamma, one beta)")

print(f"Qubits: {G_ring.number_of_nodes()}")

print(f"ZZ interactions per layer: {G_ring.number_of_edges()} (one per edge)")

print(f"Rx gates per layer: {G_ring.number_of_nodes()} (one per qubit)")

For the 4-node ring with 4 edges and p=1, each QAOA layer contains 4 ZZ interactions (each decomposed into 2 CNOTs + 1 Rz) and 4 Rx gates. The total circuit depth scales as O(m * p) where m is the edge count.

Parameter Landscape Visualization

For p=1, QAOA has only two parameters (gamma, beta), which makes it possible to visualize the full energy landscape as a 2D heatmap. This visualization reveals the structure of the optimization problem and explains why some optimizer configurations converge better than others.

import numpy as np

import networkx as nx

import matplotlib.pyplot as plt

from qiskit_optimization.applications import Maxcut

from qiskit_optimization.converters import QuadraticProgramToQubo

from qiskit_optimization.translators import to_ising

from qiskit_algorithms import QAOA

from qiskit_algorithms.optimizers import COBYLA

from qiskit.primitives import Sampler

from qiskit.quantum_info import SparsePauliOp

# 4-node ring graph

G_ring = nx.cycle_graph(4)

maxcut_ring = Maxcut(G_ring)

qp_ring = maxcut_ring.to_quadratic_program()

converter = QuadraticProgramToQubo()

qubo_ring = converter.convert(qp_ring)

operator, offset = to_ising(qubo_ring)

# Scan over a 30x30 grid of (gamma, beta) values

n_points = 30

gammas = np.linspace(0, 2 * np.pi, n_points)

betas = np.linspace(0, np.pi, n_points)

energy_grid = np.zeros((n_points, n_points))

sampler = Sampler()

for i, gamma in enumerate(gammas):

for j, beta in enumerate(betas):

# Build QAOA circuit with fixed parameters

qaoa_eval = QAOA(

sampler=sampler,

optimizer=COBYLA(maxiter=0), # no optimization, just evaluate

reps=1,

initial_point=[gamma, beta],

)

# Evaluate the expectation value

result = qaoa_eval.compute_minimum_eigenvalue(operator)

energy_grid[j, i] = result.eigenvalue.real + offset

# Plot the energy landscape

fig, ax = plt.subplots(1, 1, figsize=(8, 6))

im = ax.pcolormesh(gammas, betas, energy_grid, shading="auto", cmap="viridis")

ax.set_xlabel(r"$\gamma$")

ax.set_ylabel(r"$\beta$")

ax.set_title("QAOA p=1 Energy Landscape (4-Node Ring Max-Cut)")

fig.colorbar(im, ax=ax, label="Objective value")

# Mark the minimum

min_idx = np.unravel_index(np.argmin(energy_grid), energy_grid.shape)

ax.plot(gammas[min_idx[1]], betas[min_idx[0]], "r*", markersize=15)

plt.tight_layout()

plt.savefig("qaoa_landscape.png", dpi=150)

plt.show()

print(f"Best energy found: {energy_grid.min():.4f}")

print(f"At gamma={gammas[min_idx[1]]:.4f}, beta={betas[min_idx[0]]:.4f}")

Interpreting the Landscape

The QAOA energy landscape for Max-Cut on regular graphs has a characteristic periodic structure. Several features stand out:

- Multiple local minima: The landscape contains multiple valleys. Gradient-based optimizers can get trapped in suboptimal valleys depending on their starting point.

- Periodicity: The landscape repeats with period 2*pi in gamma and pi in beta. This symmetry comes from the structure of the Max-Cut cost function.

- Global minimum basin: The deepest valley corresponds to the best QAOA approximation achievable at p=1. For the 4-node ring, p=1 QAOA can find the exact maximum cut.

- Flat regions: Some parameter regions produce nearly uniform energy, where gradient information is uninformative. This is related to the barren plateau phenomenon that affects variational quantum algorithms at larger scales.

This landscape motivates the use of parameter initialization strategies. Random initialization works for small problems, but structured approaches (like the interpolation strategy from Zhou et al. 2020) transfer good parameters from p to p+1 layers.

Classical Optimizer Comparison

The classical optimizer is a critical component of QAOA. It searches the parameter space (gamma, beta values) to minimize the quantum circuit’s expectation value. Different optimizers suit different scenarios.

COBYLA (Derivative-Free)

COBYLA (Constrained Optimization BY Linear Approximation) uses linear approximations to the objective function. It does not require gradient evaluations, making it robust to shot noise from finite sampling.

from qiskit_algorithms.optimizers import COBYLA

cobyla = COBYLA(

maxiter=500, # maximum iterations (increase for better convergence)

tol=1e-6, # convergence tolerance

disp=False, # set True to print convergence info

)

# Use with QAOA

qaoa_cobyla = QAOA(sampler=Sampler(), optimizer=cobyla, reps=2)

When to use: COBYLA is the default choice for simulator-based optimization. It handles shot noise well and converges reliably for small to medium problems. The main drawback is that convergence can be slow for many parameters (high p values).

SPSA (Stochastic Perturbation)

SPSA (Simultaneous Perturbation Stochastic Approximation) estimates gradients using only two function evaluations per iteration, regardless of the parameter count. This makes it efficient for high-dimensional parameter spaces and robust to noise.

from qiskit_algorithms.optimizers import SPSA

spsa = SPSA(

maxiter=300,

learning_rate=0.05,

perturbation=0.1,

)

# Use with QAOA

qaoa_spsa = QAOA(sampler=Sampler(), optimizer=spsa, reps=2)

When to use: SPSA is often the best choice for real quantum hardware, where function evaluations are expensive and noisy. The stochastic gradient estimates are inherently compatible with shot noise. It converges more reliably than gradient-based methods in noisy environments.

L-BFGS-B (Gradient-Based)

L-BFGS-B is a quasi-Newton method that uses gradient information to build an approximate Hessian. It converges quickly in smooth landscapes but requires accurate gradient estimates.

from qiskit_algorithms.optimizers import L_BFGS_B

lbfgsb = L_BFGS_B(

maxfun=200, # max function evaluations

maxiter=100,

)

# Use with QAOA (works well with statevector simulator, poorly with shots)

qaoa_lbfgsb = QAOA(sampler=Sampler(), optimizer=lbfgsb, reps=2)

When to use: L-BFGS-B is excellent for noiseless simulation (statevector or high shot count) where gradient estimates are accurate. It converges much faster than COBYLA for smooth problems. Avoid using it with low shot counts or on real hardware, where noisy gradients cause erratic behavior.

Optimizer Comparison Summary

| Optimizer | Gradient-Free | Shot Noise Robust | Convergence Speed | Best For |

|---|---|---|---|---|

| COBYLA | Yes | Good | Moderate | Simulators |

| SPSA | Yes (approx) | Excellent | Moderate | Real hardware |

| L-BFGS-B | No | Poor | Fast | Noiseless sims |

Graph Types and Max-Cut Performance

QAOA performance depends heavily on graph structure. This section compares QAOA on three graph families to illustrate these differences.

import networkx as nx

import numpy as np

from qiskit_optimization.applications import Maxcut

from qiskit_optimization.algorithms import MinimumEigenOptimizer

from qiskit_algorithms import QAOA, NumPyMinimumEigensolver

from qiskit_algorithms.optimizers import COBYLA

from qiskit.primitives import Sampler

def run_maxcut_qaoa(graph, reps, maxiter=500):

"""Run QAOA on a Max-Cut instance and return the approximation ratio."""

maxcut_app = Maxcut(graph)

qp = maxcut_app.to_quadratic_program()

# Exact solution

exact_solver = MinimumEigenOptimizer(NumPyMinimumEigensolver())

exact_result = exact_solver.solve(qp)

exact_val = exact_result.fval

# QAOA solution

sampler = Sampler()

qaoa = QAOA(sampler=sampler, optimizer=COBYLA(maxiter=maxiter), reps=reps)

qaoa_solver = MinimumEigenOptimizer(qaoa)

qaoa_result = qaoa_solver.solve(qp)

qaoa_val = qaoa_result.fval

ratio = qaoa_val / exact_val if exact_val != 0 else 0

return ratio, exact_val, qaoa_val

# Test graphs (all 6 nodes for fair comparison)

np.random.seed(42)

graphs = {

"Random (Erdos-Renyi)": nx.gnm_random_graph(6, 8, seed=42),

"3-Regular": nx.random_regular_graph(3, 6, seed=42),

"Complete (K6)": nx.complete_graph(6),

}

print(f"{'Graph':<25} {'p':>3} {'Edges':>6} {'Exact':>7} {'QAOA':>7} {'Ratio':>7}")

print("-" * 60)

for name, G in graphs.items():

for p in [1, 2]:

ratio, exact, qaoa_val = run_maxcut_qaoa(G, reps=p)

print(f"{name:<25} {p:>3} {G.number_of_edges():>6} "

f"{exact:>7.2f} {qaoa_val:>7.2f} {ratio:>7.4f}")

Key Observations

-

3-regular graphs are a well-studied benchmark. At p=1, QAOA on 3-regular graphs achieves an expected approximation ratio of exactly 0.6924. This is a theoretical result proven by Farhi, Goldstone, and Gutmann (2014). Increasing to p=2 improves the ratio to approximately 0.7559.

-

Complete graphs are easier for QAOA because every node has the same degree, creating a highly symmetric landscape. The optimizer converges quickly and often finds good solutions even at p=1.

-

Random graphs have irregular structure, which breaks the symmetries that QAOA exploits. Performance varies widely depending on the specific graph instance. Some random graphs are easy; others are hard.

-

Increasing p always helps (in theory), but each additional layer adds more parameters and circuit depth. For p > 3 on current hardware, the noise from deep circuits typically cancels the benefit of additional layers.

Grover Optimizer

Qiskit Optimization provides a GroverOptimizer that uses Grover’s quantum search algorithm to find the minimum of a QUBO objective. Unlike QAOA (which is variational and heuristic), Grover’s approach provides a structured search with provable quadratic speedup.

from qiskit_optimization import QuadraticProgram

from qiskit_optimization.algorithms import GroverOptimizer, MinimumEigenOptimizer

from qiskit_algorithms import NumPyMinimumEigensolver

from qiskit.primitives import Sampler

# Define a small knapsack problem (keep it small for Grover)

weights = [2, 3, 4]

values = [3, 4, 5]

capacity = 5

qp_grover = QuadraticProgram("grover_knapsack")

for i in range(len(weights)):

qp_grover.binary_var(f"x{i}")

qp_grover.maximize(linear={f"x{i}": values[i] for i in range(len(values))})

qp_grover.linear_constraint(

linear={f"x{i}": weights[i] for i in range(len(weights))},

sense="<=",

rhs=capacity,

name="weight_limit"

)

# Solve with Grover optimizer

# num_value_qubits controls the precision of the objective function encoding

sampler = Sampler()

grover_opt = GroverOptimizer(num_value_qubits=3, sampler=sampler)

grover_result = grover_opt.solve(qp_grover)

# Compare with exact solver

exact_solver = MinimumEigenOptimizer(NumPyMinimumEigensolver())

exact_result = exact_solver.solve(qp_grover)

print(f"Grover result: x={grover_result.x}, fval={grover_result.fval}")

print(f"Exact result: x={exact_result.x}, fval={exact_result.fval}")

Grover vs QAOA

| Feature | QAOA | Grover Optimizer |

|---|---|---|

| Type | Variational (heuristic) | Search (structured) |

| Speedup | Problem-dependent | Quadratic (in principle) |

| Parameters | 2p classical parameters | None (parameter-free) |

| Circuit depth | O(p * edges) | O(sqrt(2^n)) |

| Qubit overhead | n (problem qubits only) | n + value qubits + ancillas |

| Best for | Larger problems, NISQ era | Small problems, exact answers |

The Grover optimizer requires significantly more qubits than QAOA (due to the arithmetic circuits for evaluating the objective function), which limits it to very small problem instances on current hardware. However, it finds the exact optimum rather than an approximation.

Classical Comparison with NumPySolver

For benchmarking, use the exact classical solver to find the true optimum:

from qiskit_optimization.algorithms import MinimumEigenOptimizer

from qiskit_algorithms import NumPyMinimumEigensolver

numpy_solver = MinimumEigenOptimizer(NumPyMinimumEigensolver())

exact_result = numpy_solver.solve(qp) # Max-Cut example from earlier

print("Exact optimal:", exact_result.fval)

print("QAOA result: ", result.fval)

print("Approximation ratio:", result.fval / exact_result.fval)

A QAOA approximation ratio above 0.8 is generally considered useful for practical problems. For Max-Cut with p=1, QAOA achieves the Goemans-Williamson bound of ~0.878 on some graph families.

Warm Starting QAOA

Warm starting uses a classical relaxation (continuous LP/SDP) to initialize the QAOA parameters near a good solution, often improving convergence:

from qiskit_optimization.algorithms import WarmStartQAOAOptimizer

warm_qaoa = WarmStartQAOAOptimizer(

pre_solver=MinimumEigenOptimizer(NumPyMinimumEigensolver()),

relax_for_pre_solver=True,

qaoa=QAOA(sampler=Sampler(), optimizer=COBYLA(maxiter=200), reps=2),

eps=0.25,

)

warm_result = warm_qaoa.solve(qp)

print(warm_result)

Recursive QAOA

Recursive QAOA (RQAOA) iteratively fixes the value of the most correlated variable pair, reducing the problem size one variable at a time until it is small enough to solve exactly. It often gives better approximation ratios than flat QAOA at the same circuit depth:

from qiskit_optimization.algorithms import RecursiveMinimumEigenOptimizer

rqaoa_optimizer = RecursiveMinimumEigenOptimizer(

min_num_vars=3,

min_num_vars_optimizer=MinimumEigenOptimizer(NumPyMinimumEigensolver()),

optimizer=MinimumEigenOptimizer(

QAOA(sampler=Sampler(), optimizer=COBYLA(maxiter=200), reps=1)

),

)

rqaoa_result = rqaoa_optimizer.solve(qp)

print("RQAOA result:", rqaoa_result.fval)

Running on Real Hardware with Qiskit Runtime

Switching from a local simulator to real IBM quantum hardware requires a few changes to the workflow. Qiskit Runtime provides cloud-based primitives (Sampler and Estimator) that run on actual quantum processors.

Connecting to IBM Quantum

from qiskit_ibm_runtime import QiskitRuntimeService, SamplerV2

from qiskit.transpiler.preset_passmanagers import generate_preset_pass_manager

# Save your credentials (only needed once)

# QiskitRuntimeService.save_account(channel="ibm_quantum", token="YOUR_API_TOKEN")

service = QiskitRuntimeService(channel="ibm_quantum")

# Select a backend

backend = service.least_busy(simulator=False, min_num_qubits=5)

print(f"Selected backend: {backend.name}")

Transpiling for the Target Hardware

Real hardware has limited qubit connectivity (not all-to-all). You must transpile the QAOA circuit to match the backend’s coupling map and native gate set.

from qiskit_algorithms import QAOA

from qiskit_algorithms.optimizers import SPSA

from qiskit_optimization.algorithms import MinimumEigenOptimizer

# Use SamplerV2 from Qiskit Runtime instead of the local Sampler

sampler_runtime = SamplerV2(backend)

# Use SPSA optimizer (better for noisy hardware than COBYLA)

spsa = SPSA(maxiter=100)

# Build QAOA with the runtime sampler

qaoa_hw = QAOA(

sampler=sampler_runtime,

optimizer=spsa,

reps=1, # keep shallow for NISQ

)

hw_optimizer = MinimumEigenOptimizer(qaoa_hw)

# hw_result = hw_optimizer.solve(qp) # uncomment to run on real hardware

Error Mitigation

Readout errors are one of the largest noise sources on current hardware. Qiskit Runtime supports error mitigation through the resilience_level option:

from qiskit_ibm_runtime import Options

options = Options()

options.resilience_level = 1 # enables readout error mitigation

options.execution.shots = 4000

sampler_mitigated = SamplerV2(backend, options=options)

- resilience_level=0: No mitigation (fastest, noisiest)

- resilience_level=1: Readout error mitigation (good balance of speed and accuracy)

- resilience_level=2: Additional zero-noise extrapolation (slowest, most accurate)

For optimization workloads, resilience_level=1 provides a meaningful improvement in solution quality without excessive overhead.

Practical Hardware Considerations

- Keep p=1 for QAOA on current hardware. Deeper circuits accumulate too much noise.

- Prefer backends with lower error rates on 2-qubit gates, not just more qubits.

- Run the exact solver on a simulator first to establish the target solution, then compare the hardware result.

- Expect approximation ratios 10-30% lower on hardware compared to noiseless simulation.

Scaling Analysis

Understanding how quantum optimization scales with problem size is essential for setting realistic expectations.

Max-Cut Scaling Table

| Nodes | QAOA Qubits | Circuit Depth (p=1) | Brute Force (2^n) | Current HW Feasible? |

|---|---|---|---|---|

| 5 | 5 | ~20 | 32 | Yes |

| 10 | 10 | ~50 | 1,024 | Yes (noisy) |

| 20 | 20 | ~120 | 1,048,576 | Marginal |

| 50 | 50 | ~300 | ~10^15 | No (too deep) |

| 100 | 100 | ~600 | ~10^30 | No |

Circuit depth is estimated as approximately 3 * (number of edges) for p=1, since each ZZ interaction requires about 3 native gates (2 CNOTs + 1 Rz). The actual depth after transpilation depends on the hardware connectivity; sparse coupling maps increase depth due to SWAP insertions.

The Honest Assessment

For problem sizes where quantum optimization could offer value over brute force (n > 30), the QAOA circuit depth exceeds what current NISQ hardware can execute reliably. Classical heuristics like simulated annealing, tabu search, and semidefinite programming relaxations routinely solve Max-Cut instances with thousands of nodes in seconds.

The path to quantum advantage in optimization requires one or more of:

- Fault-tolerant hardware: Error-corrected qubits allow deep circuits, enabling high-p QAOA or Grover-based approaches with proven speedups.

- Better algorithms: New variational ansatze or hybrid strategies that achieve good approximation ratios with shallow circuits.

- Problem-specific structure: Certain problem families may admit quantum speedups at low circuit depth, though none have been conclusively demonstrated.

Current QAOA implementations are best understood as research tools for understanding quantum optimization, not as practical alternatives to classical solvers. This will change as hardware improves, but it is important to set honest expectations.

Common Mistakes

1. Using Too Few QAOA Layers

Setting reps=1 (p=1) often gives poor approximation ratios. For 3-regular Max-Cut, p=1 achieves only 0.6924, while p=2 improves to 0.7559. Start with at least p=2 for any problem where solution quality matters.

# Too few layers

qaoa_shallow = QAOA(sampler=Sampler(), optimizer=COBYLA(maxiter=200), reps=1)

# Better starting point

qaoa_deeper = QAOA(sampler=Sampler(), optimizer=COBYLA(maxiter=200), reps=2)

2. Not Increasing COBYLA maxiter

The default maxiter=200 is often insufficient for convergence, especially with p >= 2 (which has 4+ parameters). If your QAOA result looks poor, try increasing maxiter before adding more layers.

# May not converge

qaoa_bad = QAOA(sampler=Sampler(), optimizer=COBYLA(maxiter=200), reps=3)

# Give the optimizer more room

qaoa_good = QAOA(sampler=Sampler(), optimizer=COBYLA(maxiter=1000), reps=3)

3. Forgetting That MinimumEigenOptimizer Minimizes

MinimumEigenOptimizer always minimizes. For maximization problems (like knapsack), the QuadraticProgramToQubo converter negates the objective internally. If you pass a maximization problem directly, the conversion handles this. But if you manually build the QUBO, you must negate the objective yourself.

# WRONG: manually building QUBO without negating for maximization

# qp.minimize(linear=values) # This minimizes instead of maximizes!

# CORRECT: use qp.maximize() and let the converter handle negation

qp.maximize(linear={f"x{i}": values[i] for i in range(len(values))})

4. Misinterpreting result.fval Sign

For maximization problems, result.fval stores the negated objective value (because the solver minimizes internally). To get the actual maximization objective value, negate it.

# For a maximization problem:

print(f"Stored fval: {result.fval}") # negative number

print(f"Actual objective: {-result.fval}") # positive, the true value

5. Choosing Penalty Coefficients Too Small

When penalty coefficients are too small, the optimizer finds solutions that violate constraints because the objective improvement outweighs the penalty cost. If your solutions violate constraints, increase the penalty.

from qiskit_optimization.converters import QuadraticProgramToQubo

# May be too small for some problems

converter_weak = QuadraticProgramToQubo(penalty=1.0)

# Explicitly set a large penalty

converter_strong = QuadraticProgramToQubo(penalty=100.0)

# Or let the converter choose automatically (usually safe)

converter_auto = QuadraticProgramToQubo() # penalty=None means auto

6. Expecting Quantum Advantage on Current Hardware

Current QAOA implementations on NISQ hardware do not outperform classical solvers for any practical problem size. This is a research tool, not a production solver. If you need to solve a real optimization problem today, use a classical solver (Gurobi, CPLEX, or even scipy.optimize) and use QAOA to learn about quantum algorithms.

Summary

Qiskit Optimization’s workflow is: define the problem as a QuadraticProgram, optionally convert to QUBO with QuadraticProgramToQubo, and solve with MinimumEigenOptimizer wrapping QAOA or a classical eigensolver. The same interface works for Max-Cut, knapsack, TSP, VRP, and any other problem you can express as a quadratic binary program.

The QUBO formulation is the bridge between classical optimization problems and quantum algorithms. Understanding how constraints become penalty terms, how slack variables expand the variable count, and how the QAOA circuit encodes the objective function gives you the tools to debug and improve your quantum optimization workflows.

For classical optimizer selection, COBYLA works well on simulators, SPSA is preferred on real hardware, and gradient-based methods like L-BFGS-B are best reserved for noiseless simulation. The parameter landscape visualization technique helps you understand convergence behavior and choose initialization strategies.

The key bottleneck is qubit count: problem size scales quadratically or worse in qubits for most formulations. Until fault-tolerant hardware arrives, practical quantum optimization is limited to small problem instances or requires classical decomposition techniques like RQAOA. The Grover optimizer offers an alternative approach for very small instances where an exact answer is needed.

Use the scaling analysis and common mistakes sections as a reference when building your own optimization workflows. Start with small problems where you can verify the quantum result against a classical brute-force solution, then gradually increase complexity as you build intuition for the algorithm’s behavior.

Was this tutorial helpful?