Randomized Benchmarking with Qiskit

Run randomized benchmarking protocols in Qiskit to measure average gate fidelity and diagnose systematic errors on real quantum hardware.

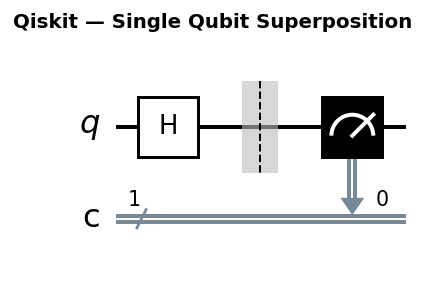

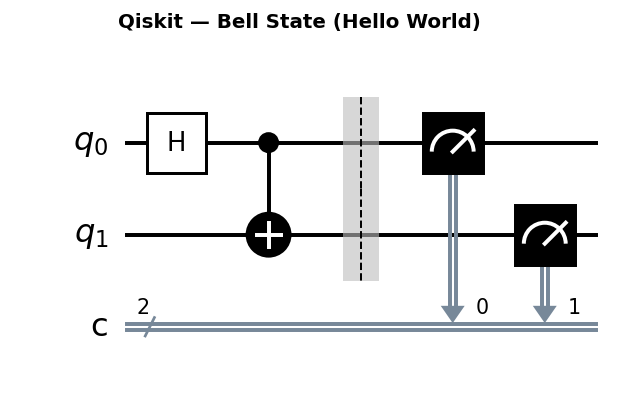

Circuit diagrams

Randomized benchmarking (RB) is the industry-standard protocol for measuring average gate fidelity on quantum hardware. Unlike process tomography, RB is efficient (the number of circuits stays modest instead of growing exponentially with the number of qubits), robust to state preparation and measurement errors, and gives a single interpretable number: the error per Clifford (EPC). If you operate a quantum processor or run algorithms on one, RB is the first tool you should reach for when asking “how good are my gates, really?”

Why Gate Fidelity Matters

Average gate fidelity is, loosely, the probability that a quantum gate does exactly what it was supposed to do, averaged over all possible input states. A gate with 99.9% fidelity sounds nearly perfect, but consider what that means in practice: 1 error in every 1,000 gate applications. A circuit with 100 gates accumulates roughly a 10% probability of at least one error. A 1,000-gate circuit has roughly a 63% probability of at least one error, so it fails more often than it succeeds. Fidelity numbers that look impressive in isolation become alarming when you compound them over circuit depth.

This is why measuring gate fidelity precisely matters so much. Small improvements (say, from 99.5% to 99.9%) translate to dramatically longer circuits that can run successfully. And you cannot improve what you cannot measure. RB gives you that measurement cheaply, reliably, and in a way that separates gate errors from other noise sources.
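The arithmetic behind these figures can be checked in a few lines. This is a minimal sketch that assumes every gate fails independently with the same probability; the function names are illustrative only.

```python
import math

def prob_at_least_one_error(fidelity, n_gates):
    """Probability that at least one of n_gates independent gates fails."""
    return 1 - fidelity ** n_gates

def max_gates(fidelity, target_success=0.5):
    """Largest gate count whose all-gates-succeed probability stays >= target."""
    return math.floor(math.log(target_success) / math.log(fidelity))

for n in (100, 1000):
    print(f"{n} gates at 99.9%: P(error) = {prob_at_least_one_error(0.999, n):.3f}")

# Circuit depth that still succeeds at least half the time
print("99.5% fidelity:", max_gates(0.995), "gates")
print("99.9% fidelity:", max_gates(0.999), "gates")
```

Under this simple model, moving from 99.5% to 99.9% fidelity raises the usable depth by roughly a factor of five.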

How Randomized Benchmarking Works

The core idea of RB is elegant: apply a sequence of random gates that should compose to the identity, then check whether the output is correct. If the gates were perfect, you would always get the right answer. Gate errors cause the success probability to decay as the sequence gets longer, and the rate of that decay tells you the error per gate.

Here is the protocol in detail:

Step 1: Build random Clifford sequences. For a given sequence length m, choose m random elements from the Clifford group. The Clifford group is the set of all unitaries that map Pauli operators to Pauli operators under conjugation. For a single qubit, this group has 24 elements; for two qubits, it has 11,520.

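Why Clifford gates specifically? Because the Clifford group forms a unitary 2-design. This is a precise mathematical property meaning that, for the second-moment quantities that determine average fidelity, averaging over random Clifford gates gives the same result as averaging over all possible unitary gates. The averaging turns the noise into an effective depolarizing channel, so gate-dependent errors (where some gates are noisier than others) are, to a good approximation, averaged out, leaving only the average error rate. This is the key property that makes RB robust: you do not need to know the structure of the noise to get a reliable estimate. Cliffords are also cheap to compose and invert classically, which makes Step 2 practical.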

Step 2: Append the inverse. After choosing m random Cliffords C_1, C_2, …, C_m, compute their composition C_m * … * C_2 * C_1 and append its inverse as the final gate. The full sequence now implements the identity operation. On a noiseless device, the qubits return to their initial state (the all-zeros state |00…0>).
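Steps 1 and 2 can be sketched without any quantum library. The sketch below ignores global phase and represents each single-qubit Clifford by how it rotates the Pauli axes (X, Y, Z), that is, as a signed 3x3 permutation matrix with determinant +1. This representation and all helper names are illustrative assumptions, not how qiskit-experiments builds its sequences.

```python
import itertools
import random

IDENTITY = ((1, 0, 0), (0, 1, 0), (0, 0, 1))

def det3(m):
    """Determinant of a 3x3 matrix given as a tuple of row tuples."""
    (a, b, c), (d, e, f), (g, h, i) = m
    return a * (e * i - f * h) - b * (d * i - f * g) + c * (d * h - e * g)

def matmul(a, b):
    """3x3 matrix product a @ b."""
    return tuple(
        tuple(sum(a[r][k] * b[k][c] for k in range(3)) for c in range(3))
        for r in range(3)
    )

def single_qubit_cliffords():
    """All proper rotations that map Pauli axes to (signed) Pauli axes."""
    group = []
    for perm in itertools.permutations(range(3)):
        for signs in itertools.product((1, -1), repeat=3):
            m = tuple(
                tuple(signs[r] if c == perm[r] else 0 for c in range(3))
                for r in range(3)
            )
            if det3(m) == 1:
                group.append(m)
    return group

def rb_sequence(m, rng):
    """m random Cliffords followed by the inverse of their composition."""
    cliffords = single_qubit_cliffords()
    seq = [rng.choice(cliffords) for _ in range(m)]
    total = IDENTITY
    for c in seq:
        total = matmul(c, total)      # later gates multiply from the left
    seq.append(tuple(zip(*total)))    # inverse of a rotation is its transpose
    return seq

seq = rb_sequence(10, random.Random(42))
net = IDENTITY
for c in seq:
    net = matmul(c, net)
print(len(single_qubit_cliffords()), net == IDENTITY)
```

The group size printed is 24, matching the single-qubit count quoted in Step 1, and the full sequence composes to the identity.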

Step 3: Measure the survival probability. Run the circuit many times (typically 1,024 or more shots) and record the fraction of outcomes that give the correct all-zeros result. This fraction is the survival probability for sequence length m. On a noiseless device, survival probability is always 1.0. With noise, it drops below 1.0, and the longer the sequence, the lower it goes.
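As a small illustration of Step 3, the survival probability can be computed from a dictionary of measurement counts. The counts below are invented example data, and the sketch assumes bitstring keys with no spaces.

```python
def survival_probability(counts):
    """Fraction of shots that returned the all-zeros bitstring."""
    shots = sum(counts.values())
    if shots == 0:
        raise ValueError("counts is empty")
    n_bits = len(next(iter(counts)))
    return counts.get("0" * n_bits, 0) / shots

# Made-up two-qubit result with 2,048 shots
counts = {"00": 1900, "01": 60, "10": 70, "11": 18}
print(f"Survival probability: {survival_probability(counts):.4f}")
```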

Step 4: Repeat and fit. Generate multiple random sequences for each length m (to reduce statistical variance), repeat for many values of m, and fit the survival probability to the exponential decay model:

P(m) = A * p^m + B

Each parameter in this formula has a clear physical meaning:

- A (amplitude) absorbs state preparation and measurement (SPAM) errors. With perfect SPAM the survival probability at zero length is A + B = 1, so A = 1 - 1/d (0.5 for a single qubit); real SPAM errors reduce it. Crucially, A scales the overall curve but does not affect the decay rate.

- p (depolarizing parameter) captures the actual gate quality. It ranges from 0 (completely depolarizing) to 1 (perfect gates). This is the number you care about most.

- B (asymptotic baseline) is the floor that the survival probability decays toward. Without any quantum coherence, the system reaches the completely mixed state, which gives the correct measurement outcome with probability 1/d, where d = 2^n is the Hilbert space dimension. For a single qubit, B = 0.5; for two qubits, B = 0.25.

This decomposition is precisely why RB is SPAM-robust. SPAM errors affect A and B but not p. Since the error rate comes from p alone, your gate quality estimate is insensitive to how well you prepare and measure states.

Step 5: Compute the error per Clifford. The depolarizing parameter p converts to the error per Clifford (EPC) through:

EPC = (1 - p) * (1 - 1/d)

For a single qubit (d = 2), this simplifies to EPC = (1 - p) / 2. The factor (1 - 1/d) accounts for the fact that a completely depolarizing channel still gives the correct answer by chance with probability 1/d. The EPC is therefore the probability that the gate introduces a detectable error, averaged over all input states and all Clifford gates.
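Steps 4 and 5 can be illustrated on synthetic data. This sketch makes a simplifying assumption that the real analysis does not: it fixes B = 1/d and fits the remaining two parameters with a log-linear least-squares fit. The data are generated from made-up values of A and p, and the function names are illustrative.

```python
import math

def fit_decay_fixed_baseline(lengths, survival, d):
    """Fit log(P - 1/d) = log(A) + m * log(p) by ordinary least squares."""
    b = 1.0 / d
    xs = list(lengths)
    ys = [math.log(s - b) for s in survival]   # requires every P(m) > 1/d
    n = len(xs)
    x_mean = sum(xs) / n
    y_mean = sum(ys) / n
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    sxx = sum((x - x_mean) ** 2 for x in xs)
    slope = sxy / sxx
    intercept = y_mean - slope * x_mean
    return math.exp(intercept), math.exp(slope)   # (A, p)

def epc_from_p(p, d):
    """EPC = (1 - p) * (1 - 1/d)."""
    return (1 - p) * (1 - 1 / d)

d = 2
true_a, true_p = 0.48, 0.995                      # assumed values for the demo
lengths = [1, 10, 20, 50, 75, 100, 125, 150]
survival = [true_a * true_p ** m + 1 / d for m in lengths]

a_fit, p_fit = fit_decay_fixed_baseline(lengths, survival, d)
print(f"A = {a_fit:.3f}, p = {p_fit:.4f}, EPC = {epc_from_p(p_fit, d):.5f}")
```

With noisy data, or when B is not known in advance, a nonlinear three-parameter fit is the better choice.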

Installation

pip install qiskit qiskit-aer qiskit-experiments

Note: All code in this tutorial requires qiskit-experiments. Install it before running any blocks.

Running Standard RB

from qiskit_aer import AerSimulator
from qiskit_experiments.library import StandardRB

# Define qubit(s) to benchmark and Clifford sequence lengths
qubits = (0,)
lengths = [1, 10, 20, 50, 75, 100, 125, 150]
num_samples = 10  # random sequences per length

rb_exp = StandardRB(
    physical_qubits=qubits,
    lengths=lengths,
    num_samples=num_samples,
    seed=42,
)

# AerSimulator() is noiseless by default, so expect an EPC near zero here;
# attach a noise model or use a hardware backend to see a real decay
backend = AerSimulator()
job = rb_exp.run(backend, shots=1024)
result = job.block_for_results()

# Extract EPC; the fitted value is a ufloat carrying its own standard error
# (chisq is the goodness of fit, not the uncertainty)
epc = result.analysis_results("EPC")
print(f"Error per Clifford: {epc.value.nominal_value:.6f} +/- {epc.value.std_dev:.6f}")

Choosing Sequence Lengths

The sequence lengths you choose directly affect the quality of your fit. The goal is to sample enough of the exponential decay curve to pin down the decay rate p with low uncertainty. Here are the key guidelines:

Cover the full decay range. You want lengths that span from near-unity survival probability down to the asymptotic floor B. If all your data points sit at the top of the curve (short sequences only), the fit has very little information about the slope. If all your points sit at the floor (sequences too long), everything looks the same and the fit is again unconstrained.

Target 3 to 5 half-lives. The “half-life” of the decay is the sequence length at which the survival probability drops halfway from its starting value to the floor B. If you estimate that your error per Clifford is roughly r, then p is approximately 1 - 2r (for a single qubit), and the half-life is ln(2) / ln(1/p). Your longest sequence should be 3 to 5 times this value. For typical single-qubit error rates of 0.1% to 0.5%, the half-life ranges from about 350 down to about 70 Cliffords, which means maximum lengths from roughly 200 (3 half-lives at 0.5%) to roughly 1,700 (5 half-lives at 0.1%).

Use logarithmic or semi-logarithmic spacing. The decay is exponential, so the curve changes most rapidly at short lengths and flattens at long lengths. Packing more data points at short lengths (where the information content per point is highest) and spacing them out at longer lengths gives a better fit. A practical approach: start with lengths like [1, 2, 5, 10, 20, 50, 100, 200, 500] and adjust based on initial results.

Include length 1. A single Clifford plus its inverse provides a data point that is almost entirely dominated by SPAM errors, which helps the fitter separate A from p.

Run a pilot experiment first. If you have no idea what error rate to expect, run a quick RB experiment with a broad range of lengths (say, [1, 10, 50, 100, 500, 1000]) and just 3 samples per length. Examine the raw data to see where the survival probability reaches the floor, then design your full experiment around that range.
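The guidelines above can be turned into a small helper. This is a rough sketch under stated assumptions: the expected error per Clifford is supplied by you, four half-lives is an arbitrary middle choice, and `suggest_lengths` is a hypothetical name rather than anything provided by qiskit-experiments.

```python
import math

def half_life(epc, d=2):
    """Sequence length at which the decaying term A * p^m has halved."""
    p = 1 - epc / (1 - 1 / d)
    return math.log(2) / math.log(1 / p)

def suggest_lengths(epc, d=2, n_half_lives=4, n_points=9):
    """Roughly log-spaced lengths from 1 up to n_half_lives half-lives."""
    max_len = max(2, round(n_half_lives * half_life(epc, d)))
    raw = [max_len ** (i / (n_points - 1)) for i in range(n_points)]
    # The set removes duplicates created by rounding at short lengths
    return sorted({max(1, round(x)) for x in raw})

for epc in (0.001, 0.005):
    print(f"EPC {epc}: half-life ~ {half_life(epc):.0f} Cliffords, "
          f"lengths {suggest_lengths(epc)}")
```

Treat the output as a starting point and adjust it after looking at pilot data.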

Plotting the Decay Curve

# Requires: qiskit_experiments
# In recent qiskit-experiments releases, figure(0) returns a FigureData wrapper
# whose .figure attribute holds the Matplotlib figure
fig = result.figure(0).figure
fig.savefig("rb_decay.png", dpi=150, bbox_inches="tight")

The plot shows the measured survival probabilities at each sequence length along with the fitted exponential. Systematic deviations from the fit can indicate non-Markovian noise or strongly gate-dependent errors.

Two-Qubit Randomized Benchmarking

Two-qubit RB characterizes the joint performance of two qubits and the entangling gate:

# Requires: qiskit_experiments
qubits_2q = (0, 1)
lengths_2q = [1, 5, 10, 20, 30, 40, 50]
num_samples = 10

rb_2q = StandardRB(
    physical_qubits=qubits_2q,
    lengths=lengths_2q,
    num_samples=num_samples,
    seed=42,
)

job = rb_2q.run(backend, shots=2048)
result_2q = job.block_for_results()

epc_2q = result_2q.analysis_results("EPC")
print(f"2Q Error per Clifford: {epc_2q.value:.5f}")

Two-qubit Clifford EPC is typically 10 to 50 times larger than single-qubit EPC on real devices. This reflects the fact that two-qubit Cliffords decompose into many more native gates (about 1.5 CNOTs on average, plus several single-qubit gates), and the two-qubit gate itself is usually the noisiest operation on the processor.

Note that the sequence lengths for two-qubit RB are shorter than for single-qubit RB. This is because the higher error rate causes faster decay, so the survival probability reaches the floor sooner. If you use the same lengths as single-qubit RB, most of your data points will sit at the noise floor and the fit will be poor.

Interleaved RB: Measuring a Specific Gate

Standard RB gives you the average error over all Clifford gates, but you often want to know the error of one specific gate (for example, the CNOT). Interleaved RB solves this by alternating the target gate with random Cliffords:

from qiskit import QuantumCircuit
from qiskit_experiments.library import InterleavedRB

# Target gate: CNOT
cnot = QuantumCircuit(2, name="cx")
cnot.cx(0, 1)

irb = InterleavedRB(
    interleaved_element=cnot,
    physical_qubits=(0, 1),
    lengths=[1, 5, 10, 20, 30],
    num_samples=10,
    seed=42,
)

job = irb.run(backend, shots=2048)
result_irb = job.block_for_results()

epc_gate = result_irb.analysis_results("EPC")
print(f"CNOT error per gate: {epc_gate.value:.5f}")

Interleaved RB runs two experiments: a standard RB experiment and a second experiment where the target gate is inserted after each random Clifford. The ratio of the two decay parameters gives an estimate of the target gate’s error. Technically, this estimate is not an exact measurement: the true gate error is only guaranteed to lie within a systematic interval around it. The interval is tight when the noise is close to depolarizing, but can be wide for highly coherent errors.
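The ratio mentioned above can be written out explicitly. This is a minimal sketch of the standard interleaved estimate, using made-up decay parameters for illustration; it does not compute the systematic interval.

```python
def interleaved_gate_error(p_ref, p_int, d):
    """Gate error estimate from reference and interleaved decay parameters."""
    return (1 - p_int / p_ref) * (1 - 1 / d)

# Assumed two-qubit example values (d = 4), not measured data
p_ref = 0.970   # decay parameter of the standard RB curve
p_int = 0.955   # decay parameter of the interleaved curve
print(f"Estimated gate error: {interleaved_gate_error(p_ref, p_int, d=4):.5f}")
```

If the interleaved curve decays no faster than the reference (p_int equal to p_ref), the estimate is zero, which is the sanity check to keep in mind when the two fits are noisy.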

Interpreting Results

| Metric | Typical Value (Superconducting) |
|---|---|
| 1Q EPC | 0.001 - 0.005 |
| 2Q EPC | 0.01 - 0.05 |
| 1Q Average Gate Fidelity | > 99.5% |
| 2Q Average Gate Fidelity | > 95% |

These ranges reflect current state-of-the-art superconducting processors. Trapped-ion systems often achieve lower 1Q EPC (below 0.001) and comparable or better 2Q EPC, though at slower gate speeds.

What to check when results look wrong

EPC is 10x higher than expected. First, verify that you selected the right physical qubits. On many devices, some qubits are significantly noisier than others due to fabrication variation or proximity to defects. Check the device’s calibration data for the specific qubits you targeted. If the qubits look fine individually, check for crosstalk: simultaneous operations on neighboring qubits can increase error rates substantially.

The decay is not exponential. RB theory predicts a clean single-exponential decay when the noise is Markovian (memoryless) and gate-independent. If your data clearly deviates from the exponential fit, this signals a problem. Non-Markovian noise (where the error rate changes during the sequence due to drift, heating, or 1/f noise) causes the decay to curve away from the fit. Gate-dependent errors can also break the single-exponential model. In both cases, the fitted EPC is still meaningful as an average, but the uncertainty is larger than the fit might suggest. Consider running multiple RB experiments over time to check for drift.

A is very low (well below the ideal 1 - 1/d, which is 0.5 for a single qubit and 0.75 for two qubits). The amplitude A reflects SPAM quality. Because the curve starts near A + B, an A value near zero means your state preparation or measurement is so noisy that the experiment starts almost at the noise floor, leaving very little dynamic range for the decay. The fit for p will have large uncertainty. To fix this, investigate your readout calibration: check readout assignment fidelity, T1 decay during measurement, and state preparation errors.

B deviates significantly from 1/d. In theory, B = 1/d (0.5 for one qubit, 0.25 for two qubits). A significant deviation can indicate leakage to states outside the computational subspace (common in transmon qubits, which have higher energy levels beyond |0> and |1>). Leakage causes B to drop below 1/d because the leaked population never returns the correct measurement outcome.

When to Use RB (and When Not To)

RB is not the only characterization tool available, and choosing the right tool for the job matters. Here is how RB compares to the main alternatives:

Quantum Process Tomography (QPT)

QPT reconstructs the full quantum channel (the complete mathematical description of what the gate actually does, including all error mechanisms). It answers detailed questions: “Is the error mostly dephasing? Is there a coherent over-rotation? How much leakage is there?” The cost is steep. QPT requires preparing a tomographically complete set of input states and measuring a complete set of output observables. For n qubits this means O(16^n) circuits, making it impractical beyond 2 or 3 qubits. QPT is also sensitive to SPAM errors, which can bias the reconstructed channel. Use QPT when you need detailed error diagnosis for a small gate set, not for routine monitoring.

Gate Set Tomography (GST)

GST is a self-consistent protocol that simultaneously characterizes the gates, state preparation, and measurement. It avoids the SPAM sensitivity of QPT by treating preparation and measurement as unknowns alongside the gates. GST produces a complete, gauge-consistent description of your gate set. The tradeoff is complexity: GST requires carefully designed circuit sets, longer experiments, and more sophisticated analysis. It scales better than full QPT but is still much heavier than RB. Use GST when you need the full error channel for a small gate set and are willing to invest the experimental time.

Cross-Entropy Benchmarking (XEB)

XEB, used prominently by Google, compares measured output distributions to ideal simulations. It works with non-Clifford gates (unlike standard RB, which is restricted to the Clifford group) and can benchmark circuits that are classically hard to simulate. The downside is that XEB requires a classical simulation of the ideal circuit, which limits it to moderate circuit sizes. It also conflates gate errors with other noise sources differently than RB does.

When RB is the right choice

Use randomized benchmarking for:

- Routine monitoring of gate quality over time. RB is fast enough to run between calibration cycles.

- Quick sanity checks before running an algorithm. A 5-minute RB experiment tells you whether the device is performing as expected.

- Comparing hardware before and after a calibration update. RB gives you a single number to compare.

- Setting performance baselines for a new device or a new set of qubits.

- Isolating specific gate errors via interleaved RB when you need more detail than standard RB provides but less than full tomography.

Common Mistakes

Using too few samples per length

Each data point in the RB decay curve is an average over multiple random sequences. With fewer than 5 samples per length, the variance of each point is large, and the exponential fit can be unreliable. For publication-quality results, use 20 or more samples per length. For quick diagnostics, 10 samples is usually sufficient. Fewer than 5 is risky.

Not running enough shots per circuit

Each circuit needs enough measurement shots to estimate the survival probability with reasonable precision. At 100 shots, the statistical uncertainty on a probability near 0.9 is about 0.03, which is comparable to the signal you are trying to measure. Use at least 1,024 shots per circuit. For two-qubit RB (where the survival probability decays faster and the asymptotic floor is lower), 2,048 or more shots reduce noise in the fit.
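The shot-noise figure quoted above comes from the binomial standard error. A quick sketch, with an illustrative function name:

```python
import math

def shot_noise(p, shots):
    """Standard error of a probability estimated from `shots` samples."""
    return math.sqrt(p * (1 - p) / shots)

for shots in (100, 1024, 2048):
    print(f"{shots:5d} shots: +/- {shot_noise(0.9, shots):.4f}")
```

Because the error scales as one over the square root of the shot count, going from 100 to 1,024 shots cuts it by a factor of about three.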

Confusing EPC with native gate error rate

The EPC measures the average error per Clifford gate, but a Clifford gate is not a single native gate on the hardware. Each single-qubit Clifford decomposes into an average of approximately 1.875 native single-qubit gates when built from π and π/2 rotations about X and Y (a common choice; the exact number depends on the native gate set). For two-qubit Cliffords, the decomposition is more expensive: about 1.5 CNOT gates on average, plus several single-qubit gates.

To estimate the error per native gate from the Clifford EPC, divide by the average number of native gates per Clifford. For single-qubit: native error is roughly EPC / 1.875. This is an approximation that assumes errors add linearly, which holds when error rates are small.
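The conversion can be sketched as follows, comparing the linear approximation with a version that assumes fidelities multiply across independent native gates. The default of 1.875 gates per Clifford is the figure quoted above; the EPC value is an arbitrary example.

```python
def native_gate_error(epc, gates_per_clifford=1.875):
    """Return (linear estimate, multiplicative-fidelity estimate)."""
    linear = epc / gates_per_clifford
    compounded = 1 - (1 - epc) ** (1 / gates_per_clifford)
    return linear, compounded

linear, compounded = native_gate_error(0.003)
print(f"linear: {linear:.6f}, compounded: {compounded:.6f}")
```

At error rates this small the two estimates agree to several decimal places, which is why the simple division is usually good enough.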

Running two-qubit RB on non-adjacent qubits

Two-qubit Clifford gates require two-qubit interactions, which on most hardware only exist between physically adjacent qubits. If you run two-qubit RB on qubits (0, 3) and the device only has connectivity 0-1-2-3, the transpiler will insert SWAP gates to route the two-qubit operations. These extra gates introduce additional errors that inflate the measured EPC beyond what you would see for a directly connected pair. Always check the device’s coupling map before selecting qubits for two-qubit RB.
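A connectivity check of this kind is easy to script. The sketch below uses a hypothetical four-qubit linear chain written as a plain list of edges; on a real device you would take the edges from the backend's coupling map instead.

```python
def are_adjacent(coupling_map, q0, q1):
    """True if q0 and q1 share a direct edge, in either direction."""
    edges = {frozenset(edge) for edge in coupling_map}
    return frozenset((q0, q1)) in edges

# Hypothetical device with connectivity 0-1-2-3
linear_chain = [(0, 1), (1, 2), (2, 3)]

for pair in [(0, 1), (2, 1), (0, 3)]:
    status = "ok" if are_adjacent(linear_chain, *pair) else "needs SWAPs"
    print(pair, status)
```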

Ignoring transpilation effects

When running on real hardware, Qiskit transpiles your circuits into the device’s native gate set. The transpilation choices (optimization level, routing strategy, gate decompositions) affect the number of native gates per Clifford. If you are comparing RB results across different software versions or transpilation settings, differences in the transpiled circuits can cause apparent changes in EPC that have nothing to do with the hardware. Pin your transpilation settings for consistent comparisons.

Not accounting for drift

Gate error rates on real hardware drift over time due to fluctuations in control electronics, two-level system (TLS) defects, and environmental noise. An RB experiment that takes 30 minutes might see different error rates at the beginning and end. This manifests as a non-exponential decay or inconsistent results between runs. For high-precision work, interleave your sequence lengths randomly rather than running all short sequences first and all long sequences last. Check how your version of qiskit-experiments orders the circuits it submits rather than assuming a particular order, and shuffle explicitly if you are constructing circuits manually.
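If you build circuits by hand, randomizing the execution order takes only a few lines. This sketch shuffles (length, sample index) pairs; the function name is illustrative, and mapping each pair to an actual circuit is left to your own code.

```python
import random

def shuffled_schedule(lengths, num_samples, seed=None):
    """All (length, sample_index) pairs in a random execution order."""
    schedule = [(m, s) for m in lengths for s in range(num_samples)]
    random.Random(seed).shuffle(schedule)
    return schedule

schedule = shuffled_schedule([1, 10, 50, 100], num_samples=3, seed=7)
print(schedule)
```

Keep the pair attached to each result so the data can be regrouped by length before fitting.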

RB vs. Process Tomography

RB is preferred over QPT for routine gate quality checks because its circuit count does not grow with the number of qubits, it gives a SPAM-robust estimate of average fidelity, and it automatically averages over gate-dependent errors. A single-qubit QPT experiment needs on the order of 16 circuits (4 input states times up to 4 measurement settings) and is SPAM-sensitive. A single-qubit RB experiment with 8 lengths and 10 samples needs 80 circuits, which is more, but it gives a SPAM-robust error estimate. The balance shifts at two qubits: QPT needs roughly 256 circuits, while RB still needs only about 80 for a reliable fit. Use QPT when you need the full channel description for detailed error modeling; use RB when you need a reliable quality number.
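The circuit counts in this comparison follow from simple arithmetic. The sketch assumes QPT uses 16^n preparation and measurement settings, matching the scaling stated earlier, and that RB uses 8 lengths with 10 samples each regardless of qubit count.

```python
def qpt_circuits(n_qubits):
    """Settings for process tomography under the 16^n scaling assumption."""
    return 16 ** n_qubits

def rb_circuits(num_lengths=8, num_samples=10):
    """RB circuit count: one circuit per (length, sample) pair."""
    return num_lengths * num_samples

for n in (1, 2, 3):
    print(f"{n} qubit(s): QPT {qpt_circuits(n):5d} circuits, RB {rb_circuits()} circuits")
```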
