QAOA for MaxCut: A Complete Qiskit Walkthrough

Build the Quantum Approximate Optimization Algorithm from scratch in Qiskit to solve MaxCut on small graphs. Understand the circuit structure, cost function, and how to tune the depth parameter p.

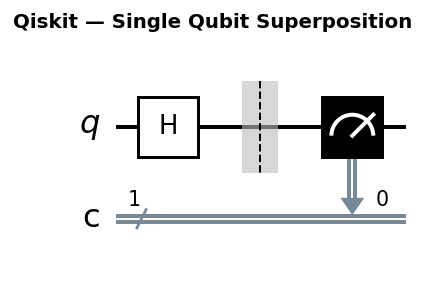

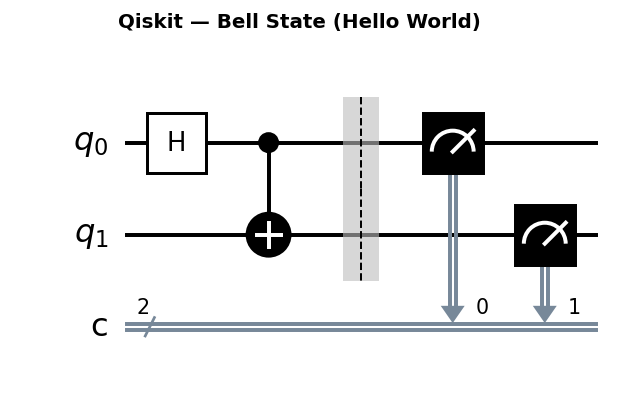

Circuit diagrams

QAOA (Quantum Approximate Optimization Algorithm) is one of the most studied near-term quantum algorithms. It targets combinatorial optimization problems, problems where you want to find the best assignment from a discrete set of possibilities. MaxCut is the standard benchmark: given a graph, partition vertices into two groups to maximize edges between them.

This tutorial builds QAOA for MaxCut from scratch in Qiskit, explains why the circuit looks the way it does, and shows how increasing depth parameter p improves solution quality. Along the way you will learn the gate-level decomposition, extend to weighted graphs, visualize the optimization landscape, analyze circuit depth for hardware, and implement Recursive QAOA.

Setup

pip install qiskit qiskit-aer networkx scipy matplotlib

import numpy as np

from scipy.optimize import minimize

import networkx as nx

import matplotlib.pyplot as plt

from qiskit import QuantumCircuit

from qiskit.quantum_info import SparsePauliOp, Operator

from qiskit.primitives import StatevectorEstimator, StatevectorSampler

from qiskit.circuit import ParameterVector

The MaxCut problem

Given a graph G = (V, E), partition vertices V into sets S and complement S-bar. The cut value is the number of edges (u, v) where u and v are in different sets. MaxCut finds the partition that maximizes this count.

import networkx as nx

# Build a 4-node test graph

G = nx.Graph()

G.add_edges_from([(0,1), (1,2), (2,3), (3,0), (0,2)])

# Brute-force optimal (feasible for n=4)

best_cut = 0

best_partition = None

for mask in range(2**G.number_of_nodes()):

partition = [(mask >> i) & 1 for i in range(G.number_of_nodes())]

cut = sum(1 for (u, v) in G.edges() if partition[u] != partition[v])

if cut > best_cut:

best_cut = cut

best_partition = partition

print(f"Optimal cut: {best_cut}, partition: {best_partition}")

For this 4-node graph with 5 edges, the optimal cut is 4 (only one edge remains uncut). There are multiple optimal partitions. As the graph grows, brute-force becomes infeasible: the search space is 2^n.

The cost Hamiltonian

Each vertex is a qubit: |0> means “in set S”, |1> means “in complement”. An edge (u, v) contributes 1 when the qubits differ. The cost operator for edge (u, v):

C_(u,v) = (1 - Z_u Z_v) / 2

When both qubits match (both |0> or both |1>), Z_u Z_v = +1 and C = 0. When they differ, Z_u Z_v = -1 and C = 1. The total cost Hamiltonian is:

C = sum_{(u,v) in E} (1 - Z_u Z_v) / 2

Each computational basis state |x> is an eigenstate of C with eigenvalue equal to the cut value of partition x. This is the key property that makes QAOA work: the cost Hamiltonian encodes the objective function directly into its eigenvalues.

def maxcut_hamiltonian(graph: nx.Graph) -> SparsePauliOp:

n = graph.number_of_nodes()

terms = []

for (u, v) in graph.edges():

pauli_str = ['I'] * n

pauli_str[u] = 'Z'

pauli_str[v] = 'Z'

zz_str = ''.join(reversed(pauli_str)) # Qiskit: rightmost = qubit 0

terms.append((zz_str, -0.5))

terms.append(('I' * n, 0.5))

return SparsePauliOp.from_list(terms).simplify()

H_cost = maxcut_hamiltonian(G)

print(H_cost)

QAOA theory: why it works

QAOA prepares a trial state by alternating two unitaries for p layers. Start from the uniform superposition |+>^n, which places equal amplitude on every computational basis state:

|psi(gamma, beta)> = U_B(beta_p) U_C(gamma_p) ... U_B(beta_1) U_C(gamma_1) |+>^n

The two unitaries serve distinct roles:

Problem unitary U_C(gamma) = exp(-i * gamma * C) applies a phase to each basis state proportional to its cut value. States with large cuts accumulate large phases. States with small cuts accumulate small phases. This is a diagonal operator in the computational basis: it changes no amplitudes, only phases.

Mixer unitary U_B(beta) = exp(-i * beta * B) where B = sum_i X_i. Because X_i flips qubit i, this operator mixes amplitude between nearby basis states (those differing by a single bit flip). It converts the phase differences planted by U_C into amplitude differences.

The interplay between these two operations creates constructive interference on high-cut states and destructive interference on low-cut states. Each additional layer (higher p) provides finer control over this interference pattern.

For p approaching infinity, QAOA recovers the adiabatic algorithm: a continuous path from the ground state of B (the uniform superposition) to the ground state of C (the optimal cut). The quantum adiabatic theorem guarantees this converges to the exact solution, provided the path is traversed slowly enough. Finite-p QAOA is a Trotterized (discretized) version of this continuous evolution.

The mathematical structure is:

<C> = <+|^n [prod_{k=1}^{p} U_C(gamma_k)^dag U_B(beta_k)^dag] C [prod_{k=1}^{p} U_B(beta_k) U_C(gamma_k)] |+>^n

This is a 2p-parameter variational landscape. Classical optimization searches for the (gamma*, beta*) that maximizes

Gate decomposition of U_C

For MaxCut, the cost unitary for a single edge (u, v) is:

exp(-i * gamma * (1 - Z_u Z_v) / 2) = exp(-i * gamma / 2) * exp(i * gamma * Z_u Z_v / 2)

The first factor exp(-i * gamma / 2) is a global phase and has no effect on measurement outcomes. We drop it. The remaining operator is:

exp(i * gamma * Z_u Z_v / 2)

To understand the ZZ rotation, consider the matrix form. In the two-qubit computational basis {|00>, |01>, |10>, |11>}, the operator Z_u tensor Z_v is diagonal with eigenvalues (+1, -1, -1, +1). So:

exp(i * theta * ZZ) = diag(e^{i*theta}, e^{-i*theta}, e^{-i*theta}, e^{i*theta})

Written as a full matrix with theta = gamma/2:

| cos(theta) + i*sin(theta) 0 0 0 |

| 0 cos(theta) - i*sin(theta) 0 0 |

| 0 0 cos(theta) - i*sin(theta) 0 |

| 0 0 0 cos(theta) + i*sin(theta) |

This decomposes into standard gates as: CNOT(u, v), Rz(2*gamma, v), CNOT(u, v). Here is the derivation:

- CNOT maps Z_u tensor Z_v to I tensor Z_v (it diagonalizes the ZZ interaction onto qubit v alone).

- Rz(2*gamma, v) = exp(-i * gamma * Z_v) applies the desired phase to qubit v.

- The second CNOT undoes the basis change.

The net result is exp(i * gamma * Z_u Z_v / 2) up to a global phase absorbed by Rz.

Let’s verify this decomposition in Qiskit:

from qiskit.quantum_info import Operator

import numpy as np

gamma_val = 0.7 # arbitrary test value

# Build the ZZ rotation using CNOT-Rz-CNOT

qc_decomp = QuantumCircuit(2)

qc_decomp.cx(0, 1)

qc_decomp.rz(2 * gamma_val, 1)

qc_decomp.cx(0, 1)

# Build the exact ZZ rotation matrix

theta = gamma_val / 2

zz_exact = np.diag([

np.exp(1j * gamma_val / 2),

np.exp(-1j * gamma_val / 2),

np.exp(-1j * gamma_val / 2),

np.exp(1j * gamma_val / 2)

])

# Compare (up to global phase)

op_decomp = Operator(qc_decomp).data

# Align global phase by matching element (0,0)

phase = zz_exact[0, 0] / op_decomp[0, 0]

print("Max error:", np.max(np.abs(zz_exact - phase * op_decomp)))

# Should print ~0.0 (machine precision)

The QAOA circuit

QAOA alternates between two unitaries for p layers, starting from the uniform superposition:

- Problem unitary U_C(gamma): encodes the cost Hamiltonian

- Mixer unitary U_B(beta): X rotations enabling exploration

For MaxCut, U_C(gamma) on edge (u,v) decomposes to: CX, RZ(2*gamma), CX.

def build_qaoa_circuit(graph: nx.Graph, p: int):

n = graph.number_of_nodes()

gamma = ParameterVector('gamma', p)

beta = ParameterVector('beta', p)

qc = QuantumCircuit(n)

qc.h(range(n)) # Start in |+>^n

for layer in range(p):

# Problem unitary: apply ZZ rotation for each edge

for (u, v) in graph.edges():

qc.cx(u, v)

qc.rz(2 * gamma[layer], v)

qc.cx(u, v)

# Mixer unitary: X rotation on each qubit

for i in range(n):

qc.rx(2 * beta[layer], i)

return qc, gamma, beta

qc, gamma_params, beta_params = build_qaoa_circuit(G, p=1)

print(f"p=1: depth={qc.depth()}, params={qc.num_parameters}")

Optimizing the parameters

The QAOA expectation value is a function of (gamma, beta). Classical optimization finds the parameters that maximize the expected cut value.

def qaoa_cost(params, qc, gamma_params, beta_params, H, p):

estimator = StatevectorEstimator()

gamma_vals = params[:p]

beta_vals = params[p:]

param_dict = {g: gamma_vals[i] for i, g in enumerate(gamma_params)}

param_dict.update({b: beta_vals[i] for i, b in enumerate(beta_params)})

bound_qc = qc.assign_parameters(param_dict)

result = estimator.run([(bound_qc, H)]).result()

return -float(result[0].data.evs) # negate: minimize() -> we want max

p = 1

qc, gamma_params, beta_params = build_qaoa_circuit(G, p)

np.random.seed(42)

result = minimize(

qaoa_cost, np.random.uniform(0, np.pi, 2*p),

args=(qc, gamma_params, beta_params, H_cost, p),

method='COBYLA', options={'maxiter': 500}

)

optimal_params = result.x

print(f"Optimal cost: {-result.fun:.4f} (classical optimal: {best_cut})")

print(f"Approximation ratio: {-result.fun/best_cut:.4f}")

Sampling the solution

def sample_qaoa(qc, gamma_params, beta_params, params, p, shots=2048):

sampler = StatevectorSampler()

param_dict = {g: params[i] for i, g in enumerate(gamma_params)}

param_dict.update({b: params[p+i] for i, b in enumerate(beta_params)})

bound_qc = qc.assign_parameters(param_dict)

bound_qc.measure_all()

result = sampler.run([bound_qc], shots=shots).result()

return result[0].data.meas.get_counts()

counts = sample_qaoa(qc, gamma_params, beta_params, optimal_params, p)

print("\nTop bitstrings:")

for bitstring, count in sorted(counts.items(), key=lambda x: -x[1])[:6]:

partition = [int(b) for b in reversed(bitstring)]

cut = sum(1 for (u, v) in G.edges() if partition[u] != partition[v])

print(f" {bitstring}: cut={cut}, count={count}" + (" <-- optimal" if cut == best_cut else ""))

Effect of increasing p

for p_val in [1, 2, 3]:

qc_p, gp, bp = build_qaoa_circuit(G, p_val)

best_ratio = 0

for _ in range(5): # multiple restarts

res = minimize(qaoa_cost, np.random.uniform(0, np.pi, 2*p_val),

args=(qc_p, gp, bp, H_cost, p_val),

method='COBYLA', options={'maxiter': 1000})

best_ratio = max(best_ratio, -res.fun / best_cut)

print(f"p={p_val}: approx ratio={best_ratio:.4f}, depth={qc_p.depth()}")

Typical results on this 4-node graph:

- p=1: ratio ~0.75 (guaranteed >= 0.693 theoretically for any graph)

- p=2: ratio ~0.85

- p=3: ratio ~0.95+

Each additional layer increases the approximation ratio but also increases circuit depth, making hardware execution harder.

QAOA p=1 optimal parameters for 3-regular graphs

For 3-regular graphs (every vertex has exactly 3 neighbors), the optimal p=1 QAOA parameters are known analytically. This is one of the few cases where the variational landscape has a closed-form solution.

The optimal parameters are gamma* = pi/8 and beta* = pi/8. At these values, QAOA achieves an approximation ratio of at least 0.6924 on any 3-regular graph, regardless of the number of vertices.

# Generate a random 3-regular graph on 10 vertices

G3 = nx.random_regular_graph(3, 10, seed=42)

# Brute-force optimal cut

n3 = G3.number_of_nodes()

best_cut_3 = 0

for mask in range(2**n3):

partition = [(mask >> i) & 1 for i in range(n3)]

cut = sum(1 for (u, v) in G3.edges() if partition[u] != partition[v])

best_cut_3 = max(best_cut_3, cut)

H3 = maxcut_hamiltonian(G3)

qc3, gp3, bp3 = build_qaoa_circuit(G3, p=1)

# Analytical optimal parameters

analytical_gamma = np.pi / 8

analytical_beta = np.pi / 8

analytical_params = np.array([analytical_gamma, analytical_beta])

analytical_cost = -qaoa_cost(analytical_params, qc3, gp3, bp3, H3, 1)

analytical_ratio = analytical_cost / best_cut_3

print(f"Analytical params: gamma=pi/8, beta=pi/8")

print(f" Approximation ratio: {analytical_ratio:.4f}")

print(f" Expected cut: {analytical_cost:.2f}, optimal: {best_cut_3}")

# Compare to random parameter initializations

random_ratios = []

for seed in range(20):

rng = np.random.default_rng(seed)

random_params = rng.uniform(0, np.pi, 2)

random_cost = -qaoa_cost(random_params, qc3, gp3, bp3, H3, 1)

random_ratios.append(random_cost / best_cut_3)

print(f"\nRandom params (20 trials):")

print(f" Mean ratio: {np.mean(random_ratios):.4f}")

print(f" Max ratio: {np.max(random_ratios):.4f}")

print(f" Min ratio: {np.min(random_ratios):.4f}")

print(f"\nAnalytical params consistently outperform random initialization.")

This result is powerful because it means you can skip the classical optimization loop entirely for p=1 on 3-regular graphs. For other graph types, these parameters still serve as a strong starting point.

Landscape visualization

For p=1, QAOA has only two parameters: gamma and beta. We can visualize the entire cost landscape as a 2D heatmap. This reveals the structure of the optimization problem and helps explain why gradient-free optimizers like COBYLA work well at low p.

# Evaluate QAOA cost over a 2D grid

grid_size = 30

gamma_range = np.linspace(0, np.pi / 2, grid_size)

beta_range = np.linspace(0, np.pi / 4, grid_size)

landscape = np.zeros((grid_size, grid_size))

qc_land, gp_land, bp_land = build_qaoa_circuit(G, p=1)

for i, g in enumerate(gamma_range):

for j, b in enumerate(beta_range):

params = np.array([g, b])

# Store the expected cut value (positive, not negated)

landscape[j, i] = -qaoa_cost(params, qc_land, gp_land, bp_land, H_cost, 1)

# Find the grid maximum

max_idx = np.unravel_index(np.argmax(landscape), landscape.shape)

best_beta_grid = beta_range[max_idx[0]]

best_gamma_grid = gamma_range[max_idx[1]]

fig, ax = plt.subplots(figsize=(8, 6))

im = ax.imshow(

landscape, extent=[0, np.pi/2, np.pi/4, 0],

aspect='auto', cmap='viridis', origin='upper'

)

ax.set_xlabel('gamma')

ax.set_ylabel('beta')

ax.set_title('QAOA p=1 cost landscape (expected cut value)')

plt.colorbar(im, ax=ax, label='<C>')

# Mark the COBYLA optimum

ax.plot(optimal_params[0], optimal_params[1], 'r*', markersize=15,

label=f'COBYLA optimum ({-result.fun:.3f})')

# Mark the grid maximum

ax.plot(best_gamma_grid, best_beta_grid, 'wx', markersize=12, markeredgewidth=2,

label=f'Grid max ({landscape[max_idx]:.3f})')

ax.legend(loc='lower right')

plt.tight_layout()

plt.savefig('qaoa_landscape.png', dpi=150)

plt.show()

print(f"Grid maximum at gamma={best_gamma_grid:.4f}, beta={best_beta_grid:.4f}")

print(f"COBYLA optimum at gamma={optimal_params[0]:.4f}, beta={optimal_params[1]:.4f}")

The landscape for this 4-node graph is smooth with a single dominant basin. This is typical for small graphs at p=1. As p increases, the landscape becomes higher-dimensional and harder to visualize, but also develops more local minima, which motivates using multiple random restarts.

Circuit depth analysis

Understanding circuit resource counts is critical for assessing whether QAOA can run on real hardware. For a graph with n vertices, m edges, and p layers, the gate counts are:

| Gate | Count per layer | Total (p layers) |

|---|---|---|

| H | n (initial state only) | n |

| CNOT | 2m | 2mp |

| Rz | m | mp |

| Rx | n | np |

For our 4-node, 5-edge graph:

n_nodes = G.number_of_nodes()

m_edges = G.number_of_edges()

print(f"Graph: {n_nodes} vertices, {m_edges} edges\n")

print(f"{'p':>3} | {'CNOTs':>6} | {'Rz':>4} | {'Rx':>4} | {'H':>3} | {'Total gates':>11} | {'Circuit depth':>13}")

print("-" * 65)

for p_val in [1, 2, 3, 5, 10]:

cnots = 2 * m_edges * p_val

rzs = m_edges * p_val

rxs = n_nodes * p_val

hs = n_nodes

total = cnots + rzs + rxs + hs

qc_tmp, _, _ = build_qaoa_circuit(G, p_val)

print(f"{p_val:>3} | {cnots:>6} | {rzs:>4} | {rxs:>4} | {hs:>3} | {total:>11} | {qc_tmp.depth():>13}")

On current NISQ hardware, each CNOT gate has approximately 0.5% error rate. The expected circuit fidelity, assuming independent errors, is:

cnot_error = 0.005

for p_val in [1, 2, 3, 5]:

n_cnots = 2 * m_edges * p_val

fidelity = (1 - cnot_error) ** n_cnots

print(f"p={p_val}: {n_cnots} CNOTs, expected fidelity = {fidelity:.3f}")

At p=3 with 30 CNOTs, the expected fidelity is about 0.86. At p=5, it drops to 0.78. At p=10, it falls below 0.61. This sets a practical ceiling on how many layers you can run before noise overwhelms the signal. Error mitigation techniques (ZNE, PEC) can push this ceiling higher, but at a cost in shot overhead.

Weighted MaxCut

Real-world graph problems often have weighted edges. In weighted MaxCut, each edge (u, v) has a weight w_{uv}, and the objective is:

C = sum_{(u,v) in E} w_{uv} * (1 - Z_u Z_v) / 2

The only change in the QAOA circuit is the Rz rotation angle: instead of Rz(2gamma, v), use Rz(2gamma*w_{uv}, v). Heavier edges get larger rotations, biasing the algorithm toward cutting them.

def weighted_maxcut_hamiltonian(graph: nx.Graph) -> SparsePauliOp:

"""Build the cost Hamiltonian for a weighted MaxCut instance.

Edge weights are read from the 'weight' attribute."""

n = graph.number_of_nodes()

terms = []

for (u, v, data) in graph.edges(data=True):

w = data.get('weight', 1.0)

pauli_str = ['I'] * n

pauli_str[u] = 'Z'

pauli_str[v] = 'Z'

zz_str = ''.join(reversed(pauli_str))

terms.append((zz_str, -0.5 * w))

terms.append(('I' * n, 0.5 * w))

return SparsePauliOp.from_list(terms).simplify()

def build_weighted_qaoa_circuit(graph: nx.Graph, p: int):

"""Build a QAOA circuit where Rz angles scale with edge weights."""

n = graph.number_of_nodes()

gamma = ParameterVector('gamma', p)

beta = ParameterVector('beta', p)

qc = QuantumCircuit(n)

qc.h(range(n))

for layer in range(p):

for (u, v, data) in graph.edges(data=True):

w = data.get('weight', 1.0)

qc.cx(u, v)

qc.rz(2 * gamma[layer] * w, v) # weight scales the rotation

qc.cx(u, v)

for i in range(n):

qc.rx(2 * beta[layer], i)

return qc, gamma, beta

# Example: 4-node graph with weights

Gw = nx.Graph()

Gw.add_edge(0, 1, weight=1.0)

Gw.add_edge(1, 2, weight=2.0)

Gw.add_edge(2, 3, weight=3.0)

Gw.add_edge(3, 0, weight=4.0)

Gw.add_edge(0, 2, weight=5.0)

# Brute-force weighted MaxCut

best_wcut = 0

best_wpartition = None

for mask in range(2**Gw.number_of_nodes()):

partition = [(mask >> i) & 1 for i in range(Gw.number_of_nodes())]

wcut = sum(

data['weight'] for (u, v, data) in Gw.edges(data=True)

if partition[u] != partition[v]

)

if wcut > best_wcut:

best_wcut = wcut

best_wpartition = partition

print(f"Weighted optimal cut: {best_wcut}, partition: {best_wpartition}")

# Run weighted QAOA

Hw = weighted_maxcut_hamiltonian(Gw)

qcw, gpw, bpw = build_weighted_qaoa_circuit(Gw, p=1)

np.random.seed(42)

best_wresult = None

for _ in range(5):

res = minimize(

qaoa_cost, np.random.uniform(0, np.pi, 2),

args=(qcw, gpw, bpw, Hw, 1),

method='COBYLA', options={'maxiter': 500}

)

if best_wresult is None or res.fun < best_wresult.fun:

best_wresult = res

print(f"QAOA expected weighted cut: {-best_wresult.fun:.4f}")

print(f"Weighted approximation ratio: {-best_wresult.fun / best_wcut:.4f}")

The weighted version biases QAOA toward cutting high-weight edges, which is exactly what the objective function demands. The Hamiltonian structure is identical; only the Rz angles change.

Warm starting from classical solutions

The QAOA optimization landscape can have local minima, especially at higher p. Starting from a good initial point can significantly improve convergence. One strategy is to warm-start from a classical heuristic solution.

A greedy MaxCut algorithm assigns each vertex to the partition that maximizes the immediate gain. We use the greedy solution to construct an initial state closer to the optimal, then run QAOA with parameters initialized near the identity (small gamma and beta) so the quantum circuit starts near the greedy solution.

def greedy_maxcut(graph):

"""Simple greedy MaxCut: assign each vertex to maximize cut gain."""

n = graph.number_of_nodes()

partition = [0] * n

for v in range(n):

# Count edges to each side

gain_0 = sum(1 for u in graph.neighbors(v) if partition[u] == 1)

gain_1 = sum(1 for u in graph.neighbors(v) if partition[u] == 0)

partition[v] = 1 if gain_1 > gain_0 else 0

cut = sum(1 for (u, v) in graph.edges() if partition[u] != partition[v])

return partition, cut

greedy_part, greedy_cut = greedy_maxcut(G)

print(f"Greedy cut: {greedy_cut}, partition: {greedy_part}")

print(f"Optimal cut: {best_cut}")

# Warm-start strategy: initialize near the greedy solution

# Use small random perturbations around (0, 0) so the circuit

# starts close to the identity (preserving the initial state structure)

p_warm = 2

qc_warm, gp_warm, bp_warm = build_qaoa_circuit(G, p_warm)

# Warm start: small initial parameters

warm_init = np.random.uniform(0, 0.3, 2 * p_warm)

# Cold start: random initial parameters

cold_init = np.random.uniform(0, np.pi, 2 * p_warm)

np.random.seed(123)

warm_results = []

cold_results = []

for trial in range(10):

rng = np.random.default_rng(trial)

# Warm start

w_init = rng.uniform(0, 0.3, 2 * p_warm)

w_res = minimize(

qaoa_cost, w_init,

args=(qc_warm, gp_warm, bp_warm, H_cost, p_warm),

method='COBYLA', options={'maxiter': 300}

)

warm_results.append(-w_res.fun / best_cut)

# Cold start

c_init = rng.uniform(0, np.pi, 2 * p_warm)

c_res = minimize(

qaoa_cost, c_init,

args=(qc_warm, gp_warm, bp_warm, H_cost, p_warm),

method='COBYLA', options={'maxiter': 300}

)

cold_results.append(-c_res.fun / best_cut)

print(f"\nWarm start (10 trials): mean ratio = {np.mean(warm_results):.4f}, "

f"best = {np.max(warm_results):.4f}")

print(f"Cold start (10 trials): mean ratio = {np.mean(cold_results):.4f}, "

f"best = {np.max(cold_results):.4f}")

Warm starting reduces wasted function evaluations on poor regions of the landscape. On hardware, where each function evaluation requires queuing a quantum job, this savings is substantial.

Running QAOA on IBM hardware

Running QAOA on real IBM quantum hardware introduces noise, queue latency, and gate set restrictions. The workflow has several additional steps compared to simulation.

# This code requires an IBM Quantum account and qiskit-ibm-runtime.

# Install with: pip install qiskit-ibm-runtime

from qiskit.transpiler.preset_passmanagers import generate_preset_pass_manager

# Step 1: Build the parametrized circuit (same as before)

p_hw = 1

qc_hw, gp_hw, bp_hw = build_qaoa_circuit(G, p_hw)

# Step 2: Transpile to a real backend's native gate set.

# We use optimization_level=3 for maximum gate reduction.

# Replace 'ibm_brisbane' with your available backend.

#

# from qiskit_ibm_runtime import QiskitRuntimeService

# service = QiskitRuntimeService()

# backend = service.least_busy(min_num_qubits=4)

# pm = generate_preset_pass_manager(optimization_level=3, backend=backend)

# transpiled_qc = pm.run(qc_hw)

# print(f"Transpiled depth: {transpiled_qc.depth()}")

# print(f"Transpiled CNOT count: {transpiled_qc.count_ops().get('cx', 0)}")

# Step 3: Run estimation with error mitigation.

# EstimatorV2 with resilience_level=2 enables Zero Noise Extrapolation (ZNE),

# which runs the circuit at multiple noise levels and extrapolates to zero noise.

#

# from qiskit_ibm_runtime import EstimatorV2, SamplerV2, Session, Options

#

# with Session(backend=backend) as session:

# estimator = EstimatorV2(mode=session)

# estimator.options.resilience_level = 2 # ZNE

#

# def hardware_cost(params):

# gamma_vals = params[:p_hw]

# beta_vals = params[p_hw:]

# param_dict = {g: gamma_vals[i] for i, g in enumerate(gp_hw)}

# param_dict.update({b: beta_vals[i] for i, b in enumerate(bp_hw)})

# bound = transpiled_qc.assign_parameters(param_dict)

# result = estimator.run([(bound, H_cost)]).result()

# return -float(result[0].data.evs)

#

# # Step 4: Optimize with limited evaluations.

# # Each evaluation is a hardware job, so budget carefully.

# # SPSA is a good choice for noisy function evaluations.

# hw_result = minimize(

# hardware_cost,

# np.array([np.pi/8, np.pi/8]), # warm start for small graphs

# method='COBYLA',

# options={'maxiter': 50} # limited budget due to queue time

# )

#

# # Step 5: Sample the optimized circuit

# sampler = SamplerV2(mode=session)

# param_dict = {g: hw_result.x[i] for i, g in enumerate(gp_hw)}

# param_dict.update({b: hw_result.x[p_hw+i] for i, b in enumerate(bp_hw)})

# bound_final = transpiled_qc.assign_parameters(param_dict)

# bound_final.measure_all()

# hw_counts = sampler.run([bound_final], shots=4096).result()

# print(hw_counts[0].data.meas.get_counts())

Key considerations for hardware execution:

- Transpilation matters. The transpiler maps your abstract circuit to the hardware’s physical qubit connectivity and native gates (typically CX, Rz, SX, X on IBM devices). High optimization levels reduce gate count but take longer to compile.

- Error mitigation is essential. Without ZNE or similar techniques, noise biases the expectation value toward zero (depolarizing noise). Resilience level 2 uses ZNE; level 1 uses measurement error mitigation only.

- Budget your function evaluations. Each COBYLA iteration submits a job to the hardware queue. With 50 iterations and 30-second queue times, optimization takes roughly 25 minutes. Warm starting from known good parameters reduces the number of iterations needed.

- Expect degradation. A circuit that achieves 0.95 approximation ratio in simulation might achieve 0.70-0.80 on hardware, depending on the backend’s error rates and circuit depth.

Recursive QAOA (RQAOA)

Standard QAOA at low p often produces mediocre approximation ratios. Recursive QAOA (RQAOA) improves results by iteratively reducing the graph based on qubit-qubit correlations measured from the QAOA output state.

The algorithm:

- Run QAOA (typically at p=1) on the current graph.

- Measure the correlation <Z_u Z_v> for each edge.

- Find the edge with the strongest correlation (largest |<Z_u Z_v>|).

- If the correlation is positive (vertices tend to be in the same partition), merge u and v into a single vertex.

- If the correlation is negative (vertices tend to be in different partitions), merge u and v with negation (assign opposite partitions).

- Reduce the graph by one vertex and repeat from step 1.

- When the graph is small enough (e.g., 1 vertex), the partition is fully determined.

def compute_zz_correlations(qc, gamma_params, beta_params, params, p, graph):

"""Compute <Z_u Z_v> for each edge using the optimized QAOA state."""

n = qc.num_qubits

estimator = StatevectorEstimator()

param_dict = {g: params[i] for i, g in enumerate(gamma_params)}

param_dict.update({b: params[p+i] for i, b in enumerate(beta_params)})

bound_qc = qc.assign_parameters(param_dict)

correlations = {}

for (u, v) in graph.edges():

pauli_str = ['I'] * n

pauli_str[u] = 'Z'

pauli_str[v] = 'Z'

zz_op = SparsePauliOp.from_list([(''.join(reversed(pauli_str)), 1.0)])

result = estimator.run([(bound_qc, zz_op)]).result()

correlations[(u, v)] = float(result[0].data.evs)

return correlations

def rqaoa(graph, p=1, max_iter=500):

"""Run Recursive QAOA on a MaxCut instance."""

current_graph = graph.copy()

# Track variable assignments: maps original vertex -> (reference_vertex, sign)

# sign=+1 means same partition, sign=-1 means opposite partition

assignments = {v: (v, 1) for v in graph.nodes()}

while current_graph.number_of_nodes() > 1:

n = current_graph.number_of_nodes()

# Relabel nodes to 0..n-1 for circuit building

mapping = {old: new for new, old in enumerate(current_graph.nodes())}

reverse_mapping = {new: old for old, new in mapping.items()}

relabeled = nx.relabel_nodes(current_graph, mapping)

# Run QAOA p=1

H = maxcut_hamiltonian(relabeled)

qc_r, gp_r, bp_r = build_qaoa_circuit(relabeled, p)

best_res = None

for _ in range(3):

res = minimize(

qaoa_cost,

np.random.uniform(0, np.pi, 2 * p),

args=(qc_r, gp_r, bp_r, H, p),

method='COBYLA',

options={'maxiter': max_iter}

)

if best_res is None or res.fun < best_res.fun:

best_res = res

# Compute correlations

correlations = compute_zz_correlations(

qc_r, gp_r, bp_r, best_res.x, p, relabeled

)

# Find most correlated edge

best_edge = max(correlations, key=lambda e: abs(correlations[e]))

best_corr = correlations[best_edge]

u_rel, v_rel = best_edge

u_orig = reverse_mapping[u_rel]

v_orig = reverse_mapping[v_rel]

# Determine relationship: positive correlation means same partition,

# negative means different partitions

same_partition = best_corr > 0

# Update assignments: all vertices pointing to v_orig now point through u_orig

for vertex in list(assignments.keys()):

ref, sign = assignments[vertex]

if ref == v_orig:

if same_partition:

assignments[vertex] = (u_orig, sign)

else:

assignments[vertex] = (u_orig, -sign)

# Merge v_orig into u_orig in the graph

for neighbor in list(current_graph.neighbors(v_orig)):

if neighbor != u_orig:

if current_graph.has_edge(u_orig, neighbor):

pass # keep existing edge (could add weights)

else:

current_graph.add_edge(u_orig, neighbor)

current_graph.remove_node(v_orig)

# The last remaining vertex gets partition 0

last_vertex = list(current_graph.nodes())[0]

base_partition = {last_vertex: 0}

# Reconstruct full partition

final_partition = [0] * graph.number_of_nodes()

for vertex in graph.nodes():

ref, sign = assignments[vertex]

base_val = base_partition.get(ref, 0)

final_partition[vertex] = base_val if sign == 1 else 1 - base_val

# Compute cut value

cut = sum(1 for (u, v) in graph.edges()

if final_partition[u] != final_partition[v])

return final_partition, cut

# Run RQAOA on our test graph

np.random.seed(42)

rqaoa_partition, rqaoa_cut = rqaoa(G)

print(f"RQAOA partition: {rqaoa_partition}")

print(f"RQAOA cut: {rqaoa_cut}")

print(f"Optimal cut: {best_cut}")

print(f"RQAOA ratio: {rqaoa_cut / best_cut:.4f}")

RQAOA often outperforms standard QAOA at the same p value because it leverages the correlation structure in the quantum state to make hard variable assignments. The tradeoff is that it requires running QAOA n-1 times (once per reduction step), which increases the total computational cost.

MaxCut approximation guarantees

Different algorithms provide different guarantees on solution quality. The approximation ratio is the expected cut divided by the optimal cut.

| Algorithm | Approximation ratio | Notes |

|---|---|---|

| Random assignment | 0.500 | Assign each vertex to S with probability 1/2 |

| Greedy heuristic | 0.50 to 0.70 | Instance-dependent, no worst-case guarantee |

| QAOA p=1 | >= 0.6924 | Proven lower bound for all graphs |

| QAOA p=2 | ~0.7559 | Numerically observed on worst-case instances |

| QAOA p=3 | ~0.7924 | Numerically observed |

| Goemans-Williamson SDP | >= 0.878 | Best known polynomial-time classical algorithm |

| Exact (brute force) | 1.000 | Exponential time |

The Goemans-Williamson (GW) result is a landmark in approximation algorithms. It solves a semidefinite programming relaxation of MaxCut and rounds the continuous solution to a discrete partition. Under the Unique Games Conjecture, 0.878 is the best ratio achievable in polynomial time by any classical algorithm.

QAOA does not currently beat GW at any fixed p. However, as p grows with the problem size (p = O(n)), QAOA can in principle achieve ratio 1.0. Whether QAOA provides a practical advantage at moderate p on specific graph families remains an open research question.

# Verify random assignment ratio experimentally

random_cuts = []

for _ in range(10000):

rand_part = np.random.randint(0, 2, G.number_of_nodes())

cut = sum(1 for (u, v) in G.edges() if rand_part[u] != rand_part[v])

random_cuts.append(cut)

print(f"Random assignment: mean ratio = {np.mean(random_cuts)/best_cut:.4f} "

f"(theory: 0.5000)")

print(f"Greedy: ratio = {greedy_cut/best_cut:.4f}")

print(f"QAOA p=1: ratio = {-result.fun/best_cut:.4f}")

QAOA for other problems beyond MaxCut

QAOA generalizes to any problem expressible as a cost function over binary variables. Any QUBO (Quadratic Unconstrained Binary Optimization) maps directly to a diagonal Hamiltonian on qubits. The mixer stays the same (X rotations); only the cost Hamiltonian changes.

Maximum Independent Set

Given a graph, find the largest set of vertices with no edges between them. The cost Hamiltonian combines a reward for selecting vertices with a penalty for selecting adjacent pairs:

C = sum_v Z_v - P * sum_{(u,v) in E} (1 + Z_u)(1 + Z_v) / 4

where P is a penalty strength large enough to make any invalid solution (with adjacent selected vertices) worse than any valid one. Setting P > n ensures this.

def mis_hamiltonian(graph: nx.Graph, penalty: float = None) -> SparsePauliOp:

"""Build cost Hamiltonian for Maximum Independent Set.

Convention: |1> means vertex is in the independent set."""

n = graph.number_of_nodes()

if penalty is None:

penalty = n + 1 # guarantee penalty dominates

terms = []

# Reward: -Z_v for each vertex (negate because we encode selecting as |1>

# and Z|1> = -|1>, so the eigenvalue for selected vertex is -1,

# and -(- 1) = +1 reward)

for v in range(n):

pauli_str = ['I'] * n

pauli_str[v] = 'Z'

terms.append((''.join(reversed(pauli_str)), -0.5))

terms.append(('I' * n, -0.5))

# Penalty for adjacent selected vertices

for (u, v) in graph.edges():

# (1 + Z_u)(1 + Z_v) / 4 = (I + Z_u + Z_v + Z_u Z_v) / 4

pauli_u = ['I'] * n

pauli_u[u] = 'Z'

pauli_v = ['I'] * n

pauli_v[v] = 'Z'

pauli_uv = ['I'] * n

pauli_uv[u] = 'Z'

pauli_uv[v] = 'Z'

terms.append(('I' * n, penalty / 4))

terms.append((''.join(reversed(pauli_u)), penalty / 4))

terms.append((''.join(reversed(pauli_v)), penalty / 4))

terms.append((''.join(reversed(pauli_uv)), penalty / 4))

return SparsePauliOp.from_list(terms).simplify()

H_mis = mis_hamiltonian(G)

print("MIS Hamiltonian terms:", len(H_mis))

Graph coloring

For 3-coloring, encode each vertex with 2 qubits (representing colors 0, 1, 2 in binary, with state |11> penalized as invalid). The cost Hamiltonian penalizes adjacent vertices sharing the same color and penalizes the invalid state |11> on each vertex.

This is more complex than MaxCut because it requires multi-qubit interactions and a larger qubit register (2 qubits per vertex instead of 1).

def graph_coloring_hamiltonian(graph: nx.Graph, n_colors: int = 3,

penalty: float = 10.0) -> SparsePauliOp:

"""Build cost Hamiltonian for graph coloring with penalty terms.

Uses 2 qubits per vertex for 3-coloring (colors 0,1,2 encoded as 00,01,10).

State 11 is penalized as invalid."""

n = graph.number_of_nodes()

n_qubits = 2 * n

terms = []

# Penalty for invalid state |11> on each vertex

# Projector onto |11> for qubits (2v, 2v+1): (1+Z_{2v})(1+Z_{2v+1})/4

for v in range(n):

q0, q1 = 2 * v, 2 * v + 1

# (1 + Z_q0)(1 + Z_q1)/4 terms

for pauli_ops, coeff in [

({}, penalty / 4),

({q0: 'Z'}, penalty / 4),

({q1: 'Z'}, penalty / 4),

({q0: 'Z', q1: 'Z'}, penalty / 4),

]:

pstr = ['I'] * n_qubits

for q, p in pauli_ops.items():

pstr[q] = p

terms.append((''.join(reversed(pstr)), coeff))

# Penalty for adjacent vertices sharing the same color

# For each edge (u,v) and each valid color c in {00, 01, 10}:

# penalize when both u and v are color c

for (u, v) in graph.edges():

for color in range(n_colors):

bits = [(color >> 0) & 1, (color >> 1) & 1]

# Projector for vertex u having this color

# and vertex v having this color

u_qubits = [2*u, 2*u+1]

v_qubits = [2*v, 2*v+1]

# Build projector: product of (1 +/- Z)/2 for each qubit

# bit=0 -> (1+Z)/2, bit=1 -> (1-Z)/2

all_qubits = u_qubits + v_qubits

all_bits = bits + bits # same color for both vertices

# Expand the product of (1 +/- Z)/2 terms

from itertools import product as iterproduct

for choices in iterproduct([0, 1], repeat=4):

coeff = penalty

pstr = ['I'] * n_qubits

for idx, (q, b, c) in enumerate(

zip(all_qubits, all_bits, choices)

):

coeff /= 2

if c == 1: # picked Z term

pstr[q] = 'Z'

if b == 1: # (1-Z)/2, so Z contributes -1

coeff *= -1

# Only add if coeff is nonzero

if abs(coeff) > 1e-12:

terms.append((''.join(reversed(pstr)), coeff))

return SparsePauliOp.from_list(terms).simplify()

# Example: 3-coloring of a triangle

G_tri = nx.Graph([(0,1), (1,2), (2,0)])

H_color = graph_coloring_hamiltonian(G_tri, n_colors=3, penalty=10.0)

print(f"Graph coloring Hamiltonian: {H_color.size} terms on "

f"{G_tri.number_of_nodes() * 2} qubits")

The key takeaway: QAOA’s structure (alternating cost and mixer unitaries) applies to any cost Hamiltonian. The challenge for complex problems is that the penalty terms increase circuit depth and the optimization landscape becomes harder to navigate.

Common mistakes

1. Not using multiple random restarts

The QAOA landscape has local minima, especially at higher p. A single optimization run can get stuck. Always run multiple restarts and take the best.

# Bad: single restart

res = minimize(qaoa_cost, np.random.uniform(0, np.pi, 2*p),

args=(qc, gamma_params, beta_params, H_cost, p),

method='COBYLA')

# Good: multiple restarts

best = None

for _ in range(10):

res = minimize(qaoa_cost, np.random.uniform(0, np.pi, 2*p),

args=(qc, gamma_params, beta_params, H_cost, p),

method='COBYLA', options={'maxiter': 500})

if best is None or res.fun < best.fun:

best = res

2. Wrong sign convention

QAOA maximizes the cut, but scipy.minimize minimizes. You must negate the cost function. Forgetting this produces the worst cut instead of the best.

# Correct: negate for minimization

return -float(result[0].data.evs)

# Wrong: would find the minimum cut

return float(result[0].data.evs)

3. Qiskit’s bitstring ordering

Qiskit uses little-endian ordering: qubit 0 is the rightmost character in a bitstring. When converting a bitstring to a partition, you must reverse it or index carefully.

bitstring = "1010"

# Qiskit ordering: qubit 3 is leftmost, qubit 0 is rightmost

# So bitstring "1010" means: qubit 0 = 0, qubit 1 = 1, qubit 2 = 0, qubit 3 = 1

# Correct: reverse to get vertex ordering

partition = [int(b) for b in reversed(bitstring)]

# partition = [0, 1, 0, 1] -> vertex 0 = 0, vertex 1 = 1, vertex 2 = 0, vertex 3 = 1

# Also correct: use indexing

partition = [int(bitstring[-(i+1)]) for i in range(len(bitstring))]

4. Confusing approximation ratio with sampling success

A high approximation ratio for the expectation value

# Always verify: decode each sampled bitstring and compute its cut

for bitstring, count in sorted(counts.items(), key=lambda x: -x[1])[:5]:

partition = [int(b) for b in reversed(bitstring)]

actual_cut = sum(1 for (u, v) in G.edges() if partition[u] != partition[v])

print(f" {bitstring}: actual cut = {actual_cut}, sampled {count} times")

5. Ignoring hardware budget constraints

On real hardware, each function evaluation goes through the quantum device queue. An optimization loop with 500 iterations is impractical when each iteration takes 30 seconds. Limit COBYLA to 50-100 evaluations and use warm starting.

# Simulation: can afford many iterations

minimize(cost_fn, x0, method='COBYLA', options={'maxiter': 1000})

# Hardware: budget carefully

minimize(cost_fn, warm_start_x0, method='COBYLA', options={'maxiter': 50})

Practical notes

QAOA is not a practical solver today. For small instances, classical greedy algorithms match or exceed QAOA without the overhead. The algorithm’s potential advantage (if any) lies in large-scale fault-tolerant hardware that does not yet exist.

Parameter transferability. For 3-regular graphs specifically, the optimal p=1 parameters are gamma=pi/8, beta=pi/8 regardless of the instance. Warm-starting from a similar instance’s parameters speeds up optimization significantly.

Use gradient-free optimizers on hardware. COBYLA and BOBYQA are more robust to shot noise than gradient-based methods. On real hardware with finite shots, gradient estimates are too noisy to be useful at small shot counts.

MaxCut is NP-hard. QAOA provides an approximation, not a guarantee of the exact optimal. For graph partitioning in practice, use METIS or spectral methods; they are fast and well-understood. QAOA is a research platform, not a production solver.

Recursive QAOA (RQAOA) improves quality at low p. If you are limited to p=1 by hardware noise, RQAOA extracts more information from each run by correlating variables and reducing the graph iteratively.

Circuit depth is the bottleneck. On current hardware, the CNOT error rate (~0.5%) limits useful circuit depth to roughly 50-100 CNOTs before noise overwhelms the signal. Plan your QAOA depth budget accordingly: for a graph with m edges, each layer costs 2m CNOTs.

Was this tutorial helpful?