QAOA for Max-Cut in Cirq

Implement the Quantum Approximate Optimization Algorithm to solve a small Max-Cut problem using Cirq, with parameterized circuits and classical optimization.

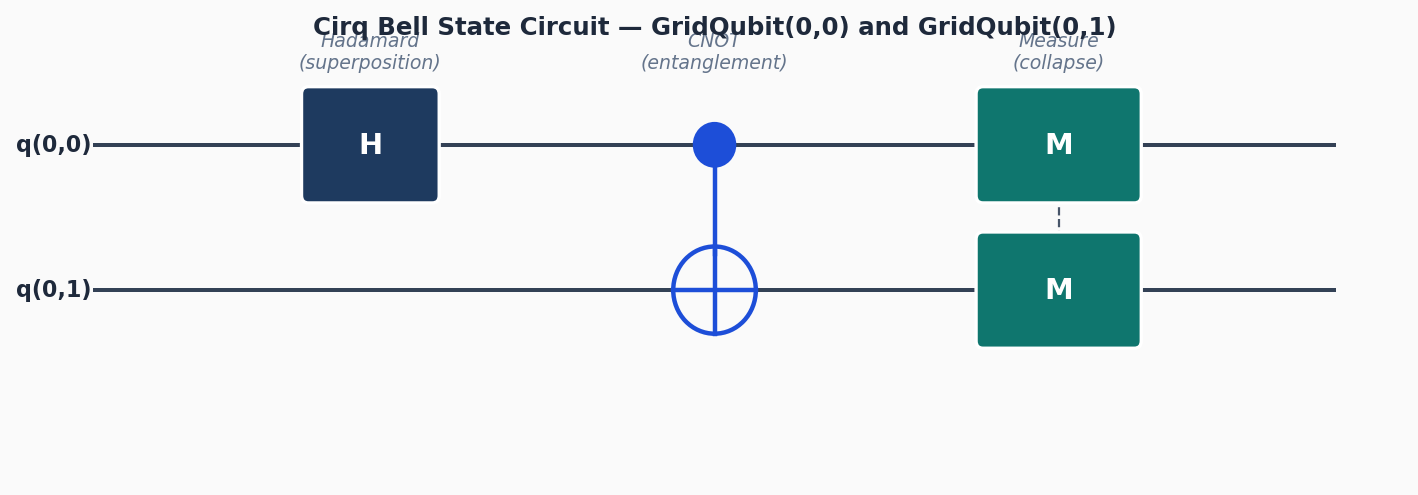

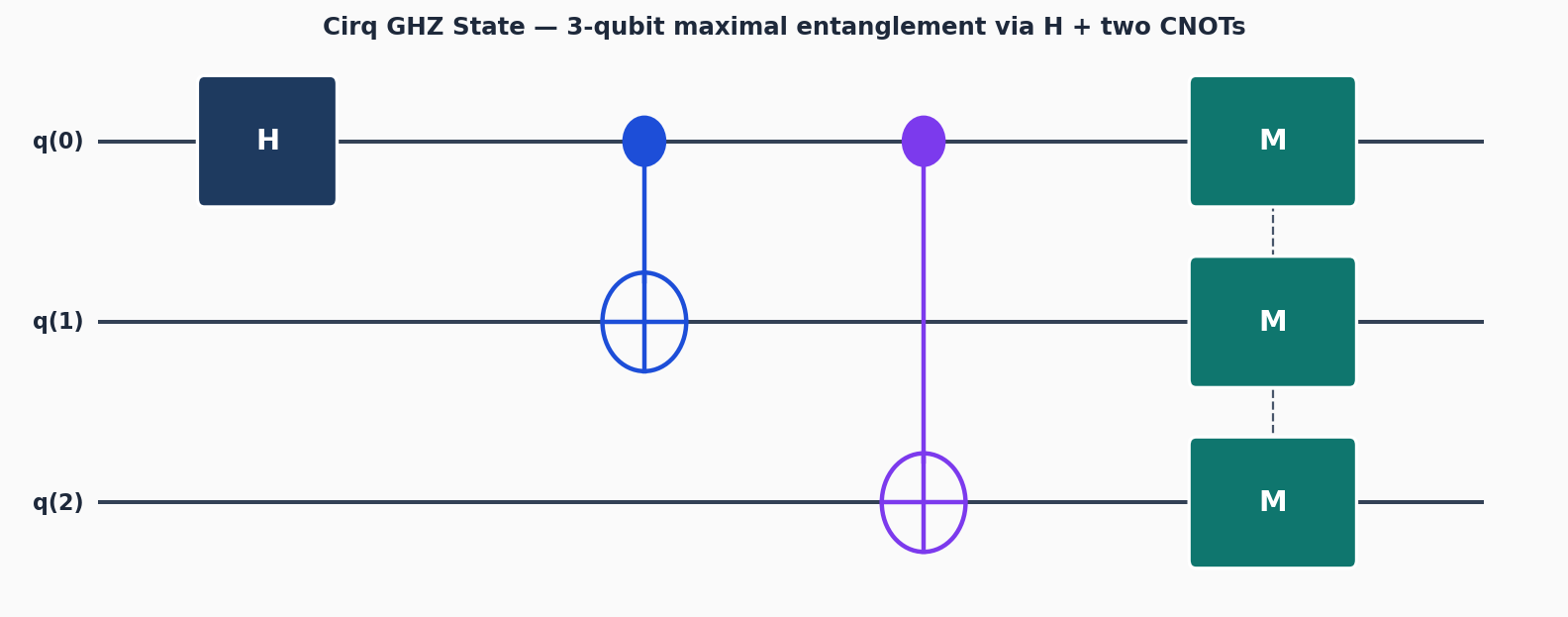

Circuit diagrams

Introduction to QAOA

The Quantum Approximate Optimization Algorithm (QAOA) is a hybrid quantum-classical algorithm designed to solve combinatorial optimization problems. Farhi, Goldstone, and Gutmann introduced it in 2014 as one of the first gate-based algorithms specifically targeting near-term, noisy intermediate-scale quantum (NISQ) devices.

The core insight behind QAOA is straightforward: encode the cost function of an optimization problem as a quantum Hamiltonian (an energy function over qubit states), then use a parameterized quantum circuit to prepare a quantum state that has a high expectation value under this Hamiltonian. A classical optimizer sits in an outer loop, tuning the circuit parameters to push that expectation value higher and higher.

The algorithm works in three steps, repeated until convergence:

- Prepare an initial superposition over all possible solutions.

- Apply p layers of alternating “problem” and “mixer” unitaries, each controlled by tunable parameters (gamma and beta).

- Measure the output state, compute the cost, and feed the result back to a classical optimizer that updates gamma and beta.

As the circuit depth p increases, QAOA can approximate the optimal solution more closely. In the limit where p approaches infinity, QAOA provably solves the optimization problem exactly (it becomes equivalent to quantum adiabatic computation). On current NISQ hardware, practical implementations typically use p=1 or p=2, since each additional layer increases circuit depth and accumulates more noise.

The Max-Cut Problem

Max-Cut asks: given a graph, partition its vertices into two groups to maximize the number of edges that cross between the two groups. An edge is “cut” when its two endpoints land in different groups.

To build intuition, consider the simplest nontrivial example: a triangle graph with 3 nodes and 3 edges.

0

/ \

/ \

1-----2

Each node goes into group A or group B. There are 2^3 = 8 possible assignments:

| Assignment (0,1,2) | Cut edges | Count |

|---|---|---|

| A, A, A | none | 0 |

| A, A, B | (0,2), (1,2) | 2 |

| A, B, A | (0,1), (1,2) | 2 |

| A, B, B | (0,1), (0,2) | 2 |

| B, A, A | (0,1), (0,2) | 2 |

| B, A, B | (0,1), (1,2) | 2 |

| B, B, A | (0,2), (1,2) | 2 |

| B, B, B | none | 0 |

The maximum cut has 2 edges. Six of the eight partitions achieve this maximum (every partition except “all same group” is optimal). The symmetry here is typical: swapping all labels between groups A and B always preserves the cut value, so optimal solutions come in pairs at minimum.

For a 5-node graph, brute force checks 2^5 = 32 partitions, which is trivial. But for a graph with n = 100 nodes, brute force requires checking 2^100 partitions, a number larger than the number of atoms in the observable universe. Max-Cut is NP-hard in general, meaning no known classical algorithm solves all instances in polynomial time. This is precisely the kind of problem where QAOA offers a structured approach.

For this tutorial, we use a 5-node graph:

0---1---3

\ / |

2 |

| |

4-----+

with edges (0,1), (0,2), (1,2), (1,3), (2,4), (3,4). The optimal cut for this graph is 5.

Problem Hamiltonian

To run QAOA, we need to express the Max-Cut cost function as a quantum operator (Hamiltonian). Each qubit represents a vertex: state |0> means “group A” and state |1> means “group B.”

The key building block is the Pauli-Z operator. Recall that Z|0> = +|0> and Z|1> = -|1>. For two qubits i and j, the tensor product Z_i Z_j acts on computational basis states as follows:

- Z_i Z_j |00> = (+1)(+1)|00> = +|00>

- Z_i Z_j |01> = (+1)(-1)|01> = -|01>

- Z_i Z_j |10> = (-1)(+1)|10> = -|10>

- Z_i Z_j |11> = (-1)(-1)|11> = +|11>

So Z_i Z_j measures +1 when both qubits are in the same state (both 0 or both 1) and -1 when they differ (one 0, one 1). An edge is “cut” exactly when its endpoints differ. We want an operator that gives 1 for a cut edge and 0 for an uncut edge. Simple algebra gives us:

(I - Z_i Z_j) / 2

Check: when qubits match, (1 - (+1))/2 = 0. When qubits differ, (1 - (-1))/2 = 1. This is exactly the indicator function for “edge (i,j) is cut.”

The full cost Hamiltonian sums this over all edges:

C = sum_{(i,j) in edges} (I - Z_i Z_j) / 2

The eigenvalue of C on a computational basis state |x> equals the number of cut edges in partition x. Maximizing the expectation value <x|C|x> is equivalent to solving Max-Cut.

Since the identity term (I/2 summed over edges) is just a constant offset, it does not affect which state maximizes C. The optimization-relevant part is:

C_eff = -(1/2) sum_{(i,j) in edges} Z_i Z_j

Maximizing C is equivalent to minimizing the sum of Z_i Z_j terms.

Why ZZPowGate?

The problem unitary in QAOA is exp(-i * gamma * C). Since C is a sum of commuting ZZ terms (all Z_i Z_j operators commute with each other), the exponential factors into a product:

exp(-i * gamma * C) = product_{(i,j) in edges} exp(-i * gamma * (I - Z_i Z_j) / 2)

Dropping the global phase from the identity part, the relevant gate on each edge is:

exp(i * gamma * Z_i Z_j / 2)

Cirq’s ZZPowGate(exponent=t) implements the unitary exp(i * pi * t * Z_i Z_j). To match our desired rotation, we set:

t = gamma / (2 * pi) ... but wait

There is a subtlety here. Different references use different sign conventions. In our code below, we use exponent=gammas[layer] / np.pi, which corresponds to exp(i * pi * (gamma/pi) * Z_i Z_j) = exp(i * gamma * Z_i Z_j). The factor-of-2 difference from the Hamiltonian derivation is absorbed into the optimizer: gamma is a free parameter that the classical optimizer tunes, so the exact convention does not matter as long as it is consistent. The optimizer finds the right numerical value of gamma regardless.

For hardware that does not natively support a ZZ gate, the same operation decomposes into single-qubit and CNOT gates:

exp(i * theta * Z_i Z_j) = CNOT(i,j) -- RZ(2*theta)(j) -- CNOT(i,j)

This decomposition works because CNOT maps Z_i Z_j into Z_j in the rotated frame, so the RZ rotation on the target qubit implements the ZZ interaction.

The Mixer Hamiltonian

The mixer Hamiltonian drives transitions between computational basis states. Without it, the problem unitary alone would only apply phases to basis states and never change which states have high probability. The standard QAOA mixer is:

B = sum_j X_j

where X_j is the Pauli-X operator on qubit j. The Pauli-X operator flips |0> to |1> and vice versa, so exp(-i * beta * B) flips bits with some probability controlled by beta. This “mixes” the population across the full space of binary strings.

Why X specifically? The Pauli-X mixer has a useful property: it connects every computational basis state to every other one through some sequence of single-bit flips. In graph theory terms, the X mixer creates a hypercube connectivity graph over the Hilbert space of n-bit strings. This ensures QAOA can explore all possible solutions, not just a subset.

Since X operators on different qubits commute, the mixer unitary factors into independent single-qubit rotations:

exp(-i * beta * B) = product_j exp(-i * beta * X_j) = product_j RX(2 * beta)_j

Each qubit gets an RX(2*beta) rotation. This is computationally cheap: the mixer layer has depth 1 regardless of the number of qubits.

The initial state for QAOA is the uniform superposition |+>^n = H^n |0>^n. This is the ground state (lowest-energy eigenstate) of the mixer Hamiltonian B. Starting from the mixer ground state and gradually increasing the influence of the problem Hamiltonian mirrors the structure of quantum annealing, which is why QAOA converges to the exact answer as p grows.

Building the QAOA Circuit

The QAOA circuit has p layers. Each layer applies the problem unitary exp(-i * gamma * C) followed by the mixer unitary exp(-i * beta * sum_j X_j). For ZZ terms, the problem unitary decomposes into ZZPowGate on each edge. The mixer is simply X rotations on every qubit.

import cirq

import numpy as np

from scipy.optimize import minimize

# Define a 5-node graph as an edge list

edges = [(0, 1), (0, 2), (1, 2), (1, 3), (2, 4), (3, 4)]

n_qubits = 5

qubits = cirq.LineQubit.range(n_qubits)

def qaoa_circuit(gammas, betas):

"""Build a QAOA circuit with p layers."""

p = len(gammas)

circuit = cirq.Circuit()

# Initial superposition

circuit.append(cirq.H.on_each(*qubits))

for layer in range(p):

# Problem unitary: exp(-i * gamma * Z_i Z_j) for each edge

for i, j in edges:

circuit.append(cirq.ZZPowGate(exponent=gammas[layer] / np.pi)(qubits[i], qubits[j]))

# Mixer unitary: exp(-i * beta * X_j) for each qubit

for q in qubits:

circuit.append(cirq.rx(2 * betas[layer])(q))

return circuit

Understanding p and the Variational Principle

The integer p controls the depth and expressivity of the QAOA circuit. Each layer adds one problem unitary (parameterized by gamma) and one mixer unitary (parameterized by beta), so a depth-p circuit has 2p total parameters.

p=1: The circuit applies a single round of “push toward good solutions” (problem unitary) followed by “explore the solution space” (mixer unitary). With only two free parameters (one gamma, one beta), the circuit can prepare a limited family of quantum states. For Max-Cut on typical graphs, p=1 QAOA achieves an approximation ratio of at least 0.6924 (this is a proven lower bound for 3-regular graphs). In practice, the ratio is often higher for specific instances, but p=1 rarely finds the exact optimum.

p=2: Two rounds of problem and mixer unitaries. The circuit can construct more complex superpositions by applying different rotation angles in each layer. The additional parameters (gamma_1, gamma_2, beta_1, beta_2) allow the circuit to partially undo mistakes from the first layer and refine the state. For our 5-node graph, p=2 typically gets much closer to the optimal cut of 5.

p approaching infinity: In this limit, QAOA becomes equivalent to quantum adiabatic computation. The Adiabatic Theorem guarantees that with sufficient depth, the circuit can prepare the exact ground state of the problem Hamiltonian, which encodes the optimal solution. This is a theoretical guarantee; it does not mean you need infinite depth in practice, just that more depth is always at least as good.

The trade-off is concrete: each additional layer adds 2 more parameters for the classical optimizer to tune, and it increases circuit depth proportionally. On real hardware, deeper circuits accumulate more gate errors and decoherence. For current NISQ devices with error rates around 0.1-1% per two-qubit gate, circuits deeper than roughly 50-100 two-qubit gates become unreliable. Since each QAOA layer applies one ZZ gate per edge, a graph with m edges at depth p uses p * m two-qubit gates. For our 6-edge graph, p=2 requires only 12 two-qubit gates, well within NISQ capabilities.

Computing the Cost Function (Sampling)

To evaluate the cut quality, we measure the circuit many times and compute the expected cost from the bitstring samples.

simulator = cirq.Simulator()

def cost_from_bitstring(bitstring):

"""Count edges crossing the partition defined by bitstring."""

cost = 0

for i, j in edges:

if bitstring[i] != bitstring[j]:

cost += 1

return cost

def evaluate_qaoa(params):

"""Evaluate negative expected cost (for minimization)."""

p = len(params) // 2

gammas = params[:p]

betas = params[p:]

circuit = qaoa_circuit(gammas, betas)

circuit.append(cirq.measure(*qubits, key='result'))

result = simulator.run(circuit, repetitions=1000)

measurements = result.measurements['result']

total_cost = sum(cost_from_bitstring(m) for m in measurements)

return -total_cost / len(measurements) # Negative because we minimize

Expectation Value vs Sampling

The code above samples 1000 shots and averages the cost. This mimics what happens on real quantum hardware, where measurement is inherently probabilistic. However, for classical simulation during development, there is a cleaner alternative: computing the exact expectation value using the statevector simulator.

def evaluate_qaoa_exact(params):

"""Evaluate negative expected cost using exact expectation values."""

p = len(params) // 2

gammas = params[:p]

betas = params[p:]

circuit = qaoa_circuit(gammas, betas)

# Build the cost Hamiltonian as a PauliSum

cost_hamiltonian = sum(

0.5 * (cirq.I(qubits[0]) - cirq.Z(qubits[i]) * cirq.Z(qubits[j]))

for i, j in edges

)

# Compute the exact expectation value

result = simulator.simulate(circuit)

state_vector = result.final_state_vector

expectation = cost_hamiltonian.expectation_from_state_vector(

state_vector, qubit_map={q: i for i, q in enumerate(qubits)}

).real

return -expectation # Negative for minimization

The two approaches differ in important ways:

- Sampling introduces statistical noise (shot noise). With 1000 shots, the standard error on the mean cost is roughly sigma / sqrt(1000), where sigma is the standard deviation of the cost distribution. This noise makes the optimization landscape bumpy, which can slow convergence. However, sampling is exactly what happens on real hardware, so it gives a realistic picture of algorithm performance.

- Exact expectation computes the true expectation value with no statistical noise. The optimization landscape is smooth, which helps gradient-free optimizers converge faster and more reliably. The downside: this requires storing and manipulating the full 2^n statevector, which is only feasible for small qubit counts (roughly n < 30 on a laptop). And it is not available on real hardware, only in simulation.

For development and debugging, use exact expectation values. For benchmarking performance that predicts hardware behavior, use sampling with a realistic shot count.

Classical Optimization with COBYLA

COBYLA (Constrained Optimization BY Linear Approximations) is a gradient-free optimizer. It builds a local linear model of the cost function and uses it to choose the next evaluation point.

Why use a gradient-free method for QAOA?

Gradient-based methods like Adam or L-BFGS require computing the gradient of the cost function with respect to each parameter. On a quantum computer, this is done using the parameter-shift rule: for each parameter, you evaluate the circuit at two shifted values (parameter + pi/2 and parameter - pi/2) and take the difference. This means 2 extra circuit evaluations per parameter per gradient step. For p=1 QAOA with 2 parameters, that is 4 extra evaluations per step. For p=3 with 6 parameters, it is 12 extra evaluations. The overhead scales linearly with the number of parameters.

COBYLA avoids this overhead entirely. It uses only function evaluations (no gradients), which means each optimization step requires just one circuit execution. The trade-off is that COBYLA may need more total steps to converge, and it can get stuck in local minima more easily than gradient-based methods on smooth landscapes. For small p (1 or 2), COBYLA works well in practice.

# p=1 QAOA

p = 1

initial_params = np.random.uniform(0, np.pi, size=2 * p)

result_p1 = minimize(evaluate_qaoa, initial_params, method='COBYLA',

options={'maxiter': 200})

# p=2 QAOA

p = 2

initial_params = np.random.uniform(0, np.pi, size=2 * p)

result_p2 = minimize(evaluate_qaoa, initial_params, method='COBYLA',

options={'maxiter': 500})

print(f"p=1 best expected cut: {-result_p1.fun:.3f}")

print(f"p=2 best expected cut: {-result_p2.fun:.3f}")

print(f"Optimal max cut for this graph: 5")

Grid Search as an Alternative

For small p, the QAOA cost landscape has a periodic structure (the cost function is periodic in both gamma and beta with period 2*pi). This makes grid search a viable alternative to black-box optimization, especially for p=1 where the parameter space is only two-dimensional.

# Grid search over (gamma, beta) for p=1

n_grid = 50

gamma_range = np.linspace(0, 2 * np.pi, n_grid)

beta_range = np.linspace(0, np.pi, n_grid)

best_cost = 0

best_params = (0, 0)

for gamma in gamma_range:

for beta in beta_range:

params = np.array([gamma, beta])

cost = -evaluate_qaoa_exact(params)

if cost > best_cost:

best_cost = cost

best_params = (gamma, beta)

print(f"Grid search best expected cut: {best_cost:.3f}")

print(f"Best (gamma, beta): ({best_params[0]:.3f}, {best_params[1]:.3f})")

The grid search evaluates 50 x 50 = 2500 parameter combinations. For p=1 this is practical (each evaluation is fast in simulation), and it gives a complete picture of the landscape. For p=2 and beyond, the parameter space grows to 4+ dimensions and grid search becomes impractical; use COBYLA or another optimizer instead.

Comparing p=1 vs p=2

With p=1, QAOA has limited expressivity. It can find good partitions but typically falls short of the true optimum. With p=2, the circuit has more variational freedom and can explore a richer set of quantum states. For this 5-node graph the optimal cut is 5 (cutting edges 0-1, 0-2, 1-3, 2-4, 3-4).

To inspect the best solution found:

# Run the optimized circuit and check the most frequent bitstring

p = 2

gammas = result_p2.x[:p]

betas = result_p2.x[p:]

circuit = qaoa_circuit(gammas, betas)

circuit.append(cirq.measure(*qubits, key='result'))

result = simulator.run(circuit, repetitions=5000)

hist = result.histogram(key='result')

most_common = max(hist, key=hist.get)

bitstring = format(most_common, f'0{n_qubits}b')

print(f"Most frequent bitstring: {bitstring}")

print(f"Cut value: {cost_from_bitstring([int(b) for b in bitstring])}")

Common Mistakes

Working with QAOA in Cirq involves several conventions that are easy to get wrong. Here are the most frequent pitfalls:

Wrong gamma convention with ZZPowGate. Cirq’s ZZPowGate(exponent=t) applies the unitary exp(i * pi * t * Z_i Z_j). If you intend a rotation angle of gamma, you need to set exponent = gamma / pi (as in the code above). A common error is to pass gamma directly as the exponent, which multiplies the intended rotation by pi. The optimizer might still converge, but it will find a different (rescaled) optimal gamma value, and transferring parameters between implementations with different conventions will produce wrong results.

Forgetting the minus sign in the cost function. The evaluate_qaoa function returns the negative expected cost because scipy.optimize.minimize minimizes by default. If you accidentally return the positive cost, COBYLA will minimize the cut value instead of maximizing it, and you will converge to the worst partition instead of the best one. This is a surprisingly common bug that produces “results” that look plausible but are wrong.

Starting COBYLA with all-zero initial parameters. When gamma = 0, the problem unitary is the identity (no rotation). When beta = 0, the mixer unitary is also the identity. The circuit reduces to just the initial Hadamard layer, which produces a uniform superposition over all bitstrings. At this point, the cost function is exactly the average cut value over all partitions, and small perturbations to gamma and beta produce nearly zero change in cost. COBYLA can get stuck at this flat region. Always initialize with random parameters drawn from a reasonable range, such as [0, pi].

Comparing p=1 and p=2 with different random seeds. The sampling-based cost function introduces shot noise, and the optimizer uses random initial parameters. If you run p=1 and p=2 with different random seeds, the comparison is not fair: one run might get lucky with its initial parameters or its measurement samples. For a meaningful comparison, fix the random seed before each run:

np.random.seed(42)

# ... run p=1 ...

np.random.seed(42)

# ... run p=2 ...

Or better yet, run each setting multiple times and compare the distributions.

Key Takeaways

- QAOA is a hybrid quantum-classical algorithm that encodes combinatorial optimization problems as quantum Hamiltonians and uses parameterized circuits to find approximate solutions.

- The problem Hamiltonian for Max-Cut uses ZZ interactions, one per graph edge. The term (I - Z_i Z_j)/2 is the indicator function for whether edge (i,j) is cut.

- The mixer Hamiltonian uses X rotations to drive transitions between computational basis states, ensuring the algorithm can explore all possible solutions.

- Increasing p improves solution quality at the cost of deeper circuits and more parameters. For NISQ hardware, p=1 or p=2 is practical.

- COBYLA is a practical optimizer for QAOA since it does not require gradient information, which avoids the 2x-per-parameter overhead of the parameter-shift rule. Grid search is a useful alternative for p=1.

- Use exact expectation values during development for clean optimization landscapes. Switch to sampling when benchmarking realistic hardware performance.

- Watch out for convention mismatches in gate definitions, sign errors in the cost function, degenerate initial parameters, and inconsistent random seeds when comparing runs.

For larger graphs or higher p values, consider using cirq.PauliSum to compute expectation values analytically via simulator.simulate_expectation_values instead of sampling.

Was this tutorial helpful?