Quantum Amplitude Estimation: From Theory to Finance Applications

Implement canonical QAE and Iterative QAE in Qiskit, understand the connection to Grover and QPE, and apply amplitude estimation to European call option pricing.

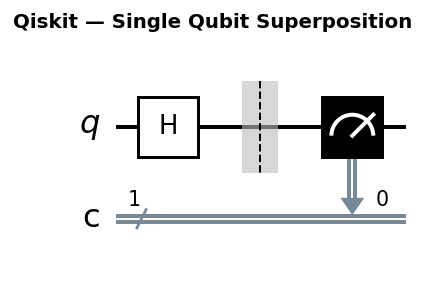

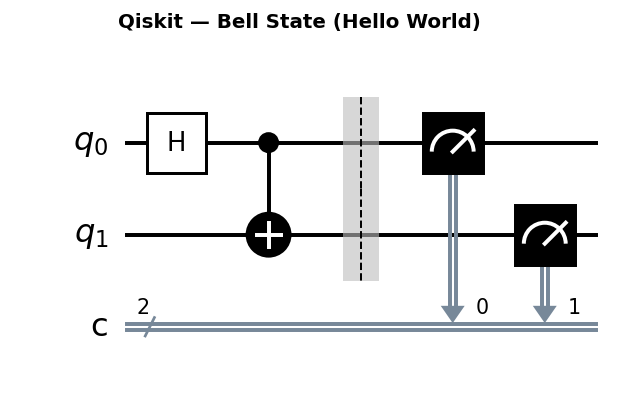

Circuit diagrams

Quantum Amplitude Estimation (QAE) is one of the most practically motivated near-term quantum algorithms. It estimates the probability that a quantum state is in a marked subspace . Classical Monte Carlo computes such expectations with error using samples. QAE achieves error using calls to the state preparation oracle, a quadratic speedup.

The central application in quantum finance is pricing derivatives: the expectation of a payoff function over an underlying asset distribution maps directly to an amplitude estimation problem.

Mathematical Derivation of the QAE Speedup

To understand why QAE offers a quadratic speedup, we need to trace the argument from Grover’s operator through quantum phase estimation to the final accuracy bound.

Classical baseline. Classical Monte Carlo estimates an expectation by drawing independent samples and computing the sample mean . By the central limit theorem, the standard error is , so achieving error requires samples.

Quantum setup. Suppose a unitary prepares a state that encodes the quantity of interest as an amplitude:

where is the amplitude we wish to estimate. The Grover operator (defined below) has eigenvalues where .

Phase estimation precision. Quantum Phase Estimation (QPE) with counting qubits extracts the phase with precision:

This requires controlled applications of , since QPE applies for a total of oracle calls.

From phase precision to amplitude precision. Since , the error in relates to the error in via the chain rule:

Since , we get . Setting oracle queries gives:

Compare this to the classical . For a target accuracy :

| Method | Queries needed | Scaling |

|---|---|---|

| Classical Monte Carlo | queries give error | |

| Quantum Amplitude Estimation | queries give error |

This is the quadratic speedup. For , classical Monte Carlo needs roughly samples, while QAE needs roughly oracle calls.

The Core Idea: Amplitude and the Grover Operator

Given a circuit that prepares , define the Grover operator:

where reflects about and marks the good states. The eigenvalues of are . Quantum Phase Estimation on extracts the phase , giving .

The Grover Operator Q in Detail

The Grover operator is built from two reflections. Understanding each component is essential for implementing QAE correctly.

: Reflection about the good subspace. This operator flips the sign of states in the “good” subspace and leaves “bad” states unchanged:

In practice, when the good/bad distinction is encoded in a single objective qubit (qubit 0 in Qiskit convention), is simply a gate on that qubit. The gate maps and , which flips the sign of the “good” component if marks success.

: Reflection about . This operator flips the sign of every computational basis state except :

For qubits, implement it as:

- Apply to all qubits (maps to )

- Apply a multi-controlled gate (flips sign of only)

- Apply to all qubits (undo step 1)

This sequence produces .

Full operator . The operator applies first (mark good states), then (uncompute state preparation), then (reflect about zero), then (re-prepare), with an overall minus sign.

Eigenvalue derivation. The key insight is that acts within a two-dimensional subspace spanned by and . In this subspace, acts as a rotation by angle :

where . The eigenvalues of this rotation matrix are , with eigenstates:

QPE extracts the phase , from which we recover .

Canonical QAE with QPE

import numpy as np

from qiskit import QuantumCircuit, QuantumRegister, ClassicalRegister

from qiskit.circuit.library import QFT

from qiskit.primitives import StatevectorSampler as Sampler

from qiskit_algorithms import IterativeAmplitudeEstimation, EstimationProblem

import matplotlib.pyplot as plt

# Note: qiskit-finance examples require: pip install qiskit-finance

def build_state_preparation(a_target):

"""

Simple A circuit: rotates |0> to sqrt(1-a)|0> + sqrt(a)|1>.

Objective qubit is qubit 0.

"""

theta = 2 * np.arcsin(np.sqrt(a_target))

qc = QuantumCircuit(1)

qc.ry(theta, 0)

return qc

def canonical_qae(A_circuit, m_qubits, shots=10000):

"""

Canonical QAE using QPE with m counting qubits.

Returns estimated amplitude a_hat.

"""

n_objective = A_circuit.num_qubits

counting = QuantumRegister(m_qubits, 'count')

objective = QuantumRegister(n_objective, 'obj')

creg = ClassicalRegister(m_qubits)

qc = QuantumCircuit(counting, objective, creg)

# Initialize counting qubits

qc.h(counting)

# Apply controlled-Q^(2^j) for each counting qubit

# For this demo, we use A directly and build the Grover operator

qc.append(A_circuit, objective)

# Apply A^\dagger for QPE (simplified: just measure A's output)

# Full QPE-based QAE requires controlled-Q gates; here we sketch structure

qc.append(QFT(m_qubits, inverse=True), counting)

qc.measure(counting, creg)

sampler = Sampler()

job = sampler.run([(qc, [], shots)])

result = job.result()

counts = result[0].data.meas.get_counts()

# Most frequent bitstring gives estimated phase

best = max(counts, key=counts.get)

k = int(best, 2)

theta_hat = k * np.pi / (2**m_qubits)

a_hat = np.sin(theta_hat)**2

return a_hat

# Test with known amplitude

a_true = 0.3

A = build_state_preparation(a_true)

print(f"True amplitude: {a_true}")

print(f"Circuit:\n{A.draw()}")

Iterative QAE (IQAE): No QPE Required

Canonical QAE needs ancilla qubits and a full QPE circuit with controlled- gates, which are deep. Iterative QAE (Suzuki et al., 2020) avoids QPE entirely. It uses a classical outer loop that adaptively chooses the Grover oracle power and the number of shots, achieving the same query complexity with shallow circuits.

How IQAE Works: The Algorithm in Detail

The Suzuki et al. (2020) algorithm maintains a confidence interval for the true angle and iteratively tightens it. Here is the step-by-step procedure:

Step 1: Initialize. Set , , and choose the target precision and confidence level .

Step 2: Choose the oracle power . In each round , select to be the largest power such that the interval maps injectively under the function . Intuitively, applying amplifies the phase by a factor of , which increases sensitivity but introduces aliasing if the interval is too wide. The algorithm picks to be as large as possible without aliasing.

Step 3: Run the quantum circuit. Prepare , apply , and measure the objective qubit. Repeat for shots. Let be the number of times the objective qubit is measured as .

Step 4: Update the confidence interval. The probability of measuring after is . Using the observed fraction and a Chernoff-Hoeffding bound, compute a confidence interval for . Invert through to obtain an updated interval for . Intersect with the previous interval .

Step 5: Check convergence. If (ensuring that the amplitude precision meets the target), stop. Otherwise, return to Step 2.

The schedule of oracle powers roughly follows , doubling each round. Since round uses shots with , the total number of oracle calls is:

This achieves the same total query complexity as canonical QAE, but each individual circuit has depth at most for that round.

from qiskit_algorithms import IterativeAmplitudeEstimation, EstimationProblem

# Build estimation problem

a_true = 0.3

A = build_state_preparation(a_true)

# Mark the |1> state on the objective qubit as "good"

problem = EstimationProblem(

state_preparation=A,

objective_qubits=[0],

)

# Run IQAE

iqae = IterativeAmplitudeEstimation(

epsilon_target=0.01, # target accuracy

alpha=0.05, # confidence level 1 - alpha = 95%

sampler=Sampler(),

)

result = iqae.estimate(problem)

print(f"True amplitude: {a_true:.4f}")

print(f"IQAE estimate: {result.estimation:.4f}")

print(f"95% CI: [{result.confidence_interval[0]:.4f}, {result.confidence_interval[1]:.4f}]")

print(f"Oracle queries used: {result.num_oracle_queries}")

IQAE’s confidence interval narrows as , matching the theoretical speedup over Monte Carlo’s .

Comparing QAE Variants

Several QAE variants have been developed, each with different trade-offs. The following table summarizes the four main approaches:

| Algorithm | Ancilla qubits | Circuit depth | Confidence interval | Key property |

|---|---|---|---|---|

| Canonical QAE | QPE-based, asymptotically optimal | |||

| IQAE (Suzuki et al.) | 0 | No ancillas, adaptive schedule | ||

| MLAE (Maximum Likelihood) | 0 | Uses ML fitting instead of Chernoff bounds | ||

| FQAE (Faster QAE) | 0 | Better constant factors in query count |

MLAE (Suzuki et al., 2020) runs circuits with several different Grover powers , collects the measurement outcomes, and fits the amplitude parameter via maximum likelihood estimation. It avoids the Chernoff bound step of IQAE and can achieve tighter estimates for the same number of queries in practice.

from qiskit_algorithms import MaximumLikelihoodAmplitudeEstimation

a_true = 0.3

A = build_state_preparation(a_true)

problem = EstimationProblem(

state_preparation=A,

objective_qubits=[0],

)

# MLAE with a specified evaluation schedule

# evaluation_schedule is the list of Grover powers k to use

mlae = MaximumLikelihoodAmplitudeEstimation(

evaluation_schedule=5, # uses powers k = 0, 1, 2, 4, 8 (geometric schedule)

sampler=Sampler(),

)

result_mlae = mlae.estimate(problem)

print(f"True amplitude: {a_true:.4f}")

print(f"MLAE estimate: {result_mlae.estimation:.4f}")

print(f"95% CI: [{result_mlae.confidence_interval[0]:.4f}, {result_mlae.confidence_interval[1]:.4f}]")

print(f"Oracle queries used: {result_mlae.num_oracle_queries}")

The choice of variant depends on hardware constraints. IQAE is the most widely used in Qiskit because it adapts to the problem automatically. MLAE can be preferable when you want to fix the circuit schedule in advance (useful for batch execution on cloud hardware). Canonical QAE is mainly of theoretical interest today, since the deep controlled- circuits are impractical on near-term devices.

Accuracy vs Circuit Depth Trade-off

The key engineering tension in QAE is between estimation accuracy and circuit depth:

- Canonical QAE with counting qubits achieves error but requires circuits of depth due to high-power Grover iterations.

- IQAE achieves error with oracle complexity and maximum circuit depth , matching canonical QAE but spreading the work over many shorter circuits.

- Classical Monte Carlo achieves error with circuit evaluations, each of depth .

For near-term hardware with gate error rate , circuits deeper than gates accumulate too much noise. This limits how many Grover iterations can be applied, reducing QAE’s advantage. Error mitigation and fault tolerance are required for the full quantum speedup.

Accuracy vs. Circuit Depth: Printed Comparison Table

import numpy as np

epsilons = [0.1, 0.05, 0.02, 0.01, 0.005]

print(f"{'epsilon':>10} | {'Classical MC':>14} | {'IQAE queries':>14} | {'Max depth (k)':>14} | {'Speedup':>10}")

print("-" * 72)

for eps in epsilons:

classical = int(np.ceil(1 / eps**2))

# IQAE total queries: approximately pi/(4*epsilon)

quantum = int(np.ceil(np.pi / (4 * eps)))

# Maximum single-circuit depth (largest Grover power)

max_k = int(np.ceil(1 / (2 * eps)))

speedup = classical / quantum

print(f"{eps:>10.3f} | {classical:>14,} | {quantum:>14,} | {max_k:>14,} | {speedup:>10.1f}x")

Expected output:

epsilon | Classical MC | IQAE queries | Max depth (k) | Speedup

------------------------------------------------------------------------

0.100 | 100 | 8 | 5 | 12.5x

0.050 | 400 | 16 | 10 | 25.0x

0.020 | 2,500 | 40 | 25 | 62.5x

0.010 | 10,000 | 79 | 50 | 126.6x

0.005 | 40,000 | 158 | 100 | 253.2x

The speedup ratio grows as , confirming the quadratic advantage.

import numpy as np

# Illustrate accuracy vs queries

epsilons = [0.1, 0.05, 0.02, 0.01, 0.005]

classical_queries = [int(1 / e**2) for e in epsilons]

quantum_queries = [int(np.pi / (4 * e)) for e in epsilons] # O(1/epsilon)

plt.figure(figsize=(7, 4))

plt.loglog(epsilons, classical_queries, 'o-', label='Classical MC: O(1/eps^2)')

plt.loglog(epsilons, quantum_queries, 's-', label='QAE: O(1/eps)')

plt.xlabel('Target error epsilon')

plt.ylabel('Number of oracle queries')

plt.title('QAE vs Monte Carlo Query Complexity')

plt.legend()

plt.gca().invert_xaxis()

plt.tight_layout()

plt.savefig('qae_complexity.png', dpi=150)

Oracle Query Count: Empirical Verification

The following code runs IQAE at several target accuracies and records the actual number of oracle queries used. This lets us verify empirically that IQAE scales as .

import numpy as np

from qiskit.primitives import StatevectorSampler as Sampler

from qiskit_algorithms import IterativeAmplitudeEstimation, EstimationProblem

a_true = 0.3

A = build_state_preparation(a_true)

problem = EstimationProblem(

state_preparation=A,

objective_qubits=[0],

)

epsilons = [0.1, 0.05, 0.01, 0.005]

print(f"{'epsilon':>10} | {'Classical (1/eps^2)':>20} | {'IQAE queries':>14} | {'Speedup':>10}")

print("-" * 64)

for eps in epsilons:

iqae = IterativeAmplitudeEstimation(

epsilon_target=eps,

alpha=0.05,

sampler=Sampler(),

)

result = iqae.estimate(problem)

classical = int(np.ceil(1 / eps**2))

speedup = classical / max(result.num_oracle_queries, 1)

print(f"{eps:>10.3f} | {classical:>20,} | {result.num_oracle_queries:>14,} | {speedup:>10.1f}x")

print("\nIf IQAE scales as O(1/eps), doubling 1/eps should roughly double the query count.")

Confidence Intervals in Depth

IQAE returns a confidence interval with the guarantee that:

The parameter (default 0.05 for 95% confidence) controls how conservative the interval is. The width of the interval scales as , matching the requested precision.

Tightening the Confidence Interval

Reducing produces a wider interval (higher confidence) for the same . Conversely, reducing produces a narrower interval at higher query cost.

import numpy as np

from qiskit.primitives import StatevectorSampler as Sampler

from qiskit_algorithms import IterativeAmplitudeEstimation, EstimationProblem

a_true = 0.3

A = build_state_preparation(a_true)

problem = EstimationProblem(state_preparation=A, objective_qubits=[0])

# Compare different confidence levels

for alpha in [0.10, 0.05, 0.01, 0.001]:

iqae = IterativeAmplitudeEstimation(

epsilon_target=0.01,

alpha=alpha,

sampler=Sampler(),

)

result = iqae.estimate(problem)

ci = result.confidence_interval

width = ci[1] - ci[0]

print(f"alpha={alpha:.3f} ({100*(1-alpha):.1f}% CI): "

f"[{ci[0]:.4f}, {ci[1]:.4f}], width={width:.4f}, "

f"queries={result.num_oracle_queries}")

Amplitude vs. Expectation Value

An important subtlety: the confidence interval is for the amplitude , not directly for the expectation value of interest. When using QAE for a finance or integration application, the mapping from amplitude to expectation value involves a normalization factor:

The normalization factor depends on how the payoff function was encoded into the amplitude. For example, in option pricing, Qiskit Finance provides the interpret() method that handles this conversion:

# The raw amplitude from QAE

raw_amplitude = result.estimation

# The interpret() method applies the correct normalization

# to map from amplitude space back to dollar values

estimated_price = european_call.interpret(result.estimation_processed)

The confidence interval must also be mapped through interpret(). Since the mapping is typically linear, the interval transforms straightforwardly:

Note that the confidence interval is not necessarily symmetric around the estimate. The Chernoff bound used in IQAE produces intervals that can be slightly asymmetric, especially near the boundaries or .

European Call Option Pricing

A European call option pays at maturity, where is the asset price and is the strike. The fair price is . Under Black-Scholes, follows a log-normal distribution.

Qiskit Finance encodes the distribution in a quantum register and computes the expected payoff as an amplitude.

# Requires: pip install qiskit-finance

from qiskit_finance.circuit.library import LogNormalDistribution

from qiskit_finance.applications.estimation import EuropeanCallPricing

# Parameters

S0 = 2.0 # initial price

vol = 0.4 # volatility

r = 0.05 # risk-free rate

T = 40/365 # time to maturity

K = 1.896 # strike price

c_approx = 10 # number of qubits for distribution

# Log-normal distribution circuit

mu = (r - 0.5 * vol**2) * T + np.log(S0)

sigma = vol * np.sqrt(T)

mean = np.exp(mu + sigma**2 / 2)

variance = (np.exp(sigma**2) - 1) * np.exp(2 * mu + sigma**2)

low = np.maximum(0, mean - 3 * np.sqrt(variance))

high = mean + 3 * np.sqrt(variance)

num_uncertainty_qubits = 3

uncertainty_model = LogNormalDistribution(

num_uncertainty_qubits,

mu=mu,

sigma=sigma**2,

bounds=(low, high),

)

# Build the pricing problem

european_call = EuropeanCallPricing(

num_state_qubits=num_uncertainty_qubits,

strike_price=K,

rescaling_factor=0.25,

bounds=(low, high),

uncertainty_model=uncertainty_model,

)

problem_finance = european_call.to_estimation_problem()

# Run IQAE on the pricing problem

iqae_finance = IterativeAmplitudeEstimation(

epsilon_target=0.01,

alpha=0.05,

sampler=Sampler(),

)

result_finance = iqae_finance.estimate(problem_finance)

confidence_interval = european_call.interpret(result_finance.confidence_interval_processed)

estimated_price = european_call.interpret(result_finance.estimation_processed)

print(f"Estimated option price: {estimated_price:.4f}")

print(f"95% confidence interval: [{confidence_interval[0]:.4f}, {confidence_interval[1]:.4f}]")

# Black-Scholes reference

from scipy.stats import norm

d1 = (np.log(S0 / K) + (r + 0.5 * vol**2) * T) / (vol * np.sqrt(T))

d2 = d1 - vol * np.sqrt(T)

bs_price = S0 * norm.cdf(d1) - K * np.exp(-r * T) * norm.cdf(d2)

print(f"Black-Scholes reference: {bs_price:.4f}")

Amplitude Estimation for Numerical Integration

QAE can estimate definite integrals, generalizing the option pricing example to arbitrary functions. The idea is to encode a function on as an amplitude and use QAE to extract the integral .

Encoding scheme. Discretize into equally spaced points for . The state preparation operator does two things:

- Prepares a uniform superposition over :

- For each , applies a controlled rotation on an ancilla qubit:

After these steps, the probability of measuring the ancilla as is:

QAE estimates this amplitude , giving us the integral estimate.

Important constraint: The function values must satisfy for the rotation to produce valid amplitudes. For functions with range outside , normalize by the maximum value and rescale the final answer.

Example: Estimating

import numpy as np

from qiskit import QuantumCircuit

from qiskit.primitives import StatevectorSampler as Sampler

from qiskit_algorithms import IterativeAmplitudeEstimation, EstimationProblem

n_qubits = 4 # 2^4 = 16 discretization points

num_points = 2**n_qubits

def build_integration_circuit(n_qubits):

"""

Build a circuit that encodes f(x) = x^2 for uniform x in [0,1].

Uses n_qubits for the x register and 1 ancilla (objective qubit).

"""

n_total = n_qubits + 1 # x register + objective qubit

qc = QuantumCircuit(n_total)

# Step 1: Uniform superposition over x values

for i in range(n_qubits):

qc.h(i)

# Step 2: Controlled rotations to encode f(x) = x^2

# For each basis state |j>, rotate ancilla by angle 2*arcsin(sqrt(f(x_j)))

# where x_j = j / 2^n and f(x_j) = x_j^2

num_points = 2**n_qubits

for j in range(num_points):

x_j = j / num_points

f_val = x_j**2 # f(x) = x^2, already in [0, 1]

if f_val > 0:

# Rotation angle for encoding amplitude sqrt(f_val)

angle = 2 * np.arcsin(np.sqrt(f_val))

# Build the control pattern for basis state |j>

# Convert j to binary, apply X gates for 0-bits, then multi-controlled RY

ctrl_state = format(j, f'0{n_qubits}b')

# Apply X gates to qubits where ctrl_state has '0'

for bit_idx, bit in enumerate(reversed(ctrl_state)):

if bit == '0':

qc.x(bit_idx)

# Multi-controlled RY on the objective qubit (last qubit)

qc.mcry(angle, list(range(n_qubits)), n_qubits)

# Undo X gates

for bit_idx, bit in enumerate(reversed(ctrl_state)):

if bit == '0':

qc.x(bit_idx)

return qc

A_integration = build_integration_circuit(n_qubits)

# The objective qubit is the last qubit (index n_qubits)

problem_integration = EstimationProblem(

state_preparation=A_integration,

objective_qubits=[n_qubits],

)

iqae_integration = IterativeAmplitudeEstimation(

epsilon_target=0.01,

alpha=0.05,

sampler=Sampler(),

)

result_integration = iqae_integration.estimate(problem_integration)

# The estimated amplitude approximates the integral

integral_estimate = result_integration.estimation

exact_value = 1 / 3 # integral of x^2 from 0 to 1

print(f"QAE estimate of integral(x^2, 0, 1): {integral_estimate:.4f}")

print(f"Exact value: {exact_value:.4f}")

print(f"Absolute error: {abs(integral_estimate - exact_value):.4f}")

print(f"Note: discretization with {num_points} points introduces its own error")

This approach generalizes to any function that can be efficiently encoded as a quantum rotation. The discretization error from using points adds an contribution on top of the QAE estimation error.

Portfolio Risk Estimation: VaR and CVaR

Beyond option pricing, QAE applies to portfolio risk metrics. Value at Risk (VaR) and Conditional Value at Risk (CVaR) are standard measures of portfolio downside risk. Both can be formulated as amplitude estimation problems.

Value at Risk (VaR) at confidence level is the loss threshold such that the probability of exceeding it is at most :

Conditional VaR (CVaR) is the expected loss given that the loss exceeds VaR:

Quantum Approach to Risk Estimation

The quantum encoding works as follows:

- Encode the portfolio loss distribution as a quantum state, where the amplitudes represent the probability of each discretized loss level.

- Mark the “bad” outcomes where loss exceeds a threshold . This sets up the objective qubit.

- Use QAE to estimate the probability of loss exceeding .

- For VaR: perform a binary search over thresholds to find the critical value.

- For CVaR: encode a conditional payoff and estimate its expectation.

import numpy as np

from qiskit import QuantumCircuit

from qiskit.primitives import StatevectorSampler as Sampler

from qiskit_algorithms import IterativeAmplitudeEstimation, EstimationProblem

def build_risk_estimation_circuit(loss_probs, threshold_index):

"""

Build a circuit for estimating Pr[Loss > threshold].

Args:

loss_probs: array of probabilities for each discretized loss level.

Must sum to 1. Length must be a power of 2.

threshold_index: index in loss_probs above which losses are "bad".

"""

n_qubits = int(np.log2(len(loss_probs)))

assert 2**n_qubits == len(loss_probs), "Length must be power of 2"

n_total = n_qubits + 1 # loss register + objective qubit

qc = QuantumCircuit(n_total)

# Step 1: Encode the loss distribution

# Use initialize to prepare the exact probability amplitudes

amplitudes_full = np.zeros(2**n_total)

for j, p in enumerate(loss_probs):

if j >= threshold_index:

# "Bad" outcome: objective qubit = |1>

# Index in full register: j (loss) with qubit n_qubits = 1

idx_bad = j + 2**n_qubits

amplitudes_full[idx_bad] = np.sqrt(p)

else:

# "Good" outcome: objective qubit = |0>

amplitudes_full[j] = np.sqrt(p)

# Normalize (should already be normalized if loss_probs sums to 1)

norm = np.linalg.norm(amplitudes_full)

if norm > 0:

amplitudes_full = amplitudes_full / norm

qc.initialize(amplitudes_full, range(n_total))

return qc

# Example: simple portfolio with 8 discretized loss levels

n_loss_qubits = 3

num_levels = 2**n_loss_qubits

# Simulated loss distribution (skewed, heavier right tail)

raw_probs = np.array([0.05, 0.15, 0.25, 0.25, 0.15, 0.08, 0.05, 0.02])

loss_probs = raw_probs / raw_probs.sum() # normalize to valid distribution

# Set threshold: losses at index 5, 6, 7 are "bad"

threshold_index = 5

prob_exceed_classical = loss_probs[threshold_index:].sum()

A_risk = build_risk_estimation_circuit(loss_probs, threshold_index)

problem_risk = EstimationProblem(

state_preparation=A_risk,

objective_qubits=[n_loss_qubits], # objective qubit is the last one

)

iqae_risk = IterativeAmplitudeEstimation(

epsilon_target=0.01,

alpha=0.05,

sampler=Sampler(),

)

result_risk = iqae_risk.estimate(problem_risk)

print(f"Estimated Pr[Loss > threshold]: {result_risk.estimation:.4f}")

print(f"Classical exact probability: {prob_exceed_classical:.4f}")

print(f"95% CI: [{result_risk.confidence_interval[0]:.4f}, "

f"{result_risk.confidence_interval[1]:.4f}]")

For a full portfolio risk application, you would replace the simple discrete distribution with one generated from correlated asset models (e.g., using a Gaussian copula or a multivariate normal distribution encoded via quantum circuits). The qiskit-finance library provides utilities for constructing these distributions.

Running on Real Hardware with Error Mitigation

On real quantum hardware, noise degrades QAE estimates. Each application of the Grover operator accumulates gate errors, so circuits with large oracle powers are the most affected. This creates a practical dilemma: higher powers give more information per shot, but noise corrupts the signal.

The Noise-Depth Trade-off

For a gate error rate per two-qubit gate, a circuit with gates has an overall fidelity of roughly . If each Grover iteration uses gates, then applying uses gates with fidelity:

For current hardware with and gates per Grover iteration, circuits become unreliable around , limiting the achievable accuracy to .

Practical Strategy: Limit Circuit Depth

IQAE’s epsilon_target parameter implicitly controls the maximum circuit depth. On near-term hardware, choose conservatively to keep circuits shallow enough for the available error rates.

from qiskit.primitives import StatevectorSampler as Sampler

from qiskit_algorithms import IterativeAmplitudeEstimation, EstimationProblem

# For real hardware, use SamplerV2 from qiskit_ibm_runtime:

#

# from qiskit_ibm_runtime import SamplerV2, QiskitRuntimeService

#

# service = QiskitRuntimeService()

# backend = service.least_busy(simulator=False, min_num_qubits=5)

# sampler = SamplerV2(backend)

#

# To limit circuit depth, set epsilon_target to a moderate value.

# On current hardware, epsilon_target=0.05 is often the practical limit.

a_true = 0.3

A = build_state_preparation(a_true)

problem = EstimationProblem(state_preparation=A, objective_qubits=[0])

# Simulate the hardware-limited scenario with a conservative epsilon

iqae_hw = IterativeAmplitudeEstimation(

epsilon_target=0.05, # conservative for near-term hardware

alpha=0.05,

sampler=Sampler(), # replace with SamplerV2(backend) for real hardware

)

result_hw = iqae_hw.estimate(problem)

print(f"Estimate (hardware-friendly): {result_hw.estimation:.4f}")

print(f"Oracle queries used: {result_hw.num_oracle_queries}")

print(f"True value: {a_true:.4f}")

print()

print("For real hardware, also consider:")

print(" - Dynamical decoupling to reduce idle-qubit decoherence")

print(" - Twirled readout error extinction (TREX) for measurement error mitigation")

print(" - Zero-noise extrapolation (ZNE) to estimate the noiseless result")

On real devices, additional error mitigation techniques help recover accuracy:

- Measurement error mitigation corrects for readout errors by calibrating the confusion matrix of the measurement qubits.

- Zero-noise extrapolation (ZNE) runs circuits at several artificially increased noise levels and extrapolates back to zero noise.

- Dynamical decoupling inserts identity-equivalent gate sequences during idle periods to suppress decoherence.

These techniques are available through qiskit_ibm_runtime options and can be combined with IQAE to improve results on near-term hardware.

Connection to Quantum Monte Carlo and Beyond

QAE is fundamentally a quantum-accelerated Monte Carlo method. Classical Monte Carlo underpins vast areas of computational science:

- Computational chemistry: estimating molecular energies, reaction rates, and thermodynamic properties via path integral Monte Carlo.

- Materials science: simulating phase transitions, lattice models, and transport properties.

- Financial engineering: pricing exotic derivatives, computing risk metrics, and optimizing portfolios.

- Machine learning: Bayesian inference, sampling from complex distributions, and training generative models.

A quadratic speedup in Monte Carlo would have broad impact across all these fields. However, several challenges remain before QAE delivers practical advantages:

Oracle construction. The hardest part of applying QAE is often building the oracle that encodes the distribution of interest. For financial distributions, this requires loading classical probability distributions into quantum states, which can itself be expensive. For physics simulations, encoding the Boltzmann distribution or path integral measure is a major open problem.

Quadratic vs. exponential. The QAE speedup is “only” quadratic. For a problem where classical Monte Carlo takes samples, QAE needs oracle calls, but each oracle call involves a full quantum circuit execution. If the circuit is much more expensive than a classical sample, the crossover point may be very large.

Constant factors. The scaling of QAE hides constant factors. In practice, IQAE uses roughly total queries (including shots), which shifts the crossover compared to the idealized .

Despite these challenges, QAE remains one of the strongest candidates for practical quantum advantage in the near to medium term, particularly in finance where the payoff from even modest speedups can be substantial.

Interpreting the Results

QAE encodes the entire distribution in superposition and extracts the expected payoff through interference, rather than sampling individual price paths. The quantum circuit directly encodes the log-normal distribution into amplitudes, and the payoff function is implemented as a controlled rotation.

The result depends critically on the number of qubits used to discretize the distribution. More qubits capture finer price resolution but increase circuit depth. The num_uncertainty_qubits=3 setting above gives a coarse 8-point discretization; real applications would use 10-12 qubits for sufficient accuracy.

The theoretical advantage appears only when the circuit can run with sufficient depth on real hardware. Current error rates require error mitigation or fault-tolerant execution to realize the query advantage over classical Monte Carlo.

Common Mistakes

Working with QAE involves several subtle pitfalls. Here are the most common mistakes and how to avoid them.

1. Confusing amplitude with probability . The amplitude estimated by QAE equals , which is the probability of measuring the objective qubit as . These are the same quantity only when the state is a simple superposition over computational basis states. In more complex encodings (multi-qubit registers with entanglement), the amplitude estimated by QAE is the probability of the objective qubit being , which encodes the quantity of interest through the specific circuit construction. Do not assume the raw amplitude is the final answer without understanding the encoding.

2. Passing a backend directly instead of a Sampler.

IQAE and other qiskit_algorithms estimators require a Sampler primitive, not a raw backend. This is a common source of errors when migrating from older Qiskit code:

# WRONG: passing a backend directly

# iqae = IterativeAmplitudeEstimation(epsilon_target=0.01, alpha=0.05, backend=backend)

# CORRECT: wrap in a Sampler

from qiskit.primitives import StatevectorSampler as Sampler

sampler = Sampler()

iqae = IterativeAmplitudeEstimation(epsilon_target=0.01, alpha=0.05, sampler=sampler)

# For real hardware, use SamplerV2:

# from qiskit_ibm_runtime import SamplerV2

# sampler = SamplerV2(backend)

3. Setting epsilon_target too small for near-term hardware. A target accuracy of requires Grover circuits with iterations. On current hardware with error rates around per gate, such circuits produce noise-dominated results. Start with on real devices and decrease only if results are stable.

4. Not normalizing the payoff function to . Amplitude encoding requires that the function being estimated maps to valid probabilities in . If your payoff function has range for some , divide by before encoding and multiply the final result by . Failing to normalize produces invalid quantum states (the rotation angles exceed , wrapping around and giving incorrect results).

# Example: if payoff ranges from 0 to 100

max_payoff = 100.0

normalized_payoff = payoff / max_payoff # now in [0, 1]

# ... run QAE on normalized_payoff ...

# Rescale the result

estimated_value = qae_result.estimation * max_payoff

5. Forgetting to call interpret() for finance problems.

The raw QAE result is an amplitude in . For finance applications built with qiskit-finance, the interpret() method applies the rescaling factor and offset to convert from amplitude space back to the original units (e.g., dollars). Skipping this step gives a number that looks plausible but has the wrong scale.

# WRONG: using the raw amplitude as the price

wrong_price = result_finance.estimation

# CORRECT: apply the interpret() method

correct_price = european_call.interpret(result_finance.estimation_processed)

6. Treating the confidence interval as symmetric. IQAE’s confidence interval is generally not symmetric around the point estimate. Near the boundaries ( close to 0 or 1), the interval can be noticeably skewed. Always report both bounds rather than using . This asymmetry arises because the Chernoff bound constrains each tail independently, and the mapping through introduces additional asymmetry.

Was this tutorial helpful?