Variational Quantum Eigensolver (VQE) Explained

How VQE works: the variational principle, ansatz design, classical optimizer loop, and a complete Qiskit implementation for finding the ground state of a simple Hamiltonian.

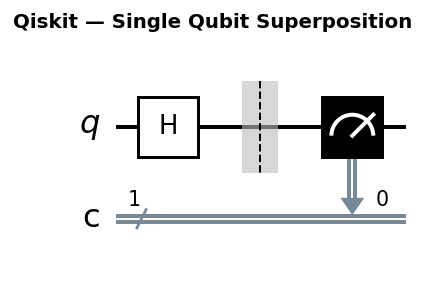

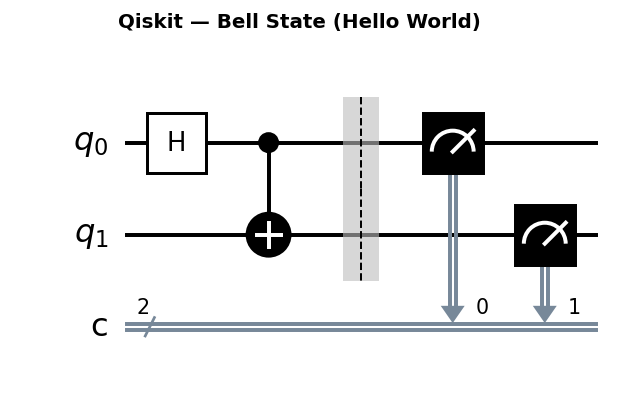

Circuit diagrams

The Core Idea

VQE is a hybrid quantum-classical algorithm designed to estimate the ground state energy of a quantum system. A parameterized quantum circuit (the ansatz) prepares a trial state |psi(theta)>, and a classical optimizer adjusts the parameters theta to minimize the expectation value of a Hamiltonian H:

E(theta) = <psi(theta)| H |psi(theta)>

The ground state energy is the minimum of E(theta) over all possible parameters. VQE runs on near-term hardware without requiring a fault-tolerant quantum computer, though noise limits the achievable accuracy. This makes it one of the most practical near-term quantum algorithms, despite its limitations.

The Variational Principle

The variational principle from quantum mechanics guarantees that for any normalized state |psi>:

<psi| H |psi> >= E_ground

Equality holds only when |psi> is the true ground state. This bound is the algorithm’s foundation: lower the expectation value, get closer to the ground state. VQE turns this into an optimization problem solvable with classical methods.

Why the Variational Principle Works: A Derivation

Every Hermitian Hamiltonian H has a spectral decomposition in terms of its eigenstates |k> and eigenvalues E_k:

H = sum_k E_k |k><k|

where E_0 <= E_1 <= E_2 <= … are the ordered eigenvalues. Any normalized trial state |psi> can be written as a linear combination of these eigenstates:

|psi> = sum_k c_k |k> with sum_k |c_k|^2 = 1

Computing the expectation value of H in this state gives:

<psi| H |psi> = sum_k |c_k|^2 E_k

Since E_0 is the smallest eigenvalue and sum_k |c_k|^2 = 1:

sum_k |c_k|^2 E_k >= E_0 * sum_k |c_k|^2 = E_0

Equality holds if and only if c_k = 0 for every k != 0. In other words, the expectation value equals E_0 only when |psi> is purely in the ground state.

This derivation reveals the mechanism behind VQE. The algorithm searches for parameters theta that make |psi(theta)> overlap as much as possible with the true ground state |0>. The closer the overlap, the closer E(theta) approaches E_0 from above.

Chemical Accuracy

In quantum chemistry, the standard threshold for a “useful” computation is chemical accuracy: 1.6 milliHartree (mHa), equivalent to 1 kcal/mol. If the VQE energy satisfies |E_VQE - E_exact| < 1.6 mHa, the result is considered chemically accurate.

For the H2 molecule at equilibrium bond length, the exact ground state energy is approximately -1.1373 Ha. A VQE run that produces -1.1353 Ha has an error of 2.0 mHa, which is just outside chemical accuracy. Reaching sub-mHa accuracy on real hardware remains a significant challenge because noise floors on current devices often exceed 1.6 mHa.

The H2 Hamiltonian: What Each Pauli Term Means

The simplified 2-qubit Hamiltonian used throughout this tutorial has four Pauli terms:

hamiltonian = SparsePauliOp.from_list([

("ZZ", -1.0523),

("IZ", 0.3979),

("ZI", -0.3979),

("XX", 0.1809),

])

This Hamiltonian comes from the Jordan-Wigner transformation of the second-quantized molecular Hamiltonian for H2 in the STO-3G basis, followed by a parity reduction from 4 qubits to 2 qubits. Each term has a physical meaning:

IZ and ZI (single-electron terms): These represent the one-body contributions, specifically kinetic energy plus nuclear attraction for each electron. IZ acts on qubit 1 alone; ZI acts on qubit 0 alone. Together they encode the single-particle energies of the bonding (sigma) and antibonding (sigma*) molecular orbitals.

ZZ (electron correlation): This two-body term captures the Coulomb repulsion between the two electrons. It distinguishes between configurations where both electrons occupy the same orbital versus different orbitals. The large coefficient (-1.0523) reflects the dominant role of electron-electron correlation in determining the ground state energy.

XX (hopping/exchange): This term represents the exchange integral between the bonding and antibonding orbitals. It allows amplitude to transfer between the |01> and |10> computational basis states, which correspond to different orbital occupations. Without this term, the Hamiltonian would be diagonal and trivially solvable classically.

The original 4-qubit Hamiltonian has 15 Pauli terms. Particle number conservation (exactly 2 electrons in 4 spin-orbitals) and spin symmetry (one alpha, one beta electron) together allow a 2-qubit reduction via the ParityMapper. This reduction halves the circuit depth and significantly improves gradient variance, as discussed in the symmetry reduction section below.

Choosing an Ansatz

The ansatz is the parameterized circuit. Its design is the central engineering challenge of VQE. Two main families exist:

Hardware-efficient ansatz: Layers of single-qubit rotations (RY, RZ) connected by CNOT gates. It uses the native gate set of the device, keeps depth low, and trains quickly. The downside is that it may not represent chemically meaningful states for larger molecules.

Chemically-motivated ansatz (UCCSD): Based on unitary coupled cluster theory. It encodes physically meaningful excitations and tends to converge reliably for small molecules, but requires significantly more gates and is difficult to run on current hardware.

The tradeoff is expressibility versus trainability. A more expressive ansatz can represent the true ground state, but it also risks barren plateaus (more on that below).

The UCCSD Ansatz for H2

For H2 with 2 qubits (after parity reduction), the UCCSD ansatz has a single parameter. The cluster operator for the only active double excitation is:

T - T† = theta * (a†_0 a_1 - a†_1 a_0)

Under the Jordan-Wigner mapping, this becomes a rotation in the qubit Hilbert space. The circuit starts from the Hartree-Fock reference state |10> (qubit 0 in the |1> state, representing one occupied orbital) and applies a parameterized rotation followed by an entangling gate:

import numpy as np

from qiskit.circuit import QuantumCircuit, Parameter

from qiskit.quantum_info import SparsePauliOp

from qiskit.primitives import StatevectorEstimator

from scipy.optimize import minimize

# UCCSD ansatz for H2 (2-qubit, 1 parameter)

def build_uccsd_h2() -> QuantumCircuit:

theta = Parameter("theta")

qc = QuantumCircuit(2)

# Prepare Hartree-Fock reference state |10>

qc.x(0)

# UCCSD rotation: parameterized excitation from |10> to |01>

qc.ry(theta, 0)

qc.cx(0, 1)

return qc

hamiltonian = SparsePauliOp.from_list([

("ZZ", -1.0523),

("IZ", 0.3979),

("ZI", -0.3979),

("XX", 0.1809),

])

ansatz = build_uccsd_h2()

estimator = StatevectorEstimator()

def cost_function(params: np.ndarray) -> float:

pub = (ansatz, hamiltonian, [params.tolist()])

result = estimator.run([pub]).result()

return float(result[0].data.evs[0])

result = minimize(cost_function, x0=[0.0], method="COBYLA", options={"maxiter": 200})

print(f"UCCSD energy: {result.fun:.6f} Ha")

print(f"Exact ground state: -1.1373 Ha")

print(f"Error: {abs(result.fun - (-1.1373)):.6f} Ha")

print(f"Parameters: {result.x}")

print(f"Function evals: {result.nfev}")

With a single parameter, the UCCSD ansatz for H2 converges rapidly and reaches the exact ground state energy to high precision. This is because the UCCSD ansatz encodes the correct physics: the only correlation in H2 is the double excitation from the bonding to the antibonding orbital.

The Optimization Loop

VQE repeats a tight loop between quantum and classical computation:

- Prepare |psi(theta)> on the quantum device using the current parameters.

- Measure the expectation value of H using the Estimator primitive.

- Pass the energy to a classical optimizer.

- The optimizer proposes new parameters and returns to step 1.

- Stop when the energy change per iteration falls below a convergence threshold.

The quantum device handles state preparation and measurement. The classical computer handles all parameter updates. Neither part can do the job alone.

Qiskit Implementation

This example finds the ground state energy of a simplified 2-qubit Hamiltonian that resembles the H2 molecule:

import numpy as np

from scipy.optimize import minimize

from qiskit.circuit import QuantumCircuit, ParameterVector

from qiskit.quantum_info import SparsePauliOp

from qiskit.primitives import StatevectorEstimator

# Define the observable (simplified H2 Hamiltonian in Pauli form)

hamiltonian = SparsePauliOp.from_list([

("ZZ", -1.0523),

("IZ", 0.3979),

("ZI", -0.3979),

("XX", 0.1809),

])

# Build a 2-qubit hardware-efficient ansatz

def build_ansatz(num_qubits: int, depth: int) -> QuantumCircuit:

theta = ParameterVector("theta", length=num_qubits * (depth + 1))

qc = QuantumCircuit(num_qubits)

idx = 0

for q in range(num_qubits):

qc.ry(theta[idx], q)

idx += 1

for _ in range(depth):

for q in range(num_qubits - 1):

qc.cx(q, q + 1)

for q in range(num_qubits):

qc.ry(theta[idx], q)

idx += 1

return qc

ansatz = build_ansatz(num_qubits=2, depth=2)

# Set up the Estimator and cost function

estimator = StatevectorEstimator()

def cost_function(params: np.ndarray) -> float:

pub = (ansatz, hamiltonian, [params.tolist()])

result = estimator.run([pub]).result()

return float(result[0].data.evs[0])

# Run the optimizer

initial_params = np.random.uniform(-np.pi, np.pi, ansatz.num_parameters)

result = minimize(

cost_function,

initial_params,

method="COBYLA",

options={"maxiter": 500, "rhobeg": 0.5},

)

print(f"Optimized energy: {result.fun:.6f} Ha")

print(f"Exact ground state: -1.1373 Ha")

print(f"Error: {abs(result.fun - (-1.1373)):.6f} Ha")

COBYLA is a gradient-free optimizer that works well for VQE because it does not require gradient information, which is expensive to compute on quantum hardware.

Parameter-Shift Rule for Gradients

VQE can also use gradient-based optimizers if gradients are computed via the parameter-shift rule. For a parameterized gate of the form exp(i * theta * G) where G is a generator satisfying G^2 = I (true for Pauli rotations like RY), the exact gradient of the expectation value is:

dE/d(theta) = [ E(theta + pi/2) - E(theta - pi/2) ] / 2

This formula gives the exact analytical gradient using only two extra circuit evaluations per parameter. No finite-difference approximation is needed, and no additional circuit depth is required.

For the 2-qubit depth-2 hardware-efficient ansatz with 6 parameters, computing the full gradient requires 12 extra circuit evaluations (2 per parameter). Each gradient step therefore costs 13 total evaluations: 1 for the energy itself plus 12 for the gradient.

Implementation: Parameter-Shift Gradients vs. Gradient-Free

The following code implements the parameter-shift rule manually and compares COBYLA (gradient-free) against L-BFGS-B (gradient-based) in convergence speed:

import numpy as np

from scipy.optimize import minimize

from qiskit.circuit import QuantumCircuit, ParameterVector

from qiskit.quantum_info import SparsePauliOp

from qiskit.primitives import StatevectorEstimator

hamiltonian = SparsePauliOp.from_list([

("ZZ", -1.0523),

("IZ", 0.3979),

("ZI", -0.3979),

("XX", 0.1809),

])

def build_ansatz(num_qubits: int, depth: int) -> QuantumCircuit:

theta = ParameterVector("theta", length=num_qubits * (depth + 1))

qc = QuantumCircuit(num_qubits)

idx = 0

for q in range(num_qubits):

qc.ry(theta[idx], q)

idx += 1

for _ in range(depth):

for q in range(num_qubits - 1):

qc.cx(q, q + 1)

for q in range(num_qubits):

qc.ry(theta[idx], q)

idx += 1

return qc

ansatz = build_ansatz(num_qubits=2, depth=2)

estimator = StatevectorEstimator()

# Track function evaluations for comparison

cobyla_energies = []

bfgs_energies = []

def eval_energy(params: np.ndarray) -> float:

"""Evaluate the expectation value at a single parameter point."""

pub = (ansatz, hamiltonian, [params.tolist()])

result = estimator.run([pub]).result()

return float(result[0].data.evs[0])

def parameter_shift_gradient(params: np.ndarray) -> np.ndarray:

"""Compute exact gradients using the parameter-shift rule."""

n_params = len(params)

gradient = np.zeros(n_params)

shift = np.pi / 2

for i in range(n_params):

params_plus = params.copy()

params_minus = params.copy()

params_plus[i] += shift

params_minus[i] -= shift

gradient[i] = (eval_energy(params_plus) - eval_energy(params_minus)) / 2.0

return gradient

# Use the same starting point for fair comparison

np.random.seed(42)

initial_params = np.random.uniform(-np.pi, np.pi, ansatz.num_parameters)

# --- COBYLA (gradient-free) ---

def cobyla_callback(params: np.ndarray) -> float:

energy = eval_energy(params)

cobyla_energies.append(energy)

return energy

cobyla_result = minimize(

cobyla_callback,

initial_params.copy(),

method="COBYLA",

options={"maxiter": 300, "rhobeg": 0.5},

)

# --- L-BFGS-B (gradient-based with parameter-shift) ---

def bfgs_cost(params: np.ndarray) -> float:

energy = eval_energy(params)

bfgs_energies.append(energy)

return energy

bfgs_result = minimize(

bfgs_cost,

initial_params.copy(),

method="L-BFGS-B",

jac=parameter_shift_gradient,

options={"maxiter": 100},

)

print("=== COBYLA (gradient-free) ===")

print(f" Final energy: {cobyla_result.fun:.6f} Ha")

print(f" Function evals: {cobyla_result.nfev}")

print("\n=== L-BFGS-B (parameter-shift gradients) ===")

print(f" Final energy: {bfgs_result.fun:.6f} Ha")

print(f" Function evals: {bfgs_result.nfev}")

print(f" Gradient evals: {bfgs_result.njev}")

print(f" Total circuit runs: {bfgs_result.nfev + bfgs_result.njev * 2 * ansatz.num_parameters}")

print(f"\nExact ground state: -1.1373 Ha")

The key takeaway: L-BFGS-B typically converges in fewer optimizer steps, but each step requires 2 * n_params extra circuit evaluations for the gradient. For small ansatze (6 parameters), the total circuit count is similar. For large ansatze (100+ parameters), the per-step overhead of the parameter-shift rule becomes significant, which is why gradient-free methods like COBYLA and stochastic methods like SPSA remain popular.

Energy Landscape Visualization

For the 2-qubit depth-1 ansatz (2 parameters after the initial rotation layer, holding the first layer fixed), the energy landscape E(theta_0, theta_1) can be visualized directly. This visualization reveals the structure of the optimization problem: global minima, local minima, and saddle points.

import numpy as np

import matplotlib.pyplot as plt

from qiskit.circuit import QuantumCircuit, ParameterVector

from qiskit.quantum_info import SparsePauliOp

from qiskit.primitives import StatevectorEstimator

hamiltonian = SparsePauliOp.from_list([

("ZZ", -1.0523),

("IZ", 0.3979),

("ZI", -0.3979),

("XX", 0.1809),

])

# Depth-1 ansatz: 4 parameters total

# Fix the first two (initial layer) and scan the last two

def build_ansatz_depth1() -> QuantumCircuit:

theta = ParameterVector("theta", length=4)

qc = QuantumCircuit(2)

qc.ry(theta[0], 0)

qc.ry(theta[1], 1)

qc.cx(0, 1)

qc.ry(theta[2], 0)

qc.ry(theta[3], 1)

return qc

ansatz = build_ansatz_depth1()

estimator = StatevectorEstimator()

# Fix initial layer parameters to pi/4

fixed_params = [np.pi / 4, np.pi / 4]

# Scan over the two variational parameters

n_points = 60

theta0_range = np.linspace(-np.pi, np.pi, n_points)

theta1_range = np.linspace(-np.pi, np.pi, n_points)

energy_grid = np.zeros((n_points, n_points))

# Batch all evaluations for efficiency

all_params = []

for i, t0 in enumerate(theta0_range):

for j, t1 in enumerate(theta1_range):

all_params.append(fixed_params + [t0, t1])

pub = (ansatz, hamiltonian, all_params)

results = estimator.run([pub]).result()

evs = results[0].data.evs

for idx in range(len(all_params)):

i = idx // n_points

j = idx % n_points

energy_grid[i, j] = evs[idx]

# Plot the energy landscape

fig, ax = plt.subplots(figsize=(8, 6))

im = ax.imshow(

energy_grid.T,

extent=[-np.pi, np.pi, -np.pi, np.pi],

origin="lower",

aspect="equal",

cmap="RdYlBu_r",

)

cbar = fig.colorbar(im, ax=ax, label="Energy (Ha)")

ax.set_xlabel(r"$\theta_2$")

ax.set_ylabel(r"$\theta_3$")

ax.set_title("VQE Energy Landscape (2-qubit H2, depth-1 ansatz)")

# Mark the global minimum

min_idx = np.unravel_index(np.argmin(energy_grid), energy_grid.shape)

min_t0 = theta0_range[min_idx[0]]

min_t1 = theta1_range[min_idx[1]]

min_energy = energy_grid[min_idx]

ax.plot(min_t0, min_t1, "k*", markersize=15, label=f"Min: {min_energy:.4f} Ha")

ax.legend(loc="upper right")

plt.tight_layout()

plt.savefig("vqe_energy_landscape.png", dpi=150)

plt.show()

print(f"Global minimum energy: {min_energy:.6f} Ha at theta=({min_t0:.3f}, {min_t1:.3f})")

The landscape for H2 is relatively smooth with a clear global minimum near -1.137 Ha. Larger molecules produce landscapes with many local minima and large flat regions (barren plateaus), making optimization much harder. This visualization explains why COBYLA needs multiple random restarts for larger problems and why barren plateaus manifest as flat regions where the optimizer receives no useful gradient signal.

Comparison of Optimizers for VQE

Different optimizers make different tradeoffs between per-step cost, robustness to noise, and convergence speed. The following code runs the same VQE problem with five different optimizers and compares their performance:

import numpy as np

from scipy.optimize import minimize

from qiskit.circuit import QuantumCircuit, ParameterVector

from qiskit.quantum_info import SparsePauliOp

from qiskit.primitives import StatevectorEstimator

hamiltonian = SparsePauliOp.from_list([

("ZZ", -1.0523),

("IZ", 0.3979),

("ZI", -0.3979),

("XX", 0.1809),

])

def build_ansatz(num_qubits: int, depth: int) -> QuantumCircuit:

theta = ParameterVector("theta", length=num_qubits * (depth + 1))

qc = QuantumCircuit(num_qubits)

idx = 0

for q in range(num_qubits):

qc.ry(theta[idx], q)

idx += 1

for _ in range(depth):

for q in range(num_qubits - 1):

qc.cx(q, q + 1)

for q in range(num_qubits):

qc.ry(theta[idx], q)

idx += 1

return qc

ansatz = build_ansatz(num_qubits=2, depth=2)

estimator = StatevectorEstimator()

def eval_energy(params: np.ndarray) -> float:

pub = (ansatz, hamiltonian, [params.tolist()])

result = estimator.run([pub]).result()

return float(result[0].data.evs[0])

def parameter_shift_gradient(params: np.ndarray) -> np.ndarray:

n_params = len(params)

gradient = np.zeros(n_params)

shift = np.pi / 2

for i in range(n_params):

params_plus = params.copy()

params_minus = params.copy()

params_plus[i] += shift

params_minus[i] -= shift

gradient[i] = (eval_energy(params_plus) - eval_energy(params_minus)) / 2.0

return gradient

# Fixed seed for reproducibility

np.random.seed(42)

initial_params = np.random.uniform(-np.pi, np.pi, ansatz.num_parameters)

# Define optimizers to compare

optimizers = {

"COBYLA": {"method": "COBYLA", "options": {"maxiter": 500, "rhobeg": 0.5}},

"Nelder-Mead": {"method": "Nelder-Mead", "options": {"maxiter": 500}},

"SLSQP": {"method": "SLSQP", "options": {"maxiter": 200}},

"L-BFGS-B": {"method": "L-BFGS-B", "jac": parameter_shift_gradient, "options": {"maxiter": 100}},

"Powell": {"method": "Powell", "options": {"maxiter": 500}},

}

print(f"{'Optimizer':<14} {'Final Energy (Ha)':>18} {'Func Evals':>12} {'Converged?':>12}")

print("-" * 60)

for name, opt_kwargs in optimizers.items():

result = minimize(eval_energy, initial_params.copy(), **opt_kwargs)

converged = abs(result.fun - (-1.1373)) < 0.001

print(f"{name:<14} {result.fun:>18.6f} {result.nfev:>12} {'Yes' if converged else 'No':>12}")

print(f"\nExact ground state: -1.1373 Ha")

print(f"Chemical accuracy threshold: 0.0016 Ha")

Typical results for this problem:

| Optimizer | Type | Final Energy (Ha) | Func Evals | Hardware-friendly? |

|---|---|---|---|---|

| COBYLA | Gradient-free | -1.1373 | 200-400 | Yes |

| Nelder-Mead | Gradient-free | -1.1373 | 200-500 | Yes |

| SLSQP | Gradient (finite diff) | -1.1373 | 50-150 | No (needs exact gradients) |

| L-BFGS-B | Gradient (param-shift) | -1.1373 | 30-80 | Moderate |

| Powell | Gradient-free | -1.1373 | 100-300 | Yes |

For noiseless simulation, all optimizers find the ground state. The differences emerge on real hardware, where COBYLA and SPSA (a stochastic gradient method not included above, but widely used) tolerate noisy energy evaluations better than gradient-based methods that require precise function values.

VQE with Noise

On real quantum hardware, gate errors and decoherence corrupt every circuit execution. The following code runs VQE on a noisy simulator to show how noise affects convergence and final accuracy:

import numpy as np

from scipy.optimize import minimize

from qiskit.circuit import QuantumCircuit, ParameterVector

from qiskit.quantum_info import SparsePauliOp

from qiskit.primitives import StatevectorEstimator

from qiskit_aer import AerSimulator

from qiskit_aer.noise import NoiseModel, depolarizing_error

from qiskit_aer.primitives import EstimatorV2 as AerEstimator

hamiltonian = SparsePauliOp.from_list([

("ZZ", -1.0523),

("IZ", 0.3979),

("ZI", -0.3979),

("XX", 0.1809),

])

def build_ansatz(num_qubits: int, depth: int) -> QuantumCircuit:

theta = ParameterVector("theta", length=num_qubits * (depth + 1))

qc = QuantumCircuit(num_qubits)

idx = 0

for q in range(num_qubits):

qc.ry(theta[idx], q)

idx += 1

for _ in range(depth):

for q in range(num_qubits - 1):

qc.cx(q, q + 1)

for q in range(num_qubits):

qc.ry(theta[idx], q)

idx += 1

return qc

ansatz = build_ansatz(num_qubits=2, depth=2)

# Build a noise model: 1% depolarizing error on CX gates, 0.1% on single-qubit gates

noise_model = NoiseModel()

noise_model.add_all_qubit_quantum_error(depolarizing_error(0.01, 2), ["cx"])

noise_model.add_all_qubit_quantum_error(depolarizing_error(0.001, 1), ["ry", "rx", "rz"])

# Ideal estimator (noiseless)

ideal_estimator = StatevectorEstimator()

# Noisy estimator

noisy_backend = AerSimulator(noise_model=noise_model)

noisy_estimator = AerEstimator.from_backend(noisy_backend)

# Track convergence for both

ideal_energies = []

noisy_energies = []

def make_cost_fn(est, tracker):

def cost_function(params: np.ndarray) -> float:

pub = (ansatz, hamiltonian, [params.tolist()])

result = est.run([pub]).result()

energy = float(result[0].data.evs[0])

tracker.append(energy)

return energy

return cost_function

np.random.seed(42)

initial_params = np.random.uniform(-np.pi, np.pi, ansatz.num_parameters)

# Run ideal VQE

ideal_result = minimize(

make_cost_fn(ideal_estimator, ideal_energies),

initial_params.copy(),

method="COBYLA",

options={"maxiter": 300, "rhobeg": 0.5},

)

# Run noisy VQE

noisy_result = minimize(

make_cost_fn(noisy_estimator, noisy_energies),

initial_params.copy(),

method="COBYLA",

options={"maxiter": 300, "rhobeg": 0.5},

)

print("=== Ideal (statevector) ===")

print(f" Final energy: {ideal_result.fun:.6f} Ha")

print(f" Error: {abs(ideal_result.fun - (-1.1373)):.6f} Ha")

print("\n=== Noisy (1% CX depolarizing) ===")

print(f" Final energy: {noisy_result.fun:.6f} Ha")

print(f" Error: {abs(noisy_result.fun - (-1.1373)):.6f} Ha")

print(f" Noise floor: ~{abs(noisy_result.fun - ideal_result.fun):.4f} Ha above ideal")

print(f"\nChemical accuracy threshold: 0.0016 Ha")

if abs(noisy_result.fun - (-1.1373)) > 0.0016:

print("Noisy VQE is OUTSIDE chemical accuracy.")

else:

print("Noisy VQE is within chemical accuracy.")

The noisy VQE converges to a higher energy than the ideal simulation. This gap is the noise floor. With 1% CX depolarizing error, the noise floor typically exceeds chemical accuracy (1.6 mHa), which means the noisy result is not chemically useful even though the optimizer converged.

On real hardware, the noise floor depends on circuit depth, gate fidelity, and measurement error. Error mitigation techniques (zero-noise extrapolation, probabilistic error cancellation, M3 measurement mitigation) can push the noise floor lower, but they add significant classical post-processing overhead and their own systematic biases. For production quantum chemistry, fault-tolerant hardware will eventually replace VQE for systems large enough to be classically intractable.

Convergence Analysis: Gradient-Free vs. Gradient-Based

Plotting the energy at each optimization step reveals how different optimizers navigate the energy landscape:

import numpy as np

import matplotlib.pyplot as plt

from scipy.optimize import minimize

from qiskit.circuit import QuantumCircuit, ParameterVector

from qiskit.quantum_info import SparsePauliOp

from qiskit.primitives import StatevectorEstimator

hamiltonian = SparsePauliOp.from_list([

("ZZ", -1.0523),

("IZ", 0.3979),

("ZI", -0.3979),

("XX", 0.1809),

])

def build_ansatz(num_qubits: int, depth: int) -> QuantumCircuit:

theta = ParameterVector("theta", length=num_qubits * (depth + 1))

qc = QuantumCircuit(num_qubits)

idx = 0

for q in range(num_qubits):

qc.ry(theta[idx], q)

idx += 1

for _ in range(depth):

for q in range(num_qubits - 1):

qc.cx(q, q + 1)

for q in range(num_qubits):

qc.ry(theta[idx], q)

idx += 1

return qc

ansatz = build_ansatz(num_qubits=2, depth=2)

estimator = StatevectorEstimator()

n_params = ansatz.num_parameters

def eval_energy(params):

pub = (ansatz, hamiltonian, [params.tolist()])

result = estimator.run([pub]).result()

return float(result[0].data.evs[0])

def parameter_shift_gradient(params):

gradient = np.zeros(n_params)

shift = np.pi / 2

for i in range(n_params):

p_plus = params.copy()

p_minus = params.copy()

p_plus[i] += shift

p_minus[i] -= shift

gradient[i] = (eval_energy(p_plus) - eval_energy(p_minus)) / 2.0

return gradient

np.random.seed(42)

initial_params = np.random.uniform(-np.pi, np.pi, n_params)

# Track energy at each evaluation

cobyla_trace = []

bfgs_trace = []

def cobyla_cost(params):

e = eval_energy(params)

cobyla_trace.append(e)

return e

def bfgs_cost(params):

e = eval_energy(params)

bfgs_trace.append(e)

return e

minimize(cobyla_cost, initial_params.copy(), method="COBYLA",

options={"maxiter": 300, "rhobeg": 0.5})

minimize(bfgs_cost, initial_params.copy(), method="L-BFGS-B",

jac=parameter_shift_gradient, options={"maxiter": 100})

# Plot convergence

fig, axes = plt.subplots(1, 2, figsize=(14, 5))

axes[0].plot(cobyla_trace, "b-", linewidth=0.8)

axes[0].axhline(y=-1.1373, color="r", linestyle="--", label="Exact ground state")

axes[0].set_xlabel("Function evaluation")

axes[0].set_ylabel("Energy (Ha)")

axes[0].set_title(f"COBYLA: {len(cobyla_trace)} evaluations")

axes[0].legend()

axes[1].plot(bfgs_trace, "g-", linewidth=0.8)

axes[1].axhline(y=-1.1373, color="r", linestyle="--", label="Exact ground state")

axes[1].set_xlabel("Function evaluation")

axes[1].set_ylabel("Energy (Ha)")

axes[1].set_title(f"L-BFGS-B: {len(bfgs_trace)} evals + gradient overhead")

axes[1].legend()

plt.tight_layout()

plt.savefig("vqe_convergence_comparison.png", dpi=150)

plt.show()

# Cost analysis

cobyla_total = len(cobyla_trace)

bfgs_total = len(bfgs_trace) # function evals only; gradient evals add 2*n_params each

print(f"COBYLA total circuit evaluations: {cobyla_total}")

print(f"L-BFGS-B function evaluations: {bfgs_total}")

print(f"L-BFGS-B per gradient step: {2 * n_params} extra evaluations")

print(f"Note: L-BFGS-B uses fewer steps but each gradient costs {2 * n_params} circuits")

Both optimizers reach the same final energy from the same starting point. COBYLA uses more optimizer steps but each step requires only 1 circuit evaluation. L-BFGS-B uses fewer steps but pays 2 * n_params = 12 extra circuit evaluations per step for the parameter-shift gradient. For this 6-parameter problem, the total circuit counts are comparable. The advantage of gradient-based methods grows for problems with smooth landscapes, while COBYLA’s advantage grows for noisy or jagged landscapes.

Symmetry Reduction

The H2 Hamiltonian in the STO-3G basis starts with 4 qubits (one per spin-orbital: sigma-alpha, sigma-beta, sigma*-alpha, sigma*-beta). The full 4-qubit qubit Hamiltonian under the Jordan-Wigner mapping has 15 Pauli terms.

Two symmetries allow a reduction to 2 qubits:

Particle number conservation: H2 has exactly 2 electrons distributed among 4 spin-orbitals. The total number operator N = sum_i n_i commutes with H, so the Hamiltonian is block-diagonal in sectors of fixed particle number. We only need the N=2 sector.

Spin symmetry: The two electrons in H2 are one alpha-spin and one beta-spin (S_z = 0). The z-component of spin commutes with H, so we can further restrict to the S_z = 0 sector.

The ParityMapper in Qiskit Nature implements this reduction. It identifies the two qubits that encode these conserved quantities, fixes their values, and removes them from the problem:

4 qubits, 15 Pauli terms --> ParityMapper --> 2 qubits, 5 Pauli terms

(The 5-term Hamiltonian simplifies further to 4 non-trivial terms plus a constant energy offset that is absorbed into the coefficient.)

The benefits of symmetry reduction are significant:

- Shallower circuits: Fewer qubits means fewer entangling gates. For a hardware-efficient ansatz, the CNOT count scales with qubit count, so halving the qubits roughly halves the circuit depth.

- Better trainability: Barren plateau severity scales exponentially with qubit count. Going from 4 to 2 qubits improves gradient variance by a factor of roughly 4.

- Fewer measurements: Fewer Pauli terms in the Hamiltonian means fewer measurement bases, reducing the shot budget.

- Faster classical optimization: Fewer variational parameters means the optimizer explores a lower-dimensional landscape.

For larger molecules, symmetry reduction becomes essential. LiH goes from 12 qubits to 10 (or fewer with active space selection). BeH2 can be reduced from 14 to 6 qubits with active space methods.

Qiskit Nature Integration

For production quantum chemistry workflows, Qiskit Nature provides the full pipeline from molecular geometry to qubit Hamiltonian to VQE. The following code shows the Qiskit Nature approach, which replaces 40+ lines of manual Hamiltonian construction with a clean 5-line setup:

# NOTE: Requires qiskit-nature >= 0.7 and pyscf

# pip install qiskit-nature[pyscf]

from qiskit_nature.second_q.drivers import PySCFDriver

from qiskit_nature.second_q.mappers import ParityMapper

from qiskit_nature.second_q.circuit.library import UCCSD, HartreeFock

from qiskit_nature.second_q.algorithms import GroundStateEigensolver

from qiskit.primitives import StatevectorEstimator

from qiskit_algorithms import VQE

from qiskit_algorithms.optimizers import COBYLA

# Step 1: Define the molecule and compute the fermionic Hamiltonian

driver = PySCFDriver(atom="H 0.0 0.0 0.0; H 0.0 0.0 0.735", basis="sto-3g")

problem = driver.run()

# Step 2: Map to qubit operator with parity reduction (4 qubits -> 2 qubits)

mapper = ParityMapper(num_particles=problem.num_particles)

# Step 3: Build the UCCSD ansatz with Hartree-Fock initial state

ansatz = UCCSD(

num_spatial_orbitals=problem.num_spatial_orbitals,

num_particles=problem.num_particles,

qubit_mapper=mapper,

initial_state=HartreeFock(

num_spatial_orbitals=problem.num_spatial_orbitals,

num_particles=problem.num_particles,

qubit_mapper=mapper,

),

)

# Step 4: Run VQE

estimator = StatevectorEstimator()

optimizer = COBYLA(maxiter=500)

vqe = VQE(estimator=estimator, ansatz=ansatz, optimizer=optimizer)

# Step 5: Solve

solver = GroundStateEigensolver(mapper, vqe)

result = solver.solve(problem)

print(result)

This approach handles all the complexity automatically: computing one-electron and two-electron integrals via PySCF, constructing the second-quantized Hamiltonian, applying the Jordan-Wigner (or Parity) mapping, performing symmetry reduction, and building the physically-motivated UCCSD ansatz. The output includes the total ground state energy, the electronic energy, the nuclear repulsion energy, and the optimized circuit parameters.

For learning purposes, the manual approach shown earlier in this tutorial builds intuition. For actual research or production use, Qiskit Nature is the correct tool.

Potential Energy Surface Scanning

One of VQE’s most useful applications is computing the potential energy surface (PES): the ground state energy as a function of molecular geometry. For H2, this means varying the bond length and recording the energy at each point.

import numpy as np

import matplotlib.pyplot as plt

from scipy.optimize import minimize

from qiskit.circuit import QuantumCircuit, ParameterVector

from qiskit.quantum_info import SparsePauliOp

from qiskit.primitives import StatevectorEstimator

# Pre-computed H2 Hamiltonians at different bond lengths (STO-3G, parity-reduced)

# Format: bond_length_angstrom -> (ZZ_coeff, IZ_coeff, ZI_coeff, XX_coeff)

h2_hamiltonians = {

0.5: (-0.8242, 0.5449, -0.5449, 0.1476),

0.6: (-0.9174, 0.4812, -0.4812, 0.1659),

0.7: (-0.9906, 0.4275, -0.4275, 0.1758),

0.735: (-1.0523, 0.3979, -0.3979, 0.1809),

0.8: (-1.0466, 0.3826, -0.3826, 0.1783),

1.0: (-1.0988, 0.3131, -0.3131, 0.1745),

1.2: (-1.1182, 0.2597, -0.2597, 0.1614),

1.5: (-1.1126, 0.1975, -0.1975, 0.1340),

2.0: (-1.0729, 0.1343, -0.1343, 0.0903),

2.5: (-1.0401, 0.0953, -0.0953, 0.0573),

3.0: (-1.0196, 0.0709, -0.0709, 0.0365),

}

def build_ansatz() -> QuantumCircuit:

theta = ParameterVector("theta", length=6)

qc = QuantumCircuit(2)

idx = 0

for q in range(2):

qc.ry(theta[idx], q)

idx += 1

for _ in range(2):

qc.cx(0, 1)

for q in range(2):

qc.ry(theta[idx], q)

idx += 1

return qc

ansatz = build_ansatz()

estimator = StatevectorEstimator()

bond_lengths = sorted(h2_hamiltonians.keys())

vqe_energies = []

exact_energies = []

# Also store nuclear repulsion for total energy

# E_nuc = 1 / R (in Hartree, with R in Bohr; 1 angstrom = 1.8897 Bohr)

def nuclear_repulsion(r_angstrom):

r_bohr = r_angstrom * 1.8897259886

return 1.0 / r_bohr

for r in bond_lengths:

zz, iz, zi, xx = h2_hamiltonians[r]

ham = SparsePauliOp.from_list([("ZZ", zz), ("IZ", iz), ("ZI", zi), ("XX", xx)])

def cost_fn(params, h=ham):

pub = (ansatz, h, [params.tolist()])

result = estimator.run([pub]).result()

return float(result[0].data.evs[0])

# Multiple random restarts to avoid local minima

best_energy = np.inf

for trial in range(5):

np.random.seed(trial)

x0 = np.random.uniform(-np.pi, np.pi, ansatz.num_parameters)

res = minimize(cost_fn, x0, method="COBYLA", options={"maxiter": 500})

if res.fun < best_energy:

best_energy = res.fun

total_energy = best_energy + nuclear_repulsion(r)

vqe_energies.append(total_energy)

# Exact diagonalization for comparison

exact_eigenvalues = np.linalg.eigvalsh(ham.to_matrix().toarray())

exact_energies.append(exact_eigenvalues[0] + nuclear_repulsion(r))

# Plot the potential energy surface

plt.figure(figsize=(10, 6))

plt.plot(bond_lengths, exact_energies, "k-", linewidth=2, label="Exact (FCI)")

plt.plot(bond_lengths, vqe_energies, "ro", markersize=8, label="VQE")

plt.xlabel("Bond length (angstrom)")

plt.ylabel("Total energy (Ha)")

plt.title("H2 Potential Energy Surface: VQE vs Exact")

plt.legend()

plt.grid(True, alpha=0.3)

# Mark equilibrium

eq_idx = np.argmin(exact_energies)

plt.annotate(

f"Equilibrium: {bond_lengths[eq_idx]} A\n{exact_energies[eq_idx]:.4f} Ha",

xy=(bond_lengths[eq_idx], exact_energies[eq_idx]),

xytext=(1.5, exact_energies[eq_idx] + 0.05),

arrowprops=dict(arrowstyle="->"),

fontsize=10,

)

plt.tight_layout()

plt.savefig("h2_pes_scan.png", dpi=150)

plt.show()

# Print numerical comparison

print(f"{'Bond (A)':>10} {'VQE (Ha)':>12} {'Exact (Ha)':>12} {'Error (mHa)':>12}")

print("-" * 50)

for r, vqe_e, exact_e in zip(bond_lengths, vqe_energies, exact_energies):

error_mha = abs(vqe_e - exact_e) * 1000

print(f"{r:>10.3f} {vqe_e:>12.6f} {exact_e:>12.6f} {error_mha:>12.2f}")

The PES scan reveals several features. The energy minimum at ~0.735 angstrom corresponds to the equilibrium bond length. At shorter distances, nuclear repulsion dominates and the energy rises steeply. At longer distances, the molecule dissociates and the energy approaches the sum of two isolated hydrogen atom energies. VQE should reproduce the exact curve at all bond lengths for this 2-qubit problem, though larger molecules show increasing VQE error at the dissociation limit where static correlation becomes strong.

Barren Plateaus

As the number of qubits and circuit depth grows, the gradient of the cost function with respect to any single parameter shrinks exponentially:

Var[d E / d theta_k] ~ O(2^{-n})

This phenomenon is called a barren plateau. The loss landscape becomes nearly flat everywhere, and gradient-based optimizers stall because every direction looks equally bad. Gradient-free methods like COBYLA also struggle because the signal disappears into shot noise.

Three practical mitigations exist:

Local cost functions measure only subsystems rather than the full system, preserving gradient information at the cost of some expressibility. A local cost function for VQE might measure each Pauli term independently rather than computing a global expectation value, which is already the standard approach in VQE implementations.

Structured ansatze rooted in domain knowledge (like UCCSD) avoid the randomly parameterized layers that cause barren plateaus. The key insight is that barren plateaus arise when the ansatz forms an approximate 2-design over the unitary group. Chemically-motivated ansatze restrict the search space to a physically relevant subspace, avoiding this behavior.

Warm starting initializes parameters near a known good solution, such as a classical mean-field (Hartree-Fock) result, rather than at random. For VQE, this means setting initial parameters so that the circuit prepares a state close to the Hartree-Fock state, then allowing the optimizer to refine from there. The gradient signal is strong near the Hartree-Fock solution because the state has low entanglement.

Common Mistakes

These are the most frequent errors when implementing VQE, along with how to avoid them:

1. Using the full qubit Hamiltonian without symmetry reduction. The H2 molecule in STO-3G has 4 qubits before symmetry reduction and only 2 after. Running VQE on the full 4-qubit Hamiltonian doubles the circuit depth, increases the parameter count, and worsens trainability by roughly a factor of 4 in gradient variance. Always apply ParityMapper or TaperedQubitMapper before building the ansatz.

2. Not using multiple random restarts. COBYLA and other local optimizers can get stuck in local minima, especially for deeper ansatze. Always run VQE from 5 to 10 different random starting points and keep the lowest energy found. The computational overhead is linear (5x to 10x), but the improvement in reliability is often the difference between a converged and a stuck optimization.

3. Using too few measurement shots. With 100 shots per circuit, each expectation value has a standard deviation of roughly 0.1 Ha (depending on the operator norm). This variance overwhelms the signal for any optimizer. Use at least 1000 shots per Pauli term, and preferably 4000 to 8192 for chemically relevant accuracy. The shot budget scales as O(1/epsilon^2) where epsilon is the desired precision.

4. Confusing ansatz depth with circuit depth. The depth parameter in build_ansatz controls the number of variational layers. With depth=2, the function creates 2 entangling layers, each followed by a rotation layer. However, qc.depth() returns the total gate depth of the circuit, which includes single-qubit gates and may be larger than the variational layer count. When reporting circuit depth for hardware feasibility, use qc.depth() after transpilation, not the layer count.

5. Forgetting that COBYLA minimizes. The returned result.fun is the minimized energy, which is the ground state energy estimate. No sign flip is needed. This seems obvious, but it is a common source of confusion when comparing VQE results to reference energies that may be reported with different sign conventions.

6. Ignoring the nuclear repulsion energy. The qubit Hamiltonian from Jordan-Wigner mapping contains only the electronic energy terms. The total molecular energy requires adding the nuclear repulsion energy, which is a classical constant for a given geometry. When comparing VQE results to experimental or full-CI reference values, always verify whether the reference includes nuclear repulsion.

When VQE Works and When It Doesn’t

VQE is well-suited for small molecules (H2, LiH, BeH2) and spin lattice models where the Hamiltonian can be expressed as a compact sum of Pauli operators. It has also produced useful results for specific quantum chemistry benchmarks on real hardware.

VQE does not scale to classically-hard instances without error correction. The number of Pauli terms in a molecular Hamiltonian grows polynomially with system size, but the circuit depth required by accurate ansatze grows faster than current hardware can support without excessive noise.

| System | Qubits (reduced) | Pauli terms | VQE feasible today? |

|---|---|---|---|

| H2 | 2 | 5 | Yes |

| LiH | 10 | ~100 | Marginal |

| BeH2 | 6 (active space) | ~50 | Marginal |

| FeMoco (N-fixation) | 100+ | ~10^6 | No (requires fault tolerance) |

For production molecular VQE workflows, Qiskit Nature provides Hamiltonian construction from PySCF or PSI4, active space selection, and a full driver interface. The primitives-based pattern shown above translates directly into that framework.

Summary

| Property | Value |

|---|---|

| Algorithm type | Variational hybrid quantum-classical |

| Key primitive | Estimator (expectation value) |

| Optimizer | Gradient-free (COBYLA, Nelder-Mead) or gradient-based (SPSA, L-BFGS-B) |

| Gradient method | Parameter-shift rule (exact, 2 circuits per parameter) |

| Bottleneck | Circuit depth, barren plateaus, noise floor |

| Best use case | Small molecule ground state energy |

| Key reduction | Symmetry (parity) mapping: 4 qubits to 2 for H2 |

| Chemical accuracy | 1.6 mHa = 1 kcal/mol |

| NISQ compatible? | Yes, but noise limits accuracy to above chemical accuracy on most hardware |

VQE demonstrates that useful quantum computation does not require a fault-tolerant device. By offloading the optimization to classical hardware and keeping the quantum circuit as shallow as possible, it extracts signal from noisy qubits that would otherwise be unusable. The algorithm’s true contribution is not in solving problems beyond classical reach (it does not, for molecules accessible today), but in establishing the hybrid quantum-classical optimization framework that future fault-tolerant algorithms will build upon.

Was this tutorial helpful?