Getting Started with D-Wave Ocean SDK

Install the D-Wave Ocean SDK, formulate a simple optimization problem as a QUBO, and solve it using D-Wave's quantum annealer or simulator.

Circuit diagrams

D-Wave takes a fundamentally different approach from gate-based quantum computers. Instead of applying logic gates to qubits, D-Wave uses quantum annealing: a physical process where the system naturally evolves toward its lowest energy configuration, which corresponds to the solution of an optimization problem.

This tutorial walks through installing the Ocean SDK, understanding the physics behind quantum annealing, formulating problems as QUBOs and Ising models, and solving several optimization problems with both local simulators and real D-Wave hardware.

Gate-Based vs Quantum Annealing

Gate-based computers (IBM, Google, IonQ) execute sequences of unitary operations on qubits and can in principle run any quantum algorithm. Quantum annealers are purpose-built for optimization: finding the minimum of an objective function over binary variables.

The trade-off is specialization vs generality. Annealers are not universal quantum computers, but they can solve certain combinatorial optimization problems very naturally by mapping them to the physical Ising model.

How Quantum Annealing Works

Quantum annealing exploits quantum mechanics to search for the ground state (lowest energy configuration) of a problem Hamiltonian. The system evolves according to a time-dependent Hamiltonian:

H(s) = A(s) * H_driver + B(s) * H_problem

where s is the anneal fraction, going from 0 to 1 during the anneal. The two components are:

H_driver (the transverse-field term):

H_driver = -sum_i sigma_x_i

This is the transverse-field Ising Hamiltonian. The Pauli-X operator sigma_x_i flips qubit i, so this term drives transitions between computational basis states. Its ground state is a uniform superposition of all possible bitstrings, meaning all candidate solutions are equally represented.

H_problem (the problem Hamiltonian):

H_problem = sum_i h_i * sigma_z_i + sum_{i<j} J_ij * sigma_z_i * sigma_z_j

This encodes your optimization problem. The h_i values are local fields (biases on individual qubits) and the J_ij values are couplings between qubits. The ground state of H_problem is the solution to your optimization problem.

The anneal schedule:

- At

s = 0:A(s) >> B(s). The Hamiltonian is dominated by H_driver. The system starts in the ground state of H_driver, which is a uniform superposition of all states. Every candidate solution has equal amplitude. - During the anneal (

0 < s < 1):A(s)decreases whileB(s)increases. The problem Hamiltonian gradually takes over. - At

s = 1:A(s) << B(s). The Hamiltonian is dominated by H_problem. If the anneal was slow enough (the adiabatic condition), the system remains in the ground state throughout and ends in the ground state of H_problem, which is the optimal solution.

The key quantum effect is tunneling. During the anneal, the transverse field allows the system to tunnel through energy barriers between local minima, rather than having to climb over them as classical simulated annealing does. This is analogous to a ball passing through a hill rather than rolling over it.

D-Wave provides the specific A(s) and B(s) schedules for each processor. You can retrieve them via the Ocean SDK:

from dwave.system import DWaveSampler

# Requires LEAP credentials

sampler = DWaveSampler()

schedule = sampler.properties['anneal_schedule']

# Returns list of (s, A(s), B(s)) triples in GHz

In practice, the anneal takes about 20 microseconds by default. The system does not always reach the true ground state (the adiabatic condition requires infinitely slow annealing for hard problems), so D-Wave runs many independent anneals and returns the best results.

Installation

pip install dwave-ocean-sdk

This installs the full Ocean stack:

dimod: the core library for defining binary quadratic models (BQMs)neal: classical simulated annealing solver (free, no D-Wave account needed)dwave-system: connects to real D-Wave hardware via LEAP credentialsdwave-networkx: graph algorithms formulated as optimization problems

To use real D-Wave hardware, you also need to configure your LEAP API credentials:

dwave config create

This prompts you for your API token, which you can find in your LEAP dashboard. For this tutorial, most examples use the local SimulatedAnnealingSampler, which requires no account.

What is a QUBO?

A Quadratic Unconstrained Binary Optimization (QUBO) problem asks: find a binary vector x that minimizes

E(x) = sum_i Q_ii * x_i + sum_{i<j} Q_ij * x_i * x_j

where each x_i is 0 or 1. The matrix Q defines the objective. On D-Wave hardware, this maps directly onto the physical qubits and couplers of the Advantage processor.

The Ising Model: QUBO’s Spin Cousin

D-Wave hardware natively operates in the Ising model formulation, which uses spin variables s_i in {-1, +1} instead of binary variables x_i in {0, 1}. The Ising energy function is:

E_Ising(s) = sum_i h_i * s_i + sum_{i<j} J_ij * s_i * s_j

where h_i are the local fields (biases) and J_ij are the coupling strengths between spins.

Conversion between QUBO and Ising:

The two formulations are related by the substitution x_i = (1 + s_i) / 2, or equivalently s_i = 2*x_i - 1. This is a simple linear mapping:

x_i = 0corresponds tos_i = -1x_i = 1corresponds tos_i = +1

Starting from a QUBO matrix Q, the Ising parameters are:

h_i = Q_ii / 2 + sum_{j != i} Q_ij / 4

J_ij = Q_ij / 4

offset = sum_i Q_ii / 2 + sum_{i<j} Q_ij / 4

The offset is a constant energy shift that does not affect the optimal solution.

Ocean handles this conversion automatically. You can build a model in either formulation and switch freely:

import dimod

# Build a BQM in QUBO (binary) form

bqm = dimod.BinaryQuadraticModel({'a': -1, 'b': -1}, {('a', 'b'): 2}, 0.0, 'BINARY')

# Convert to Ising form

h, J, offset = bqm.to_ising()

print(f"h (local fields): {h}")

print(f"J (couplings): {J}")

print(f"offset: {offset}")

# Build a BQM directly from Ising parameters

bqm_ising = dimod.BinaryQuadraticModel.from_ising(h, J, offset)

When you submit a QUBO to DWaveSampler, Ocean converts it to the Ising form for the QPU and converts the results back. You never need to do this conversion manually, but understanding it helps when reading D-Wave documentation and debugging embedding issues.

Formulating a Number Partitioning Problem

The number partitioning problem: given a set of numbers, divide them into two groups such that their sums are as close as possible. This is NP-hard in general.

For numbers [3, 1, 2, 5, 4], we want to find a binary assignment where group 0 and group 1 have equal sums (total = 15, so each group should sum to 7.5, meaning we want sums of 7 and 8).

Deriving the QUBO Step by Step

Let n_i be the i-th number in our set, and x_i in {0, 1} be the binary variable indicating which group number i belongs to. Define group A as the numbers where x_i = 0 and group B as the numbers where x_i = 1.

Step 1: Write the objective. We want to minimize the squared difference between the two group sums:

minimize (sum_A - sum_B)^2

Each number n_i contributes +n_i if x_i = 0 (group A) or -n_i if x_i = 1 (group B). So the contribution of number i to (sum_A - sum_B) is n_i * (1 - 2*x_i). This gives:

(sum_A - sum_B) = sum_i n_i * (1 - 2*x_i)

Step 2: Expand the square.

(sum_i n_i * (1 - 2*x_i))^2

= (sum_i n_i - 2 * sum_i n_i * x_i)^2

= (total - 2 * sum_i n_i * x_i)^2

Let S = sum_i n_i * x_i (the sum of numbers in group B). Then:

(total - 2*S)^2 = total^2 - 4 * total * S + 4 * S^2

Step 3: Expand S and S^2 in terms of x_i.

S = sum_i n_i * x_i

S^2 = (sum_i n_i * x_i)^2 = sum_i n_i^2 * x_i^2 + 2 * sum_{i<j} n_i * n_j * x_i * x_j

Since x_i is binary, x_i^2 = x_i. So:

S^2 = sum_i n_i^2 * x_i + 2 * sum_{i<j} n_i * n_j * x_i * x_j

Step 4: Substitute back and collect terms.

E(x) = total^2 - 4 * total * sum_i n_i * x_i + 4 * (sum_i n_i^2 * x_i + 2 * sum_{i<j} n_i * n_j * x_i * x_j)

Grouping by type:

- Constant:

total^2(does not affect the minimum, drop it) - Linear:

(-4 * total * n_i + 4 * n_i^2) * x_i = 4 * n_i * (n_i - total) * x_i - Quadratic:

8 * n_i * n_j * x_i * x_j

Dividing everything by 4 (a positive scalar does not change the minimizer):

Q_ii = n_i * (n_i - total)

Q_ij = 2 * n_i * n_j (for i < j)

This is the QUBO formulation. The factor-of-4 simplification is valid because multiplying the entire objective by a positive constant preserves the optimal solution.

Building the QUBO in Code

import dimod

# The set of numbers to partition

numbers = [3, 1, 2, 5, 4]

n = len(numbers)

# QUBO formulation derived above:

# Q_ii = n_i * (n_i - total) (linear terms, on diagonal)

# Q_ij = 2 * n_i * n_j (quadratic terms, off-diagonal)

total = sum(numbers)

Q = {}

for i in range(n):

# Diagonal (linear) terms

Q[(i, i)] = numbers[i] * (numbers[i] - total)

for j in range(i + 1, n):

# Off-diagonal (quadratic) terms

Q[(i, j)] = 2 * numbers[i] * numbers[j]

print("QUBO matrix (non-zero entries):")

for (i, j), val in Q.items():

print(f" Q[{i},{j}] = {val}")

The ability to derive QUBOs from scratch is essential. Many real problems do not have a pre-built QUBO library function, and you will need to follow exactly this process: write the objective, expand, collect terms by degree, and read off the Q matrix.

Solving with the Local Simulated Annealing Sampler

No D-Wave account is needed to use SimulatedAnnealingSampler. It runs classical simulated annealing on your CPU and is useful for development and testing.

from neal import SimulatedAnnealingSampler

sampler = SimulatedAnnealingSampler()

# Run 1000 reads (independent annealing runs)

sampleset = sampler.sample_qubo(Q, num_reads=1000)

# The sampleset contains all 1000 solutions sorted by energy

best = sampleset.first

print(f"\nBest solution: {best.sample}")

print(f"Energy: {best.energy}")

# Decode the result

assignment = best.sample

group_a = [numbers[i] for i, val in assignment.items() if val == 0]

group_b = [numbers[i] for i, val in assignment.items() if val == 1]

print(f"\nGroup A: {group_a}, sum = {sum(group_a)}")

print(f"Group B: {group_b}, sum = {sum(group_b)}")

# Group A: [3, 4], sum = 7

# Group B: [1, 2, 5], sum = 8 -- optimal partition

Understanding the SampleSet in Depth

The SampleSet object is the core result type in Ocean. It contains every solution sampled, along with energies and metadata.

# Look at the top 5 solutions

print(sampleset.truncate(5).to_pandas_dataframe())

# Energy distribution

energies = sampleset.record.energy.tolist()

print(f"Min energy: {min(energies)}")

print(f"Mean energy: {sum(energies)/len(energies):.1f}")

print(f"Times optimal found: {energies.count(min(energies))}")

Lower energy always means a better solution. The ground state energy (E=0 for a perfectly balanced partition) corresponds to the optimal answer.

The record array

The sampleset.record is a structured NumPy array with the following fields:

import numpy as np

# Each row in the record contains:

# - sample: the binary variable assignments

# - energy: the QUBO/Ising energy of the sample

# - num_occurrences: how many times this exact solution appeared

print(f"Record dtype: {sampleset.record.dtype}")

print(f"Number of unique samples: {len(sampleset.record)}")

print(f"Total reads: {sum(sampleset.record.num_occurrences)}")

Variable labels and data vectors

# Variable labels (in the order they appear in the record)

print(f"Variables: {sampleset.variables}")

# Additional per-sample data (timing, chain break info on real hardware)

print(f"Data vectors: {list(sampleset.data_vectors.keys())}")

Timing information on real hardware

When you run on a real D-Wave QPU, the sampleset.info dictionary contains detailed timing breakdown:

# Example timing info from a real QPU run (values in microseconds):

# {

# 'timing': {

# 'qpu_sampling_time': 21048.0, # total time on QPU

# 'qpu_anneal_time_per_sample': 20.0, # anneal duration per read

# 'qpu_readout_time_per_sample': 37.8, # measurement time per read

# 'qpu_access_time': 25311.2, # total QPU access including overhead

# 'total_post_processing_time': 312.0, # classical post-processing

# 'qpu_programming_time': 8183.2, # time to program the QPU

# }

# }

The QPU access time (anneal + readout) is typically 20 to 200 microseconds per sample. The total wall-clock time also includes network latency and queue waiting, which can range from seconds to minutes depending on system load.

Using a BinaryQuadraticModel Directly

Ocean’s dimod library also provides a higher-level BinaryQuadraticModel (BQM) class that is easier to work with for larger problems:

import dimod

from neal import SimulatedAnnealingSampler

numbers = [3, 1, 2, 5, 4]

n = len(numbers)

total = sum(numbers)

# Build the same problem as a BQM

bqm = dimod.BinaryQuadraticModel(vartype='BINARY')

for i in range(n):

bqm.add_variable(i, numbers[i] * (numbers[i] - total))

for j in range(i + 1, n):

bqm.add_interaction(i, j, 2 * numbers[i] * numbers[j])

sampler = SimulatedAnnealingSampler()

sampleset = sampler.sample(bqm, num_reads=500)

print(sampleset.first.sample)

BQMs are interchangeable with QUBOs for the sampler API and are easier to manipulate programmatically. You can also convert between formulations:

# Convert BQM to Ising form

h, J, offset = bqm.to_ising()

print(f"Ising h: {h}")

print(f"Ising J: {J}")

print(f"Offset: {offset}")

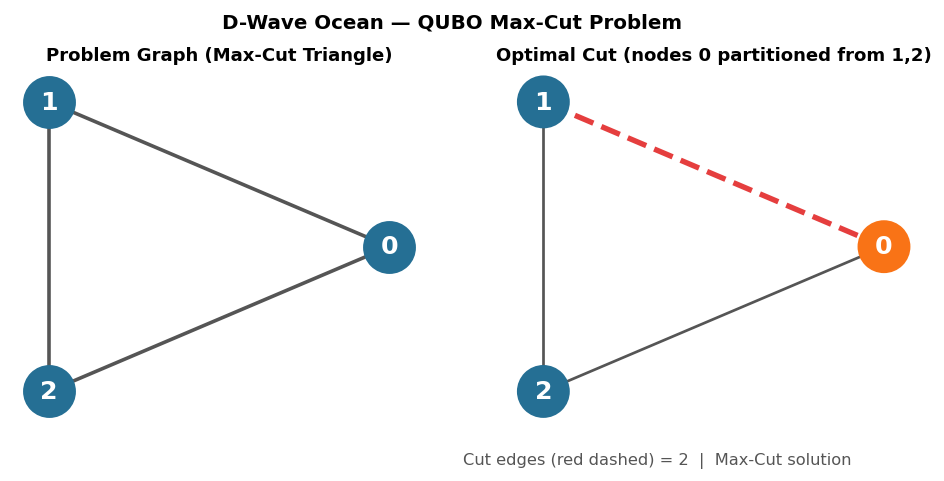

Maximum Cut (MaxCut) Problem

MaxCut is a canonical combinatorial optimization problem: given a graph G, partition the vertices into two sets S and S-bar to maximize the number of edges between S and S-bar. MaxCut is NP-hard in general but maps beautifully to QUBO.

QUBO Formulation

For each edge (i, j) with weight w_ij, the edge is “cut” when x_i != x_j. The XOR of two binary variables is x_i + x_j - 2*x_i*x_j, which equals 1 when the variables differ and 0 when they agree. So the number of cut edges is:

Cut(x) = sum_{(i,j) in E} w_ij * (x_i + x_j - 2 * x_i * x_j)

We want to maximize this, but QUBO format minimizes. So we negate the objective:

E(x) = -sum_{(i,j) in E} w_ij * (x_i + x_j - 2 * x_i * x_j)

Collecting terms:

- Linear:

Q_ii = -sum_{j: (i,j) in E} w_ij(each edge contributes-w_ijto both endpoints) - Quadratic:

Q_ij = 2 * w_ijfor each edge(i,j)

Example: 4-Vertex Cycle Graph

Consider a cycle graph with 4 vertices and edges: (0,1), (1,2), (2,3), (3,0). All edges have weight 1. The maximum cut is 4 (alternating partition: {0,2} vs {1,3}).

import dimod

from neal import SimulatedAnnealingSampler

# Define a 4-vertex cycle graph (all weights = 1)

edges = [(0, 1), (1, 2), (2, 3), (3, 0)]

# Build the QUBO

Q = {}

for i, j in edges:

# Linear terms: subtract 1 from diagonal for each endpoint

Q[(i, i)] = Q.get((i, i), 0) - 1

Q[(j, j)] = Q.get((j, j), 0) - 1

# Quadratic term: +2 for each edge

Q[(i, j)] = Q.get((i, j), 0) + 2

print("MaxCut QUBO:")

for key, val in sorted(Q.items()):

print(f" Q{key} = {val}")

# Solve

sampler = SimulatedAnnealingSampler()

sampleset = sampler.sample_qubo(Q, num_reads=1000)

best = sampleset.first

print(f"\nBest partition: {best.sample}")

print(f"Energy: {best.energy}")

# Count the cut edges

assignment = best.sample

cut_edges = sum(1 for i, j in edges if assignment[i] != assignment[j])

print(f"Cut edges: {cut_edges} out of {len(edges)}")

# Expected: 4 cut edges (optimal for a 4-cycle)

The optimal solutions are {0:0, 1:1, 2:0, 3:1} and {0:1, 1:0, 2:1, 3:0} (and their bit-flipped equivalents). Both cut all 4 edges.

Graph Coloring with dwave-networkx

The dwave_networkx library provides higher-level functions that generate QUBOs for common graph problems automatically. Graph coloring asks: assign one of k colors to each vertex such that no two adjacent vertices share the same color.

import networkx as nx

import dwave_networkx as dnx

from neal import SimulatedAnnealingSampler

# Create a Petersen graph (10 vertices, 15 edges, 3-colorable)

G = nx.petersen_graph()

# Solve the graph coloring problem

sampler = SimulatedAnnealingSampler()

coloring = dnx.min_vertex_coloring(G, sampler=sampler, num_reads=1000)

print(f"Coloring: {coloring}")

print(f"Number of colors used: {len(set(coloring.values()))}")

# Verify the coloring is valid

valid = all(coloring[u] != coloring[v] for u, v in G.edges())

print(f"Valid coloring: {valid}")

Under the hood, dnx.min_vertex_coloring constructs a QUBO that encodes two requirements: each vertex gets exactly one color, and adjacent vertices get different colors. The function handles all the QUBO construction and result decoding for you.

Penalty Terms and Constraints

Most real optimization problems have constraints (equalities or inequalities that solutions must satisfy). The standard technique for encoding constraints in a QUBO is the penalty method: add a term P * (constraint violation)^2 to the energy function, where P is a positive penalty weight.

How Penalty Terms Work

The number partitioning problem is naturally unconstrained: every binary assignment defines a valid partition. Graph coloring, however, has the constraint that each vertex must receive exactly one color.

For k colors and vertex i, we introduce binary variables x_{i,c} for each color c. The constraint is:

x_{i,0} + x_{i,1} + ... + x_{i,k-1} = 1 (exactly one color per vertex)

We encode this as a penalty:

P * (x_{i,0} + x_{i,1} + ... + x_{i,k-1} - 1)^2

Expanding for k=3:

P * (x_{i,0} + x_{i,1} + x_{i,2} - 1)^2

= P * (x_{i,0}^2 + x_{i,1}^2 + x_{i,2}^2 + 2*x_{i,0}*x_{i,1} + 2*x_{i,0}*x_{i,2} + 2*x_{i,1}*x_{i,2}

- 2*x_{i,0} - 2*x_{i,1} - 2*x_{i,2} + 1)

Since x^2 = x for binary variables:

= P * (-x_{i,0} - x_{i,1} - x_{i,2} + 2*x_{i,0}*x_{i,1} + 2*x_{i,0}*x_{i,2} + 2*x_{i,1}*x_{i,2} + 1)

This penalty equals 0 when exactly one variable is 1, and is strictly positive otherwise. The constant +1 can be dropped since it does not affect the minimum.

The conflict penalty (no two adjacent vertices share a color) adds for each edge (u, v) and each color c:

P_conflict * x_{u,c} * x_{v,c}

Choosing the Penalty Weight

The penalty weight P must be large enough that violating a constraint is never beneficial. Specifically, P must exceed the maximum possible improvement in the objective from violating a constraint. If P is too small, the solver finds lower-energy solutions that violate constraints. If P is too large, it dominates the energy landscape and makes it harder for the annealer to distinguish between feasible solutions.

A practical rule of thumb: set P to 2 to 5 times the largest coefficient in the objective part of the QUBO. Then check the returned solutions for constraint violations. If you see violations, increase P. If solution quality degrades (all solutions look the same), decrease P.

Hybrid Solvers for Larger Problems

D-Wave’s LEAP platform provides hybrid solvers that combine quantum annealing with classical heuristics. These solvers can handle problems with thousands of variables, far beyond what fits directly on the QPU.

from dwave.system import LeapHybridSampler

import dimod

import random

# Generate a larger number partitioning problem (50 numbers)

random.seed(42)

numbers = [random.randint(1, 100) for _ in range(50)]

total = sum(numbers)

n = len(numbers)

# Build the QUBO

Q = {}

for i in range(n):

Q[(i, i)] = numbers[i] * (numbers[i] - total)

for j in range(i + 1, n):

Q[(i, j)] = 2 * numbers[i] * numbers[j]

bqm = dimod.BinaryQuadraticModel.from_qubo(Q)

# Solve with LeapHybridSampler (requires LEAP account with hybrid access)

hybrid_sampler = LeapHybridSampler()

hybrid_result = hybrid_sampler.sample(bqm, label="partition-50")

best = hybrid_result.first

assignment = best.sample

group_a_sum = sum(numbers[i] for i in range(n) if assignment[i] == 0)

group_b_sum = sum(numbers[i] for i in range(n) if assignment[i] == 1)

print(f"Hybrid solver: groups sum to {group_a_sum} and {group_b_sum}")

print(f"Difference: {abs(group_a_sum - group_b_sum)}")

The hybrid solver uses a combination of techniques: it decomposes the problem, solves subproblems on the QPU, and recombines results using classical optimization. For problems that exceed the QPU’s qubit count or connectivity, the hybrid solver is the recommended approach.

You can compare the hybrid result with the classical simulated annealing sampler on the same problem:

from neal import SimulatedAnnealingSampler

sa_sampler = SimulatedAnnealingSampler()

sa_result = sa_sampler.sample(bqm, num_reads=1000)

sa_best = sa_result.first

sa_assignment = sa_best.sample

sa_group_a = sum(numbers[i] for i in range(n) if sa_assignment[i] == 0)

sa_group_b = sum(numbers[i] for i in range(n) if sa_assignment[i] == 1)

print(f"SA solver: groups sum to {sa_group_a} and {sa_group_b}")

print(f"Difference: {abs(sa_group_a - sa_group_b)}")

print(f"Hybrid energy: {best.energy}, SA energy: {sa_best.energy}")

Switching to Real D-Wave Hardware

Once you have a LEAP account (free tier available), swap the sampler:

from dwave.system import DWaveSampler, EmbeddingComposite

# EmbeddingComposite handles the minor-embedding step automatically

# (mapping your logical variables onto the physical Pegasus graph)

sampler = EmbeddingComposite(DWaveSampler())

sampleset = sampler.sample_qubo(Q, num_reads=1000, label="number-partition")

print(sampleset.first.sample)

print(sampleset.info) # includes timing and QPU access time

EmbeddingComposite automatically solves the minor-embedding problem: finding a mapping from your logical variable graph onto the physical topology of the D-Wave Advantage processor (Pegasus graph, ~5000 qubits). For small problems this is fast. For large problems it can be the bottleneck.

The Pegasus Graph and Minor Embedding

D-Wave Advantage uses the Pegasus topology: each physical qubit connects to 15 neighbors. This is far from fully connected. If your QUBO has two variables i and j with a non-zero coupling Q_ij, the corresponding physical qubits must be directly connected on the Pegasus graph. When they are not, the embedding must represent one logical variable as a chain of multiple physical qubits.

How Chains Work

A chain is a set of physical qubits that all represent the same logical variable. The physical qubits in a chain are strongly coupled together (using a negative J coupling called the chain strength) so they tend to agree on the same spin value. When all physical qubits in a chain have the same value, the chain is intact. When they disagree, a chain break has occurred, and the logical variable’s value is ambiguous.

Embedding a Fully Connected Problem

A fully connected graph on 10 variables requires every pair to be coupled. On the Pegasus graph, this requires chains of length roughly 3. You can inspect the embedding using minorminer:

import minorminer

import dwave_networkx as dnx

import networkx as nx

# Create the target Pegasus graph (same topology as Advantage)

target = dnx.pegasus_graph(16)

# Create a fully connected source graph on 10 nodes

source = nx.complete_graph(10)

# Find an embedding

embedding = minorminer.find_embedding(source, target)

# Inspect chain lengths

for logical_var, chain in embedding.items():

print(f"Variable {logical_var}: chain length {len(chain)}, "

f"physical qubits {chain}")

As your problem grows, chains get longer, consuming more physical qubits and increasing the risk of chain breaks. A 100-variable fully connected problem might require chains of length 10 or more, using most of the QPU’s 5000+ qubits just for the embedding overhead.

Chain Strength

The chain strength controls how strongly the physical qubits within a chain are coupled. Setting it correctly is critical:

- Too weak: chains break frequently, producing invalid solutions where different physical qubits in the same chain disagree.

- Too strong: the chain coupling dominates the problem Hamiltonian, making all the problem couplings look the same to the annealer. This reduces solution quality.

A safe default is to set chain strength to 2 times the maximum absolute value of any QUBO coefficient:

max_q = max(abs(v) for v in Q.values())

chain_strength = 2 * max_q

sampler = EmbeddingComposite(DWaveSampler())

sampleset = sampler.sample_qubo(Q, num_reads=1000, chain_strength=chain_strength)

You can check for chain breaks in the results:

# chain_break_fraction is available in sampleset.data_vectors on QPU runs

if 'chain_break_fraction' in sampleset.data_vectors:

breaks = sampleset.data_vectors['chain_break_fraction']

print(f"Mean chain break fraction: {sum(breaks)/len(breaks):.3f}")

print(f"Samples with no chain breaks: {sum(1 for b in breaks if b == 0)}")

If more than 10% of samples have chain breaks, increase the chain strength or simplify the problem.

Tuning Annealing Parameters

The D-Wave QPU exposes several parameters that affect solution quality and throughput.

Annealing Time

The annealing_time parameter controls how long each anneal takes, in microseconds. The default is 20 microseconds, and the range is 1 to 2000 microseconds.

Longer annealing times make the process more adiabatic, giving the system more time to track the ground state. For difficult problems with small energy gaps, longer anneals can improve solution quality. For easy problems, shorter anneals are sufficient and allow more reads within the same QPU access time.

from dwave.system import DWaveSampler, EmbeddingComposite

sampler = EmbeddingComposite(DWaveSampler())

# Compare solution quality at different annealing times

for anneal_time in [5, 20, 100, 500]:

sampleset = sampler.sample_qubo(

Q,

num_reads=500,

annealing_time=anneal_time,

label=f"anneal-{anneal_time}us"

)

energies = sampleset.record.energy

print(f"Anneal time {anneal_time:>4} us: "

f"best energy = {min(energies):.1f}, "

f"mean energy = {energies.mean():.1f}")

Number of Reads

The num_reads parameter sets the number of independent annealing runs. More reads increase the probability of finding the ground state. The maximum is typically 10,000 per call.

sampleset = sampler.sample_qubo(Q, num_reads=5000)

Combining Parameters

For production workloads, a common strategy is to first sweep annealing times on a small number of reads to find the sweet spot, then run many reads at that time:

# Step 1: Find the best annealing time (small reads for speed)

best_time = 20

best_energy = float('inf')

for t in [5, 10, 20, 50, 100, 200]:

ss = sampler.sample_qubo(Q, num_reads=100, annealing_time=t)

e = ss.first.energy

if e < best_energy:

best_energy = e

best_time = t

# Step 2: Run many reads at the best annealing time

final = sampler.sample_qubo(Q, num_reads=5000, annealing_time=best_time)

print(f"Best solution energy: {final.first.energy}")

Classical SA vs Quantum Annealing

Simulated annealing (neal.SimulatedAnnealingSampler) uses thermal fluctuations to escape local minima. Quantum annealing uses quantum tunneling. The two approaches have different strengths depending on the problem structure.

import dimod

from neal import SimulatedAnnealingSampler

import random

# Generate a random 50-variable Ising problem

random.seed(42)

n_vars = 50

h = {i: random.uniform(-1, 1) for i in range(n_vars)}

J = {}

for i in range(n_vars):

for j in range(i + 1, n_vars):

if random.random() < 0.3: # 30% connectivity (sparse)

J[(i, j)] = random.uniform(-1, 1)

bqm = dimod.BinaryQuadraticModel.from_ising(h, J)

# Solve with classical SA

sa_sampler = SimulatedAnnealingSampler()

sa_result = sa_sampler.sample(bqm, num_reads=1000)

# Analyze energy distribution

sa_energies = sa_result.record.energy

print(f"SA best energy: {min(sa_energies):.4f}")

print(f"SA mean energy: {sa_energies.mean():.4f}")

print(f"SA energy std: {sa_energies.std():.4f}")

print(f"SA times optimal: {list(sa_energies).count(min(sa_energies))}")

To compare against the QPU (requires LEAP credentials):

from dwave.system import DWaveSampler, EmbeddingComposite

qpu_sampler = EmbeddingComposite(DWaveSampler())

qpu_result = qpu_sampler.sample(bqm, num_reads=1000)

qpu_energies = qpu_result.record.energy

print(f"QPU best energy: {min(qpu_energies):.4f}")

print(f"QPU mean energy: {qpu_energies.mean():.4f}")

print(f"QPU energy std: {qpu_energies.std():.4f}")

For random sparse Ising problems, classical simulated annealing often performs comparably to D-Wave. The quantum advantage is more apparent for structured problems with tall, thin energy barriers that quantum tunneling can penetrate efficiently, such as random 3D Ising models or certain constraint satisfaction problems with a “clustered” solution landscape.

The practical advantage of D-Wave is speed: each QPU anneal takes about 20 microseconds, so 1000 reads complete in roughly 20 milliseconds of QPU time. Classical simulated annealing on a 50-variable problem might take seconds to achieve the same quality. For time-critical applications, this constant-factor speedup matters.

Common Mistakes

1. Forgetting to negate for maximization

QUBO format minimizes the energy. If your problem is a maximization (like MaxCut), you must negate the objective. Forgetting this is the most common source of wrong answers.

# WRONG: maximizes nothing, finds minimum cut instead

Q_wrong = {}

for i, j in edges:

Q_wrong[(i, i)] = Q_wrong.get((i, i), 0) + 1

Q_wrong[(j, j)] = Q_wrong.get((j, j), 0) + 1

Q_wrong[(i, j)] = Q_wrong.get((i, j), 0) - 2

# CORRECT: negate to convert maximization to minimization

Q_right = {}

for i, j in edges:

Q_right[(i, i)] = Q_right.get((i, i), 0) - 1

Q_right[(j, j)] = Q_right.get((j, j), 0) - 1

Q_right[(i, j)] = Q_right.get((i, j), 0) + 2

2. Chain strength too low

If the chain strength is smaller than the largest QUBO coefficient, chains will break frequently. A safe starting point:

chain_strength = 2 * max(abs(v) for v in Q.values())

3. Using DWaveSampler without EmbeddingComposite

DWaveSampler() expects your problem to be expressed directly in terms of physical qubit indices on the Pegasus graph. Unless you are managing your own embedding, always wrap it with EmbeddingComposite:

# WRONG: fails unless your variable labels match physical qubit indices

# and your problem graph is a subgraph of the Pegasus topology

sampler = DWaveSampler()

# CORRECT: handles embedding automatically

sampler = EmbeddingComposite(DWaveSampler())

4. Expecting the global optimum from a single run

Quantum annealing is a heuristic. It does not guarantee finding the global optimum. Always run multiple reads and take the best:

# Run many reads

sampleset = sampler.sample_qubo(Q, num_reads=1000)

# Take the best

best_solution = sampleset.first.sample

best_energy = sampleset.first.energy

For critical applications, compare results across multiple calls with different random seeds or annealing parameters.

5. Forgetting to configure credentials

Before using DWaveSampler or LeapHybridSampler, you must configure your LEAP API token:

dwave config create

This stores your credentials locally. Without this step, any call to real hardware raises an authentication error. You can verify your setup with:

dwave ping

What to Try Next

- Formulate a traveling salesman problem (TSP) as a QUBO using penalty terms for city visitation constraints

- Experiment with

dwave.inspectorto visualize the embedding and energy landscape of your problems on the QPU - Explore the

dwave-hybridframework for building custom hybrid workflows that combine classical and quantum solvers - Read about reverse annealing, which starts from a known solution and explores nearby states for local refinement

- See the Ocean SDK reference for the full sampler and BQM API

Was this tutorial helpful?