Simulated Annealing with D-Wave Ocean

Use D-Wave Ocean's SimulatedAnnealingSampler to test QUBO formulations locally before committing to quantum hardware.

Circuit diagrams

Before sending a problem to D-Wave’s quantum hardware, you should always verify your formulation works correctly using a classical solver. D-Wave Ocean’s dwave.samplers module provides a SimulatedAnnealingSampler that runs entirely on your CPU, requires no API token, and returns results in the same SampleSet format as the QPU. This makes it the ideal tool for debugging QUBO formulations and establishing a performance baseline.

What Simulated Annealing Actually Does

Simulated annealing is inspired by the physical process of slowly cooling a metal. When a blacksmith heats steel to a high temperature, the atoms move freely and can escape unfavorable configurations. As the metal cools slowly, the atoms settle into a low-energy crystal structure. Cool it too fast and you get a brittle, disordered mess. Cool it slowly and the material reaches a strong, well-ordered state near its global energy minimum.

The algorithm translates this directly into optimization. You start with a random solution and a high “temperature” parameter. At each step, you propose a small change to the current solution (flip a single variable, for instance). If the change lowers the energy, you accept it. If the change raises the energy, you still accept it with some probability that depends on the temperature: at high temperature, you accept almost any uphill move, which lets the search escape local minima. As temperature decreases over many iterations, you become increasingly selective, accepting only improvements and small uphill steps. Eventually, at near-zero temperature, the system freezes into whatever low-energy configuration it has found.

This is the same landscape-exploration strategy that quantum annealing uses, but performed classically with a random walk instead of quantum tunneling. Where a quantum annealer exploits quantum superposition to explore multiple paths through the energy landscape simultaneously, simulated annealing relies on thermal fluctuations to hop over barriers one step at a time. For many problems, both approaches find similar quality solutions. The key difference is how they scale on hard problem instances with tall, narrow energy barriers.

Why a Classical Baseline Matters

Quantum annealing is not guaranteed to find the global optimum; it is a heuristic. Without a classical reference point, you cannot tell whether a poor QPU result comes from a bad formulation or from hardware limitations like noise and limited connectivity. Simulated annealing gives you that reference. If SA cannot find good solutions to your problem, the formulation itself likely needs work, and no amount of QPU time will fix that.

Setup

pip install dwave-ocean-sdk

The SimulatedAnnealingSampler is included in the Ocean SDK via dwave.samplers. No cloud access is needed.

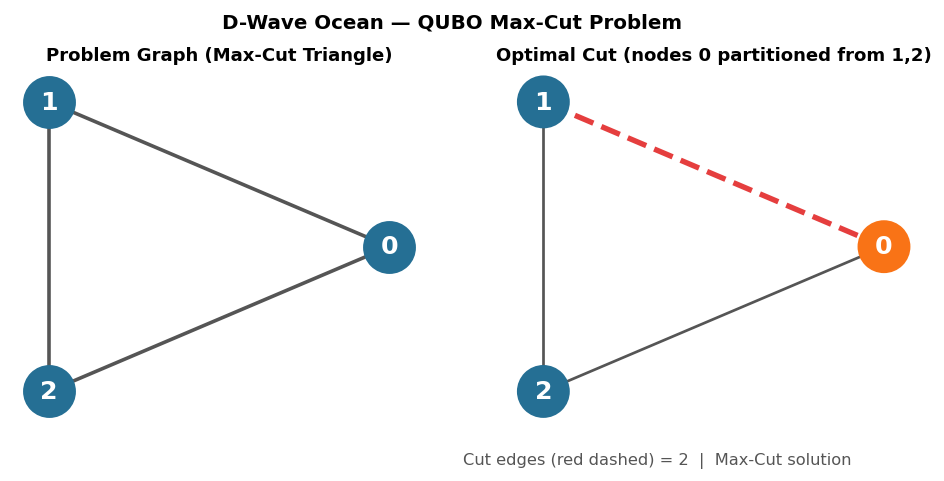

Max-Cut Example

The Max-Cut problem asks: given a graph, partition its vertices into two sets to maximize the number of edges crossing the partition. This maps naturally to a QUBO where each vertex gets a binary variable.

import dimod

from dwave.samplers import SimulatedAnnealingSampler

# Define a graph as a list of edges (unweighted)

edges = [(0, 1), (0, 3), (1, 2), (2, 3), (2, 4), (3, 4)]

# Build the QUBO for Max-Cut

# For each edge (i, j), we want x_i != x_j.

# The contribution is: -x_i - x_j + 2*x_i*x_j

# (This is minimized when x_i and x_j differ.)

Q = {}

for i, j in edges:

Q[(i, i)] = Q.get((i, i), 0) - 1

Q[(j, j)] = Q.get((j, j), 0) - 1

Q[(i, j)] = Q.get((i, j), 0) + 2

bqm = dimod.BinaryQuadraticModel.from_qubo(Q)

print(f"Problem: {bqm.num_variables} variables, {bqm.num_interactions} interactions")

Running the Sampler

sampler = SimulatedAnnealingSampler()

sampleset = sampler.sample(

bqm,

num_reads=200, # 200 independent runs

num_sweeps=1000, # 1000 sweeps per run

)

print(f"Best energy: {sampleset.first.energy}")

print(f"Best sample: {sampleset.first.sample}")

Parameter Tuning

The SimulatedAnnealingSampler has three parameters that control how thoroughly it searches the solution space. Understanding them is essential for getting good results on real problems.

num_reads: Coverage of the Solution Landscape

Each “read” is an independent run starting from a random initial configuration. Because simulated annealing is stochastic, different runs can find different solutions. More reads give you better coverage of the solution landscape.

For quick debugging, 10-50 reads is enough to verify that your QUBO formulation is correct. For production use on hard problems, 200-1000 reads is typical. The full set of results across all reads tells you something valuable: if most reads find the same best energy, the problem likely has a clear global minimum. If energies are scattered across a wide range, the landscape is rough and you may need more sweeps per run.

num_sweeps: Thoroughness per Run

A “sweep” is a single pass through all variables, proposing one flip per variable. More sweeps mean the algorithm has more time to explore and settle into low-energy states within each run.

There are diminishing returns. On easy problems, 100 sweeps may suffice. On hard problems with many local minima, you might need 5,000 or more. The right strategy is to increase num_sweeps until the best energy stabilizes across runs.

Here is a concrete way to find the right value:

from dwave.samplers import SimulatedAnnealingSampler

import dimod

# Assume bqm is already defined from the Max-Cut example above

sampler = SimulatedAnnealingSampler()

for sweeps in [100, 500, 1000, 5000]:

sampleset = sampler.sample(bqm, num_reads=100, num_sweeps=sweeps)

energies = [datum.energy for datum in sampleset.data(fields=["energy"])]

best = min(energies)

mean = sum(energies) / len(energies)

print(f" sweeps={sweeps:5d} best={best:.1f} mean={mean:.2f}")

For the small Max-Cut example above, even 100 sweeps finds the optimum reliably. On a 500-variable problem, you would see the best energy improve substantially between 100 and 1000 sweeps, then plateau. That plateau is your signal to stop increasing.

beta_range: The Temperature Schedule

The beta_range parameter controls the inverse temperature schedule. Beta is 1/T, so low beta means high temperature (lots of exploration) and high beta means low temperature (fine-tuning only). The sampler sweeps linearly from the starting beta to the ending beta over the course of num_sweeps steps.

The default schedule works well for most moderate problems. If you find that the sampler gets stuck in local minima, try widening the range by starting at a lower beta (higher initial temperature):

sampleset = sampler.sample(

bqm,

num_reads=200,

num_sweeps=2000,

beta_range=[0.01, 10.0], # Start hotter, end colder than default

)

A wider range gives the algorithm more time at high temperature to escape local minima before cooling down to refine the best solution.

Interpreting the SampleSet

The SampleSet object stores every sample along with its energy and how many times it was observed:

# Show unique solutions sorted by energy

for sample, energy, num_occ in list(sampleset.data(

fields=["sample", "energy", "num_occurrences"],

sorted_by="energy",

))[:5]:

partition = {v: s for v, s in sample.items()}

cut_size = sum(1 for i, j in edges if partition[i] != partition[j])

print(f" Cut={cut_size} energy={energy:.1f} occurrences={num_occ} {dict(sample)}")

# Timing information

print(f"\nTiming: {sampleset.info.get('timing', 'N/A')}")

The energy is negative for Max-Cut (since we framed it as minimization), so the lowest energy corresponds to the largest cut.

Reading the Energy Landscape

The full set of samples from all reads contains more information than just the best solution. The distribution of energies tells you about the structure of the optimization landscape, which is valuable for understanding both the problem and the solver’s performance.

import collections

# Extract all energies from the SampleSet

energies = [datum.energy for datum in sampleset.data(fields=["energy"])]

# Basic statistics

best_energy = min(energies)

worst_energy = max(energies)

mean_energy = sum(energies) / len(energies)

num_at_best = energies.count(best_energy)

print(f"Best energy: {best_energy:.1f}")

print(f"Worst energy: {worst_energy:.1f}")

print(f"Mean energy: {mean_energy:.2f}")

print(f"Reads finding best: {num_at_best}/{len(energies)} ({100*num_at_best/len(energies):.0f}%)")

What the distribution tells you:

- Most reads find the same best energy. The landscape has a clear global minimum (or a set of degenerate optima at the same energy) and SA finds it reliably. Your parameters are sufficient for this problem.

- Energies cluster in a few distinct groups. The landscape has a small number of local minima at different energy levels. This is common for structured optimization problems. Check whether the best cluster represents the true optimum by increasing

num_sweeps. - Energies are spread across a wide range. Either the problem has a very rough landscape with many local minima, or

num_sweepsis too low for the algorithm to converge. Try doublingnum_sweepsand see if the distribution tightens. If it does, the problem is tractable but needs more computation. If it stays broad, the problem may genuinely be hard.

To visualize the distribution (optional, requires matplotlib):

import matplotlib.pyplot as plt

plt.hist(energies, bins=20, edgecolor="black")

plt.xlabel("Energy")

plt.ylabel("Number of reads")

plt.title("SA Energy Distribution (200 reads)")

plt.axvline(best_energy, color="red", linestyle="--", label=f"Best: {best_energy:.1f}")

plt.legend()

plt.tight_layout()

plt.savefig("sa_energy_histogram.png", dpi=150)

plt.show()

A histogram with a sharp peak at the lowest energy is exactly what you want to see before moving to the QPU. It means SA solves the problem reliably, and you have a strong baseline to compare against.

Verifying Your Max-Cut Solution

For small problems, you should verify that the solver actually found the optimal solution. Our graph has 5 nodes and 6 edges, so the maximum possible cut is 6 (every edge crosses the partition). Let’s check the best solution by hand.

best_sample = sampleset.first.sample

print(f"Best partition: {best_sample}")

# Check each edge

cut_edges = []

uncut_edges = []

for i, j in edges:

if best_sample[i] != best_sample[j]:

cut_edges.append((i, j))

else:

uncut_edges.append((i, j))

print(f"\nCut edges ({len(cut_edges)}/{len(edges)}):")

for i, j in cut_edges:

print(f" ({i}, {j}): node {i}={best_sample[i]}, node {j}={best_sample[j]}")

if uncut_edges:

print(f"\nUncut edges ({len(uncut_edges)}):")

for i, j in uncut_edges:

print(f" ({i}, {j}): node {i}={best_sample[i]}, node {j}={best_sample[j]}")

else:

print("\nAll edges are cut. This is a perfect partition.")

For this specific graph, the optimal Max-Cut value is 5 (not 6, because the graph structure makes it impossible to cut all edges simultaneously). You can verify this by noting that nodes 2, 3, and 4 form a triangle. In any 2-partition, at least one edge of a triangle must remain uncut.

If the solver consistently returns a cut of 5, it is finding the true optimum. If you see a cut of 4 or less, increase num_sweeps.

Comparing Against Random Solutions

A simple but useful check: compare your solver against random assignments to confirm the annealer is actually doing meaningful work.

import random

random_energies = []

for _ in range(200):

random_sample = {v: random.randint(0, 1) for v in range(5)}

energy = bqm.energy(random_sample)

random_energies.append(energy)

sa_best = sampleset.first.energy

random_mean = sum(random_energies) / len(random_energies)

random_best = min(random_energies)

print(f"Random baseline: mean energy = {random_mean:.2f}, best = {random_best:.1f}")

print(f"Simulated annealing: best energy = {sa_best:.2f}")

print(f"SA improvement over random mean: {random_mean - sa_best:.2f}")

print(f"SA improvement over random best: {random_best - sa_best:.2f}")

If simulated annealing only marginally beats random sampling, your problem may be trivial, or your formulation may have issues worth investigating before moving to hardware. On this small Max-Cut instance, random sampling often finds the optimum too, because the problem only has 32 possible assignments. The gap between SA and random grows dramatically on larger, harder problems.

When SA Is Enough vs. When You Need the QPU

Simulated annealing is a surprisingly strong solver. Before investing QPU time (and QPU cost), consider whether SA already meets your needs.

SA handles moderate problems well. On modern hardware, the SimulatedAnnealingSampler comfortably solves QUBO problems with up to roughly 10,000 variables in seconds to minutes. For well-structured problems like Max-Cut on sparse graphs, SA often finds near-optimal solutions at this scale.

QPU has structural advantages on specific problems. Current D-Wave hardware (Advantage systems) provides 5,000+ qubits connected in a Pegasus graph topology. The QPU does not simply have “more compute.” It exploits quantum tunneling to traverse energy barriers that classical thermal fluctuations cannot efficiently cross. This advantage shows up most clearly on problems with tall, narrow barriers in the energy landscape, where SA gets trapped in local minima but the QPU tunnels through.

Problem structure matters more than problem size. A 500-variable problem with a rugged, frustrated landscape can be harder for SA than a 5,000-variable problem with smooth structure. If SA struggles to converge (the energy distribution stays wide despite increasing num_sweeps), that is a signal the problem might benefit from quantum annealing.

The hybrid solver bridges the gap. D-Wave’s LeapHybridSampler combines classical and quantum resources automatically and handles problems with up to 1,000,000 variables. For most production use cases, the hybrid solver is the practical choice, and SA serves as your local testing and baseline tool.

As a general workflow:

- Prototype and debug with SA locally (free, fast, no network needed).

- Validate on small instances where you can verify optimality.

- Scale up with the hybrid solver for production-sized problems.

- Use direct QPU access when you need to study quantum effects or benchmark quantum vs. classical on specific problem structures.

Next Steps

Once your QUBO formulation produces correct results with simulated annealing, swapping in a different sampler requires changing only a few lines. The SampleSet interface stays the same across all Ocean samplers, so your analysis code works without modification.

To use the QPU directly:

from dwave.system import DWaveSampler, EmbeddingComposite

# EmbeddingComposite handles mapping your problem onto the QPU's topology

sampler = EmbeddingComposite(DWaveSampler())

sampleset = sampler.sample(

bqm,

num_reads=200,

# num_sweeps does not apply to the QPU; annealing_time controls the anneal duration

annealing_time=20, # microseconds

)

To use the hybrid solver for large problems:

from dwave.system import LeapHybridSampler

sampler = LeapHybridSampler()

sampleset = sampler.sample(bqm)

# The hybrid solver manages its own parameters internally

Both require a D-Wave Leap account and API token. Set your token via dwave config create or the DWAVE_API_TOKEN environment variable before running.

With SA as your local baseline and the QPU or hybrid solver for production runs, you have a complete workflow for developing, validating, and deploying quantum annealing solutions.

Was this tutorial helpful?