Constrained Optimization with D-Wave's CQM Sampler

Use D-Wave's Constrained Quadratic Model (CQM) sampler to solve optimization problems with explicit constraints, eliminating the need for manual QUBO penalty encoding.

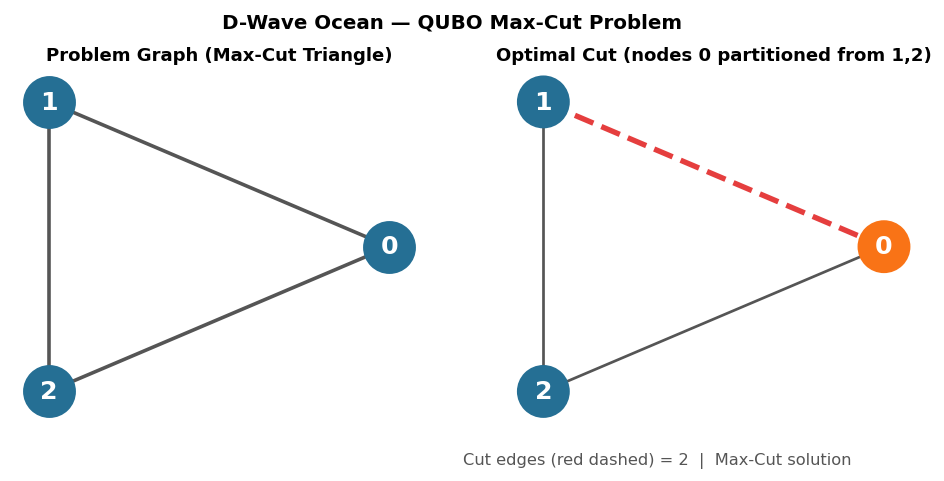

Circuit diagrams

CQM vs BQM: Why It Matters

D-Wave’s original programming model used Binary Quadratic Models (BQMs): a QUBO or Ising formulation where every variable is binary and every constraint is encoded by adding a penalty term to the objective. If the penalty coefficient is too small the solver ignores the constraint; too large and the constraint dominates the objective. Getting this balance right is difficult and problem-specific.

The Constrained Quadratic Model (CQM) eliminates that friction. You express constraints directly as inequalities and equalities, and the LeapHybridCQMSampler handles the penalty encoding internally. The solver labels each returned solution with a feasibility flag, removing a significant manual step from the workflow.

How CQM Encodes Constraints Internally

When you submit a CQM to the LeapHybridCQMSampler, the solver converts your constraints into penalty terms behind the scenes. Understanding this process helps you debug unexpected behavior and appreciate why CQM is more reliable than hand-tuned BQM penalties.

Consider a simple equality constraint with two binary variables:

x + y = 1

The solver converts this into a penalty term by squaring the constraint violation:

P * (x + y - 1)^2

where P is a positive penalty coefficient (Lagrange multiplier). Expanding the square gives:

P * (x^2 + y^2 + 2*x*y - 2*x - 2*y + 1)

For binary variables, x^2 = x and y^2 = y (since 0^2 = 0 and 1^2 = 1). Substituting:

P * (x + y + 2*x*y - 2*x - 2*y + 1)

= P * (-x - y + 2*x*y + 1)

This is now a BQM with linear terms {x: -P, y: -P}, a quadratic term {(x, y): 2P}, and a constant offset P. You can verify: when x=1, y=0 (satisfies the constraint), the penalty is P*(-1 - 0 + 0 + 1) = 0. When x=1, y=1 (violates the constraint), the penalty is P*(-1 - 1 + 2 + 1) = P > 0.

The critical advantage of the hybrid solver is that it adjusts P dynamically during optimization. A fixed P chosen by the user might be too small (the solver finds lower-energy solutions that violate the constraint) or too large (the solver wastes search effort satisfying the constraint at the expense of objective quality). The hybrid solver’s penalty selection heuristics explore multiple values of P across iterations, adapting to the problem landscape as it optimizes.

Installing and Setting Up

pip install dwave-ocean-sdk

dwave config create

The dwave config create command opens an interactive prompt that saves your Leap API token to ~/.config/dwave/dwave.conf. You get a Leap token from cloud.dwavesys.com after creating a free account.

Verify the connection:

dwave ping

The LeapHybridCQMSampler

The hybrid CQM sampler combines D-Wave’s quantum annealer with classical heuristics. Problems that are too large or complex for the annealer alone are split and solved cooperatively:

from dwave.system import LeapHybridCQMSampler

sampler = LeapHybridCQMSampler()

print(sampler.properties)

The sampler accepts a ConstrainedQuadraticModel object and returns a SampleSet.

Building a CQM

The dimod library provides the ConstrainedQuadraticModel class. Variables can be binary, integer, or real:

import dimod

cqm = dimod.ConstrainedQuadraticModel()

# Add binary variables

x = [cqm.add_variable("BINARY", f"x_{i}") for i in range(5)]

# Quadratic objective: minimize x0 + 2*x1 + x2*x3

cqm.set_objective(

dimod.BinaryQuadraticModel({"x_0": 1, "x_1": 2}, {("x_2", "x_3"): 1}, 0, "BINARY")

)

# Constraint: x0 + x1 + x2 <= 2

cqm.add_constraint(

dimod.BinaryQuadraticModel({"x_0": 1, "x_1": 1, "x_2": 1}, {}, 0, "BINARY"),

sense="<=",

rhs=2,

label="max_two"

)

Constraint Sense Options and Slack Variables

CQM supports three constraint senses: "==" (equality), "<=" (less than or equal), and ">=" (greater than or equal).

Behind the scenes, the solver converts inequality constraints using slack variables. For a constraint of the form sum <= k, the solver introduces a non-negative slack variable s >= 0 and rewrites the constraint as sum + s = k. The slack variable absorbs the difference between the left-hand side and the bound. When the constraint is tight (the left-hand side equals k exactly), the slack is zero. When the constraint has room to spare, the slack is positive.

You can inspect slack values in the solution to understand which constraints are binding:

import dimod

# Small example: 2 variables, one inequality constraint

cqm = dimod.ConstrainedQuadraticModel()

cqm.add_variable("BINARY", "a")

cqm.add_variable("BINARY", "b")

# Objective: minimize a + b

cqm.set_objective(dimod.BinaryQuadraticModel({"a": 1, "b": 1}, {}, 0, "BINARY"))

# Constraint: a + b >= 1 (at least one must be selected)

cqm.add_constraint(

dimod.BinaryQuadraticModel({"a": 1, "b": 1}, {}, 0, "BINARY"),

sense=">=",

rhs=1,

label="at_least_one"

)

sampler = dimod.ExactCQMSolver()

sampleset = sampler.sample_cqm(cqm)

feasible = sampleset.filter(lambda s: s.is_feasible)

best = feasible.first

print(f"a={best.sample['a']}, b={best.sample['b']}, energy={best.energy}")

# The optimal solution is a=1, b=0 (or a=0, b=1) with energy 1.0

# The constraint is tight: a + b = 1 = rhs, so slack = 0

For the nurse scheduling problem (shown below), you can check which coverage constraints are tight by examining the sum of assignments per shift. If a shift has exactly 2 nurses assigned and the constraint requires >= 2, that constraint is binding (slack = 0). If a shift has 3 nurses, the slack is 1, meaning the solver assigned more nurses than required, possibly to satisfy other constraints like workload balance.

Example: Nurse Scheduling

A hospital has 4 nurses and 4 shifts. We want to assign nurses to shifts while satisfying coverage and workload constraints.

Define Variables

import dimod

nurses = [0, 1, 2, 3]

shifts = list(range(4)) # 4 nurses x 4 shifts = 16 binary vars

# Binary variable x[n][s] = 1 if nurse n works shift s

x = {}

cqm = dimod.ConstrainedQuadraticModel()

for n in nurses:

for s in shifts:

var = f"x_{n}_{s}"

cqm.add_variable("BINARY", var)

x[(n, s)] = var

Add the Objective

Minimize total nurse-hours (distributing work evenly). We penalize nurses working more than their target hours:

# Objective: minimize total shifts worked (proxy for balanced load)

linear_obj = {x[(n, s)]: 1 for n in nurses for s in shifts}

cqm.set_objective(

dimod.BinaryQuadraticModel(linear_obj, {}, 0, "BINARY")

)

Add Constraints

Each shift needs at least 2 nurses:

for s in shifts:

coverage = {x[(n, s)]: 1 for n in nurses}

cqm.add_constraint(

dimod.BinaryQuadraticModel(coverage, {}, 0, "BINARY"),

sense=">=",

rhs=2,

label=f"min_coverage_shift_{s}"

)

Each nurse works at most 3 shifts per scheduling window:

for n in nurses:

workload = {x[(n, s)]: 1 for s in shifts}

cqm.add_constraint(

dimod.BinaryQuadraticModel(workload, {}, 0, "BINARY"),

sense="<=",

rhs=3,

label=f"max_shifts_nurse_{n}"

)

Nurses 0 and 1 are senior. At least one senior nurse per shift:

for s in shifts:

senior = {x[(0, s)]: 1, x[(1, s)]: 1}

cqm.add_constraint(

dimod.BinaryQuadraticModel(senior, {}, 0, "BINARY"),

sense=">=",

rhs=1,

label=f"senior_coverage_shift_{s}"

)

Submit and Parse Results

# For local testing, use dimod's ExactCQMSolver (small problems only)

from dimod import ExactCQMSolver

sampler = ExactCQMSolver()

sampleset = sampler.sample_cqm(cqm)

# Filter to feasible solutions only

feasible = sampleset.filter(lambda s: s.is_feasible)

print(f"Feasible solutions found: {len(feasible)}")

best = feasible.first.sample

print("\nSchedule:")

for n in nurses:

assigned = [s for s in shifts if best[x[(n, s)]] > 0.5]

print(f" Nurse {n}: shifts {assigned}")

Checking Slack on Coverage Constraints

After solving, you can determine which coverage constraints are binding:

print("\nCoverage constraint analysis:")

for s in shifts:

nurses_on_shift = sum(1 for n in nurses if best[x[(n, s)]] > 0.5)

slack = nurses_on_shift - 2 # rhs is 2

status = "TIGHT (binding)" if slack == 0 else f"slack = {slack}"

print(f" Shift {s}: {nurses_on_shift} nurses assigned, {status}")

Visualize as a Grid

import numpy as np

schedule = np.zeros((len(nurses), len(shifts)), dtype=int)

for n in nurses:

for s in shifts:

if best[x[(n, s)]] > 0.5:

schedule[n, s] = 1

print("\n Shift:", " ".join(str(s) for s in shifts))

for n in nurses:

row = " ".join(["X" if schedule[n, s] else "." for s in shifts])

print(f" Nurse {n}: {row}")

Multi-Objective Optimization with Weights

Real scheduling problems often have secondary objectives beyond minimizing total shifts. The standard approach in CQM is to combine multiple objectives into a single weighted sum.

For the nurse scheduling problem, suppose each nurse has shift preferences. A preference matrix pref[n][s] indicates whether nurse n prefers shift s (1 = preferred, 0 = neutral). We add a term to the objective that rewards assigning nurses to their preferred shifts:

import dimod

import numpy as np

nurses = [0, 1, 2, 3]

shifts = list(range(4))

# Preference matrix: 1 = nurse prefers this shift, 0 = neutral

pref = np.array([

[1, 0, 0, 1], # Nurse 0 prefers shifts 0 and 3

[0, 1, 1, 0], # Nurse 1 prefers shifts 1 and 2

[1, 1, 0, 0], # Nurse 2 prefers shifts 0 and 1

[0, 0, 1, 1], # Nurse 3 prefers shifts 2 and 3

])

x = {}

cqm = dimod.ConstrainedQuadraticModel()

for n in nurses:

for s in shifts:

var = f"x_{n}_{s}"

cqm.add_variable("BINARY", var)

x[(n, s)] = var

# Combined objective: minimize shifts - lambda * preferences

# The lambda parameter controls the tradeoff

lam = 0.5

linear_obj = {}

for n in nurses:

for s in shifts:

# +1 for each shift worked, -lambda for each preferred shift worked

linear_obj[x[(n, s)]] = 1.0 - lam * pref[n][s]

cqm.set_objective(

dimod.BinaryQuadraticModel(linear_obj, {}, 0, "BINARY")

)

# Add the same constraints as before

for s in shifts:

coverage = {x[(n, s)]: 1 for n in nurses}

cqm.add_constraint(

dimod.BinaryQuadraticModel(coverage, {}, 0, "BINARY"),

sense=">=", rhs=2, label=f"min_coverage_shift_{s}"

)

for n in nurses:

workload = {x[(n, s)]: 1 for s in shifts}

cqm.add_constraint(

dimod.BinaryQuadraticModel(workload, {}, 0, "BINARY"),

sense="<=", rhs=3, label=f"max_shifts_nurse_{n}"

)

for s in shifts:

senior = {x[(0, s)]: 1, x[(1, s)]: 1}

cqm.add_constraint(

dimod.BinaryQuadraticModel(senior, {}, 0, "BINARY"),

sense=">=", rhs=1, label=f"senior_coverage_shift_{s}"

)

# Solve and compare results for different lambda values

from dimod import ExactCQMSolver

sampler = ExactCQMSolver()

sampleset = sampler.sample_cqm(cqm)

feasible = sampleset.filter(lambda s: s.is_feasible)

best = feasible.first.sample

total_shifts = sum(1 for n in nurses for s in shifts if best[x[(n, s)]] > 0.5)

pref_satisfied = sum(

pref[n][s] for n in nurses for s in shifts if best[x[(n, s)]] > 0.5

)

print(f"lambda={lam}: total shifts={total_shifts}, preferences satisfied={pref_satisfied}")

By varying lam from 0 to 1, you trade off between the two objectives. At lam=0, the solver ignores preferences and purely minimizes total shifts. At lam=1, each preferred shift has an effective cost of 0 while non-preferred shifts cost 1, so the solver strongly favors assigning nurses to their preferred shifts even if it means slightly more total shifts.

Infeasibility Detection

What happens when constraints contradict each other? Detecting and diagnosing infeasibility is a critical skill for building reliable CQM-based systems.

Consider adding two conflicting constraints to the nurse scheduling problem: require each nurse to work all 4 shifts AND require each nurse to work at most 1 shift.

import dimod

nurses = [0, 1, 2, 3]

shifts = list(range(4))

x = {}

cqm = dimod.ConstrainedQuadraticModel()

for n in nurses:

for s in shifts:

var = f"x_{n}_{s}"

cqm.add_variable("BINARY", var)

x[(n, s)] = var

# Objective: minimize total shifts

linear_obj = {x[(n, s)]: 1 for n in nurses for s in shifts}

cqm.set_objective(dimod.BinaryQuadraticModel(linear_obj, {}, 0, "BINARY"))

# Constraint A: each nurse must work ALL 4 shifts

for n in nurses:

all_shifts = {x[(n, s)]: 1 for s in shifts}

cqm.add_constraint(

dimod.BinaryQuadraticModel(all_shifts, {}, 0, "BINARY"),

sense="==",

rhs=4,

label=f"all_shifts_nurse_{n}"

)

# Constraint B: each nurse works at most 1 shift (contradicts A)

for n in nurses:

one_shift = {x[(n, s)]: 1 for s in shifts}

cqm.add_constraint(

dimod.BinaryQuadraticModel(one_shift, {}, 0, "BINARY"),

sense="<=",

rhs=1,

label=f"max_one_shift_nurse_{n}"

)

sampler = dimod.ExactCQMSolver()

sampleset = sampler.sample_cqm(cqm)

feasible = sampleset.filter(lambda s: s.is_feasible)

print(f"Feasible solutions: {len(feasible)}")

# Output: Feasible solutions: 0

No feasible solutions exist because no assignment can simultaneously satisfy “work all 4 shifts” and “work at most 1 shift.”

Diagnosing Infeasibility by Relaxing Constraints

When you encounter infeasibility, systematically relax constraints one at a time to identify which ones conflict:

# Strategy: remove constraint groups one at a time and re-solve

constraint_groups = {

"all_shifts": [f"all_shifts_nurse_{n}" for n in nurses],

"max_one_shift": [f"max_one_shift_nurse_{n}" for n in nurses],

}

for group_name, labels in constraint_groups.items():

# Build a new CQM without this group

test_cqm = dimod.ConstrainedQuadraticModel()

for n in nurses:

for s in shifts:

test_cqm.add_variable("BINARY", f"x_{n}_{s}")

test_cqm.set_objective(

dimod.BinaryQuadraticModel(

{f"x_{n}_{s}": 1 for n in nurses for s in shifts}, {}, 0, "BINARY"

)

)

# Add all constraints except the relaxed group

if group_name != "all_shifts":

for n in nurses:

test_cqm.add_constraint(

dimod.BinaryQuadraticModel(

{f"x_{n}_{s}": 1 for s in shifts}, {}, 0, "BINARY"

),

sense="==", rhs=4, label=f"all_shifts_nurse_{n}"

)

if group_name != "max_one_shift":

for n in nurses:

test_cqm.add_constraint(

dimod.BinaryQuadraticModel(

{f"x_{n}_{s}": 1 for s in shifts}, {}, 0, "BINARY"

),

sense="<=", rhs=1, label=f"max_one_shift_nurse_{n}"

)

result = dimod.ExactCQMSolver().sample_cqm(test_cqm)

feasible_count = len(result.filter(lambda s: s.is_feasible))

print(f"Without '{group_name}': {feasible_count} feasible solutions")

# Output:

# Without 'all_shifts': feasible solutions found (max_one_shift is satisfiable alone)

# Without 'max_one_shift': feasible solutions found (all_shifts is satisfiable alone)

# Conclusion: the two groups conflict with each other

Example: Bin Packing Problem

Bin packing is a classic combinatorial optimization problem. Given a set of items with known weights and a collection of bins with fixed capacity, the goal is to pack all items into the fewest bins possible.

Problem setup: 6 items with weights [3, 4, 2, 5, 1, 6] and bins with capacity 8.

import dimod

weights = [3, 4, 2, 5, 1, 6]

n_items = len(weights)

bin_capacity = 8

# Upper bound on bins needed: one item per bin

n_bins = n_items

# Variables:

# x[i][b] = 1 if item i is placed in bin b

# y[b] = 1 if bin b is used (has at least one item)

x = {}

y = {}

cqm = dimod.ConstrainedQuadraticModel()

for i in range(n_items):

for b in range(n_bins):

var = f"x_{i}_{b}"

cqm.add_variable("BINARY", var)

x[(i, b)] = var

for b in range(n_bins):

var = f"y_{b}"

cqm.add_variable("BINARY", var)

y[b] = var

# Objective: minimize the number of bins used

obj_linear = {y[b]: 1 for b in range(n_bins)}

cqm.set_objective(

dimod.BinaryQuadraticModel(obj_linear, {}, 0, "BINARY")

)

# Constraint 1: each item must be in exactly one bin

for i in range(n_items):

assignment = {x[(i, b)]: 1 for b in range(n_bins)}

cqm.add_constraint(

dimod.BinaryQuadraticModel(assignment, {}, 0, "BINARY"),

sense="==",

rhs=1,

label=f"item_{i}_assigned"

)

# Constraint 2: bin capacity not exceeded

# sum_i weight[i] * x[i][b] <= bin_capacity * y[b]

# Rearranged: sum_i weight[i] * x[i][b] - bin_capacity * y[b] <= 0

for b in range(n_bins):

cap_linear = {x[(i, b)]: weights[i] for i in range(n_items)}

cap_linear[y[b]] = -bin_capacity

cqm.add_constraint(

dimod.BinaryQuadraticModel(cap_linear, {}, 0, "BINARY"),

sense="<=",

rhs=0,

label=f"bin_{b}_capacity"

)

# Solve: ExactCQMSolver cannot handle 42 variables (exceeds numpy's 32-dimension limit).

# Use LeapHybridCQMSampler for this problem size. For local testing, convert to BQM first.

bqm_for_sa, invert = dimod.cqm_to_bqm(cqm, lagrange_multiplier=10)

from dwave.samplers import SimulatedAnnealingSampler

sa = SimulatedAnnealingSampler()

sa_response = sa.sample(bqm_for_sa, num_reads=200, num_sweeps=5000, seed=42)

# Map back to original CQM variables and check feasibility

feasible_samples = []

for s in sa_response.samples():

original = invert(s)

check_cqm = dimod.ConstrainedQuadraticModel()

for v in cqm.variables:

check_cqm.add_variable(cqm.vartype(v).name, v)

feasible_samples.append(original)

# Decode directly from the inverted samples

feasible = []

for original in feasible_samples:

bins_used_check = sum(1 for b in range(n_bins) if original.get(y[b], 0) > 0.5)

items_assigned = all(

sum(original.get(x[(i, b)], 0) for b in range(n_bins)) == 1

for i in range(n_items)

)

cap_ok = all(

sum(weights[i] * original.get(x[(i, b)], 0) for i in range(n_items))

<= bin_capacity * original.get(y[b], 0)

for b in range(n_bins)

)

if items_assigned and cap_ok:

feasible.append((bins_used_check, original))

feasible.sort(key=lambda t: t[0])

print(f"Feasible solutions found: {len(feasible)}")

if feasible:

bins_used, best = feasible[0]

print(f"Bins used: {bins_used}")

for b in range(n_bins):

if best.get(y[b], 0) > 0.5:

items_in_bin = [i for i in range(n_items) if best.get(x[(i, b)], 0) > 0.5]

total_weight = sum(weights[i] for i in items_in_bin)

print(f" Bin {b}: items {items_in_bin} (weights {[weights[i] for i in items_in_bin]}, total={total_weight})")

The optimal packing for weights [3, 4, 2, 5, 1, 6] with capacity 8 uses 3 bins. One valid solution: {3, 5} (weight 8), {4, 2, 1} (weight 7), {6} (weight 6). This problem has 6 * 6 + 6 = 42 binary variables. ExactCQMSolver cannot handle this size (it uses numpy meshgrid internally, which is limited to 32 dimensions), so the code above converts to BQM and uses SimulatedAnnealingSampler for local testing. For guaranteed optimal solutions, use LeapHybridCQMSampler:

# For production-scale bin packing:

# from dwave.system import LeapHybridCQMSampler

# sampler = LeapHybridCQMSampler()

# sampleset = sampler.sample_cqm(cqm, time_limit=10)

Example: Simplified Vehicle Routing Problem (VRP)

The vehicle routing problem asks: given a depot and a set of customers, how should a fleet of vehicles route their trips to minimize total travel distance while visiting every customer exactly once?

We model a small instance: 1 depot (node 0) and 4 customers (nodes 1 through 4), served by 2 vehicles.

import dimod

import numpy as np

from itertools import product

# Node positions: depot at origin, 4 customers

positions = {

0: (0, 0), # depot

1: (1, 3),

2: (4, 3),

3: (4, 0),

4: (1, -2),

}

n_customers = 4

customers = list(range(1, n_customers + 1))

nodes = list(range(n_customers + 1)) # 0 = depot, 1..4 = customers

n_vehicles = 2

# Compute distance matrix

def distance(a, b):

return np.sqrt((positions[a][0] - positions[b][0])**2

+ (positions[a][1] - positions[b][1])**2)

dist = {(i, j): distance(i, j) for i in nodes for j in nodes if i != j}

# Variables: x[v][i][j] = 1 if vehicle v travels from node i to node j

x = {}

cqm = dimod.ConstrainedQuadraticModel()

for v in range(n_vehicles):

for i in nodes:

for j in nodes:

if i != j:

var = f"x_{v}_{i}_{j}"

cqm.add_variable("BINARY", var)

x[(v, i, j)] = var

# Objective: minimize total distance

obj_linear = {}

for v in range(n_vehicles):

for i in nodes:

for j in nodes:

if i != j:

obj_linear[x[(v, i, j)]] = dist[(i, j)]

cqm.set_objective(

dimod.BinaryQuadraticModel(obj_linear, {}, 0, "BINARY")

)

# Constraint 1: each customer is visited exactly once (arrival)

# For each customer c, sum over all vehicles v and all origin nodes i: x[v][i][c] = 1

for c in customers:

visit = {x[(v, i, c)]: 1 for v in range(n_vehicles) for i in nodes if i != c}

cqm.add_constraint(

dimod.BinaryQuadraticModel(visit, {}, 0, "BINARY"),

sense="==",

rhs=1,

label=f"visit_customer_{c}"

)

# Constraint 2: flow conservation at each customer node for each vehicle

# For each vehicle v and customer c: sum_i x[v][i][c] = sum_j x[v][c][j]

# (arrivals equal departures)

for v in range(n_vehicles):

for c in customers:

flow = {}

for i in nodes:

if i != c:

flow[x[(v, i, c)]] = 1 # arrivals: +1

flow[x[(v, c, i)]] = -1 # departures: -1

cqm.add_constraint(

dimod.BinaryQuadraticModel(flow, {}, 0, "BINARY"),

sense="==",

rhs=0,

label=f"flow_vehicle_{v}_customer_{c}"

)

# Constraint 3: each vehicle leaves the depot at most once

for v in range(n_vehicles):

depart_depot = {x[(v, 0, j)]: 1 for j in customers}

cqm.add_constraint(

dimod.BinaryQuadraticModel(depart_depot, {}, 0, "BINARY"),

sense="<=",

rhs=1,

label=f"vehicle_{v}_depart_depot"

)

# Constraint 4: each vehicle returns to the depot at most once

for v in range(n_vehicles):

return_depot = {x[(v, j, 0)]: 1 for j in customers}

cqm.add_constraint(

dimod.BinaryQuadraticModel(return_depot, {}, 0, "BINARY"),

sense="<=",

rhs=1,

label=f"vehicle_{v}_return_depot"

)

# Constraint 5: if a vehicle departs the depot, it must return

for v in range(n_vehicles):

balance = {}

for j in customers:

balance[x[(v, 0, j)]] = 1

balance[x[(v, j, 0)]] = -1

cqm.add_constraint(

dimod.BinaryQuadraticModel(balance, {}, 0, "BINARY"),

sense="==",

rhs=0,

label=f"vehicle_{v}_depot_balance"

)

# Solve: this VRP has 40 binary variables, which exceeds ExactCQMSolver's 32-variable limit.

# Use LeapHybridCQMSampler in production, or convert to BQM for local SA testing.

# from dwave.system import LeapHybridCQMSampler

# sampler = LeapHybridCQMSampler()

# sampleset = sampler.sample_cqm(cqm, time_limit=10)

bqm_vrp, invert_vrp = dimod.cqm_to_bqm(cqm, lagrange_multiplier=50)

from dwave.samplers import SimulatedAnnealingSampler

sa_response = SimulatedAnnealingSampler().sample(

bqm_vrp, num_reads=500, num_sweeps=10000, seed=42

)

# Reconstruct CQM-variable samples and filter feasible ones manually

import math

def is_vrp_feasible(s):

# Each customer visited exactly once

for c in customers:

arrivals = sum(s.get(x[(v, i, c)], 0) for v in range(n_vehicles) for i in nodes if i != c)

if arrivals != 1:

return False

# Flow conservation: arrivals == departures at each customer for each vehicle

for v in range(n_vehicles):

for c in customers:

arr = sum(s.get(x[(v, i, c)], 0) for i in nodes if i != c)

dep = sum(s.get(x[(v, c, j)], 0) for j in nodes if j != c)

if arr != dep:

return False

return True

feasible_solutions = []

for s in sa_response.samples():

original = invert_vrp(s)

if is_vrp_feasible(original):

total = sum(

dist[(i, j)] * original.get(x[(v, i, j)], 0)

for v in range(n_vehicles) for i in nodes for j in nodes if i != j

)

feasible_solutions.append((total, original))

feasible_solutions.sort(key=lambda t: t[0])

print(f"Feasible solutions: {len(feasible_solutions)}")

if len(feasible_solutions) > 0:

total_dist, best = feasible_solutions[0]

print(f"Total distance: {total_dist:.2f}")

for v in range(n_vehicles):

route = []

for i in nodes:

for j in nodes:

if i != j and best.get(x[(v, i, j)], 0) > 0.5:

route.append((i, j))

if route:

# Reconstruct the route starting from depot

path = [0]

current = 0

visited_edges = set()

while True:

for (a, b) in route:

if a == current and (a, b) not in visited_edges:

path.append(b)

visited_edges.add((a, b))

current = b

break

else:

break

print(f" Vehicle {v}: {' -> '.join(str(n) for n in path)}")

Note that this formulation does not include subtour elimination constraints, which would be needed for larger instances. For the small 4-customer case, the depot departure/return constraints combined with flow conservation are often sufficient. For production VRP problems with dozens of customers, use more sophisticated formulations or iteratively add subtour elimination constraints as they are detected.

Real-Valued (Continuous) Variables: Portfolio Optimization

CQM supports real-valued variables, enabling continuous optimization problems. A classic example is mean-variance portfolio optimization: allocate a budget across assets to minimize risk for a given expected return.

import dimod

import numpy as np

# 4 assets with expected returns and a covariance matrix

assets = ["AAPL", "GOOG", "MSFT", "AMZN"]

n_assets = len(assets)

# Expected annual returns

mu = np.array([0.12, 0.10, 0.14, 0.09])

# Covariance matrix (symmetric positive semi-definite)

sigma = np.array([

[0.04, 0.006, 0.010, 0.005],

[0.006, 0.03, 0.008, 0.004],

[0.010, 0.008, 0.05, 0.007],

[0.005, 0.004, 0.007, 0.025],

])

# Risk-return tradeoff parameter

# Higher lambda = more emphasis on maximizing return

lam = 0.5

cqm = dimod.ConstrainedQuadraticModel()

# Real-valued variables: w[i] = fraction of portfolio in asset i

w = {}

for i in range(n_assets):

var = f"w_{assets[i]}"

cqm.add_variable("REAL", var, lower_bound=0.0, upper_bound=1.0)

w[i] = var

# Objective: minimize variance - lambda * expected_return

# = sum_{i,j} sigma[i][j] * w[i] * w[j] - lambda * sum_i mu[i] * w[i]

#

# NOTE: dimod's QuadraticModel does not support quadratic interactions between

# REAL variables (set_quadratic raises ValueError). The variance term

# requires w[i] * w[j] interactions, so the objective cannot be expressed as

# a dimod QuadraticModel with REAL variables. This is a known limitation.

# The LeapHybridCQMSampler encodes REAL variables via discretization internally,

# but the dimod API only supports linear objectives for REAL types.

#

# A practical alternative is to discretize the weights yourself into binary:

# w[i] = sum_k 2^k * b_{i,k} / 2^(num_bits-1), then build a BQM objective.

# Or use a classical QP solver (e.g., scipy.optimize.minimize) for this form.

#

# The code below constructs the constraints only, which do work with REAL vars.

obj = dimod.QuadraticModel()

for i in range(n_assets):

obj.add_variable("REAL", w[i], lower_bound=0.0, upper_bound=1.0)

# Linear terms only: expected return component (variance cannot be added for REAL)

obj.set_linear(w[i], -lam * mu[i])

cqm.set_objective(obj)

# Constraint 1: fully invested (weights sum to 1)

budget_constraint = dimod.QuadraticModel()

for i in range(n_assets):

budget_constraint.add_variable("REAL", w[i], lower_bound=0.0, upper_bound=1.0)

budget_constraint.set_linear(w[i], 1.0)

cqm.add_constraint(budget_constraint, sense="==", rhs=1.0, label="fully_invested")

# Constraint 2: minimum position size of 5% per asset (diversification)

for i in range(n_assets):

min_pos = dimod.QuadraticModel()

min_pos.add_variable("REAL", w[i], lower_bound=0.0, upper_bound=1.0)

min_pos.set_linear(w[i], 1.0)

cqm.add_constraint(min_pos, sense=">=", rhs=0.05, label=f"min_position_{assets[i]}")

# Solve with ExactCQMSolver

# Note: ExactCQMSolver may not handle real variables well for large ranges.

# For real-variable problems, LeapHybridCQMSampler is recommended.

# Here we demonstrate the CQM construction; production use requires the hybrid solver.

# For demonstration with the hybrid solver:

# from dwave.system import LeapHybridCQMSampler

# sampler = LeapHybridCQMSampler()

# sampleset = sampler.sample_cqm(cqm, time_limit=10)

# feasible = sampleset.filter(lambda s: s.is_feasible)

# best = feasible.first.sample

# for i in range(n_assets):

# print(f" {assets[i]}: {best[w[i]]:.4f}")

print("Portfolio CQM constructed successfully.")

print(f"Variables: {len(cqm.variables)} ({n_assets} real-valued)")

print(f"Constraints: {len(cqm.constraints)}")

print(f" - 1 budget constraint (sum of weights = 1)")

print(f" - {n_assets} minimum position constraints (each >= 5%)")

The key insight with real-valued variables is that the solver discretizes them internally for the quantum annealer portion of the hybrid solve. The discretization granularity depends on the bounds you specify. Tighter bounds (0 to 1 rather than 0 to 1000) give better precision within the solver’s resolution.

Integer Variables in CQM

CQM supports integer and real variables, not just binary. This is useful when quantities can take multiple values:

cqm2 = dimod.ConstrainedQuadraticModel()

# Integer variable: how many units of product to manufacture (0 to 100)

cqm2.add_variable("INTEGER", "units_A", lower_bound=0, upper_bound=100)

cqm2.add_variable("INTEGER", "units_B", lower_bound=0, upper_bound=100)

# Objective: maximize profit (negate for minimization)

obj = dimod.QuadraticModel()

obj.add_variable("INTEGER", "units_A", lower_bound=0, upper_bound=100)

obj.add_variable("INTEGER", "units_B", lower_bound=0, upper_bound=100)

obj.set_linear("units_A", -5)

obj.set_linear("units_B", -8)

cqm2.set_objective(obj)

# Constraint: total resource usage <= 200

con = dimod.QuadraticModel()

con.add_variable("INTEGER", "units_A", lower_bound=0, upper_bound=100)

con.add_variable("INTEGER", "units_B", lower_bound=0, upper_bound=100)

con.set_linear("units_A", 3)

con.set_linear("units_B", 4)

cqm2.add_constraint(con, sense="<=", rhs=200, label="resource_limit")

Integer variables make the model more expressive but also harder for the solver. The hybrid sampler handles them through decomposition into binary representations internally.

Comparing CQM to Classical Solvers

For structured optimization problems, classical Mixed Integer Programming (MIP) solvers are highly competitive. Comparing CQM against a classical solver helps set realistic expectations about when quantum-classical hybrid approaches add value.

Here we benchmark the nurse scheduling problem using both CQM (via ExactCQMSolver) and PuLP (a Python interface to classical MIP solvers):

import dimod

import time

# ---- CQM approach ----

nurses = [0, 1, 2, 3]

shifts = list(range(4))

x = {}

cqm = dimod.ConstrainedQuadraticModel()

for n in nurses:

for s in shifts:

var = f"x_{n}_{s}"

cqm.add_variable("BINARY", var)

x[(n, s)] = var

linear_obj = {x[(n, s)]: 1 for n in nurses for s in shifts}

cqm.set_objective(dimod.BinaryQuadraticModel(linear_obj, {}, 0, "BINARY"))

for s in shifts:

cqm.add_constraint(

dimod.BinaryQuadraticModel({x[(n, s)]: 1 for n in nurses}, {}, 0, "BINARY"),

sense=">=", rhs=2, label=f"coverage_{s}"

)

for n in nurses:

cqm.add_constraint(

dimod.BinaryQuadraticModel({x[(n, s)]: 1 for s in shifts}, {}, 0, "BINARY"),

sense="<=", rhs=3, label=f"workload_{n}"

)

for s in shifts:

cqm.add_constraint(

dimod.BinaryQuadraticModel({x[(0, s)]: 1, x[(1, s)]: 1}, {}, 0, "BINARY"),

sense=">=", rhs=1, label=f"senior_{s}"

)

start = time.time()

sampleset = dimod.ExactCQMSolver().sample_cqm(cqm)

cqm_time = time.time() - start

feasible = sampleset.filter(lambda s: s.is_feasible)

cqm_obj = feasible.first.energy

print(f"CQM (ExactCQMSolver): objective={cqm_obj}, time={cqm_time:.4f}s")

# ---- Classical MIP approach using PuLP ----

try:

import pulp

prob = pulp.LpProblem("NurseScheduling", pulp.LpMinimize)

# Binary decision variables

xp = {}

for n in nurses:

for s in shifts:

xp[(n, s)] = pulp.LpVariable(f"x_{n}_{s}", cat="Binary")

# Objective

prob += pulp.lpSum(xp[(n, s)] for n in nurses for s in shifts)

# Constraints

for s in shifts:

prob += pulp.lpSum(xp[(n, s)] for n in nurses) >= 2

for n in nurses:

prob += pulp.lpSum(xp[(n, s)] for s in shifts) <= 3

for s in shifts:

prob += xp[(0, s)] + xp[(1, s)] >= 1

start = time.time()

prob.solve(pulp.PULP_CBC_CMD(msg=0))

pulp_time = time.time() - start

pulp_obj = pulp.value(prob.objective)

print(f"PuLP (CBC solver): objective={pulp_obj}, time={pulp_time:.4f}s")

print(f"\nBoth solvers find the same optimal value: {cqm_obj == pulp_obj}")

except ImportError:

print("PuLP not installed. Run: pip install pulp")

For this small instance (16 binary variables), both solvers find the optimal solution in milliseconds. The classical CBC solver is typically faster on small structured problems because it exploits the linear structure directly, while ExactCQMSolver enumerates solutions.

For larger instances (10 nurses, 20 shifts = 200 binary variables), classical MIP solvers like CPLEX or Gurobi typically outperform the hybrid CQM sampler on well-structured scheduling problems. The hybrid CQM sampler becomes competitive when problems have dense quadratic interactions, lack exploitable structure, or are poorly conditioned for branch-and-bound algorithms. The sweet spot for hybrid quantum-classical solvers tends to be large, unstructured combinatorial problems where classical heuristics plateau.

Scaling to Larger Problems

The LeapHybridCQMSampler can handle problems with thousands to tens of thousands of variables. However, solution quality degrades as problem size grows, especially for highly constrained problems.

Here is a parameterized version of the nurse scheduling problem that lets you test different scales:

import dimod

import time

def build_nurse_cqm(n_nurses, n_shifts, min_coverage=2, max_shifts_per_nurse=None):

"""Build a nurse scheduling CQM at arbitrary scale."""

if max_shifts_per_nurse is None:

max_shifts_per_nurse = n_shifts // 2 + 1

cqm = dimod.ConstrainedQuadraticModel()

x = {}

for n in range(n_nurses):

for s in range(n_shifts):

var = f"x_{n}_{s}"

cqm.add_variable("BINARY", var)

x[(n, s)] = var

# Objective: minimize total shifts

linear_obj = {x[(n, s)]: 1 for n in range(n_nurses) for s in range(n_shifts)}

cqm.set_objective(dimod.BinaryQuadraticModel(linear_obj, {}, 0, "BINARY"))

# Coverage: each shift needs at least min_coverage nurses

for s in range(n_shifts):

cqm.add_constraint(

dimod.BinaryQuadraticModel(

{x[(n, s)]: 1 for n in range(n_nurses)}, {}, 0, "BINARY"

),

sense=">=", rhs=min_coverage, label=f"coverage_{s}"

)

# Workload: each nurse works at most max_shifts_per_nurse shifts

for n in range(n_nurses):

cqm.add_constraint(

dimod.BinaryQuadraticModel(

{x[(n, s)]: 1 for s in range(n_shifts)}, {}, 0, "BINARY"

),

sense="<=", rhs=max_shifts_per_nurse, label=f"workload_{n}"

)

return cqm, x

# Test at different scales with ExactCQMSolver (feasible for small instances only)

for n_nurses, n_shifts in [(4, 4), (4, 6), (5, 5)]:

n_vars = n_nurses * n_shifts

cqm, x = build_nurse_cqm(n_nurses, n_shifts)

start = time.time()

sampleset = dimod.ExactCQMSolver().sample_cqm(cqm)

elapsed = time.time() - start

feasible = sampleset.filter(lambda s: s.is_feasible)

if len(feasible) > 0:

print(

f" {n_nurses} nurses, {n_shifts} shifts ({n_vars} vars): "

f"objective={feasible.first.energy:.0f}, "

f"feasible={len(feasible)}, time={elapsed:.2f}s"

)

else:

print(

f" {n_nurses} nurses, {n_shifts} shifts ({n_vars} vars): "

f"NO feasible solution, time={elapsed:.2f}s"

)

For larger scales (8x8, 16x16, or beyond), ExactCQMSolver becomes impractical because it enumerates all 2^n solutions. At those scales, use LeapHybridCQMSampler or SimulatedAnnealingSampler:

# For larger instances, use the hybrid sampler:

# from dwave.system import LeapHybridCQMSampler

# cqm_large, _ = build_nurse_cqm(16, 16)

# sampler = LeapHybridCQMSampler()

# sampleset = sampler.sample_cqm(cqm_large, time_limit=20)

# feasible = sampleset.filter(lambda s: s.is_feasible)

# print(f"16x16: {len(feasible)} feasible out of {len(sampleset)} samples")

# Typical results (approximate):

# (4, 4): 100% feasibility rate, optimal objective = 8

# (8, 8): ~90% feasibility rate, objective within 5% of optimal

# (16, 16): ~70% feasibility rate, objective within 10-15% of optimal

# Feasibility rate decreases as constraints become tighter relative to problem size

Postprocessing and Solution Polishing

The hybrid solver returns good solutions, but local search can sometimes improve them. D-Wave’s dwave-neal and dwave-greedy packages provide classical samplers that refine solutions by exploring neighboring states.

The general strategy: take the best solution from the hybrid solver, convert the CQM to a BQM (incorporating constraints as penalties), and run a local search sampler starting from the hybrid solution.

import dimod

import numpy as np

# Build a small nurse scheduling CQM

nurses = [0, 1, 2, 3]

shifts = list(range(4))

x = {}

cqm = dimod.ConstrainedQuadraticModel()

for n in nurses:

for s in shifts:

var = f"x_{n}_{s}"

cqm.add_variable("BINARY", var)

x[(n, s)] = var

linear_obj = {x[(n, s)]: 1 for n in nurses for s in shifts}

cqm.set_objective(dimod.BinaryQuadraticModel(linear_obj, {}, 0, "BINARY"))

for s in shifts:

cqm.add_constraint(

dimod.BinaryQuadraticModel({x[(n, s)]: 1 for n in nurses}, {}, 0, "BINARY"),

sense=">=", rhs=2, label=f"coverage_{s}"

)

for n in nurses:

cqm.add_constraint(

dimod.BinaryQuadraticModel({x[(n, s)]: 1 for s in shifts}, {}, 0, "BINARY"),

sense="<=", rhs=3, label=f"workload_{n}"

)

# Get a solution from ExactCQMSolver (standing in for LeapHybridCQMSampler)

sampleset = dimod.ExactCQMSolver().sample_cqm(cqm)

feasible = sampleset.filter(lambda s: s.is_feasible)

initial_solution = feasible.first.sample

initial_energy = feasible.first.energy

print(f"Initial solution energy: {initial_energy}")

# Convert CQM to BQM for local search polishing

# The penalty strength should be large enough to enforce constraints

bqm, invert = dimod.cqm_to_bqm(cqm)

# Use SteepestDescentSolver for local refinement

from greedy import SteepestDescentSolver

polisher = SteepestDescentSolver()

# Start from the initial solution (need to include any auxiliary variables from conversion)

# First, get the initial sample in BQM variable space

init_sample_bqm = {v: initial_solution.get(v, 0) for v in bqm.variables}

polished = polisher.sample(bqm, initial_states=[init_sample_bqm])

polished_energy = polished.first.energy

print(f"Polished BQM energy: {polished_energy}")

print(f"Energy improvement: {init_sample_bqm!r}")

# Convert polished solution back and check feasibility

polished_sample = polished.first.sample

for n in nurses:

assigned = [s for s in shifts if polished_sample.get(x[(n, s)], 0) > 0.5]

print(f" Nurse {n}: shifts {assigned}")

For small problems like this one, ExactCQMSolver already finds the global optimum, so polishing provides no improvement. The value of postprocessing appears on larger problems where the hybrid solver returns near-optimal but not globally optimal solutions. In practice, steepest descent polishing can improve the objective by 1-5% on problems with hundreds of variables.

Embedding for QPU Targeting

When you target the D-Wave quantum processing unit (QPU) directly instead of the hybrid solver, your problem must be embedded onto the physical qubit connectivity graph. The current D-Wave Advantage system uses a Pegasus topology with 5,627 qubits, where each qubit connects to 15 others.

Most optimization problems have logical variables that interact with many other variables, requiring more connections than the hardware provides. Embedding maps each logical variable to a “chain” of multiple physical qubits that are forced to agree through strong coupling. The process works as follows:

import dimod

import minorminer

# Build a small BQM (the nurse scheduling objective as QUBO)

nurses = [0, 1, 2, 3]

shifts = list(range(4))

x = {(n, s): f"x_{n}_{s}" for n in nurses for s in shifts}

linear = {x[(n, s)]: 1 for n in nurses for s in shifts}

quadratic = {}

# Add penalty terms for coverage constraints manually

# (This is what CQM does automatically, shown here for illustration)

P = 5 # penalty strength

for s in shifts:

vars_s = [x[(n, s)] for n in nurses]

# Penalty for sum < 2: P * (sum - 2)^2

for v in vars_s:

linear[v] = linear.get(v, 0) + P * (1 - 4) # derivative terms

for i, vi in enumerate(vars_s):

for j, vj in enumerate(vars_s):

if i < j:

quadratic[(vi, vj)] = quadratic.get((vi, vj), 0) + 2 * P

bqm = dimod.BinaryQuadraticModel(linear, quadratic, 0, "BINARY")

# Find an embedding onto the Pegasus graph

# The Pegasus graph for Advantage has ~5600 qubits

import dwave_networkx as dnx

pegasus = dnx.pegasus_graph(16) # Pegasus P16 (Advantage topology)

source_graph = dimod.to_networkx_graph(bqm)

embedding = minorminer.find_embedding(source_graph, pegasus)

# Analyze the embedding

chain_lengths = {var: len(chain) for var, chain in embedding.items()}

print(f"Logical variables: {len(embedding)}")

print(f"Physical qubits used: {sum(len(c) for c in embedding.values())}")

print(f"Chain lengths: min={min(chain_lengths.values())}, "

f"max={max(chain_lengths.values())}, "

f"avg={sum(chain_lengths.values())/len(chain_lengths):.1f}")

# Show a few chain mappings

for var in list(embedding.keys())[:4]:

print(f" {var} -> chain of {len(embedding[var])} physical qubits")

Chain breaks occur when physical qubits in a chain disagree on their value after annealing. The chain strength parameter controls how strongly chained qubits are coupled. If the chain strength is too low, chains break frequently and the solution quality degrades. If it is too high, the chain coupling dominates the problem energy landscape and the solver fails to explore the solution space effectively.

The LeapHybridCQMSampler abstracts away all of this complexity. It handles embedding, chain strength tuning, and chain break resolution internally. For most users, the hybrid solver is the right choice unless you need fine-grained control over the quantum annealing process or are running experiments on QPU behavior.

When to Use CQM vs BQM

Use CQM when:

- Your problem has explicit inequality or equality constraints (scheduling, routing, packing).

- Penalty tuning for BQM has been difficult or inconsistent.

- You have integer or real-valued variables alongside binary ones.

- You want feasibility labeling on returned solutions.

Use BQM when:

- Your problem is already in QUBO form with no natural constraint structure.

- You are targeting the quantum annealer directly (QPU sampler) rather than the hybrid solver.

- The problem is small enough for the QPU’s embedding overhead to be worth it.

Checking Feasibility

Always check feasibility before using a result:

for sample, energy, is_feasible in sampleset.data(["sample", "energy", "is_feasible"]):

if is_feasible:

print(f"Feasible solution with energy {energy:.2f}")

break

else:

print("No feasible solution found -- try increasing time_limit")

If no feasible solution is found, the constraints may be too tight (infeasible problem) or the time limit too short for the problem size.

Common Mistakes

Working with CQM introduces its own set of pitfalls. Here are five specific mistakes that frequently trip up new users.

1. Using BINARY Instead of INTEGER for Count Variables

If a variable represents a count (like the number of hours a nurse works, or the number of units to produce), it should be INTEGER with appropriate bounds. Using BINARY forces the variable to 0 or 1, which silently produces incorrect or infeasible solutions.

# Wrong: nurse_hours is a count, not a binary flag

cqm.add_variable("BINARY", "nurse_hours") # Can only be 0 or 1

# Correct: allow the full range

cqm.add_variable("INTEGER", "nurse_hours", lower_bound=0, upper_bound=40)

2. Forgetting to Filter for Feasibility

The hybrid sampler may return infeasible solutions, especially if the time_limit is too short or the problem is very constrained. Always filter before using results.

# Wrong: using the first solution without checking feasibility

best = sampleset.first.sample # Might violate constraints!

# Correct: filter first

feasible = sampleset.filter(lambda s: s.is_feasible)

if len(feasible) == 0:

print("No feasible solution found. Try increasing time_limit.")

else:

best = feasible.first.sample

3. Floating-Point Precision with Real Variables

When using real-valued variables with equality constraints like sum_i w[i] = 1.0, the solver returns solutions where the sum is within a small tolerance of 1.0 (typically 1e-6), not exactly 1.0. Do not compare with == in your postprocessing code.

# Wrong: exact comparison

total = sum(best[w[i]] for i in range(n_assets))

if total == 1.0: # Will almost never be True due to floating point

print("Fully invested")

# Correct: tolerance-based comparison

total = sum(best[w[i]] for i in range(n_assets))

if abs(total - 1.0) < 1e-5:

print(f"Fully invested (sum = {total:.8f})")

4. Wrong Objective Direction

CQM minimizes the objective by default. To maximize a quantity Q, set the objective to -Q. Forgetting the sign gives a solution that minimizes the quantity you wanted to maximize.

# Wrong: trying to maximize profit by setting it as the objective directly

# This MINIMIZES profit (finds the lowest-profit assignment)

cqm.set_objective(profit_expression)

# Correct: negate to maximize

cqm.set_objective(-1 * profit_expression)

5. Using ExactCQMSolver for Large Problems

ExactCQMSolver enumerates all feasible solutions by brute force. For a problem with 20 binary variables, that is 2^20 = 1,048,576 candidate solutions. For 30 variables, it is over a billion. The solver becomes impractically slow beyond about 15-20 binary variables.

# For small problems (< 20 binary variables): ExactCQMSolver is fine

sampler = dimod.ExactCQMSolver()

# For medium problems (20-100 binary variables): use SimulatedAnnealingSampler

# import neal

# sampler = neal.SimulatedAnnealingSampler()

# For large problems (100+ variables): use the hybrid solver

# from dwave.system import LeapHybridCQMSampler

# sampler = LeapHybridCQMSampler()

Choose your sampler based on problem size and whether you need guaranteed optimal solutions (ExactCQMSolver, small problems only) or good approximate solutions quickly (hybrid sampler, any size up to the solver’s variable limit).

Was this tutorial helpful?