Quantum 101: Quantum Computing & Quantum Internet

Delft University of Technology (QuTech)

Quantum computers are not simply faster classical computers. They operate on fundamentally different principles and are better suited to a narrow but important set of problems. Here is an honest, technical look at what quantum computers actually do differently and where the advantage is real.

A classical bit is a physical system with two stable states, representing 0 or 1. Every operation in classical computing boils down to manipulating these bits one deterministic state at a time.

A qubit is a quantum two-level system. Unlike a classical bit, a qubit can exist in a superposition of 0 and 1 simultaneously. Mathematically, this means a qubit's state is a combination of both possibilities at once, with probability amplitudes that determine how likely each outcome is when measured. Measuring the qubit collapses it to 0 or 1, just like a classical bit -- but until that measurement happens, the qubit carries both possibilities with tunable amplitudes.

Physical implementations of qubits include superconducting circuits (used by IBM and Google), trapped ions (IonQ, Quantinuum), photonic systems, and neutral atoms. Each has different error rates, connectivity constraints, and operating temperature requirements. All of them are extraordinarily sensitive to environmental noise, which is why quantum error correction is so critical and so difficult.

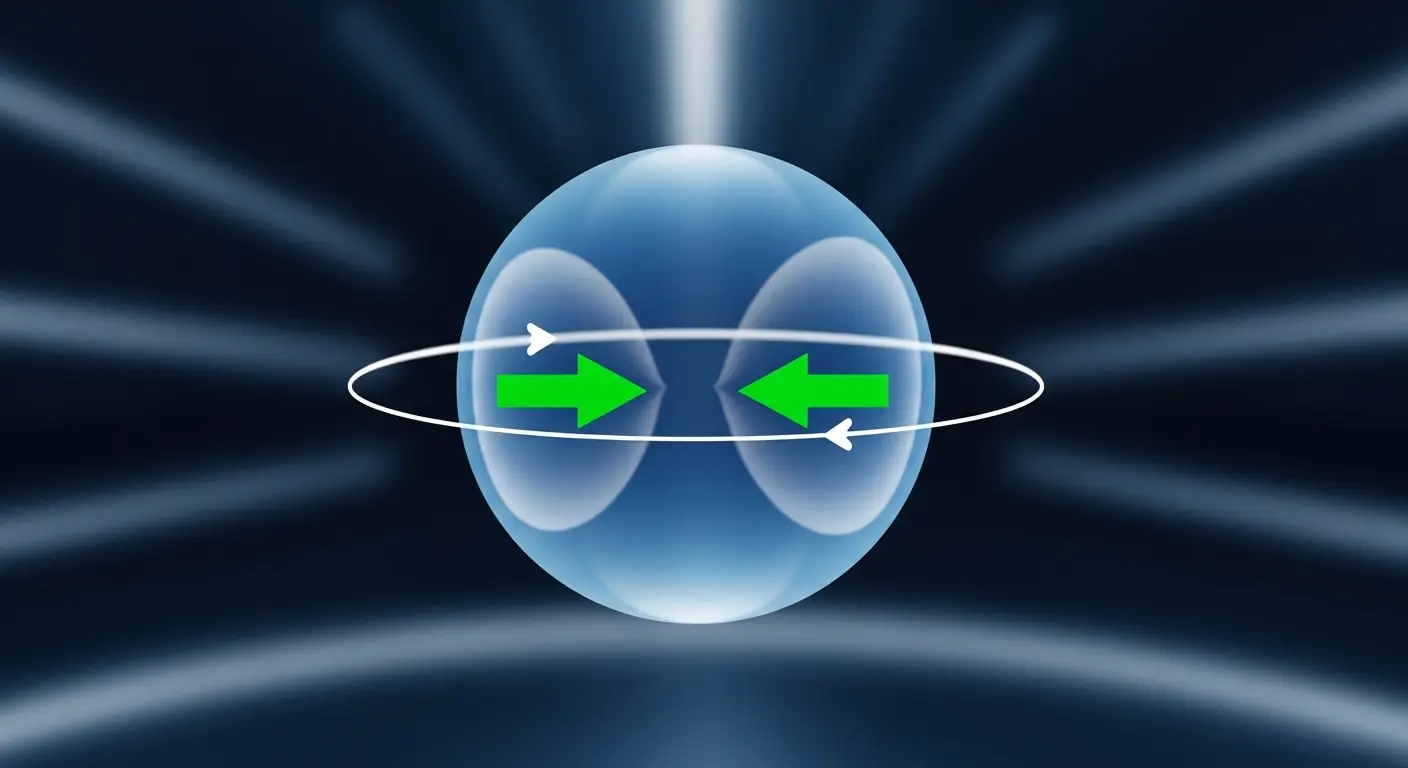

The key resource that quantum computing exploits is not superposition alone but the combination of superposition, entanglement, and interference. Entanglement correlates qubits so that operations on one qubit affect others instantaneously regardless of physical separation. Interference allows quantum algorithms to amplify correct answers and cancel wrong ones. The art of quantum algorithm design is engineering these interference effects so the right answer has high probability when measured.

"Cannot" is a strong word. More precisely: quantum computers can solve certain problems far more efficiently than any known classical algorithm. The most important examples:

Shor's algorithm factors an N-digit number in polynomial time. The best classical algorithms take sub-exponential but super-polynomial time. This is the threat to RSA encryption: a sufficiently large quantum computer running Shor's algorithm would break RSA in hours instead of the millions of years a classical computer would need. This is why the cryptography community is urgently standardizing post-quantum algorithms.

Grover's algorithm searches an unsorted database of N items in O(sqrt(N)) operations vs. O(N) classically. This is a quadratic speedup -- significant for large databases and cryptographic brute-force attacks, though not exponential. See the Grover's algorithm page for a full explanation.

Simulating a quantum system on a classical computer requires exponential resources as the system grows larger -- the state space of N qubits requires 2^N classical bits to represent exactly. A quantum computer can simulate quantum systems naturally, with resources that scale polynomially. This is the application most likely to show near-term practical advantage in drug discovery and materials science.

Quantum computers have significant limitations that classical computers do not, and those limitations matter for virtually everything people use computers for today.

Web browsing, document editing, video streaming, database queries, mobile apps -- all of these are deterministic, memory-intensive tasks that classical hardware handles efficiently. Quantum computers offer no advantage here and cannot run these workloads directly.

Training large neural networks is a classical workload dominated by matrix multiplication on GPUs and TPUs. There is no known quantum algorithm that provides a clear speedup over state-of-the-art classical AI training. Quantum machine learning is an active research area, but practical advantage over classical ML for real datasets remains unproven.

Today's quantum hardware has error rates around 0.1-1% per gate operation. Classical transistors fail so rarely that error correction is not needed for most computations. Until quantum error correction matures, quantum computers require heroic engineering to run even moderate-depth circuits reliably.

Classical computers support arbitrary programs. Quantum computers require problems to be reformulated as quantum circuits with careful attention to coherence time, qubit connectivity, and gate sets. Writing quantum software is significantly harder than classical software development, and the toolchain is far less mature.

| Property | Classical computing | Quantum computing |

|---|---|---|

| Basic unit | Bit (0 or 1) | Qubit (superposition of 0 and 1) |

| Computation model | Deterministic logic gates | Unitary operations, interference, measurement |

| Error rates | Effectively zero (transistor level) | 0.1-1% per gate (current NISQ hardware) |

| Programming languages | Python, C, Java, JavaScript, hundreds more | Qiskit, Cirq, PennyLane, Q#, Quil |

| Best at | General-purpose tasks, AI, databases | Factoring, search, quantum simulation |

| Availability | Universal: laptops, phones, servers | Cloud access only; cryogenic operating conditions |

The honest answer depends on the problem. For a narrow class of tasks, quantum advantage has already been demonstrated or is close -- IBM's and Google's experiments show that quantum processors can sample from certain probability distributions faster than classical supercomputers. But these demonstrations are carefully chosen to favor quantum hardware; they do not translate directly to commercially valuable problems.

For practically useful quantum advantage -- solving real chemistry, optimization, or cryptanalysis problems faster than the best classical methods -- current estimates from leading researchers suggest:

Top-rated beginner courses to get you started with the fundamentals.

Delft University of Technology (QuTech)

Xanadu / PennyLane Team

IBM Quantum / Qiskit Team